The right preparation can turn an interview into an opportunity to showcase your expertise. This guide to Hypothesis Generation and Testing interview questions is your ultimate resource, providing key insights and tips to help you ace your responses and stand out as a top candidate.

Questions Asked in Hypothesis Generation and Testing Interview

Q 1. Explain the difference between a hypothesis and a prediction.

While often used interchangeably, a hypothesis and a prediction are distinct. A hypothesis is a tentative explanation for an observation, phenomenon, or scientific problem. It’s a statement proposing a possible relationship between variables. A prediction, on the other hand, is a specific, testable statement about what will happen in a particular experiment or observation if the hypothesis is correct. Think of the hypothesis as the ‘why’ and the prediction as the ‘what’.

Example:

Hypothesis: Increased sunlight exposure leads to increased plant growth.

Prediction: Plants exposed to 12 hours of sunlight per day will show significantly greater height and biomass than plants exposed to only 6 hours of sunlight per day.

Q 2. Describe the steps involved in the scientific method, focusing on hypothesis generation and testing.

The scientific method is an iterative process. Regarding hypothesis generation and testing, the key steps are:

- Observation: Notice a phenomenon or problem.

- Question: Formulate a question about the observation.

- Hypothesis Generation: Propose a testable explanation (hypothesis) for the observation.

- Prediction: Make a specific, measurable prediction based on the hypothesis.

- Experimentation/Data Collection: Design and conduct an experiment to test the prediction. Collect data.

- Analysis: Analyze the data to determine if the results support or refute the prediction and, consequently, the hypothesis.

- Conclusion: Draw conclusions based on the analysis. This may lead to revising the hypothesis, designing further experiments, or accepting the hypothesis (at least until contradictory evidence arises).

- Communication: Share findings with the scientific community through publications or presentations.

Q 3. What are the different types of hypotheses (e.g., null, alternative)?

Hypotheses are often categorized as:

- Null Hypothesis (H0): This states that there is no relationship (or no difference) between the variables being studied. It’s the default position, and researchers look for evidence strong enough to reject it.

- Alternative Hypothesis (H1 or Ha): This states that there is a relationship (or difference) between the variables. This is usually the claim the researcher is trying to support.

Example:

Imagine studying the effect of a new drug on blood pressure.

H0: The new drug has no effect on blood pressure.

H1: The new drug lowers blood pressure.

Note: Alternative hypotheses can be directional (e.g., ‘drug lowers blood pressure’) or non-directional (e.g., ‘drug affects blood pressure’).

Q 4. How do you formulate a testable hypothesis?

A testable hypothesis must be:

- Falsifiable: It must be possible to design an experiment that could disprove the hypothesis. If a hypothesis cannot be proven wrong, it’s not scientifically useful.

- Specific: It should clearly state the relationship between the variables being studied. Avoid vague or ambiguous language.

- Measurable: The variables involved must be measurable or observable using scientific methods.

- Grounded in existing knowledge: It should build upon or challenge existing scientific understanding.

Example of a poorly formulated hypothesis: ‘Meditation makes people feel better.’

Example of a well-formulated hypothesis: ‘Daily 30-minute meditation practice for four weeks will significantly reduce self-reported stress levels as measured by the Perceived Stress Scale compared to a control group.’

Q 5. Explain the concept of statistical significance.

A result is statistically significant when it would be unlikely to occur if chance alone (the null hypothesis) were at work. Significance suggests the findings may reflect a real effect rather than random variation, but it is not the probability that the effect is real. Before running the test, we choose a significance level (alpha), often 0.05 (5%). Alpha is the Type I error rate we are willing to accept: if there is no real effect, we will wrongly declare a significant result about 5% of the time.

If the p-value (explained in Q7) is less than alpha (e.g., p < 0.05), the results are considered statistically significant, and we reject the null hypothesis in favor of the alternative hypothesis.

Q 6. What are Type I and Type II errors? How can they be minimized?

Type I error (False Positive): Incorrectly rejecting the null hypothesis when it’s actually true. You conclude there’s an effect when there isn’t. Think of this as a false alarm.

Type II error (False Negative): Incorrectly failing to reject the null hypothesis when it’s actually false. You conclude there’s no effect when there actually is. This is a missed opportunity.

Minimizing errors:

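- Increase sample size: Larger samples provide more statistical power, reducing the chance of a Type II error. The Type I error rate is fixed by alpha, so a larger sample does not lower it by itself, but it lets you adopt a stricter alpha without giving up power.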

- Use appropriate statistical tests: Choosing the right test for the data type and research design is crucial.

- Control extraneous variables: Minimize the influence of factors that might confound the results.

- Replicate studies: Repeating the study with different samples and methods helps confirm findings and reduce the risk of errors.

- Adjust alpha level: A stricter alpha (e.g., 0.01) reduces the probability of a Type I error but increases the risk of a Type II error. The choice depends on the context and potential consequences of each error type.
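A quick simulation makes the link between alpha and the Type I error rate concrete. The sketch below assumes two groups drawn from the same normal population (so the null hypothesis is true by construction) with a known standard deviation, which allows a simple two-sample z-test; the sample size, number of simulations, seed, and function name are illustrative choices, not part of any standard procedure.

```python
import math
import random
from statistics import NormalDist, fmean

STD_NORMAL = NormalDist()


def simulate_null_p_values(n_sims=4000, n=30, sigma=1.0, seed=42):
    """Two-sided z-test p-values when H0 is true (both groups share one mean)."""
    rng = random.Random(seed)
    se = sigma * math.sqrt(2 / n)  # standard error of the difference in means
    p_values = []
    for _ in range(n_sims):
        a = [rng.gauss(0.0, sigma) for _ in range(n)]
        b = [rng.gauss(0.0, sigma) for _ in range(n)]
        z = (fmean(a) - fmean(b)) / se
        p_values.append(2 * (1 - STD_NORMAL.cdf(abs(z))))
    return p_values


p_values = simulate_null_p_values()
for alpha in (0.05, 0.01):
    false_positive_rate = sum(p < alpha for p in p_values) / len(p_values)
    print(f"alpha={alpha}: false positive rate ~ {false_positive_rate:.3f}")
```

Every rejection in this simulation is a false positive, so the rejected fraction lands close to alpha, and the stricter alpha rejects less often (at the cost of power when a real effect exists).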

Q 7. What are p-values, and how are they interpreted?

A p-value is the probability of obtaining results as extreme as, or more extreme than, the observed results, assuming the null hypothesis is true. It quantifies the strength of evidence against the null hypothesis.

Interpretation:

- p < 0.05 (or another pre-defined alpha level): The results are statistically significant. Data this extreme would be unlikely if the null hypothesis were true, so we reject the null hypothesis in favor of the alternative.

- p ≥ 0.05: The results are not statistically significant. The data are reasonably compatible with the null hypothesis, so there is insufficient evidence to reject it.

It is crucial to remember that a p-value doesn’t provide the probability that the null hypothesis is true. It only reflects the probability of the data given the null hypothesis. A small p-value provides evidence *against* the null hypothesis, but it doesn’t prove the alternative hypothesis is true.
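The definition above (results as extreme as, or more extreme than, the observed ones, assuming the null hypothesis is true) can be computed directly with a permutation test. If H0 (no difference between groups) holds, the group labels are interchangeable, so the p-value is the fraction of all relabelings whose mean difference is at least as large as the observed one. This is a minimal exact sketch for small samples with made-up data; the function name is hypothetical, and for larger samples one would sample random permutations instead of enumerating them all.

```python
from itertools import combinations
from statistics import fmean


def permutation_p_value(a, b):
    """Exact two-sided permutation p-value for a difference in means.

    Under H0 the group labels are exchangeable, so every way of splitting the
    pooled data into groups of the original sizes is equally likely.
    """
    pooled = list(a) + list(b)
    observed = abs(fmean(a) - fmean(b))
    extreme = total = 0
    for idx in combinations(range(len(pooled)), len(a)):
        chosen = set(idx)
        group_a = [pooled[i] for i in idx]
        group_b = [x for i, x in enumerate(pooled) if i not in chosen]
        diff = abs(fmean(group_a) - fmean(group_b))
        # Count splits at least as extreme as the observed one (small float tolerance).
        if diff >= observed - 1e-12:
            extreme += 1
        total += 1
    return extreme / total


print(permutation_p_value([1, 2, 3], [4, 5, 6]))  # 0.1 (2 of 20 splits)
```

Even with perfectly separated groups, three observations per group cannot produce p < 0.05 here, a small illustration of why sample size matters.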

Q 8. Explain the difference between correlation and causation.

Correlation and causation are two distinct concepts in statistics. Correlation simply refers to a relationship between two variables: when one changes, the other tends to change as well. This relationship can be positive (both variables increase together), negative (one increases as the other decreases), or zero (no linear relationship). Causation, on the other hand, implies that one variable *directly influences* or *causes* a change in the other. The crucial point is that correlation alone does not imply causation.

Example: Ice cream sales and crime rates might be positively correlated – both tend to increase during the summer. However, this doesn’t mean that buying ice cream *causes* crime. Both are likely influenced by a third variable: warmer weather.

Understanding this distinction is critical. Observing a correlation requires further investigation to determine if a causal link exists. This often involves controlled experiments or sophisticated statistical modeling to rule out other potential explanations.

Q 9. Describe different statistical tests used for hypothesis testing (e.g., t-test, ANOVA, chi-square).

Several statistical tests are used for hypothesis testing, depending on the type of data and the research question. Here are a few common ones:

- t-test: Compares the means of two groups. There are different versions (independent samples t-test for comparing means between two independent groups, paired samples t-test for comparing means of the same group at two different time points).

- ANOVA (Analysis of Variance): Compares the means of three or more groups. It determines if there’s a statistically significant difference between the group means.

- Chi-square test: Analyzes the relationship between two categorical variables. It assesses whether the observed frequencies differ significantly from the expected frequencies.

Each test has its own specific assumptions and calculations, and choosing the right one is crucial for obtaining valid results. Misusing a test can lead to incorrect conclusions.
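To show what ANOVA actually compares, here is a minimal sketch of the one-way ANOVA F statistic: the variance between group means divided by the variance within groups. It computes only the statistic; a p-value requires the F distribution, which tools such as `scipy.stats.f_oneway` or R's `aov()` handle. The function name and data are illustrative.

```python
from statistics import fmean


def one_way_anova_f(*groups):
    """F statistic for one-way ANOVA: between-group vs within-group variance."""
    values = [x for g in groups for x in g]
    grand_mean = fmean(values)
    k, n = len(groups), len(values)
    # Variation of group means around the grand mean, weighted by group size.
    ss_between = sum(len(g) * (fmean(g) - grand_mean) ** 2 for g in groups)
    # Variation of observations around their own group mean.
    ss_within = sum((x - fmean(g)) ** 2 for g in groups for x in g)
    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (n - k)
    return ms_between / ms_within


print(one_way_anova_f([1, 2, 3], [4, 5, 6], [7, 8, 9]))  # 27.0
```

A large F means the group means are far apart relative to the scatter inside each group; identical group means give F = 0.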

Q 10. When would you use a one-tailed vs. a two-tailed test?

The choice between a one-tailed and a two-tailed test depends on the nature of your hypothesis.

- Two-tailed test: Used when you suspect a difference between groups but don’t know the direction of the difference (e.g., ‘Group A’s mean is different from Group B’s mean’). It tests for differences in both directions (Group A > Group B or Group A < Group B).

- One-tailed test: Used when you have a specific directional hypothesis (e.g., ‘Group A’s mean is *greater* than Group B’s mean’). It only tests for a difference in one direction.

One-tailed tests are more powerful (more likely to detect a true effect) when the effect lies in the predicted direction, but they cannot detect an effect in the opposite direction, however large. Two-tailed tests are more conservative and are generally preferred unless a directional hypothesis is justified in advance by strong prior evidence.
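The difference is easiest to see by computing both p-values from the same test statistic. This sketch assumes a large-sample z statistic (normal approximation); the function name and the `alternative` labels are illustrative, with "greater" standing for the directional hypothesis that Group A's mean exceeds Group B's.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()


def p_value_from_z(z, alternative="two-sided"):
    """p-value for a z statistic under H0 (large-sample normal approximation)."""
    if alternative == "two-sided":  # H1: A != B
        return 2 * (1 - STD_NORMAL.cdf(abs(z)))
    if alternative == "greater":  # H1: A > B
        return 1 - STD_NORMAL.cdf(z)
    if alternative == "less":  # H1: A < B
        return STD_NORMAL.cdf(z)
    raise ValueError(f"unknown alternative: {alternative!r}")


for z in (1.8, -1.8):
    print(z, round(p_value_from_z(z, "greater"), 4), round(p_value_from_z(z), 4))
```

With z = 1.8, the one-tailed test is significant at 0.05 (p ≈ 0.036) while the two-tailed test is not (p ≈ 0.072). With z = -1.8, the "greater" test gives p ≈ 0.96: an effect in the unpredicted direction is invisible to it.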

Q 11. How do you choose the appropriate statistical test for a given dataset and hypothesis?

Selecting the appropriate statistical test involves considering several factors:

- Type of data: Is your data continuous (e.g., height, weight), categorical (e.g., gender, color), or ordinal (e.g., rankings)?

- Number of groups: Are you comparing the means of two groups, three or more groups, or assessing the relationship between variables?

- Hypothesis: Is your hypothesis directional (one-tailed) or non-directional (two-tailed)?

- Assumptions of the test: Does your data meet the assumptions (e.g., normality, independence) of the test you’re considering?

A decision tree or flowchart can be helpful in guiding this process. For example, if you have two independent groups and continuous data that meets the assumptions of normality and equal variances, an independent samples t-test would be appropriate.
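A decision tree like the one described can be sketched as a small function. This is a hypothetical, deliberately simplified helper covering only group comparisons; the rank-based alternatives (Mann-Whitney U, Wilcoxon signed-rank, Kruskal-Wallis, Friedman) are standard non-parametric substitutes when parametric assumptions fail, but real test selection involves more nuance than this.

```python
def suggest_test(outcome, n_groups, paired=False, assumptions_met=True):
    """Hypothetical, simplified decision tree for comparing groups.

    outcome: "continuous", "ordinal", or "categorical".
    assumptions_met: whether normality/equal-variance checks passed.
    """
    if n_groups < 2:
        raise ValueError("need at least two groups to compare")
    if outcome == "categorical":
        if paired:
            raise ValueError("paired categorical data need a different test (e.g., McNemar's)")
        return "chi-square test of independence"
    if outcome not in ("continuous", "ordinal"):
        raise ValueError(f"unknown outcome type: {outcome!r}")
    # Ordinal data or violated assumptions point to rank-based tests.
    parametric = outcome == "continuous" and assumptions_met
    if n_groups == 2:
        if paired:
            return "paired t-test" if parametric else "Wilcoxon signed-rank test"
        return "independent samples t-test" if parametric else "Mann-Whitney U test"
    if paired:
        return "repeated-measures ANOVA" if parametric else "Friedman test"
    return "one-way ANOVA" if parametric else "Kruskal-Wallis test"


print(suggest_test("continuous", 2))  # independent samples t-test
```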

Q 12. What are the assumptions of the t-test?

The assumptions of the t-test are crucial for ensuring the validity of its results. Violating these assumptions can lead to inaccurate conclusions.

- Independence of observations: The observations within each group and between groups should be independent of each other. This means that one observation should not influence another.

- Normality of data: The data within each group should be approximately normally distributed. While the t-test is relatively robust to violations of normality with larger sample sizes, severe departures from normality can affect the results.

- Homogeneity of variances (for independent samples t-test): The variances of the two groups should be approximately equal. If variances are significantly different, a Welch’s t-test, which doesn’t assume equal variances, can be used.

Checking these assumptions involves visual inspection of histograms or Q-Q plots and using statistical tests like the Shapiro-Wilk test for normality and Levene’s test for equality of variances.
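For the unequal-variance case, Welch's t statistic and its degrees of freedom are simple to compute by hand. The sketch below uses only the standard library and made-up data whose sample variances (2.5 and 10) differ fourfold. For the p-value, `scipy.stats.ttest_ind(a, b, equal_var=False)` runs the same test, and `scipy.stats.levene` and `scipy.stats.shapiro` implement the assumption checks mentioned above.

```python
import math
from statistics import fmean, variance


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    va = variance(a) / len(a)  # squared standard error of mean(a)
    vb = variance(b) / len(b)
    t = (fmean(a) - fmean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


t, df = welch_t([1, 2, 3, 4, 5], [2, 4, 6, 8, 10])
print(f"t = {t:.3f}, df = {df:.2f}")  # t = -1.897, df = 5.88
```

The fractional degrees of freedom (5.88 rather than the pooled test's 8) reflect the penalty for not assuming equal variances.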

Q 13. Explain the concept of power in hypothesis testing.

In hypothesis testing, power refers to the probability of correctly rejecting a null hypothesis when it is actually false. In simpler terms, it’s the ability of a test to detect a true effect. A high-power test is more likely to find a significant result if a real difference or relationship exists.

Factors affecting power include:

- Sample size: Larger sample sizes generally lead to higher power.

- Effect size: The magnitude of the difference or relationship being studied. Larger effect sizes are easier to detect.

- Significance level (alpha): The probability of rejecting the null hypothesis when it is true (Type I error). Lower significance levels reduce power.

Low power can lead to false negatives (Type II errors), where a real effect is missed. Researchers aim for high power (typically 80% or higher) to minimize the risk of false negatives.
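The three factors above can be seen in a rough power calculation. This sketch assumes a two-sided, two-sample z-test (normal approximation) with a standardized effect size d (difference in means divided by the common standard deviation); dedicated tools use the t distribution and give slightly different numbers.

```python
import math
from statistics import NormalDist

Z = NormalDist()


def approx_power(d, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample z-test.

    d: standardized effect size (difference in means / common SD).
    """
    z_crit = Z.inv_cdf(1 - alpha / 2)
    shift = d * math.sqrt(n_per_group / 2)  # expected z statistic under H1
    # Reject if z > z_crit or z < -z_crit; the second term is usually negligible.
    return Z.cdf(shift - z_crit) + Z.cdf(-shift - z_crit)


for n in (20, 63, 100):
    print(n, round(approx_power(0.5, n), 2))
```

For a medium effect (d = 0.5), power climbs from about one third at 20 per group to roughly 0.80 at 63 and above 0.9 at 100, while lowering alpha to 0.01 reduces it at any fixed sample size.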

Q 14. How do you determine the appropriate sample size for a hypothesis test?

Determining the appropriate sample size is crucial for obtaining reliable results. Several factors influence sample size determination:

- Desired power: The probability of correctly rejecting a false null hypothesis (typically 80% or higher).

- Significance level (alpha): The probability of rejecting a true null hypothesis (typically 0.05).

- Effect size: The magnitude of the effect you expect to observe. Larger effect sizes require smaller sample sizes.

- Standard deviation: The variability in the data. Higher variability requires larger sample sizes.

Power analysis, often conducted using statistical software, is used to calculate the required sample size. Inputting the desired power, significance level, effect size, and standard deviation allows you to determine the minimum number of participants or observations needed for your study. Ignoring proper sample size calculation can lead to underpowered studies that fail to detect meaningful effects, wasting resources and time.
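Inverting the same normal approximation gives a quick sample-size estimate. Here the effect size and standard deviation from the list above enter as their ratio, d (for example, an expected 10-point difference with a standard deviation of 20 gives d = 0.5). This is a sketch, not a replacement for power-analysis software: t-based tools give slightly larger answers (about 64 per group for d = 0.5).

```python
import math
from statistics import NormalDist

Z = NormalDist()


def n_per_group(d, alpha=0.05, power=0.80):
    """Approximate sample size per group for a two-sided, two-sample comparison.

    d: expected difference in means divided by the standard deviation (Cohen's d).
    Uses the normal approximation n = 2 * ((z_{1-alpha/2} + z_{power}) / d) ** 2.
    """
    z_alpha = Z.inv_cdf(1 - alpha / 2)
    z_power = Z.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) / d) ** 2)


print(n_per_group(0.5))  # 63
print(n_per_group(0.2))  # 393
```

Because n scales with 1/d², shrinking the expected effect from 0.5 to 0.2 multiplies the required sample by roughly six.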

Q 15. What is effect size, and why is it important?

Effect size quantifies the strength of the relationship between variables in a statistical analysis. It’s not just about whether a difference exists (statistical significance), but also how large that difference is. A small, statistically significant effect might be practically meaningless, while a large effect might be highly relevant even if not statistically significant due to small sample size.

Imagine two weight-loss programs. Program A shows a statistically significant 2-pound weight loss (p < 0.05), while Program B shows a 10-pound weight loss (also p < 0.05). Both are statistically significant, but Program B has a much larger effect, making it clinically more relevant. Effect size is crucial because it helps us understand the practical importance of our findings, guiding decisions and resource allocation.

Common effect size measures include Cohen’s d (for comparing two group means), Pearson’s r (for correlation), and eta-squared (for ANOVA).
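As an example of the first measure, Cohen's d for two independent groups divides the difference in means by the pooled standard deviation. A minimal sketch with made-up data:

```python
import math
from statistics import fmean, variance


def cohens_d(a, b):
    """Cohen's d: difference in means divided by the pooled standard deviation."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (fmean(a) - fmean(b)) / math.sqrt(pooled_var)


print(round(cohens_d([2, 3, 4, 5, 6], [1, 2, 3, 4, 5]), 2))  # 0.63
```

Unlike a p-value, d does not grow with sample size, which is what makes it useful for judging practical importance. Cohen's rough benchmarks of 0.2, 0.5, and 0.8 for small, medium, and large effects are conventions rather than rules.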


Q 16. Explain the concept of confidence intervals.

A confidence interval (CI) provides a range of plausible values for a population parameter (like a mean or proportion) based on a sample of data. It’s reported with a confidence level (e.g., 95% CI), which describes the long-run behavior of the procedure rather than the probability that this particular interval contains the parameter: if we were to repeat the study many times, about 95% of the calculated CIs would contain the true population parameter.

For example, a 95% confidence interval for the average height of women might be 5’4″ to 5’6″. This doesn’t mean there’s a 95% chance the true average height is within that range; it means the method used to construct the interval has a 95% chance of capturing the true value.
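The "repeat the study many times" interpretation can be checked by simulation. This sketch assumes a hypothetical population of heights with a true mean of 65 inches and a known standard deviation of 2.8 inches, so a simple z-interval applies (with an estimated standard deviation, a t-interval would be used instead). It draws many samples, builds a 95% interval from each, and counts how often the true mean is captured.

```python
import math
import random
from statistics import NormalDist, fmean


def ci_coverage(true_mean=65.0, sigma=2.8, n=25, reps=2000, level=0.95, seed=1):
    """Fraction of simulated z-intervals (sigma known) that contain true_mean."""
    rng = random.Random(seed)
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% interval
    half_width = z * sigma / math.sqrt(n)
    hits = 0
    for _ in range(reps):
        sample_mean = fmean([rng.gauss(true_mean, sigma) for _ in range(n)])
        if sample_mean - half_width <= true_mean <= sample_mean + half_width:
            hits += 1
    return hits / reps


print(f"coverage ~ {ci_coverage():.3f}")
```

Each individual interval either contains 65 or it doesn't; the 95% describes the proportion of intervals that do.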

Q 17. How do you interpret confidence intervals in the context of hypothesis testing?

In hypothesis testing, confidence intervals help us assess the plausibility of the null hypothesis. If the null hypothesis value (e.g., zero difference between groups) falls outside a 95% confidence interval, the matching two-sided test rejects the null hypothesis at alpha = 0.05, so the observed effect is statistically significant. Conversely, if the null hypothesis value falls within the confidence interval, we don’t have enough evidence to reject the null hypothesis at that level.

Let’s say we’re testing whether a new drug lowers blood pressure. Our 95% confidence interval for the difference in blood pressure between the treatment and control groups is (-5 mmHg, -2 mmHg). Since this interval does not contain 0 (the null hypothesis value representing no difference), we can reject the null hypothesis and conclude the drug significantly lowers blood pressure. The width of the interval also tells us something about the precision of our estimate; a narrower interval indicates greater precision.
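The correspondence between the interval and the test can be sketched with a normal approximation: build the interval from an estimate and its standard error, then compute the two-sided p-value from the same numbers. The estimate (-3.5 mmHg) and standard error (0.765 mmHg) are hypothetical values chosen only to reproduce an interval close to (-5, -2); the function name is illustrative.

```python
from statistics import NormalDist

Z = NormalDist()


def ci_and_test(estimate, std_error, null_value=0.0, level=0.95):
    """Normal-approximation CI and the matching two-sided p-value."""
    z_crit = Z.inv_cdf(0.5 + level / 2)
    ci = (estimate - z_crit * std_error, estimate + z_crit * std_error)
    z = (estimate - null_value) / std_error
    p_value = 2 * (1 - Z.cdf(abs(z)))
    return ci, p_value


# Hypothetical inputs chosen to reproduce an interval of about (-5, -2) mmHg.
(lo, hi), p = ci_and_test(estimate=-3.5, std_error=0.765)
print(f"95% CI ({lo:.2f}, {hi:.2f}), p = {p:.2g}")
```

Because both come from the same estimate and standard error, the 95% interval excludes 0 exactly when the two-sided p-value falls below 0.05.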

Q 18. Describe different experimental designs used for hypothesis testing (e.g., A/B testing, randomized controlled trials).

Several experimental designs facilitate hypothesis testing.

- A/B Testing: This is a randomized controlled experiment comparing two versions (A and B) of a variable (e.g., website design, advertisement copy). Participants are randomly assigned to either version, and the results are compared to see which performs better. It’s widely used in online marketing and web development.

- Randomized Controlled Trials (RCTs): These are considered the gold standard in medical research and other fields. Participants are randomly assigned to either a treatment group or a control group (placebo or standard treatment). This randomization minimizes bias and allows researchers to infer causality.

- Pre-post designs: Measurements are taken before and after an intervention to assess the effect. However, these are susceptible to confounding factors, requiring careful consideration of other influences.

- Cohort studies: Groups of individuals (cohorts) are followed over time to observe outcomes. These studies are observational rather than experimental, because researchers do not assign the exposure. They are useful for studying long-term effects and for generating hypotheses, but they can be affected by confounding variables.
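What makes A/B tests and RCTs able to support causal conclusions is random assignment. A minimal sketch of one common approach, a seeded shuffle that splits participants into two equal-sized arms, is shown below; the function name and participant IDs are illustrative, and production A/B systems often use other schemes (such as hashing user IDs) to keep assignments stable across sessions.

```python
import random


def randomize(participants, seed=None):
    """Randomly split participants into two equal-sized arms (A/B or treatment/control)."""
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


treatment, control = randomize(range(100), seed=7)
print(len(treatment), len(control))  # 50 50
```

Recording the seed makes the assignment reproducible and auditable, while assignment itself stays independent of any participant characteristic.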

Q 19. What are some common pitfalls to avoid when conducting hypothesis testing?

Common pitfalls in hypothesis testing include:

- P-hacking: Trying many analyses, variables, subgroups, or stopping points and reporting only those that reach statistical significance. This inflates the false-positive rate and leads to unreliable conclusions.

- Ignoring effect size: Focusing solely on p-values without considering the practical significance of the findings.

- Confirmation bias: Seeking evidence to support a pre-existing belief, rather than objectively evaluating all evidence.

- Type I and Type II errors: A Type I error (false positive) occurs when we reject a true null hypothesis. A Type II error (false negative) occurs when we fail to reject a false null hypothesis. Understanding and managing the probabilities of these errors is critical.

- Inappropriate statistical tests: Using the wrong statistical test for the type of data and research question. This leads to inaccurate results.
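One mechanism behind p-hacking is simply running many tests. If every null hypothesis is true and the tests are independent, the chance of at least one "significant" result is 1 - (1 - alpha)^m for m tests. The sketch below computes this, checks it by simulation (assuming p-values are uniform under a true null, which holds for continuous test statistics), and shows the Bonferroni adjustment (testing each hypothesis at alpha/m) as one standard correction.

```python
import random


def familywise_error(m, alpha=0.05):
    """P(at least one false positive) across m independent tests of true nulls."""
    return 1 - (1 - alpha) ** m


def simulate_any_significant(m=20, alpha=0.05, reps=5000, seed=3):
    """Simulate m independent tests where every null hypothesis is true."""
    rng = random.Random(seed)
    # Under a true null (continuous test statistic), p-values are uniform on [0, 1].
    hits = sum(any(rng.random() < alpha for _ in range(m)) for _ in range(reps))
    return hits / reps


print(round(familywise_error(20), 3))  # 0.642
print(round(simulate_any_significant(), 3))
print(round(familywise_error(20, 0.05 / 20), 3))  # Bonferroni-adjusted: 0.049
```

Testing 20 unrelated hypotheses at alpha = 0.05 gives a roughly 64% chance of at least one false positive, which is why the number of analyses run should be planned and reported.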

Q 20. How do you handle outliers in your data when conducting hypothesis testing?

Outliers are data points significantly different from other observations. Handling them requires careful consideration. Simply removing them without justification is inappropriate.

First, investigate why the outlier exists. Is it a data entry error? Is it a genuinely unusual observation? If it’s an error, correct it or remove it. If it’s a genuine observation, consider using robust statistical methods less sensitive to outliers, such as median instead of mean, or non-parametric tests. You should clearly document your approach to handling outliers in your report.

Visualization (box plots, scatter plots) can help identify outliers. Always check your data thoroughly.
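Box plots typically flag points beyond 1.5 times the interquartile range (IQR) from the quartiles, and the same rule can be applied in code to list candidates for investigation. This sketch uses `statistics.quantiles` (default "exclusive" method) on made-up data; the function name is illustrative, and flagged points should be examined, not deleted automatically.

```python
from statistics import quantiles


def iqr_outliers(data, k=1.5):
    """Return points outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's fences)."""
    q1, _, q3 = quantiles(data, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [x for x in data if x < low or x > high]


print(iqr_outliers([10, 11, 12, 13, 12, 11, 10, 50]))  # [50]
```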

Q 21. How do you deal with missing data when conducting hypothesis testing?

Missing data is a common problem. Ignoring it can bias results. Strategies for handling missing data include:

- Deletion: Removing observations with missing data. This is simple but can reduce power and introduce bias if data is not missing completely at random (MCAR).

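- Imputation: Replacing missing values with estimated values. Methods include mean/median imputation, regression imputation, and multiple imputation. Single imputation (such as filling in the mean) understates variability; multiple imputation accounts for the uncertainty in the imputed values and is generally preferred when data are missing at random (MAR) rather than MCAR. The choice depends on the pattern of missingness and the type of data.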

- Analysis methods robust to missing data: Some statistical methods, such as maximum likelihood estimation (for example, full-information maximum likelihood or mixed-effects models), use all available observations and remain valid when data are missing at random.

The best approach depends on the extent and pattern of missing data and the nature of the study. Always document your handling of missing data transparently.
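A small example shows why simple mean imputation is risky: it preserves the mean but shrinks the spread, which makes standard errors too small and tests overconfident. The data and helper name are hypothetical; `None` marks a missing value.

```python
from statistics import fmean, stdev


def mean_impute(values):
    """Replace None with the mean of the observed values (single imputation)."""
    observed = [v for v in values if v is not None]
    fill = fmean(observed)
    return [fill if v is None else v for v in values]


raw = [2, 4, 6, None, 8, None]  # hypothetical measurements; None marks missing
complete_cases = [v for v in raw if v is not None]
imputed = mean_impute(raw)
print(fmean(complete_cases), fmean(imputed))  # 5.0 5.0
print(round(stdev(complete_cases), 3), round(stdev(imputed), 3))  # 2.582 2.0
```

The imputed values add "observations" that sit exactly at the mean, so the sample looks both larger and less variable than it really is.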

Q 22. How do you interpret the results of a hypothesis test in a practical context?

Interpreting the results of a hypothesis test involves assessing whether the evidence supports rejecting the null hypothesis. The null hypothesis is a statement of no effect or no difference. We use statistical tests (like t-tests, ANOVA, chi-squared tests) to calculate a p-value. The p-value represents the probability of observing the obtained results (or more extreme results) if the null hypothesis were true.

Practical Interpretation: A small p-value (typically less than 0.05) suggests that the observed results are unlikely to have occurred by chance alone if the null hypothesis were true. This leads us to reject the null hypothesis and conclude that there is statistically significant evidence to support the alternative hypothesis (your research hypothesis). Conversely, a large p-value (greater than 0.05) means we fail to reject the null hypothesis – there isn’t enough evidence to support the alternative hypothesis. It’s crucial to remember that failing to reject the null hypothesis doesn’t prove it’s true; it simply means we lack sufficient evidence to reject it. The context of the study, effect size, and potential biases should also be considered when interpreting p-values. We need to understand the practical significance alongside the statistical significance.

Example: Let’s say we’re testing if a new drug lowers blood pressure. Our null hypothesis is that the drug has no effect. If our p-value is 0.01, we reject the null hypothesis and conclude that the drug significantly lowers blood pressure. However, we would also consider the magnitude of the blood pressure reduction (effect size): a statistically significant but small reduction might not be clinically meaningful.
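The reasoning in this answer (check significance first, then check whether the effect is large enough to matter) can be written as a small decision rule. This is a hypothetical helper: the "minimum important effect" must come from domain knowledge and be set before looking at the data, and the 5 mmHg threshold in the usage line is made up for illustration.

```python
def interpret(p_value, effect, min_important_effect, alpha=0.05):
    """Hypothetical helper combining statistical and practical significance.

    effect: observed effect in the outcome's units (e.g., mmHg change).
    min_important_effect: smallest change that matters in practice (set in advance).
    """
    if p_value >= alpha:
        return "not significant: insufficient evidence to reject H0 (this does not prove H0)"
    if abs(effect) >= min_important_effect:
        return "significant and practically meaningful"
    return "significant but possibly too small to matter in practice"


# Hypothetical: a 2 mmHg reduction with p = 0.01, against a 5 mmHg threshold.
print(interpret(0.01, -2.0, 5.0))
```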

Q 23. How do you communicate the results of a hypothesis test to a non-technical audience?

Communicating hypothesis test results to a non-technical audience requires avoiding jargon and focusing on clear, concise language. Instead of using terms like ‘p-value’ or ‘statistical significance,’ explain the findings in plain language, using relatable analogies.

Strategies:

- Use visuals: Charts, graphs, and simple tables can effectively convey complex information.

- Focus on the story: Explain the research question, the findings, and their implications in a narrative style.

- Use analogies: Compare statistical results to everyday scenarios to make them more understandable.

- Avoid jargon: Replace technical terms with simpler explanations. For example, instead of ‘rejecting the null hypothesis,’ say ‘we found evidence to support the idea that…’

- Focus on the practical implications: Highlight how the findings affect decision-making or real-world outcomes.

Example: Instead of saying, ‘Our hypothesis test revealed a statistically significant difference (p < 0.05) between the two groups,’ you might say, ‘Our study showed that the new marketing campaign led to a noticeable increase in sales compared to the old campaign. This increase was unlikely to have occurred by chance.’

Q 24. Describe a situation where you had to generate and test a hypothesis. What were the results?

In a previous role, I was tasked with evaluating the effectiveness of a new customer onboarding process. My hypothesis was that the new process would reduce customer churn (rate at which customers stop using a service) compared to the old process.

Hypothesis Generation: Based on my observations and user feedback, I hypothesized that the new onboarding process, which included more personalized tutorials and proactive support, would significantly decrease churn rates.

Hypothesis Testing: I collected data on churn rates for customers using both the old and new onboarding processes over a three-month period. I then conducted a two-sample t-test to compare the mean churn rates of the two groups.

Results: The t-test revealed a statistically significant difference (p<0.01) between the two groups, with the new onboarding process resulting in a significantly lower churn rate. This supported my hypothesis and led to the adoption of the new process across the organization.
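When churn is recorded per customer as a yes/no outcome, a common alternative to a t-test is the two-proportion z-test (equivalent to a chi-square test on the 2×2 table of group versus churned). The sketch below uses only the standard library; the counts are hypothetical, chosen for illustration, and are not data from the project described above.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(events_a, n_a, events_b, n_b):
    """Two-sided z-test for H0: the two groups have the same proportion."""
    p_a, p_b = events_a / n_a, events_b / n_b
    pooled = (events_a + events_b) / (n_a + n_b)  # common proportion under H0
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: old onboarding 120 of 1,000 churned; new onboarding 80 of 1,000.
z, p = two_proportion_z_test(120, 1000, 80, 1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

If customers were not randomly assigned to the two processes (for example, old versus new onboarding in different months), differences between the cohorts could also explain a gap in churn, so the design matters as much as the test.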

Q 25. What tools or software are you familiar with for conducting hypothesis testing (e.g., R, Python, SPSS)?

I’m proficient in several tools for conducting hypothesis testing:

- R: A powerful open-source statistical software environment with extensive packages for statistical analysis (e.g., `t.test()`, `aov()`, `chisq.test()`).

- Python (with libraries like SciPy and Statsmodels): Python offers flexibility and scalability, particularly for large datasets. The `scipy.stats` module provides a wide range of statistical tests. For example, an independent two-sample t-test can be performed using `scipy.stats.ttest_ind()`.

- SPSS: A user-friendly commercial software package commonly used in the social sciences for statistical analysis. It provides a point-and-click interface suitable for researchers less familiar with coding.

My choice of tool depends on the specific project requirements, dataset size, and the level of customization needed.

Q 26. How do you ensure the validity and reliability of your hypothesis testing process?

Ensuring the validity and reliability of hypothesis testing involves meticulous planning and execution. Key steps include:

- Proper study design: A well-defined research question, a representative sample, and a robust experimental design are essential. Randomization and blinding techniques minimize bias.

- Data quality control: Thoroughly checking data for errors and inconsistencies is critical. Data cleaning and preprocessing are crucial steps.

- Appropriate statistical tests: Selecting the correct statistical test based on the data type and research question is crucial for obtaining valid results.

- Assumption checks: Most statistical tests have assumptions (e.g., normality, independence). Checking these assumptions before running the test is vital to avoid invalid conclusions. Transformations or alternative tests may be needed if assumptions are violated.

- Replication: If feasible, repeating the study to confirm the findings strengthens the reliability of the results.

- Peer review: Having another expert review the methodology and results helps identify potential flaws or biases.

Q 27. Explain the importance of defining success metrics before starting hypothesis testing.

Defining success metrics before starting hypothesis testing is crucial because it provides a clear framework for evaluating the results and ensuring the study aligns with the overall goals. Without well-defined metrics, it’s difficult to interpret the results meaningfully or determine if the hypothesis was successfully tested.

Importance:

- Focus: Clearly defined metrics keep the research focused on relevant aspects.

- Measurability: Metrics provide a way to quantify the impact of the intervention or phenomenon under study.

- Decision-making: Clear metrics facilitate informed decision-making based on the results of the hypothesis test.

- Objectivity: Well-defined metrics minimize subjective interpretation of the results.

Example: If testing the effectiveness of a new marketing campaign, success metrics might include conversion rates, click-through rates, and customer acquisition cost. These metrics define what constitutes a successful outcome and guide the analysis and interpretation of results.

Q 28. How do you handle unexpected results during a hypothesis test?

Handling unexpected results in a hypothesis test requires a systematic approach. Simply dismissing unexpected findings is not good scientific practice.

Strategies:

- Review the methodology: Carefully check for errors in the experimental design, data collection, or analysis. Look for potential biases.

- Examine the data: Investigate whether outliers or unexpected data patterns contributed to the unexpected results. Consider data transformations or robust statistical methods.

- Explore alternative explanations: Consider whether other factors or variables might explain the results. Formulate new hypotheses based on the unexpected findings.

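- Increase sample size: A larger sample size can provide more power to detect significant effects and reduce the impact of random variation. Plan this in advance or in a follow-up study; adding observations after peeking at the results and stopping once p < 0.05 inflates the Type I error rate.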

- Replicate the study: If resources allow, repeat the experiment to see if the unexpected results are reproducible.

- Re-evaluate the assumptions: Check if the assumptions of the statistical test were met. If not, consider using a more suitable method.

- Acknowledge limitations: Report the unexpected results honestly and acknowledge any limitations of the study that might have contributed to them.

Unexpected results can often lead to new insights and discoveries. They highlight the need for critical thinking and a willingness to explore alternative explanations.

Key Topics to Learn for Hypothesis Generation and Testing Interview

- Defining a Testable Hypothesis: Understanding the criteria for a strong, falsifiable hypothesis, including clear variables and measurable outcomes. This includes exploring different types of hypotheses (e.g., directional, non-directional).

- Research Design & Methodology: Choosing appropriate research methods (e.g., experimental, observational, correlational) to effectively test your hypothesis. Consider factors like sample size, data collection techniques, and potential biases.

- Data Analysis & Interpretation: Mastering the statistical techniques needed to analyze your data and draw meaningful conclusions. Understanding p-values, confidence intervals, and effect sizes is crucial.

- Understanding Bias and Validity: Identifying and mitigating potential sources of bias in your research design and data analysis. Knowing the difference between internal and external validity is essential for reliable results.

- Communicating Results: Effectively presenting your findings, both verbally and in written form, using clear and concise language appropriate for your audience. This includes the ability to explain complex concepts simply.

- Iterative Hypothesis Refinement: Understanding that hypothesis testing is an iterative process. Knowing how to adapt your hypotheses based on the results obtained, and design subsequent experiments to further investigate findings.

- Practical Applications: Exploring real-world examples of hypothesis generation and testing across different fields (e.g., A/B testing in marketing, clinical trials in medicine, data analysis in finance).

Next Steps

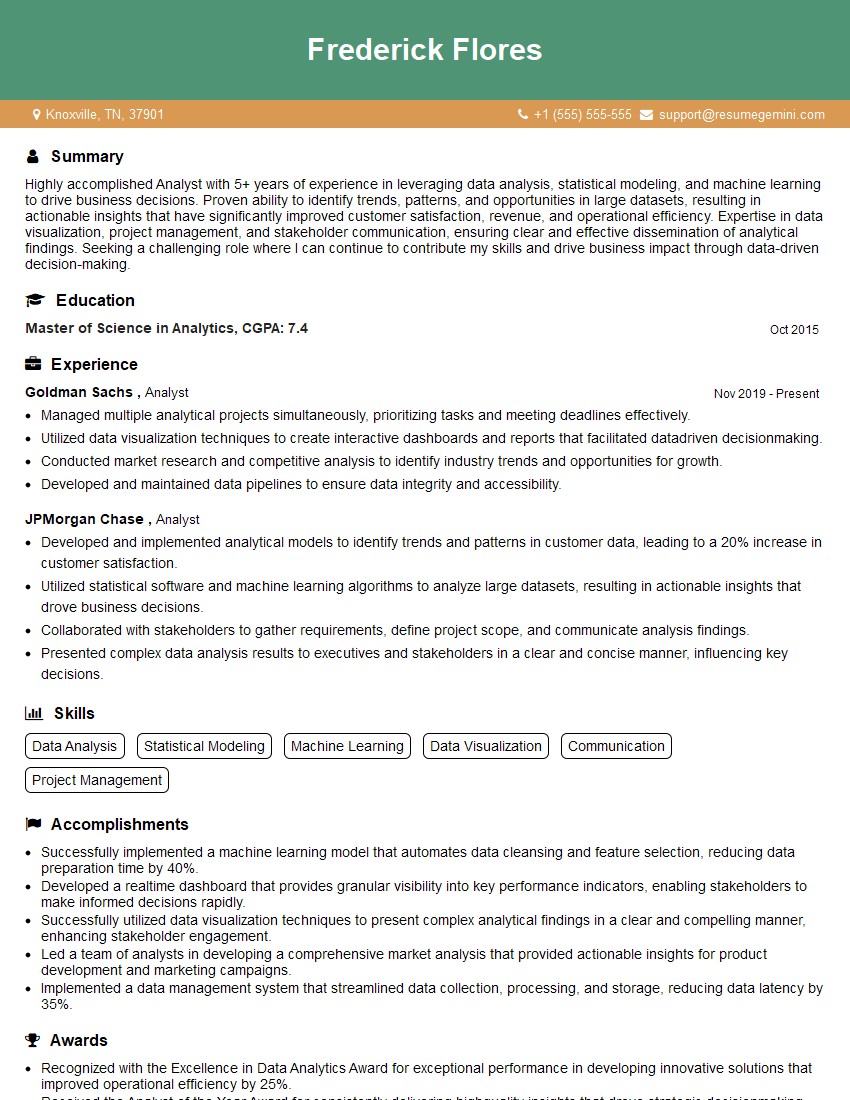

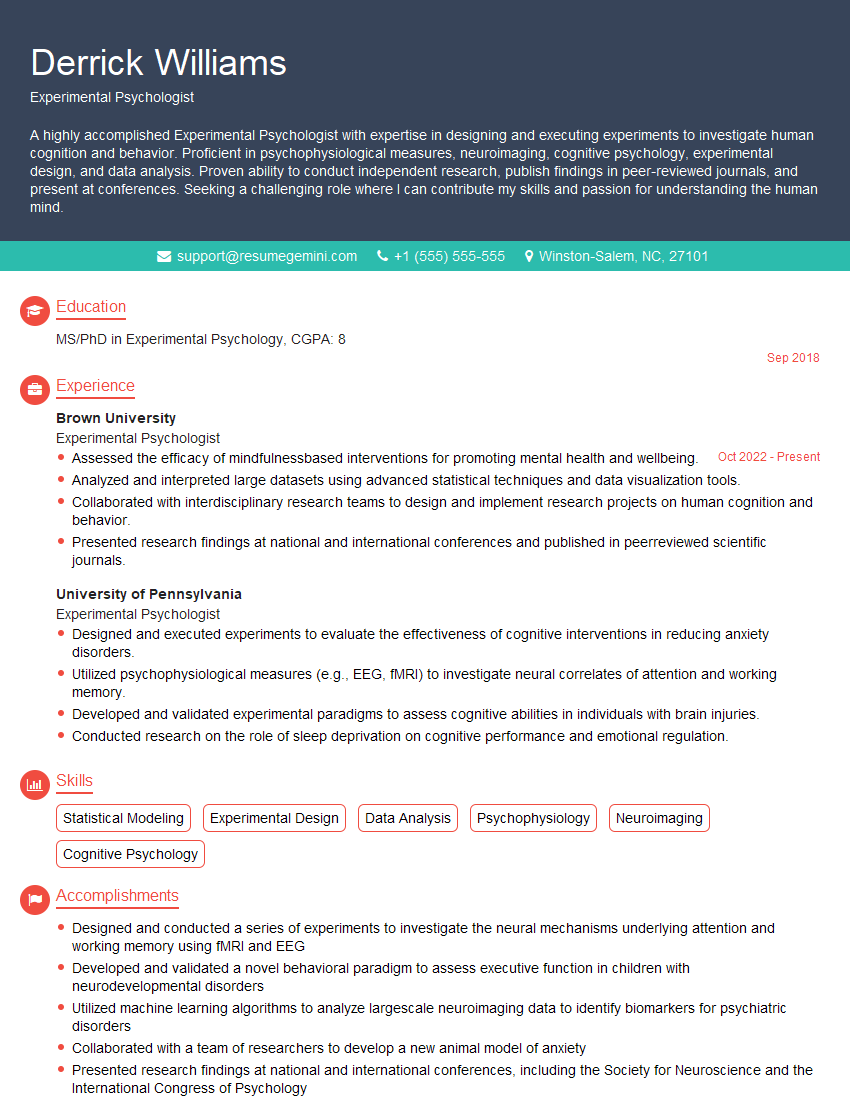

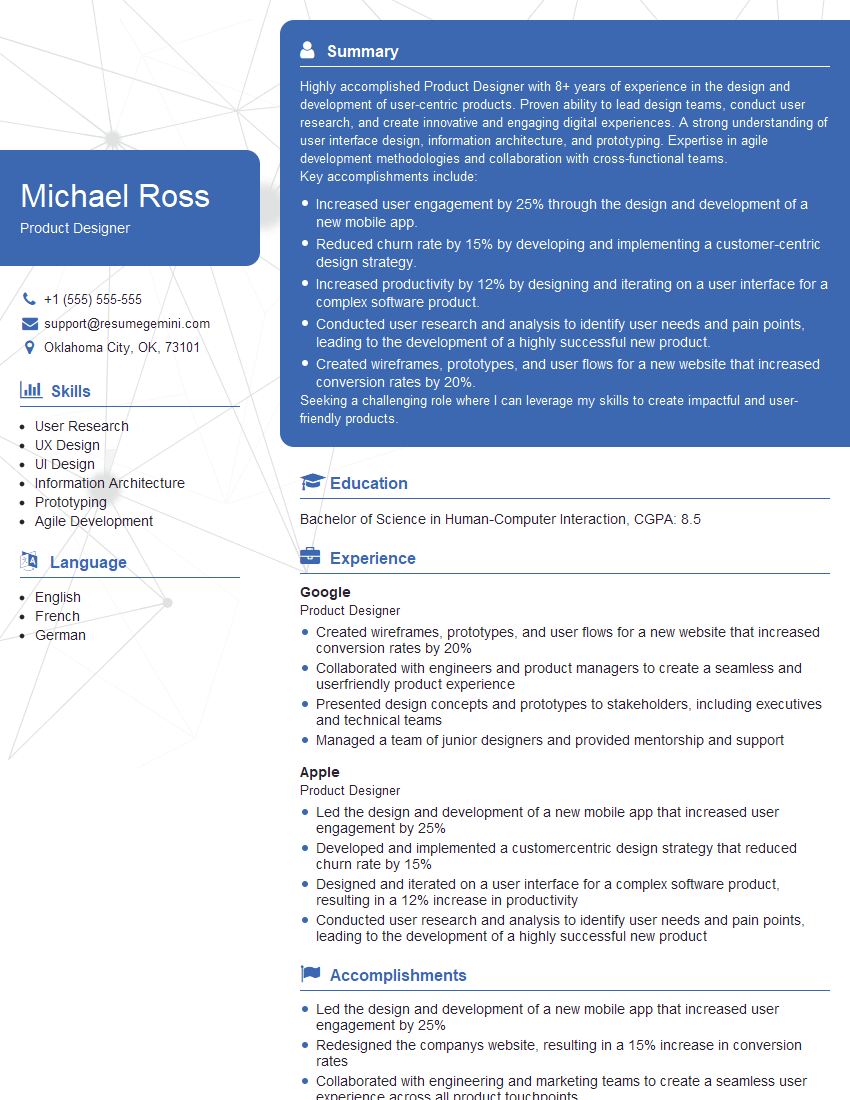

Mastering hypothesis generation and testing is a highly valuable skill sought after in many competitive fields, significantly boosting your career prospects and opening doors to advanced roles. To maximize your job search success, it’s crucial to present your skills effectively. Creating an ATS-friendly resume is key to getting your application noticed. ResumeGemini is a trusted resource to help you build a professional and impactful resume that highlights your abilities. Examples of resumes tailored to showcase expertise in Hypothesis Generation and Testing are available within ResumeGemini to help guide you. Take the next step and create a resume that truly reflects your capabilities and ambitions.
