Are you ready to stand out in your next interview? Understanding and preparing for Water Quality Modeling (QUAL2K, WASP) interview questions is a game-changer. In this blog, we’ve compiled key questions and expert advice to help you showcase your skills with confidence and precision. Let’s get started on your journey to acing the interview.

Questions Asked in Water Quality Modeling (QUAL2K, WASP) Interview

Q 1. Explain the differences between QUAL2K and WASP.

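QUAL2K and WASP are both widely used water quality models, but they differ in several key aspects. QUAL2K is a one-dimensional, steady-flow model for rivers and streams, while WASP is a dynamic compartment model whose segments can be arranged in one, two, or three dimensions. Think of them as two different cars designed for the same journey: both get you there, but one might be better suited for certain terrains.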

- Complexity: WASP (Water Quality Analysis Simulation Program) is generally considered more complex and feature-rich than QUAL2K. It can handle more sophisticated processes, including multiple layers and more intricate nutrient cycling dynamics.

- Calibration and Parameterization: WASP often requires more extensive calibration and parameterization due to its higher complexity, meaning more data and expertise are needed. QUAL2K, being simpler, can be easier to set up and calibrate, especially for less data-rich scenarios.

- Applications: While both model dissolved oxygen, nutrients, and other water quality parameters, WASP’s capabilities extend to more complex scenarios such as estuaries and reservoirs with stratification. QUAL2K is often more suitable for simpler river systems.

- User Interface: WASP has a steeper learning curve and calls for more model-building experience. QUAL2K runs from a Microsoft Excel workbook, which makes it accessible to a wider range of users.

In essence, the choice between QUAL2K and WASP depends heavily on the specific water body’s characteristics, the available data, and the level of detail required in the simulation.

Q 2. Describe your experience calibrating a QUAL2K model.

Calibrating a QUAL2K model is an iterative process involving comparing model outputs to observed field data. Imagine you’re trying to adjust the knobs on a complex machine to get the perfect output – you need to refine those settings until the machine mimics reality accurately. My approach typically involves these steps:

- Data Gathering and Preprocessing: This involves collecting historical data on water quality parameters (e.g., DO, BOD, nutrients) and flow rates at various points along the river reach.

- Initial Model Setup: Setting up the model geometry, defining the reach segments, and inputting initial estimates for model parameters (e.g., reaeration coefficient, BOD decay rate).

- Parameter Estimation: Combining manual adjustment with automated optimization to minimize the difference between the model’s predictions and the observed data. A sensitivity analysis first identifies the key parameters, and least-squares or other statistical methods then refine them. I’ve had success using the PEST (Parameter ESTimation) software to help automate this iterative process.

- Model Validation: After calibration using a portion of the data set, the model’s ability to predict water quality in an independent data set is assessed. This is crucial in ensuring the model’s predictive capability.

- Documentation: Maintaining thorough documentation of the calibration process, including the parameter values and their uncertainties, is essential.

For instance, during a project modeling a section of River X, I discovered that adjusting the reaeration coefficient strongly affected dissolved oxygen predictions. By meticulously calibrating this and other parameters, I substantially improved the model’s predictive capability, ultimately informing management decisions on pollution control strategies.
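The idea of tuning a reaeration coefficient against observations can be illustrated with a minimal sketch. It does not call QUAL2K or PEST. It assumes the classic Streeter-Phelps oxygen-sag equation as a stand-in model, synthetic "observed" deficits generated from a known coefficient, and a golden-section search over a hypothetical plausible range. All names and values (`do_deficit`, `KD`, `L0`, `D0`, the 0.4 to 2.0 per day range) are illustrative assumptions.

```python
import math

# Assumed (hypothetical) fixed inputs for the stand-in model
KD = 0.3   # BOD decay rate, 1/day
L0 = 20.0  # initial ultimate BOD, mg/L
D0 = 1.0   # initial DO deficit, mg/L


def do_deficit(t, ka):
    """Streeter-Phelps DO deficit (mg/L) after travel time t (days); requires ka != KD."""
    sag = (KD * L0 / (ka - KD)) * (math.exp(-KD * t) - math.exp(-ka * t))
    return sag + D0 * math.exp(-ka * t)


def sum_squared_error(ka, times, observed):
    """Objective function: squared misfit between simulated and observed deficits."""
    return sum((do_deficit(t, ka) - obs) ** 2 for t, obs in zip(times, observed))


def golden_section_min(f, lo, hi, tol=1e-6):
    """Minimize a unimodal function f on [lo, hi] by golden-section search."""
    inv_phi = (math.sqrt(5) - 1) / 2
    a, b = lo, hi
    while b - a > tol:
        c = b - inv_phi * (b - a)
        d = a + inv_phi * (b - a)
        if f(c) < f(d):
            b = d  # minimum lies in [a, d]
        else:
            a = c  # minimum lies in [c, b]
    return (a + b) / 2


# Synthetic "observations" generated with a known coefficient (not field data)
TRUE_KA = 0.8
times = [0.5, 1.0, 2.0, 3.0, 4.0]
observed = [do_deficit(t, TRUE_KA) for t in times]

# Search only within an assumed plausible range, as a calibration would
calibrated_ka = golden_section_min(
    lambda ka: sum_squared_error(ka, times, observed), 0.4, 2.0
)
print(f"calibrated ka = {calibrated_ka:.3f} 1/day")
```

Because the observations here are noise-free, the search should recover the coefficient used to generate them. With real field data the minimum would be shallower, and several parameters would usually be adjusted together.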

Q 3. How do you handle data gaps in water quality modeling?

Data gaps are a common challenge in water quality modeling. Think of it like assembling a jigsaw puzzle with missing pieces – you need to find creative ways to fill those gaps. I generally employ several strategies:

- Data Interpolation/Extrapolation: Using statistical techniques (e.g., linear interpolation, spline interpolation) to estimate missing values based on available data. This approach requires caution and is best used when the gaps are small and the data show clear trends.

- Data Imputation: More sophisticated methods like multiple imputation can be applied for larger data gaps, generating multiple plausible imputed data sets. These sets are then used to assess the impact of data uncertainty on modeling results.

- Spatial and Temporal Correlation: Exploiting correlations between data points to estimate values. For example, if you have good data for a particular parameter at one location, you can use its relationship to values at other locations to help predict the missing data.

- Expert Judgment: In some cases, expert knowledge and professional judgment may be required, particularly when dealing with significant data gaps or unusual situations.

- Sensitivity Analysis: Exploring how sensitive the model is to the uncertain or gap-filled inputs shows which outputs are most affected by those uncertainties.

The choice of method depends on the extent and nature of the gaps, the overall data quality, and the sensitivity of the model to the missing data. Properly acknowledging and managing data uncertainty is vital for transparent and robust modeling.
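As a minimal sketch of the interpolation strategy, the function below fills only short gaps and leaves longer ones untouched so they can be handled by another method. It assumes evenly spaced samples with missing values stored as `None`. The function name, the `max_gap` threshold, and the sample series are hypothetical.

```python
def fill_small_gaps(values, max_gap=2):
    """Linearly interpolate runs of None no longer than max_gap.

    Longer runs and gaps at either end of the series are left as None.
    Assumes evenly spaced samples.
    """
    filled = list(values)
    n = len(filled)
    i = 0
    while i < n:
        if filled[i] is None:
            start = i
            while i < n and filled[i] is None:
                i += 1
            end = i  # index of the first valid value after the gap (or n)
            gap_len = end - start
            has_both_neighbors = start > 0 and end < n
            if has_both_neighbors and gap_len <= max_gap:
                left, right = filled[start - 1], filled[end]
                for j in range(start, end):
                    frac = (j - start + 1) / (gap_len + 1)
                    filled[j] = left + frac * (right - left)
        else:
            i += 1
    return filled


# Hypothetical dissolved oxygen series (mg/L) with one short and one long gap
do_series = [8.0, None, 7.0, None, None, None, 6.0]
filled_series = fill_small_gaps(do_series, max_gap=2)
print(filled_series)
```

Keeping long gaps as `None` makes it explicit where interpolation was judged unsafe, which helps when documenting data uncertainty.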

Q 4. What are the limitations of using QUAL2K or WASP for specific water bodies?

QUAL2K and WASP, while powerful tools, have limitations. These models rely on simplifying assumptions that may not always hold true in real-world scenarios.

- One-Dimensional Nature: QUAL2K treats the river as a one-dimensional, well-mixed channel, and WASP is often set up the same way even though its segments can be arranged in two or three dimensions. A one-dimensional setup may not accurately represent complex hydrodynamic processes in stratified lakes, estuaries, or areas with strong lateral or vertical gradients, and it can oversimplify complex 3D flows and transport processes.

- Simplified Biogeochemical Processes: The models use simplified representations of complex biogeochemical processes. For instance, nutrient cycling models are commonly based on simplistic assumptions about the transformations and interactions between different nutrients.

- Data Requirements: Both models require relatively extensive data for calibration and validation. The lack of sufficient, high-quality data can limit the accuracy and applicability of the models.

- Spatial Scale: The models are typically applied to specific reaches of rivers or smaller water bodies. Their applicability to very large spatial scales is limited.

- Specific Water Body Characteristics: Some water bodies have unique characteristics (e.g., significant tidal influences, extreme temperature variations) that may exceed the capabilities of these models.

For example, applying QUAL2K to a highly stratified lake with complex sediment interactions might yield inaccurate results. In such cases, more sophisticated, three-dimensional models might be necessary.

Q 5. Explain the concept of model sensitivity analysis in the context of QUAL2K/WASP.

Model sensitivity analysis helps identify which parameters most significantly influence model outputs. Think of it as testing the effect each ‘knob’ has on the final ‘product’ of your model. This is crucial for both calibration and uncertainty analysis.

In QUAL2K/WASP, sensitivity analysis can be performed using various techniques, including:

- One-at-a-time (OAT) method: Each parameter is varied individually while keeping others constant. This is a simple approach but might miss interactions between parameters.

- Global sensitivity analysis methods: Variance-based techniques such as Sobol indices vary all parameters together and provide a more comprehensive assessment of parameter influence, accounting for parameter interactions.

- Morris method: A screening method that repeats one-at-a-time changes from many starting points (elementary effects), giving a low-cost ranking of parameter importance and an indication of interactions or nonlinearity.

The results of the sensitivity analysis guide calibration efforts, prioritizing the accurate estimation of the most influential parameters. They also inform the uncertainty analysis by identifying parameters that contribute most to the uncertainty in the model predictions. For instance, if we discover through sensitivity analysis that the reaeration coefficient dramatically impacts DO predictions, we will pay more attention to obtaining accurate estimates for that coefficient during model calibration.
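The one-at-a-time approach can be sketched in a few lines. This example does not run QUAL2K or WASP. It assumes the Streeter-Phelps DO deficit as a stand-in model and computes a normalized sensitivity (percent change in output per percent change in parameter) with a central difference. The parameter values and the 10% step are hypothetical.

```python
import math


def do_deficit(params, t=2.0):
    """Streeter-Phelps DO deficit (mg/L) at travel time t (days); stand-in model."""
    ka, kd, l0, d0 = params["ka"], params["kd"], params["L0"], params["D0"]
    sag = (kd * l0 / (ka - kd)) * (math.exp(-kd * t) - math.exp(-ka * t))
    return sag + d0 * math.exp(-ka * t)


def oat_sensitivity(model, base, rel_step=0.10):
    """Normalized one-at-a-time sensitivity of the model output to each parameter."""
    y0 = model(base)
    sensitivities = {}
    for name, value in base.items():
        up = dict(base, **{name: value * (1 + rel_step)})
        down = dict(base, **{name: value * (1 - rel_step)})
        # Central difference, scaled to (dY / Y) / (dP / P)
        sensitivities[name] = (model(up) - model(down)) / (2 * rel_step * y0)
    return sensitivities


# Hypothetical base-case parameters (rates in 1/day, concentrations in mg/L)
base = {"ka": 0.8, "kd": 0.3, "L0": 20.0, "D0": 1.0}
sens = oat_sensitivity(do_deficit, base)

# Rank parameters by the magnitude of their influence
for name in sorted(sens, key=lambda p: abs(sens[p]), reverse=True):
    print(f"{name}: {sens[name]:+.3f}")
```

A negative value for `ka` means that more reaeration lowers the deficit. As the text notes, this approach keeps the other parameters fixed, so it cannot reveal interactions.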

Q 6. How do you validate a water quality model?

Model validation is the crucial step of verifying the model’s ability to accurately predict water quality. It’s like testing your recipe by making the dish – you want to see if it meets your expectations. This involves using independent data that were not used during the model calibration. Common techniques include:

- Comparison of model predictions to independent measurements: This involves collecting new water quality data and comparing them to the model’s predictions. Statistical measures (e.g., R-squared, RMSE) are used to quantify the agreement between the model and observations.

- Predictive performance measures: Assess model accuracy, precision, and bias. For example, comparing predicted concentrations against independent measurements at various points in time and space.

- Qualitative assessments: Examining the overall trends and patterns in the model outputs to ensure consistency with known processes and behaviours of the water body.

- Uncertainty analysis: Assessing how model uncertainty impacts the validation results. This gives us a range of possible predictions rather than just a single value.

For example, in a validation exercise, I used data from a different year than the calibration data to see if the model could accurately predict water quality under different conditions. If the model performs well with the independent data, we can have more confidence in its accuracy and predictive capability.
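The statistical comparison can be sketched with three common measures: RMSE, mean bias, and the Nash-Sutcliffe efficiency (NSE). The observed and simulated values below are hypothetical numbers for illustration, not results from any project.

```python
import math


def rmse(observed, simulated):
    """Root mean square error, in the units of the data."""
    n = len(observed)
    return math.sqrt(sum((s - o) ** 2 for o, s in zip(observed, simulated)) / n)


def mean_bias(observed, simulated):
    """Average of (simulated - observed); positive means over-prediction."""
    return sum(s - o for o, s in zip(observed, simulated)) / len(observed)


def nash_sutcliffe(observed, simulated):
    """NSE: 1 is a perfect fit; 0 means no better than the observed mean."""
    mean_obs = sum(observed) / len(observed)
    residual = sum((o - s) ** 2 for o, s in zip(observed, simulated))
    variance = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - residual / variance


# Hypothetical independent validation data: dissolved oxygen in mg/L
observed_do = [6.0, 7.0, 8.0, 9.0]
simulated_do = [6.5, 7.0, 7.5, 9.0]

print(f"RMSE = {rmse(observed_do, simulated_do):.3f} mg/L")
print(f"Bias = {mean_bias(observed_do, simulated_do):.3f} mg/L")
print(f"NSE  = {nash_sutcliffe(observed_do, simulated_do):.3f}")
```

Reporting bias alongside RMSE helps separate a systematic offset from random scatter, since errors of opposite sign can cancel in the bias but not in the RMSE.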

Q 7. Describe your experience with different water quality parameters and their modeling in QUAL2K/WASP.

My experience encompasses a wide range of water quality parameters commonly modeled using QUAL2K/WASP.

- Dissolved Oxygen (DO): I have extensively modeled DO, focusing on factors affecting its concentration such as reaeration, BOD decay, and photosynthesis. For instance, in one project, we modeled the impact of wastewater discharge on downstream DO levels.

- Biochemical Oxygen Demand (BOD): I have incorporated BOD into models to assess the impact of organic pollutants on oxygen levels and used various models of BOD decay kinetics.

- Nutrients (Nitrogen and Phosphorus): I’ve worked with various forms of nitrogen (nitrate, nitrite, ammonia) and phosphorus, modeling their transport and transformation in rivers and lakes. I’ve used this work to assess nutrient loading impacts from agricultural runoff.

- Temperature: Temperature is a crucial parameter affecting many other water quality aspects. I’ve incorporated temperature dynamics into models to better represent the impact on DO saturation and biological processes.

- Phytoplankton and Algae: I have experience modeling phytoplankton growth and its relationship with nutrient availability and light penetration. In a recent study, we assessed the impact of different nutrient reduction strategies on algal blooms in a lake.

- Sediment transport and associated contaminants: These are more commonly modeled in WASP, and the choice of sediment and contaminant representation depends on the project specifications.

My experience includes using these parameters to support decision making in various contexts, from regulatory compliance to water resource management and environmental impact assessments.

Q 8. How do you incorporate point and non-point source pollution into your models?

Incorporating pollution sources into QUAL2K or WASP involves specifying their location, type, and characteristics. Point sources, like a wastewater treatment plant discharge, are easily represented by defining their location and the pollutant loading rates (e.g., kg/day of BOD, nitrogen, phosphorus). We would input this directly into the model as a boundary condition or internal source term at the specific node representing the discharge location.

Non-point sources, like agricultural runoff, are more complex. They are distributed across a watershed and their contribution varies with rainfall, land use, and other factors. We address this using sub-models or empirical relationships. For instance, we might use a GIS-based approach to estimate runoff from different land-use areas within the watershed, coupling this with water quality parameters measured in the field. This then allows us to estimate the distributed load of pollutants entering the water body at various points along the stream network, effectively representing the diffuse nature of non-point source pollution.

For example, in a modeling project for a river basin impacted by agricultural runoff, I’ve used a combination of a land-use map, rainfall data, and a curve number method to estimate daily runoff volumes and pollutant concentrations. These were then distributed proportionally to the river segments impacted by the different land uses.
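The curve number step can be sketched as follows. The runoff formula is the standard SCS curve number equation in metric units with the conventional initial abstraction of 0.2 S. Converting runoff to a pollutant load with an event mean concentration is an assumption added here for illustration, and the area, curve number, and concentration are hypothetical.

```python
def scs_runoff_mm(rain_mm, curve_number, ia_ratio=0.2):
    """Daily runoff depth (mm) from rainfall depth (mm) using the SCS curve number method."""
    storage = 25400.0 / curve_number - 254.0  # potential maximum retention S, mm
    initial_abstraction = ia_ratio * storage  # rainfall lost before runoff begins
    if rain_mm <= initial_abstraction:
        return 0.0
    excess = rain_mm - initial_abstraction
    return excess ** 2 / (excess + storage)


def daily_load_kg(runoff_mm, area_ha, concentration_mg_per_l):
    """Pollutant load (kg/day) from runoff depth, contributing area, and concentration."""
    volume_m3 = runoff_mm * area_ha * 10.0  # 1 mm over 1 ha equals 10 m3
    return volume_m3 * concentration_mg_per_l / 1000.0  # mg/L equals g/m3; g to kg


# Hypothetical agricultural sub-area: 100 ha, CN = 80, total phosphorus at 2 mg/L
runoff = scs_runoff_mm(rain_mm=50.0, curve_number=80)
load = daily_load_kg(runoff, area_ha=100.0, concentration_mg_per_l=2.0)
print(f"runoff = {runoff:.2f} mm, load = {load:.2f} kg/day")
```

In a workflow like the one described above, a calculation of this kind would be repeated for each land-use area and the resulting loads assigned to the river segments that receive them.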

Q 9. What are the key assumptions behind QUAL2K/WASP?

QUAL2K and WASP, while powerful, rely on several key assumptions. One crucial assumption is that the water body is well-mixed within each segment or reach of the model. This means that concentrations are assumed to be uniform across the cross-section of the river, lake, or estuary. This is a simplification; reality involves variations in flow and concentration across the cross-section. Another important assumption is that the processes represented (advection, dispersion, reaction) are adequately described by the selected equations and parameters. QUAL2K further assumes steady flow, while a dynamic model such as WASP treats conditions as effectively constant within each time step. Many reactions are also represented with first-order (linear) kinetics, which might not hold in real-world conditions, especially at high concentrations. Finally, the accuracy of the model is heavily dependent on the quality and quantity of input data, such as flow rates, pollutant loads, and water quality parameters. A flawed assumption anywhere in the input chain will undermine model accuracy.

Q 10. Explain your understanding of the advective-dispersive equation and its role in water quality modeling.

The advective-dispersive equation is the backbone of many water quality models, including QUAL2K and WASP. It describes how a pollutant’s concentration changes over time and space due to advection (transport with the flow) and dispersion (mixing caused by turbulence and other factors). Imagine dropping a dye into a flowing stream; advection carries it downstream, while dispersion spreads it out. The one-dimensional form tracks only the spreading along the direction of flow. It’s typically written as:

∂C/∂t = -U(∂C/∂x) + D(∂²C/∂x²) - kC

Where:

- C is the pollutant concentration

- t is time

- x is distance

- U is the flow velocity

- D is the dispersion coefficient

- k is the first-order decay rate of the pollutant

In water quality modeling, this equation, often solved numerically, is used to track the movement and fate of pollutants through a river or other water body. The dispersion term is particularly important, representing the mixing processes that dilute pollutants and influence their overall transport and fate. The decay term accounts for biological or chemical transformations (e.g., degradation of organic matter).
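To show what solving this equation numerically can look like, here is a minimal explicit finite-difference sketch with upwind advection, central-difference dispersion, and first-order decay. It is not the solver used by QUAL2K or WASP. The grid, the parameter values, and the boundary treatment (zero upstream concentration, zero-gradient outflow) are assumptions chosen for illustration.

```python
def step_ade(c, u, d, k, dx, dt, c_upstream=0.0):
    """Advance cell concentrations by one time step of the 1D advection-dispersion-decay equation."""
    n = len(c)
    new = [0.0] * n
    for i in range(n):
        left = c[i - 1] if i > 0 else c_upstream  # prescribed upstream concentration
        right = c[i + 1] if i < n - 1 else c[i]   # zero-gradient outflow
        advection = -u * (c[i] - left) / dx       # upwind difference, valid for u > 0
        dispersion = d * (right - 2.0 * c[i] + left) / dx ** 2
        decay = -k * c[i]
        new[i] = c[i] + dt * (advection + dispersion + decay)
    return new


def is_stable(u, d, k, dx, dt):
    """Sufficient condition for this explicit scheme to remain stable and non-negative."""
    return u * dt / dx + 2.0 * d * dt / dx ** 2 + k * dt <= 1.0


# Hypothetical reach: 100 cells of 100 m, SI units throughout
U, D, K = 0.1, 5.0, 1e-5  # m/s, m2/s, 1/s
DX, DT = 100.0, 20.0      # m, s
assert is_stable(U, D, K, DX, DT)

initial = [0.0] * 100
initial[10] = 100.0       # instantaneous pulse (mg/L) placed in cell 10
conc = list(initial)
for _ in range(500):      # 500 steps of 20 s = 10,000 s
    conc = step_ade(conc, U, D, K, DX, DT)

peak_cell = conc.index(max(conc))
mass_ratio = sum(conc) / sum(initial)
print(f"peak now in cell {peak_cell}, remaining mass fraction {mass_ratio:.3f}")
```

The pulse moves downstream (advection), flattens (dispersion), and loses mass (decay). The upwind scheme adds some numerical dispersion of its own, which is one reason production models pay close attention to grid spacing and time step.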

Q 11. How do you handle uncertainty in water quality modeling?

Uncertainty is inherent in water quality modeling. We address this through several strategies. First, we thoroughly analyze and document data quality, understanding measurement errors and limitations in input data. Second, we perform sensitivity analysis to assess how variations in model parameters influence the output. This helps identify which parameters have the most significant impact on the results and prioritize data collection or refinement efforts. Third, we use probabilistic methods, such as Monte Carlo simulations, to run the model repeatedly with different parameter sets, drawn from probability distributions reflecting our uncertainty about the values. This provides a range of possible outcomes, rather than a single deterministic prediction. Finally, we compare model results with observed data, and where discrepancies are significant, we explore potential sources of uncertainty, recalibrate parameters, or revise the model structure.

For example, in one project, we used a Monte Carlo approach to simulate the uncertainty in predicting phosphorus concentrations in a lake. By running the model thousands of times with randomly sampled parameters, we obtained a distribution of predicted concentrations, enabling us to quantify the uncertainty and provide a more robust prediction.
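A Monte Carlo run of this kind can be sketched with the standard library alone. The example below does not reproduce the lake phosphorus study. It assumes a Streeter-Phelps DO deficit as a stand-in model and uniform distributions for two rate coefficients. The ranges, sample size, and seed are hypothetical.

```python
import math
import random


def do_deficit(t, ka, kd, l0=20.0, d0=1.0):
    """Streeter-Phelps DO deficit (mg/L) at travel time t (days); stand-in model."""
    sag = (kd * l0 / (ka - kd)) * (math.exp(-kd * t) - math.exp(-ka * t))
    return sag + d0 * math.exp(-ka * t)


def run_monte_carlo(n_runs=5000, seed=42):
    """Sample uncertain parameters, run the model each time, and return sorted outputs."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    outputs = []
    for _ in range(n_runs):
        ka = rng.uniform(0.6, 1.0)  # assumed reaeration range, 1/day
        kd = rng.uniform(0.2, 0.4)  # assumed BOD decay range, 1/day
        outputs.append(do_deficit(2.0, ka, kd))
    outputs.sort()
    return outputs


def percentile(sorted_values, p):
    """Simple empirical percentile (0 to 100) from an already sorted list."""
    index = min(len(sorted_values) - 1, int(p / 100.0 * len(sorted_values)))
    return sorted_values[index]


results = run_monte_carlo()
p5, p50, p95 = (percentile(results, p) for p in (5, 50, 95))
print(f"DO deficit at 2 days: median {p50:.2f} mg/L, 90% interval {p5:.2f} to {p95:.2f} mg/L")
```

Reporting an interval rather than a single number is the point of the exercise. In practice the input distributions would come from calibration results or literature ranges, and correlated parameters would need to be sampled jointly.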

Q 12. Describe your experience with different boundary conditions in water quality models.

Boundary conditions specify the inflow and outflow characteristics of the modeled system. In QUAL2K or WASP, common boundary conditions include:

- Inflow boundary conditions: These define the flow rate and water quality characteristics (e.g., concentrations of pollutants) entering the system. Data from upstream monitoring stations are typically used. Different types exist, such as prescribed concentration, prescribed flux and even dynamic inflow conditions, depending on the data available.

- Outflow boundary conditions: These usually represent how water and pollutants leave the modeled system. Depending on the system, this could involve specifying a constant outflow, zero-gradient outflow (no concentration change at the boundary), or a more complex relationship linked to downstream conditions.

- Initial conditions: These define the initial distribution of water quality variables within the modeled reach before the simulation begins. These might be based on field measurements.

The proper selection of boundary conditions is crucial for accurate model results. For instance, using an incorrect inflow concentration can significantly affect downstream predictions. I’ve encountered scenarios where the boundary conditions were improperly defined, leading to unrealistic model outputs. In such cases, careful review of available data, consultation with field experts, and a thorough understanding of hydrological processes involved are critical to defining appropriate boundary conditions.

Q 13. What are some common errors encountered when using QUAL2K/WASP, and how do you address them?

Common errors in using QUAL2K/WASP often stem from data issues, model setup, or parameter selection. Data errors, like inaccurate flow rates or pollutant loads, can lead to significantly flawed results. Incorrect model setup, such as defining the network improperly or misspecifying the boundary conditions, can also generate unrealistic results. Inappropriate calibration parameters, in turn, can lead to overly optimistic or pessimistic predictions. Furthermore, neglecting to account for model assumptions, as mentioned earlier, can affect the quality of the results.

To address these errors, I follow a structured approach:

- Data validation and QA/QC: Rigorously check data accuracy, consistency, and completeness. Identify and correct or remove outliers.

- Sensitivity analysis: Determine the influence of different parameters on the model output to pinpoint crucial data points and areas for improvement.

- Model calibration and validation: Use observed data to adjust model parameters and ensure the model reasonably replicates reality. Always compare model outputs to independently collected validation data to confirm accuracy.

- Regular checks of model outputs: Review output for any anomalies or unexpected behaviour; unrealistic values (like negative concentrations) are immediate flags for problems.

Experience has taught me to prioritize meticulous data quality control and carefully validate every step of the modeling process, from data collection to final results interpretation. A methodical and iterative approach is key to minimizing errors.
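A routine output check can be as simple as the sketch below, which flags missing, non-finite, or out-of-range values in a simulated series. The function name, the 15 mg/L upper screening limit, and the sample values are hypothetical.

```python
import math


def flag_anomalies(values, lower=0.0, upper=None):
    """Return (index, reason) pairs for missing, non-finite, or out-of-range values."""
    flags = []
    for index, value in enumerate(values):
        if value is None or not math.isfinite(value):
            flags.append((index, "missing or non-finite"))
        elif value < lower:
            flags.append((index, "below lower bound"))
        elif upper is not None and value > upper:
            flags.append((index, "above upper bound"))
    return flags


# Hypothetical simulated dissolved oxygen output (mg/L)
simulated_do = [7.9, 6.4, -0.3, 5.1, float("nan"), 16.2]
issues = flag_anomalies(simulated_do, lower=0.0, upper=15.0)
for index, reason in issues:
    print(f"segment {index}: {reason}")
```

A check like this only points to where something looks wrong. Tracing a flagged value back to its cause, such as a time step that is too large or a mis-specified boundary condition, still requires review of the model setup.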

Q 14. How do you select appropriate model parameters in QUAL2K/WASP?

Selecting appropriate model parameters in QUAL2K/WASP is a crucial step and often involves a combination of literature review, expert judgment, and calibration. We start by reviewing the available literature to find parameter ranges reported for similar systems. Expert knowledge, often from hydrologists and water quality scientists familiar with the study area, helps in refining these ranges. We then proceed with a calibration process, systematically adjusting parameters within their plausible ranges until the model reasonably reproduces observed water quality data. Several calibration techniques exist, including manual adjustment and automated optimization algorithms. The goal is to find a parameter set that minimizes the discrepancy between model predictions and observed values, while ensuring the parameters remain within scientifically plausible bounds. The entire process is guided by good scientific judgment and validation against independent datasets.

For example, in a project modeling a river, I began by using literature values for the reaeration coefficient. Then, I adjusted this parameter during calibration, comparing modeled dissolved oxygen concentrations against observed data. The final value reflected both existing literature and the observed data, ensuring that the selected parameter remains realistically within expected ranges.

Q 15. How do you interpret the results of a QUAL2K/WASP simulation?

Interpreting QUAL2K/WASP results involves a multi-step process focusing on model calibration and validation, followed by a thorough analysis of the output data. It’s not just about numbers; it’s about understanding what those numbers mean in the context of the water body.

First, we examine the model’s calibration statistics. Good calibration implies the model accurately represents the observed data. Metrics like the Nash-Sutcliffe efficiency (NSE) and the root mean square error (RMSE) are crucial here. An NSE close to 1 indicates excellent agreement, while a low RMSE suggests minimal discrepancies between modeled and observed values. Visual inspection of time series plots comparing modeled and observed concentrations is also essential.

Next, we analyze the model’s predictions. We’ll focus on key water quality parameters like dissolved oxygen (DO), biochemical oxygen demand (BOD), and nutrient levels (nitrogen and phosphorus). We look for trends, identifying areas of concern, such as low DO zones indicating potential hypoxia. We also analyze the spatial distribution of pollutants, pinpointing potential pollution sources or areas of high vulnerability.

Finally, we consider the uncertainties associated with the model. No model is perfect, and acknowledging inherent uncertainties is crucial. This involves sensitivity analyses to determine the parameters most influencing model outputs and error propagation analysis to assess the uncertainties associated with our predictions. For instance, a high sensitivity to a parameter with large uncertainty will lead to higher uncertainty in our predictions. We communicate this uncertainty transparently to stakeholders.

Q 16. Describe your experience with using GIS software in conjunction with QUAL2K/WASP.

GIS software is an indispensable tool for water quality modeling. I extensively use ArcGIS and QGIS to prepare input data for QUAL2K/WASP and visualize the results. My workflow typically begins with importing and pre-processing spatial data, such as river networks, land use, and point source locations. This often involves geoprocessing tasks such as creating stream networks, defining reach lengths, and calculating watershed areas.

For QUAL2K/WASP, this processed data provides the spatial framework for the model. I use GIS to delineate the model reach, assign appropriate parameters (e.g., width, depth, slope) to each reach, and define locations of point and non-point sources. After running the model, GIS is crucial for visualizing results. I often create maps showing the spatial distribution of water quality parameters along the river network, allowing for a clear visual representation of pollution hotspots and areas that meet water quality standards. This is critical for communication with stakeholders and decision-makers.

For example, in a recent project, we used GIS to overlay predicted DO concentrations with sensitive aquatic habitats, highlighting areas where low DO levels posed a significant threat to fish populations. This spatial analysis provided a powerful visual argument for implementing remediation strategies.

Q 17. How do you present your water quality modeling results to stakeholders?

Presenting water quality modeling results to stakeholders requires clear, concise communication tailored to their level of technical understanding. I utilize a combination of methods to ensure effective knowledge transfer.

For technical audiences (e.g., engineers, scientists), I use detailed reports, including tables, graphs, and maps showing model calibration, validation, and predictions. I also discuss model limitations and uncertainties. For non-technical stakeholders (e.g., the general public, policy-makers), I employ simpler visuals like maps with clear color-coded indicators to highlight areas of concern. Infographics and short presentations with minimal technical jargon are particularly effective.

I always start by explaining the purpose of the model and its limitations in a non-technical way, before presenting the key findings. I encourage questions and discussions, ensuring everyone understands the implications of the results and the potential management options. Interactive presentations, online dashboards, and easily digestible summaries are also part of my approach. For example, in one project, a simple color-coded map showing areas exceeding nutrient standards proved more effective in securing funding for a remediation project than a lengthy technical report.

Q 18. What are the strengths and weaknesses of using steady-state versus dynamic modeling approaches?

Steady-state and dynamic modeling approaches offer different advantages and disadvantages depending on the specific application and the nature of the water body being studied.

Steady-state models assume that conditions in the water body remain constant over time. They are simpler to build and run, requiring less computational power and data. This makes them suitable for preliminary assessments or screening-level analyses, offering a quick overview of water quality under average conditions. However, they fail to capture the temporal variability in water quality, making them inadequate for systems experiencing significant fluctuations, such as those influenced by storm events or seasonal changes in temperature and flow.

Dynamic models account for the temporal variations in water quality parameters and flow conditions. They provide a more realistic representation of water body dynamics, offering insight into the short-term and long-term impacts of pollution events or management interventions. However, they require more computational resources, more comprehensive data sets (including time-series data), and are more complex to calibrate and validate.

Choosing between these approaches depends on the project’s goals. A steady-state model might suffice for evaluating long-term average conditions, while a dynamic model is necessary for assessing the impact of short-term events or understanding seasonal variations in water quality.

Q 19. Describe your experience with different types of water quality models (e.g., 1D, 2D).

My experience encompasses various water quality models, ranging from 1D to 2D and even some 3D applications. 1D models, such as QUAL2K or WASP set up as a single chain of segments, are commonly used for river systems. They simplify the water body as a series of interconnected reaches, considering only longitudinal variations in water quality. They’re computationally efficient but lack the detail to capture lateral variations in water quality within a reach.

2D models offer more spatial resolution, considering both longitudinal and lateral variations. They are particularly useful for lakes, reservoirs, and estuaries where spatial heterogeneity in water quality is significant. However, they require more computational resources and input data, including bathymetry (depth) information. I have experience using EFDC, a three-dimensional code that can also be run in two dimensions, to evaluate water quality in lakes and coastal areas.

The choice of model dimension depends on the specific application and the level of detail required. For well-mixed rivers, 1D models are often sufficient, while water bodies with strong lateral or vertical gradients may call for 2D or even 3D models. Each model type has its own strengths and weaknesses regarding computational demands, data requirements, and the level of spatial resolution.

Q 20. How do you incorporate climate change impacts into your water quality modeling?

Incorporating climate change impacts into water quality modeling requires considering several factors. Changes in precipitation patterns, temperature, and streamflow significantly influence water quality. For example, increased rainfall intensity can lead to more frequent and intense storm events, resulting in increased pollutant loading to water bodies. Higher temperatures can accelerate biological processes, affecting DO levels and nutrient cycling.

I typically incorporate climate change projections into my models by using climate change scenarios from General Circulation Models (GCMs) or regional climate models. These scenarios provide predictions for future precipitation, temperature, and streamflow. I then use this information to modify model inputs, such as rainfall, temperature, and inflow data, reflecting projected changes. This allows us to assess the potential future impacts of climate change on water quality.

For example, I might use projected changes in temperature to modify the model’s temperature-dependent reaction rates for BOD degradation or algal growth. Projected changes in rainfall and streamflow are used to adjust inflow rates and pollutant loading to the model. This allows us to simulate the potential impacts of climate change on water quality parameters over a range of future scenarios.
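The temperature adjustment of a reaction rate is commonly written as k(T) = k(20) × θ^(T − 20). The sketch below applies it to a BOD decay rate under a hypothetical warming scenario. The value θ = 1.047 is a commonly cited literature value for BOD decay and is treated here as an assumption, as are the baseline rate and the temperatures.

```python
def rate_at_temperature(k20, temp_c, theta):
    """Adjust a rate coefficient from 20 degrees C to temp_c using k20 * theta ** (T - 20)."""
    return k20 * theta ** (temp_c - 20.0)


# Hypothetical inputs
K20_BOD = 0.3         # BOD decay rate at 20 degrees C, 1/day
THETA_BOD = 1.047     # temperature correction factor (assumed literature value)
BASELINE_TEMP = 18.0  # present-day water temperature, degrees C
PROJECTED_TEMP = 21.0 # scenario with 3 degrees C of warming

baseline_rate = rate_at_temperature(K20_BOD, BASELINE_TEMP, THETA_BOD)
projected_rate = rate_at_temperature(K20_BOD, PROJECTED_TEMP, THETA_BOD)
percent_change = 100.0 * (projected_rate / baseline_rate - 1.0)

print(f"baseline {baseline_rate:.3f} 1/day, projected {projected_rate:.3f} 1/day "
      f"({percent_change:+.1f}%)")
```

Faster decay under warming is only part of the picture, because oxygen saturation also falls as temperature rises. A full scenario run would change both effects together inside the model rather than in isolation.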

Q 21. Describe your experience with model coupling (e.g., coupling a hydrodynamic model with QUAL2K/WASP).

Model coupling involves linking different models to simulate the interactions between different environmental processes. Coupling a hydrodynamic model with a water quality model, like QUAL2K/WASP, is essential for accurately simulating water quality in dynamic systems. Hydrodynamic models simulate water flow and transport, while water quality models simulate the fate and transport of pollutants.

By coupling these models, we obtain a more comprehensive understanding of water quality dynamics. For example, the hydrodynamic model provides the flow velocity and water depth, which are crucial inputs for the water quality model’s advection and dispersion calculations. This ensures accurate simulation of pollutant transport. Coupling also allows for simulating complex interactions between flow and water quality, such as the impact of stratification on DO levels or the influence of flow patterns on nutrient distribution.

In my experience, I’ve used various approaches to couple hydrodynamic and water quality models. This often involves using specialized software or developing custom interfaces to exchange data between the models. For example, I have used MIKE 11 (a one-dimensional hydrodynamic model) coupled with QUAL2K to simulate water quality in a river system. The output of MIKE 11 (flow and water levels) is used as input to QUAL2K; because QUAL2K assumes steady flow, the hydrodynamic results are reduced to representative steady-state conditions for each reach before the transport of pollutants is simulated.
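To make the data exchange concrete, here is a minimal sketch of how per-reach hydrodynamic output could be turned into quantities a water quality model needs. The `ReachHydraulics` record and its field names are hypothetical and do not correspond to any MIKE 11, QUAL2K, or WASP file format. The reaeration estimate uses the O'Connor-Dobbins formula (ka = 3.93 U^0.5 / H^1.5, with U in m/s, H in m, ka in 1/day), which is one of several published options.

```python
from dataclasses import dataclass


@dataclass
class ReachHydraulics:
    """One reach of hydrodynamic output (hypothetical exchange record)."""
    name: str
    flow_m3s: float   # discharge
    area_m2: float    # cross-sectional flow area
    depth_m: float    # mean depth
    length_m: float   # reach length


def velocity(reach: ReachHydraulics) -> float:
    """Mean velocity in m/s from continuity (Q = U * A)."""
    return reach.flow_m3s / reach.area_m2


def travel_time_days(reach: ReachHydraulics) -> float:
    """Advective travel time through the reach in days."""
    return reach.length_m / velocity(reach) / 86400.0


def reaeration_oconnor_dobbins(reach: ReachHydraulics) -> float:
    """Reaeration rate at 20 degC in 1/day (O'Connor-Dobbins)."""
    return 3.93 * velocity(reach) ** 0.5 / reach.depth_m ** 1.5


# Illustrative values only.
reach = ReachHydraulics("R1", flow_m3s=10.0, area_m2=20.0, depth_m=1.0, length_m=4320.0)
print(velocity(reach), travel_time_days(reach), reaeration_oconnor_dobbins(reach))
```

Keeping the exchange in a small typed record like this makes unit mismatches between the two models easier to spot than passing raw arrays.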

Q 22. What is your experience with different calibration techniques in QUAL2K/WASP?

Calibration in QUAL2K and WASP involves adjusting model parameters to best match observed water quality data. It’s like fine-tuning a recipe – you adjust the ingredients (model parameters) until the final dish (model output) tastes just right (matches the observed data). There are several techniques:

- Manual Calibration: This is an iterative process where you manually adjust parameters based on your understanding of the system and the model’s sensitivity analysis. It’s like experimenting in the kitchen – trying different amounts of ingredients until you achieve the desired outcome. This approach is often used for initial calibration but can be time-consuming.

- Automatic Calibration: This involves using optimization algorithms (e.g., Nelder-Mead, Levenberg-Marquardt) to automatically find the best parameter set that minimizes the difference between observed and simulated data. It’s like using a sophisticated kitchen appliance to automatically adjust the ingredients for the perfect result. This is more efficient for complex systems with many parameters.

- Parameter Estimation Techniques: These statistical methods, like Bayesian approaches, incorporate uncertainty in both the model and the data to provide a more robust calibration. This is like using advanced techniques to understand the range of acceptable ingredients while still creating the perfect recipe.

The choice of technique depends on the complexity of the model, the availability of data, and the desired level of accuracy. Often a combination of techniques is employed. For instance, I might use manual calibration initially to gain an understanding of the system and then use automatic calibration to fine-tune the parameters, followed by a Bayesian approach to account for uncertainty.
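The idea behind automatic calibration can be sketched with a deliberately small example: fitting a single first-order BOD decay rate so that simulated concentrations match observations. This is not how QUAL2K or WASP calibrate internally. The model, the function names, and the data are assumptions, and a one-parameter golden-section search stands in for the multi-parameter optimizers (Nelder-Mead, Levenberg-Marquardt) named above.

```python
import math


def simulate_bod(l0: float, k: float, times: list) -> list:
    """First-order BOD decay: L(t) = L0 * exp(-k * t)."""
    return [l0 * math.exp(-k * t) for t in times]


def rmse(observed: list, simulated: list) -> float:
    """Root-mean-square error between two equal-length series."""
    n = len(observed)
    return math.sqrt(sum((o - s) ** 2 for o, s in zip(observed, simulated)) / n)


def calibrate_k(times, observed, l0, lo=0.01, hi=2.0, tol=1e-6) -> float:
    """Golden-section search for the decay rate that minimises RMSE."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0

    def objective(k: float) -> float:
        return rmse(observed, simulate_bod(l0, k, times))

    a, b = lo, hi
    while abs(b - a) > tol:
        c = b - inv_phi * (b - a)
        d = a + inv_phi * (b - a)
        if objective(c) < objective(d):
            b = d  # minimum lies in [a, d]
        else:
            a = c  # minimum lies in [c, b]
    return (a + b) / 2.0


# Synthetic "observations" generated with a known rate, for illustration only.
times_days = [0.0, 1.0, 2.0, 3.0, 4.0]
observed_bod = simulate_bod(10.0, 0.30, times_days)
print("calibrated k:", round(calibrate_k(times_days, observed_bod, l0=10.0), 4))
```

With real data the objective is rarely this well behaved, which is one reason a manual first pass to set sensible parameter bounds is worth the time before handing over to an optimizer.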

Q 23. How do you manage large datasets for water quality modeling?

Managing large datasets in water quality modeling requires a structured approach. Think of it like organizing a vast library – you need a system to efficiently find and use the information you need. Here’s my strategy:

- Database Management Systems (DBMS): I utilize relational databases (e.g., PostgreSQL, MySQL) or specialized water quality databases to store and manage the data. This provides a structured way to organize the vast amount of information efficiently.

- Data Preprocessing and Cleaning: This is a crucial step. I use scripting languages like Python with libraries such as Pandas and NumPy to clean, transform, and validate the data before using it in the model. This ensures data quality and consistency, essential for accurate modeling results.

- Data Visualization Tools: R (e.g., ggplot2) or Python with libraries like Matplotlib and Seaborn allow me to visually inspect the data, identify outliers, and check for patterns. This helps in understanding the data’s characteristics before using it in any analysis.

- Cloud Computing: For extremely large datasets, cloud platforms like AWS or Google Cloud provide scalable storage and computing resources. This is critical for handling computationally intensive tasks involved in water quality modeling of large river systems or watersheds.

By employing these methods, I ensure data integrity, efficient retrieval, and the capacity to handle even the most extensive datasets.
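The cleaning and validation step can be sketched as follows. To stay dependency-free this example uses only the Python standard library rather than Pandas, though the same checks map directly onto a DataFrame workflow. The column names, the sample records, and the 0 to 20 mg/L plausibility range are assumptions made for illustration.

```python
import csv
import io
import statistics

# Hypothetical monitoring export with a blank, a sentinel value, and an outlier.
RAW = """station,date,do_mg_l
S1,2023-06-01,7.9
S1,2023-06-02,
S1,2023-06-03,8.1
S1,2023-06-04,-999
S1,2023-06-05,7.6
S1,2023-06-06,45.0
"""


def clean_do(rows, low=0.0, high=20.0):
    """Split rows into valid and rejected records using a range check."""
    valid, rejected = [], []
    for row in rows:
        try:
            value = float(row["do_mg_l"])
        except ValueError:
            rejected.append((row, "missing or non-numeric"))
            continue
        if not low <= value <= high:
            rejected.append((row, "outside plausible range"))
            continue
        valid.append({**row, "do_mg_l": value})
    return valid, rejected


rows = list(csv.DictReader(io.StringIO(RAW)))
valid, rejected = clean_do(rows)
print(len(valid), "valid;", len(rejected), "rejected")
print("median DO (mg/L):", statistics.median(r["do_mg_l"] for r in valid))
```

Keeping the rejected rows together with the reason for rejection, instead of silently dropping them, leaves an audit trail that is useful when data quality is questioned later.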

Q 24. Explain your understanding of water quality criteria and standards.

Water quality criteria and standards define acceptable levels of pollutants in water bodies, safeguarding human health and the environment. They’re like traffic laws for water quality – setting limits to ensure safe and sustainable use.

Criteria are typically based on scientific evidence and specify the acceptable concentration of various pollutants (e.g., dissolved oxygen, nutrients, heavy metals) for different designated uses (e.g., drinking water, aquatic life, recreation). Standards are legally enforceable limits built on those criteria. In the US, states and authorized tribes adopt them under the Clean Water Act, subject to EPA approval; other countries have equivalent regulatory bodies. These standards often incorporate safety factors and account for uncertainties in the scientific data.

Understanding these criteria and standards is crucial for water quality modeling. The model’s results must be compared against these limits to assess whether the water body meets the required standards and to evaluate the effectiveness of water quality management strategies.
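Comparing model output against a limit can be as simple as the sketch below, which summarises how often simulated dissolved oxygen falls below a minimum. The 5.0 mg/L threshold and the simulated values are placeholders; the applicable limit depends on the jurisdiction and the designated use.

```python
def exceedance_summary(simulated_do: list, minimum_do: float) -> dict:
    """Summarise how often simulated DO falls below a minimum limit."""
    below = [value for value in simulated_do if value < minimum_do]
    return {
        "n": len(simulated_do),
        "n_below": len(below),
        "fraction_below": len(below) / len(simulated_do),
        "worst": min(simulated_do),
    }


# Hypothetical model output along a river profile (mg/L) and an assumed limit.
simulated = [6.2, 5.4, 4.8, 4.5, 5.1, 6.0]
summary = exceedance_summary(simulated, minimum_do=5.0)
print(summary)
```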

Q 25. What is your experience with regulatory requirements for water quality modeling?

Regulatory requirements for water quality modeling vary depending on the location and the specific application, but generally involve demonstrating the model’s accuracy, reliability, and applicability to the specific problem. This is like a building inspection – the model needs to meet certain standards before it is used for decision making.

My experience includes working with EPA guidelines for TMDL (Total Maximum Daily Load) calculations, which often require a detailed description of the model, its calibration and validation, and a thorough uncertainty analysis. This also includes adherence to data quality objectives, model documentation, and peer review processes. I’m familiar with reporting requirements that necessitate clear communication of the results and their implications for decision-makers.

Q 26. How do you evaluate the cost-effectiveness of different water quality management strategies?

Evaluating the cost-effectiveness of water quality management strategies requires a multi-faceted approach that combines technical modeling with economic analysis. It’s like comparing different investment options – you need to weigh the costs against the benefits.

I typically use cost-benefit analysis, which involves quantifying the costs of implementing different management strategies (e.g., wastewater treatment upgrades, land-use changes) and the benefits (e.g., improved water quality, recreational opportunities, avoided health costs). The net present value (NPV) is a key metric, considering the time value of money. I often incorporate sensitivity analysis to assess the uncertainty associated with cost and benefit estimations. Optimization techniques can be used to find the most cost-effective strategy that achieves desired water quality targets. The entire process is documented rigorously, justifying the chosen strategy based on sound scientific and economic principles. In practice this involves collaborating with economists and stakeholders to collect the necessary cost data and to understand the societal values associated with improved water quality.
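The NPV comparison can be illustrated with a small sketch. The strategy names, yearly cost and benefit streams, and the 5% discount rate are all invented for the example; in a real study they would come from the economists and stakeholders mentioned above.

```python
def npv(cash_flows: list, rate: float) -> float:
    """Net present value of yearly cash flows; index 0 is the present year."""
    return sum(cf / (1.0 + rate) ** year for year, cf in enumerate(cash_flows))


# Hypothetical strategies: yearly costs and monetised benefits, same currency units.
STRATEGIES = {
    "treatment_upgrade": {
        "costs": [500.0, 40.0, 40.0, 40.0],
        "benefits": [0.0, 250.0, 250.0, 250.0],
    },
    "riparian_buffers": {
        "costs": [150.0, 10.0, 10.0, 10.0],
        "benefits": [0.0, 60.0, 90.0, 120.0],
    },
}
DISCOUNT_RATE = 0.05  # assumed


def net_benefit(strategy: dict, rate: float = DISCOUNT_RATE) -> float:
    """Discounted benefits minus discounted costs."""
    return npv(strategy["benefits"], rate) - npv(strategy["costs"], rate)


ranked = sorted(STRATEGIES, key=lambda name: net_benefit(STRATEGIES[name]), reverse=True)
for name in ranked:
    print(f"{name}: net NPV = {net_benefit(STRATEGIES[name]):.1f}")
```

Re-running the ranking over a range of discount rates is a quick form of the sensitivity analysis described above, since the preferred strategy can change when benefits arrive late.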

Q 27. Describe a challenging water quality modeling project you’ve worked on and how you overcame the challenges.

One challenging project involved modeling nutrient pollution in a highly dynamic coastal estuary. The complexity stemmed from the interplay of multiple sources (e.g., river inflow, groundwater, atmospheric deposition), tidal influences, and complex ecological processes. The available data was sparse and of varying quality, further complicating the effort.

To overcome this, I used a multi-pronged approach:

- Data Integration: I combined water quality monitoring data with hydrological and meteorological information, using data assimilation techniques to improve the model’s representation of the estuary’s dynamics.

- Model Sensitivity Analysis: This helped identify the most influential parameters and guide the calibration process, making it more focused and effective.

- Uncertainty Analysis: I employed Monte Carlo simulations to quantify the uncertainty associated with model parameters and predictions, leading to more robust conclusions.

- Stakeholder Collaboration: Regular communication with local agencies and experts provided valuable insights and ensured the model addressed the relevant issues.

The successful completion of this project required a blend of technical expertise, problem-solving skills, and effective communication. The final model provided valuable insights into the estuary’s nutrient dynamics, guiding effective pollution management strategies.
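The Monte Carlo step mentioned in this answer can be sketched with a toy model. Here a first-order decay equation stands in for the full estuary model, and the parameter distributions (uniform decay rate, normally distributed upstream BOD) are assumptions chosen only to show the mechanics of sampling inputs and summarising the spread of outputs.

```python
import math
import random


def downstream_bod(l0: float, k: float, travel_days: float) -> float:
    """Toy stand-in for the water quality model: first-order BOD decay."""
    return l0 * math.exp(-k * travel_days)


def monte_carlo(n: int, seed: int = 42) -> list:
    """Sample uncertain inputs and return sorted model predictions."""
    rng = random.Random(seed)  # fixed seed so runs are reproducible
    results = []
    for _ in range(n):
        k = rng.uniform(0.15, 0.35)          # assumed decay-rate range, 1/day
        l0 = max(rng.gauss(12.0, 1.5), 0.0)  # assumed upstream BOD, mg/L
        results.append(downstream_bod(l0, k, travel_days=2.0))
    return sorted(results)


def percentile(sorted_values: list, p: float) -> float:
    """Nearest-rank style percentile on an already sorted list (0 <= p <= 1)."""
    index = min(len(sorted_values) - 1, int(p * len(sorted_values)))
    return sorted_values[index]


runs = monte_carlo(5000)
print("5th / 50th / 95th percentile BOD (mg/L):",
      round(percentile(runs, 0.05), 2),
      round(percentile(runs, 0.50), 2),
      round(percentile(runs, 0.95), 2))
```

Reporting a percentile band instead of a single predicted value is what lets decision-makers see how much confidence the model results can bear.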

Key Topics to Learn for Water Quality Modeling (QUAL2K, WASP) Interview

- Model Fundamentals: Understanding the underlying principles of QUAL2K and WASP, including their strengths and limitations. This includes grasping the different numerical methods employed and their implications.

- Data Input and Calibration: Mastering the process of preparing and inputting diverse datasets (e.g., water quality parameters, flow data, meteorological data) and effectively calibrating the model to real-world conditions. Consider exploring sensitivity analysis techniques.

- Scenario Development and Analysis: Gaining proficiency in designing and executing various scenarios to evaluate the impacts of different management strategies (e.g., pollution control measures, land use changes) on water quality. Practice interpreting and communicating the results.

- Spatial and Temporal Resolution: Understanding the significance of model resolution (both spatial and temporal) and its impact on the accuracy and reliability of the simulation results. Know how to choose appropriate resolution based on project needs.

- Water Quality Parameters: Developing a strong grasp of key water quality parameters (e.g., dissolved oxygen, nutrients, temperature, pathogens) and their interrelationships within the model. Practice diagnosing model output and identifying potential issues.

- Model Verification and Validation: Learning how to rigorously evaluate the accuracy and reliability of your model results using appropriate statistical methods and comparison with observed data. This is crucial for building trust in your modeling work.

- Practical Applications: Exploring case studies and real-world applications of QUAL2K and WASP in diverse environmental settings (e.g., rivers, lakes, estuaries). Understanding specific applications will enhance your problem-solving skills.

- Troubleshooting and Debugging: Developing the ability to identify and resolve common issues encountered during model setup, calibration, and simulation. This demonstrates practical experience and problem-solving ability.
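For the verification and validation topic in the list above, it helps to be able to compute a few goodness-of-fit statistics by hand. The sketch below implements three commonly reported ones: RMSE, Nash-Sutcliffe efficiency, and percent bias (here with the sign convention that positive values mean the model underestimates). The observed and simulated values are made up for illustration.

```python
import math


def rmse(observed: list, simulated: list) -> float:
    """Root-mean-square error."""
    n = len(observed)
    return math.sqrt(sum((o - s) ** 2 for o, s in zip(observed, simulated)) / n)


def nash_sutcliffe(observed: list, simulated: list) -> float:
    """Nash-Sutcliffe efficiency: 1 is a perfect fit, 0 matches the observed mean."""
    mean_obs = sum(observed) / len(observed)
    residual = sum((o - s) ** 2 for o, s in zip(observed, simulated))
    variance = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - residual / variance


def percent_bias(observed: list, simulated: list) -> float:
    """Percent bias; positive means the model underestimates on average."""
    return 100.0 * sum(o - s for o, s in zip(observed, simulated)) / sum(observed)


# Hypothetical validation data: observed vs simulated DO (mg/L).
obs = [8.0, 7.0, 6.0, 7.0]
sim = [7.8, 7.1, 6.3, 6.9]
print(round(rmse(obs, sim), 3), round(nash_sutcliffe(obs, sim), 3), round(percent_bias(obs, sim), 2))
```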

Next Steps

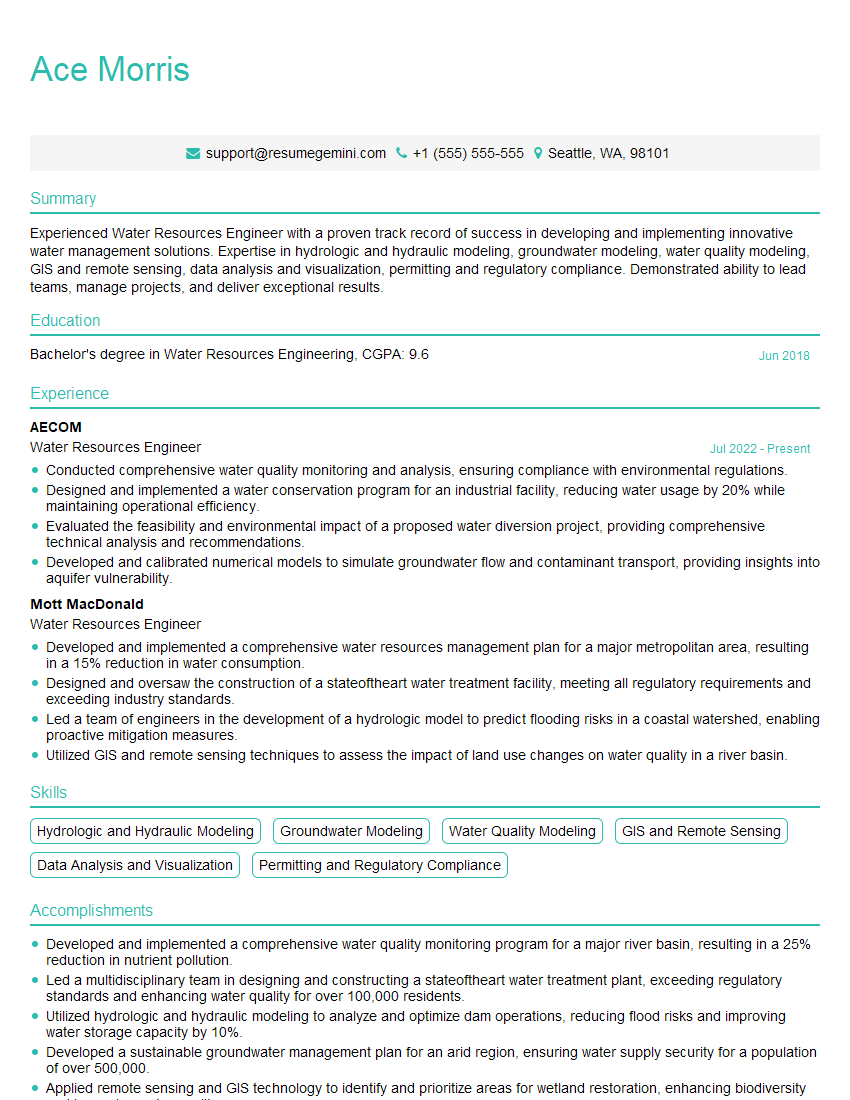

Mastering water quality modeling with QUAL2K and WASP is crucial for career advancement in environmental engineering and related fields. It opens doors to challenging and rewarding opportunities involving impactful environmental projects. To maximize your job prospects, create a compelling and ATS-friendly resume that effectively highlights your skills and experience. ResumeGemini is a trusted resource to help you build a professional resume that stands out. Examples of resumes tailored to Water Quality Modeling (QUAL2K, WASP) roles are available to guide you.
