Unlock your full potential by mastering the most common Uncertainty and Sensitivity Analysis interview questions. This blog offers a deep dive into the critical topics, ensuring you’re not only prepared to answer but to excel. With these insights, you’ll approach your interview with clarity and confidence.

Questions Asked in Uncertainty and Sensitivity Analysis Interviews

Q 1. Explain the difference between aleatory and epistemic uncertainty.

Aleatory and epistemic uncertainties represent two fundamentally different types of uncertainty. Think of it like this: aleatory uncertainty is inherent randomness, like the flip of a coin – you can’t predict the outcome with certainty, even with perfect knowledge. Epistemic uncertainty, on the other hand, is uncertainty due to a lack of knowledge. It’s like not knowing the exact probability of heads because the coin might be biased, but we could potentially reduce this uncertainty with more information.

Aleatory uncertainty, also known as inherent or irreducible uncertainty, is a reflection of the natural variability in a system. This type of uncertainty is often associated with random processes or events that are inherently unpredictable, regardless of how much information we have. Examples include the randomness in weather patterns, the variation in the yield of a crop, or the inherent variability in the strength of a material.

Epistemic uncertainty, also known as reducible or subjective uncertainty, is due to incomplete knowledge or imperfect models. This uncertainty arises from our lack of understanding or inability to precisely measure a variable. It can often be reduced by gathering more data, refining our models, or improving our measurement techniques. Examples include uncertainty in the parameters of a complex model or uncertainty in the design of a structure due to lack of site investigation.

Q 2. Describe various methods for quantifying uncertainty.

Quantifying uncertainty involves assigning numerical values to the potential range of outcomes. Several methods exist, each with its own strengths and weaknesses:

- Probability distributions: These assign probabilities to different outcomes. For example, a normal distribution can model the uncertainty in a measurement, while a uniform distribution can represent equally likely outcomes within a given range. We choose the distribution based on available knowledge and the nature of the uncertainty.

- Fuzzy sets: Useful when probabilities are hard to define, fuzzy sets represent uncertainty through membership functions, which describe the degree to which an element belongs to a set. This is particularly useful for linguistic variables like ‘high’ or ‘low’.

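- Interval analysis: This method defines uncertainty using intervals instead of probability distributions. It considers only the upper and lower bounds of possible values and propagates them through the model to obtain guaranteed bounds on the output. These bounds can be overly wide when the same quantity appears more than once in a calculation.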

- Bayesian methods: These use prior knowledge and observed data to update the probability distributions representing uncertain parameters. This approach is particularly powerful in situations where prior information is available.

The choice of method depends heavily on the nature of the uncertainty and the available data. For example, if we have historical data on a variable, we can use a probability distribution fit to the data. If we have little information, interval analysis might be more appropriate.
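To show what interval analysis does in practice, here is a minimal sketch of interval arithmetic in Python. The `Interval` class is a hypothetical illustration, not a library API, and it only covers addition, subtraction and multiplication. The last line shows the classic "dependency problem": the method treats the two copies of `x` as independent, so `x - x` comes out as [-1, 1] rather than 0.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Interval:
    """Hypothetical closed interval [lo, hi] used to propagate bounds."""
    lo: float
    hi: float

    def __post_init__(self):
        if self.lo > self.hi:
            raise ValueError("lower bound exceeds upper bound")

    def __add__(self, other):
        return Interval(self.lo + other.lo, self.hi + other.hi)

    def __sub__(self, other):
        # Smallest result: our minimum minus their maximum, and vice versa
        return Interval(self.lo - other.hi, self.hi - other.lo)

    def __mul__(self, other):
        # The extremes of a product lie at one of the four corner combinations
        products = (self.lo * other.lo, self.lo * other.hi,
                    self.hi * other.lo, self.hi * other.hi)
        return Interval(min(products), max(products))

# Rectangle area with length known to lie in [9.8, 10.2] and width in [4.9, 5.1]
area = Interval(9.8, 10.2) * Interval(4.9, 5.1)   # bounds of roughly [48.02, 52.02]

# Dependency problem: both operands are the same quantity, but the bounds ignore that
x = Interval(1.0, 2.0)
difference = x - x
```

The guaranteed bounds are the attraction of the method. The price is that they can be much wider than any probabilistic range, as the `x - x` case shows.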

Q 3. What are the key steps involved in performing a sensitivity analysis?

A sensitivity analysis helps us understand how changes in input variables affect the output of a model. The key steps are:

- Define the model and inputs: Clearly identify the model and the input variables that are subject to uncertainty.

- Choose a method for varying inputs: Decide on a sampling method (e.g., Monte Carlo simulation, Latin Hypercube Sampling) to generate input values across the range of uncertainty.

- Run the model: Run the model for each set of input values generated in the previous step.

- Analyze the results: Analyze the output to identify which input variables have the greatest impact on the model output. This often involves calculating sensitivity indices or visualizing the relationship between inputs and outputs.

- Document and report findings: Document the methodology, results, and conclusions of the sensitivity analysis.

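Consider a simple example: calculating the area of a rectangle, A = L × W, with length L and width W as the inputs. Because ∂A/∂L = W and ∂A/∂W = L, a unit change in either side alters the area by the length of the other side. A sensitivity analysis would therefore show that a small change in the shorter side has a larger effect on the area than an equivalent change in the longer side.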
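The rectangle example can be checked with a short sketch of local, one-at-a-time sensitivity using central finite differences. The helper name `local_sensitivities` and the nominal dimensions (10 by 4) are assumptions made for illustration.

```python
def area(length, width):
    return length * width

def local_sensitivities(model, point, h=1e-6):
    """Central finite-difference partial derivatives of `model` at `point` (a dict of inputs)."""
    grads = {}
    for name, value in point.items():
        up = dict(point, **{name: value + h})      # nudge one input up, others fixed
        down = dict(point, **{name: value - h})    # nudge the same input down
        grads[name] = (model(**up) - model(**down)) / (2 * h)
    return grads

nominal = {"length": 10.0, "width": 4.0}           # hypothetical nominal dimensions
grads = local_sensitivities(area, nominal)
```

The derivative with respect to `width` comes out at about 10 (the length), while the derivative with respect to `length` is about 4 (the width). A unit change in the shorter side therefore moves the area more.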

Q 4. Explain the concept of a sensitivity index (e.g., Sobol index).

A sensitivity index quantifies the influence of an input variable on the model output. Sobol indices are common global, variance-based sensitivity indices. The first-order index measures the share of output variance caused by an input on its own. The total-order index measures its total effect, including both its direct effect and its indirect effects through interactions with other variables. For independent inputs, both range from 0 to 1, where 0 indicates no influence and 1 indicates that the input accounts for all of the output variance.

For instance, imagine a model predicting crop yield based on rainfall and temperature. A high Sobol index for rainfall suggests that variations in rainfall significantly affect the crop yield. A low index for temperature might indicate that temperature is less influential on the yield compared to rainfall.

Other sensitivity indices include:

- Partial Rank Correlation Coefficient (PRCC): Useful for monotonic relationships between inputs and outputs.

- Morris method: An elementary effects method, efficient for screening many input variables.

The choice of index depends on the model’s characteristics and the type of analysis needed. For non-linear, high dimensional models, Sobol indices are popular because of their ability to capture interactions between inputs.

Q 5. How do you choose an appropriate sampling method for uncertainty analysis (e.g., Monte Carlo, Latin Hypercube)?

Selecting a sampling method depends on factors like the model’s complexity, dimensionality (number of input variables), and computational cost. Let’s compare two popular methods:

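- Monte Carlo simulation: This method uses random sampling from the input variable distributions. It's simple to implement, and its convergence rate does not depend on the number of inputs. That rate is slow, however: the estimation error shrinks only in proportion to 1/√N, so many runs may be needed when each model evaluation is expensive. It's suitable for complex models where other methods might be difficult to apply.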

- Latin Hypercube Sampling (LHS): This method is a stratified sampling technique that ensures a more uniform coverage of the input space compared to Monte Carlo. It’s more efficient than Monte Carlo, requiring fewer simulations to achieve the same level of accuracy for many problems.

For low-dimensional models, Monte Carlo can be adequate, but for higher dimensions, LHS or other advanced techniques like optimal Latin Hypercube sampling or Quasi-Monte Carlo methods are recommended to improve efficiency. The choice also depends on the desired level of accuracy and the computational resources available. If computational cost is a significant constraint, LHS is often preferred over basic Monte Carlo.
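The difference between the two samplers is easiest to see in code. Below is a minimal NumPy sketch of Latin Hypercube Sampling on the unit hypercube, compared with plain Monte Carlo. The test model `x1 + x2**2` and the helper names are hypothetical. For production work, SciPy's `scipy.stats.qmc` module provides ready-made samplers.

```python
import numpy as np

def latin_hypercube(n, d, rng):
    """n points in [0, 1)^d with exactly one point in each stratum [k/n, (k+1)/n) per dimension."""
    # One random position inside each of the n equal-width strata, for every dimension
    u = (np.arange(n)[:, None] + rng.random((n, d))) / n
    # Shuffle the strata independently per column so dimensions are paired at random
    for j in range(d):
        u[:, j] = rng.permutation(u[:, j])
    return u

def monte_carlo(n, d, rng):
    """Plain independent uniform sampling, for comparison."""
    return rng.random((n, d))

def estimator_spread(sampler, n, trials, rng):
    """Standard deviation, across repeated trials, of the estimated mean of a test model."""
    estimates = []
    for _ in range(trials):
        x = sampler(n, 2, rng)
        estimates.append(np.mean(x[:, 0] + x[:, 1] ** 2))   # hypothetical test model
    return np.std(estimates)
```

Running `estimator_spread` with both samplers at the same `n` gives a much smaller spread for LHS on this additive model. The gain is usually smaller when inputs interact strongly.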

Q 6. What are the limitations of Monte Carlo simulation?

While Monte Carlo simulation is a powerful tool, it has limitations:

- Computational cost: The primary limitation is the computational cost, especially for complex models or when high accuracy is required. A large number of simulations might be needed to obtain reliable results.

- Convergence issues: The accuracy of Monte Carlo results depends on the number of simulations. More simulations generally lead to more accurate estimates, but convergence can be slow, especially for rare events.

- Difficulty with rare events: Accurately estimating the probabilities of rare events can be challenging as they require a very large number of simulations. Specialized techniques like importance sampling are needed to address this.

- Statistical uncertainties: Even with a large number of simulations, there’s always some level of statistical uncertainty in the results. Proper statistical analysis is essential to quantify this uncertainty.

In practice, these limitations can be mitigated through techniques like variance reduction methods, improved sampling strategies, and careful experimental design. Despite these limitations, Monte Carlo remains a very popular and versatile method, particularly suitable when other methods are difficult to apply.
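To illustrate the rare-event problem and the importance-sampling remedy, here is a small sketch that estimates P(Z > 4) for a standard normal Z (about 3.2 × 10⁻⁵). It draws samples from a normal distribution shifted to the threshold and reweights each hit by the likelihood ratio. The choice of shift is an assumption; it is a common heuristic, not the only option.

```python
import math
import random

def crude_mc(threshold, n, rng):
    """Plain Monte Carlo: fraction of standard-normal draws above the threshold."""
    return sum(1 for _ in range(n) if rng.gauss(0.0, 1.0) > threshold) / n

def importance_sampling(threshold, n, rng):
    """Estimate P(Z > threshold), Z ~ N(0, 1), by sampling from N(threshold, 1)."""
    total = 0.0
    for _ in range(n):
        x = rng.gauss(threshold, 1.0)
        if x > threshold:
            # Likelihood ratio phi(x) / phi(x - threshold) = exp(-threshold * x + threshold**2 / 2)
            total += math.exp(-threshold * x + 0.5 * threshold ** 2)
    return total / n

exact = 0.5 * math.erfc(4.0 / math.sqrt(2.0))   # reference value for P(Z > 4)
```

With 100,000 draws, `crude_mc(4.0, ...)` sees only a handful of exceedances, so its estimate is very noisy. `importance_sampling(4.0, ...)` lands within about a percent of `exact`.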

Q 7. Describe different types of sensitivity analysis techniques (e.g., local, global).

Sensitivity analysis techniques can be broadly categorized as local or global:

- Local sensitivity analysis: This examines the impact of small changes in input variables around a specific point in the input space. Techniques like partial derivatives or finite difference methods are used. Local methods are efficient but only provide information about the sensitivity in the vicinity of the chosen point; they may miss non-linear relationships or interactions between input parameters.

- Global sensitivity analysis: This considers the effect of variations across the entire range of input uncertainties. Methods such as variance-based Sobol indices and the Morris method are employed. Global methods provide a more comprehensive understanding of sensitivity, considering the entire input space and interactions between variables, but are generally more computationally expensive.

The choice between local and global methods depends on the nature of the model and the objectives of the analysis. If the model is highly non-linear or interactions between input variables are expected, global sensitivity analysis is preferred. Local methods can be useful for initial screening or when computational resources are limited.
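The following sketch shows how a local method can miss a non-linear effect. The hypothetical model is `x1**2 + 0.5*x2` with both inputs uniform on [-1, 1] and a nominal point at the origin. The derivative with respect to `x1` is zero there, yet varying `x1` over its range moves the output about as much as varying `x2`. The one-at-a-time variance used here is only a crude global measure, not a full Sobol analysis.

```python
import random
import statistics

def model(x1, x2):
    # Hypothetical test model: quadratic in x1, linear in x2
    return x1 ** 2 + 0.5 * x2

def local_derivative(f, point, name, h=1e-6):
    """Central finite difference with respect to one input at the nominal point."""
    up = dict(point, **{name: point[name] + h})
    down = dict(point, **{name: point[name] - h})
    return (f(**up) - f(**down)) / (2 * h)

def variance_when_varying(f, point, name, n, rng):
    """Output variance when only `name` varies uniformly on [-1, 1]; other inputs stay nominal."""
    outputs = [f(**dict(point, **{name: rng.uniform(-1.0, 1.0)})) for _ in range(n)]
    return statistics.pvariance(outputs)

nominal = {"x1": 0.0, "x2": 0.0}
rng = random.Random(2)
local = {name: local_derivative(model, nominal, name) for name in nominal}
spread = {name: variance_when_varying(model, nominal, name, 100_000, rng) for name in nominal}
```

Locally, `x1` looks irrelevant (derivative 0 versus 0.5 for `x2`). Over the whole range, however, its variance contribution (about 4/45 ≈ 0.089) slightly exceeds that of `x2` (1/12 ≈ 0.083).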

Q 8. How do you handle correlated inputs in uncertainty analysis?

Ignoring correlations in uncertainty analysis can lead to inaccurate estimates of uncertainty. Correlated inputs mean that changes in one input are linked to changes in others. For example, daily high and low temperatures are correlated: a high daily high is often associated with a high daily low. Failing to account for this correlation would misstate the uncertainty in a model that depends on both variables. If the output depends on their sum, for instance, ignoring the positive correlation underestimates the output variance.

We handle correlated inputs using techniques that capture their dependence. Common methods include:

- Copulas: Copulas are mathematical functions that model the dependence structure between random variables, irrespective of their marginal distributions. They allow us to specify the correlation between inputs while still maintaining flexibility in defining their individual distributions.

- Joint probability distributions: If the correlation is straightforward, we can directly define a joint probability distribution (e.g., a bivariate normal distribution) that captures the correlations between inputs. This approach is suitable when you have a good understanding of the relationship between the inputs.

- Simulation techniques using correlated random number generators: Many simulation software packages (such as @RISK or OpenTURNS) can generate correlated random numbers from specified correlation matrices. This allows you to sample the input space in a way that reflects the specified dependence structure.

The choice of method depends on the nature and complexity of the correlations and the availability of data. A thorough understanding of the system is critical in selecting the appropriate method. For instance, if we’re modeling oil prices and gas prices, which are heavily correlated, using a copula or joint distribution is more accurate than assuming independence.
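Here is a minimal sketch of the copula approach using a Gaussian copula and only the Python standard library. Correlated standard normals are mapped to uniforms through the normal CDF, and each uniform is then pushed through the inverse CDF of its own marginal. The oil-price (lognormal, median 80) and gas-price (triangular on [2, 6], mode 3) marginals and the copula parameter are hypothetical.

```python
import math
import random
from statistics import NormalDist

STD_NORMAL = NormalDist()

def triangular_ppf(u, low, mode, high):
    """Inverse CDF of a triangular distribution on [low, high] with the given mode."""
    split = (mode - low) / (high - low)
    if u < split:
        return low + math.sqrt(u * (high - low) * (mode - low))
    return high - math.sqrt((1.0 - u) * (high - low) * (high - mode))

def sample_gaussian_copula(n, rho, rng):
    """Draw (oil, gas) pairs whose dependence comes from a Gaussian copula with parameter rho."""
    pairs = []
    for _ in range(n):
        g1, g2 = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
        z1 = g1
        z2 = rho * g1 + math.sqrt(1.0 - rho ** 2) * g2   # corr(z1, z2) = rho
        u2 = STD_NORMAL.cdf(z2)                           # uniform marginal, dependence kept
        oil = 80.0 * math.exp(0.25 * z1)                  # lognormal marginal: inverse CDF of Phi(z1)
        gas = triangular_ppf(u2, 2.0, 3.0, 6.0)           # triangular marginal via inverse CDF
        pairs.append((oil, gas))
    return pairs

def ranks(values):
    order = sorted(range(len(values)), key=values.__getitem__)
    result = [0] * len(values)
    for rank, idx in enumerate(order):
        result[idx] = rank
    return result

def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def spearman(x, y):
    """Rank correlation, which is unaffected by the choice of marginals."""
    return pearson(ranks(x), ranks(y))
```

Because rank correlation survives the monotone marginal transforms, the Spearman correlation of the samples should be close to (6/π)·asin(ρ/2) for a Gaussian copula. Setting `rho=0` recovers the independence assumption, which makes it easy to compare the two results.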

Q 9. Explain the concept of variance-based sensitivity analysis.

Variance-based sensitivity analysis, often called Sobol’ sensitivity analysis after its best-known method, quantifies the influence of individual input variables and their interactions on the variance of the model output. It provides a measure of how much each input contributes to the total uncertainty in the model’s prediction. Because it makes no assumption of linearity or additivity, it’s particularly useful for complex models with numerous interacting inputs.

The method decomposes the variance of the model output into contributions attributable to each input variable, and also to their interactions. The contribution of an input variable is expressed as a sensitivity index (e.g., first-order index, total-order index).

- First-order index: Measures the main effect of an input variable on the output variance, ignoring interactions.

- Total-order index: Measures the total effect of an input variable, including its main effect and all its interactions with other inputs.
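For a model Y = f(X_1, …, X_k) with independent inputs, the decomposition and the two indices can be written in standard notation as follows. Here X_{∼i} denotes all inputs except X_i.

```latex
\operatorname{Var}(Y) = \sum_{i} V_i + \sum_{i<j} V_{ij} + \cdots + V_{12\ldots k},
\qquad V_i = \operatorname{Var}_{X_i}\!\big(\mathbb{E}[\,Y \mid X_i\,]\big)

S_i = \frac{V_i}{\operatorname{Var}(Y)},
\qquad
S_{T_i} = \frac{\mathbb{E}_{X_{\sim i}}\!\big[\operatorname{Var}_{X_i}(Y \mid X_{\sim i})\big]}{\operatorname{Var}(Y)}
        = 1 - \frac{\operatorname{Var}_{X_{\sim i}}\!\big(\mathbb{E}[\,Y \mid X_{\sim i}\,]\big)}{\operatorname{Var}(Y)}
```

Since S_i ≤ S_{T_i}, the gap S_{T_i} − S_i measures how much of input i's influence comes through interactions.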

Imagine building a house; the total cost is the output. Variance-based SA would tell us how much of the cost variation is due to the price of lumber (one input), how much to the labor costs (another), and how much to interactions (e.g., unexpected delays causing both material and labor costs to rise). A high total-order index for lumber suggests its price significantly impacts the overall cost variability.

The Sobol’ method typically involves Monte Carlo simulations, which can be computationally expensive for complex models with many inputs. However, efficient algorithms and software packages have made variance-based SA more accessible. The results are often presented graphically (e.g., bar charts of sensitivity indices) to facilitate interpretation.
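The Monte Carlo estimation can be sketched in a few lines of NumPy. The sketch below uses the "pick-freeze" scheme with Saltelli's (2010) first-order estimator and Jansen's total-order estimator. It is applied to the Ishigami function, a standard benchmark with inputs uniform on [-π, π] whose analytic indices are known (S₁ ≈ 0.31, S₂ ≈ 0.44, S₃ = 0, S_T1 ≈ 0.56, S_T3 ≈ 0.24). It is a minimal illustration, not a replacement for a library such as SALib or OpenTURNS. Note that it costs n·(k + 2) model runs.

```python
import numpy as np

def ishigami(x, a=7.0, b=0.1):
    """Benchmark model: sin(x1) + a*sin(x2)^2 + b*x3^4*sin(x1), with x_i ~ U(-pi, pi)."""
    return np.sin(x[:, 0]) + a * np.sin(x[:, 1]) ** 2 + b * x[:, 2] ** 4 * np.sin(x[:, 0])

def sobol_indices(model, d, n, rng):
    """First-order (Saltelli 2010) and total-order (Jansen) estimates for U(-pi, pi)^d inputs."""
    A = rng.uniform(-np.pi, np.pi, size=(n, d))
    B = rng.uniform(-np.pi, np.pi, size=(n, d))
    fA, fB = model(A), model(B)
    var = np.var(np.concatenate([fA, fB]))
    first, total = np.empty(d), np.empty(d)
    for i in range(d):
        ABi = A.copy()
        ABi[:, i] = B[:, i]                    # A with column i "picked" from B
        fABi = model(ABi)
        first[i] = np.mean(fB * (fABi - fA)) / var          # estimates Var(E[Y|X_i]) / Var(Y)
        total[i] = 0.5 * np.mean((fA - fABi) ** 2) / var    # estimates E[Var(Y|X_~i)] / Var(Y)
    return first, total
```

A bar chart of `first` next to `total` is the typical way to present the result. For the Ishigami function, x₃ shows a near-zero first-order index but a clearly non-zero total index, entirely due to its interaction with x₁.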

Q 10. What is the difference between screening and quantitative sensitivity analysis?

Screening and quantitative sensitivity analyses are different stages in the sensitivity analysis process, with distinct goals and methodologies.

Screening analysis aims to quickly identify the most important input variables in a model with a large number of parameters. It’s less computationally intensive and focuses on identifying influential variables without precisely quantifying their impact. Common screening methods include:

- Morris method: Uses elementary effects to rank inputs based on their average influence.

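- FAST (Fourier Amplitude Sensitivity Test): Uses Fourier analysis to estimate first-order variance-based sensitivity indices at relatively low computational cost. Although it is a quantitative method, its results are often used to rank inputs.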

Think of it like a preliminary scan to prioritize further investigation. We use screening to narrow down many candidates before doing the detailed, computationally expensive quantitative analysis.

Quantitative sensitivity analysis, on the other hand, provides precise estimates of the sensitivity indices for the variables identified as important during screening. This step gives a detailed understanding of how much each input variable contributes to the output uncertainty. Methods include:

- Variance-based methods (Sobol’): As explained previously.

- Regression-based methods: Employ regression models to estimate the sensitivity of the output to changes in the inputs.

Quantitative analysis is like detailed analysis of the short-listed candidates from screening, providing a clearer picture of their relative impacts. It typically follows screening, providing more precise results, albeit at higher computational cost.

Q 11. How do you present the results of an uncertainty and sensitivity analysis?

Presenting the results of an uncertainty and sensitivity analysis requires a clear, concise, and visually appealing approach. The goal is to communicate the key findings effectively to both technical and non-technical audiences.

Common methods include:

- Summary tables: Present key statistics (e.g., mean, standard deviation, percentiles) of model outputs, along with sensitivity indices for each input variable. A table summarizing the mean, 5th, 50th (median), and 95th percentiles of a predicted quantity offers a concise overview of the uncertainty.

- Histograms and probability density functions: Show the distribution of model outputs, visually representing the uncertainty. This demonstrates the range of possible outcomes.

- Bar charts of sensitivity indices: Clearly display the relative importance of each input variable. The length of each bar directly represents its influence on the output variance.

- Tornado diagrams: A visual way to compare the sensitivity of the model outputs to various inputs, usually ranking the inputs by their impact on the output, offering an easy-to-interpret overview.

- Scatter plots: Show the relationship between input variables and model outputs.

- Cumulative distribution function (CDF) plots: Illustrate the probability of the model output falling below a certain threshold.

The choice of visualization method depends on the specific analysis and the audience. Always include a clear explanation of the results in plain language, avoiding technical jargon wherever possible. A well-written report with supporting visuals is key to successful communication of insights.
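The numbers behind a tornado diagram are straightforward to compute. Each input is swung from a low to a high value while the others stay at their base values, and the inputs are then sorted by the width of the resulting output swing. The house-cost model, base values and ranges below are hypothetical.

```python
def tornado_ranking(model, base, ranges):
    """One-at-a-time swings: each input goes from low to high while the others stay at base."""
    rows = []
    for name, (low, high) in ranges.items():
        y_low = model(**{**base, name: low})
        y_high = model(**{**base, name: high})
        rows.append((name, y_low, y_high, abs(y_high - y_low)))
    # Widest swing first: this becomes the top bar of the tornado diagram
    return sorted(rows, key=lambda row: row[3], reverse=True)

def house_cost(lumber_price, labor_rate, permit_fees):
    # Hypothetical model: 50 units of lumber, 200 labor hours, plus fixed permit fees
    return 50 * lumber_price + 200 * labor_rate + permit_fees

base = {"lumber_price": 100, "labor_rate": 40, "permit_fees": 5000}
ranges = {"lumber_price": (80, 130), "labor_rate": (30, 60), "permit_fees": (4000, 6000)}
rows = tornado_ranking(house_cost, base, ranges)
```

Each row holds the input name, the outputs at its low and high values, and the swing, which is all a plotting library needs to draw the bars. Because the swings are one-at-a-time, a tornado diagram does not show interactions.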

Q 12. Describe your experience with software for uncertainty and sensitivity analysis (e.g., @RISK, OpenTURNS).

I have extensive experience using several software packages for uncertainty and sensitivity analysis. My proficiency includes:

- @RISK: A widely used add-in for Microsoft Excel, @RISK offers a user-friendly interface for performing Monte Carlo simulations and sensitivity analysis. I have used it extensively in various projects, leveraging its capabilities for defining probability distributions, running simulations, and analyzing results.

- OpenTURNS: A powerful open-source platform offering a wider range of methods for uncertainty quantification and sensitivity analysis. Its flexibility and customization options have proved invaluable for more complex and research-oriented projects requiring advanced techniques. For instance, its capabilities with copulas and advanced variance-based methods surpassed the capabilities of more basic commercial tools.

- R: I am also proficient in using R with packages like ‘sensitivity’ and ‘lhs’ for implementing various sensitivity analysis techniques. This provides flexibility and control, allowing for tailoring to very specific needs.

My experience extends to using these tools in various applications, including risk assessment, environmental modeling, and engineering design. I am familiar with their strengths and weaknesses and can choose the appropriate tool based on the project’s requirements and complexity. The selection often involves a trade-off between ease of use and the availability of specific algorithms and techniques.

Q 13. How do you validate the results of your uncertainty analysis?

Validating the results of an uncertainty analysis is crucial to ensure the reliability of the conclusions. This involves multiple steps:

- Data validation: Ensure the input data used for the analysis is accurate, complete, and representative of the real-world system. Verify data quality through checks, comparisons with existing datasets and expert knowledge.

- Model validation: Check the model’s accuracy and predictive power. This could include comparing model predictions with historical data or experimental results where possible. Consider techniques like comparing model results with physical observations, if applicable.

- Sensitivity analysis validation: Validate the sensitivity analysis results by comparing them with the results of alternative methods, or by using different software packages. Assess the robustness of findings across differing approaches.

- Expert judgment: Incorporate expert knowledge and opinions to assess the reasonableness of the uncertainty estimates. Experts can identify potential biases, flaws or limitations in the analysis process.

- Scenario analysis: Exploring different scenarios and comparing the results to assess the robustness of conclusions. This involves testing various combinations of input values and examining if conclusions remain valid.

Validation is an iterative process. Discrepancies between the model and reality could indicate errors in the data, model, or analysis methodology. Thorough validation builds confidence in the results and strengthens the reliability of conclusions drawn from the uncertainty analysis.

Q 14. Explain how uncertainty analysis can be used in risk assessment.

Uncertainty analysis plays a vital role in risk assessment by quantifying the uncertainty in the prediction of risk. Risk is typically represented as a combination of the likelihood (probability) of an event occurring and its consequences (impact). Uncertainty analysis helps refine this evaluation.

Here’s how:

- Quantifying uncertainty in risk probabilities: Many risk assessments involve probabilistic models. Uncertainty analysis determines the range of possible probabilities for each risk event, considering the uncertainties in the input parameters. For instance, in assessing the risk of a flood, uncertainty in rainfall predictions would directly affect the uncertainty in the probability of a flood event.

- Quantifying uncertainty in risk consequences: The consequences of a risk event are often uncertain. Uncertainty analysis helps determine the range of possible impacts, considering uncertainties in cost estimations, environmental damage, or human health effects. When assessing the risk of a chemical spill, uncertainty in the environmental impact assessment affects the severity estimate.

- Determining risk tolerance and decision-making: By quantifying the uncertainty in both probability and impact, we get a more comprehensive understanding of the overall risk. This helps in determining acceptable levels of risk and in making informed decisions about risk mitigation strategies. Risk mitigation strategies are evaluated and compared against their cost and uncertainty.

In summary, uncertainty analysis provides a more nuanced and realistic picture of risk, moving beyond simple point estimates to a range of possible outcomes and their associated probabilities. This comprehensive approach leads to better-informed decision-making in risk management.
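The sketch below moves from point estimates to a distribution of risk. It treats risk as probability × consequence and samples both factors from distributions that represent their uncertainty. The flood probability (Beta with mean 0.02) and the loss (lognormal with median 10⁶) are hypothetical choices. The summary dictionary mirrors the kind of table discussed in Q11.

```python
import math
import random
import statistics

def simulate_risk(n, rng):
    """Monte Carlo samples of risk = P(event) * consequence under hypothetical input uncertainty."""
    samples = []
    for _ in range(n):
        p_flood = rng.betavariate(2.0, 98.0)            # hypothetical annual flood probability
        loss = rng.lognormvariate(math.log(1e6), 0.5)   # hypothetical consequence (median 1e6)
        samples.append(p_flood * loss)
    return samples

risk = simulate_risk(100_000, random.Random(7))
cuts = statistics.quantiles(risk, n=20)               # 5%, 10%, ..., 95% cut points
summary = {"mean": statistics.fmean(risk), "p5": cuts[0], "p50": cuts[9], "p95": cuts[18]}
```

Comparing the 5th and 95th percentiles under alternative mitigation options, rather than only the means, is one way to make the trade-offs in risk-based decisions visible.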

Q 15. Discuss the challenges in applying uncertainty analysis to real-world problems.

Applying uncertainty analysis to real-world problems presents numerous challenges. The biggest hurdle is often the inherent complexity of real-world systems. These systems rarely behave according to simplified mathematical models; they are influenced by countless interacting variables, many of which are poorly understood or difficult to measure accurately. This leads to significant uncertainties in model inputs and parameters.

Another major challenge is data scarcity. Many real-world applications lack sufficient high-quality data to properly characterize the uncertainties. Even when data exists, it may be incomplete, inconsistent, or biased, hindering accurate uncertainty quantification. Furthermore, defining the scope of the analysis and selecting appropriate uncertainty models can be subjective and require considerable judgment. Finally, communicating the results of a complex uncertainty analysis to non-technical stakeholders, who may lack a deep understanding of probability and statistics, can be a substantial challenge.

For instance, imagine modeling the spread of an infectious disease. Factors such as population density, contact rates, incubation periods, and the effectiveness of interventions are all highly uncertain. Accurately capturing these uncertainties in a model requires substantial expertise and often involves simplifying assumptions that might affect the results.

Q 16. How do you deal with incomplete or uncertain data in your analysis?

Dealing with incomplete or uncertain data is central to uncertainty analysis. Several strategies can be employed. Firstly, we can use expert elicitation to quantify uncertainty where data is scarce. This involves systematically gathering opinions from subject matter experts and combining them to create probability distributions for uncertain parameters. Secondly, we can employ Bayesian methods, which are particularly well-suited for incorporating prior knowledge or belief about parameters, even when data is limited. Bayesian methods update beliefs in the light of new data, providing a more robust estimate.

Thirdly, we can use imputation techniques to fill in missing data. These might involve simple methods like replacing missing values with the mean or median, which understates the variability of the data. More sophisticated methods, such as multiple imputation, create several plausible datasets to account for the uncertainty due to missing data. Finally, sensitivity analysis can help identify which parameters are most influential, allowing us to focus our data collection and modeling efforts on the parameters with the greatest impact. For example, suppose our data on a specific parameter is limited but sensitivity analysis shows that it is not a critical parameter in our model. The uncertainty associated with this parameter might then be neglected, for instance by fixing it at a nominal value.
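A conjugate Beta-binomial update shows how the Bayesian route works when data are scarce. An expert-elicited prior on a failure probability is combined with a small test campaign, and a 95% credible interval is read off posterior draws. The prior Beta(2, 8) and the data (3 failures in 30 tests) are hypothetical.

```python
import random
import statistics

def beta_binomial_update(prior_a, prior_b, successes, trials):
    """Conjugate update: Beta(a, b) prior with binomial data gives a Beta(a + k, b + n - k) posterior."""
    return prior_a + successes, prior_b + trials - successes

# Hypothetical: expert prior with mean 0.2, then 3 failures observed in 30 tests
a, b = beta_binomial_update(2.0, 8.0, successes=3, trials=30)
posterior_mean = a / (a + b)

# Approximate 95% credible interval from posterior draws
rng = random.Random(1)
draws = [rng.betavariate(a, b) for _ in range(50_000)]
cuts = statistics.quantiles(draws, n=40)      # cut points at 2.5%, 5%, ..., 97.5%
credible_interval = (cuts[0], cuts[-1])
```

The posterior mean (0.125) sits between the prior mean (0.2) and the observed rate (0.1). The weight each receives reflects how much information the prior and the data carry.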

Q 17. Describe your experience with Bayesian methods for uncertainty quantification.

I have extensive experience applying Bayesian methods for uncertainty quantification. Bayesian methods provide a powerful framework for incorporating prior information and updating beliefs in the face of new evidence. In my work, I have used Bayesian networks and Markov Chain Monte Carlo (MCMC) methods extensively. Bayesian networks are particularly useful for modeling complex systems with many interacting variables, allowing us to represent conditional dependencies between parameters visually. MCMC methods, such as Metropolis-Hastings, are computationally intensive but effective for sampling from complex posterior distributions. They are crucial for quantifying the uncertainty around model parameters and predictions.

For example, I used Bayesian methods in a project assessing the risk of coastal erosion. We used prior information on sediment transport rates and sea-level rise from previous studies and combined this with new data from recent coastal surveys. Bayesian analysis allowed us to quantify the uncertainty in the predicted erosion rates, highlighting scenarios with high and low risk for coastal communities.
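A random-walk Metropolis-Hastings sampler, one of the MCMC methods mentioned above, fits in a few lines. The example estimates a mean rate from a handful of measurements, using a normal prior and a normal likelihood with known measurement error. The data and prior are hypothetical and unrelated to the project described. Because this toy problem is conjugate, the sampler can be checked against the exact posterior, which is approximately N(2.120, 0.176²).

```python
import math
import random
import statistics

def log_posterior(theta, data, sigma, prior_mean, prior_sd):
    """Unnormalised log posterior: normal prior on theta, normal likelihood with known sigma."""
    log_prior = -0.5 * ((theta - prior_mean) / prior_sd) ** 2
    log_lik = sum(-0.5 * ((y - theta) / sigma) ** 2 for y in data)
    return log_prior + log_lik

def metropolis_hastings(data, sigma, prior_mean, prior_sd,
                        n_iter=50_000, step=0.3, burn_in=5_000, seed=1):
    rng = random.Random(seed)
    theta = prior_mean
    current = log_posterior(theta, data, sigma, prior_mean, prior_sd)
    samples = []
    for i in range(n_iter):
        proposal = theta + rng.gauss(0.0, step)     # symmetric random-walk proposal
        candidate = log_posterior(proposal, data, sigma, prior_mean, prior_sd)
        # Accept with probability min(1, posterior ratio); 1 - random() is in (0, 1], so log is safe
        if math.log(1.0 - rng.random()) < candidate - current:
            theta, current = proposal, candidate
        if i >= burn_in:
            samples.append(theta)                   # current state is kept even after a rejection
    return samples

# Hypothetical data: rates in m/yr, measurement sd 0.4, prior N(1.5, 1.0^2)
rates = [1.8, 2.3, 2.1, 2.6, 1.9]
samples = metropolis_hastings(rates, sigma=0.4, prior_mean=1.5, prior_sd=1.0)
posterior_mean = statistics.fmean(samples)
posterior_sd = statistics.stdev(samples)
```

In real applications, the step size is tuned for a reasonable acceptance rate, and convergence is checked with multiple chains rather than assumed from a fixed burn-in.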

Q 18. How do you communicate complex uncertainty analysis results to non-technical stakeholders?

Communicating complex uncertainty analysis results to non-technical stakeholders requires clear, concise, and visual communication. Instead of focusing on technical details, the emphasis should be on the key findings and their implications. I typically use visual aids such as histograms, box plots, and probability maps to represent uncertainty distributions. Furthermore, I avoid using technical jargon and instead employ clear language, using analogies and real-world examples to illustrate concepts. For instance, instead of saying “the 95% credible interval for parameter X is [a, b],” I might say “there’s a 95% chance that parameter X lies between a and b.”

I often use a narrative approach, telling a story that conveys the key insights from the analysis. I focus on the potential impacts of uncertainty, highlighting the implications of different scenarios. A summary table of key findings, with clear explanations, can be an incredibly helpful communication tool. Engaging in active listening and addressing stakeholders’ concerns is also vital to ensure effective communication.

Q 19. Explain the concept of a probability distribution and its importance in uncertainty analysis.

A probability distribution is a mathematical function that describes the likelihood of different outcomes for a random variable. In simpler terms, it shows the range of possible values a variable can take and the probability of each value occurring. The importance of probability distributions in uncertainty analysis lies in their ability to represent and quantify uncertainty. Instead of assuming a single, precise value for an uncertain parameter, a probability distribution assigns probabilities to a range of possible values, reflecting our uncertainty about the true value.

For example, instead of assuming a precise value for the height of a building, we could represent this uncertainty with a normal distribution. This distribution would specify the most likely height (the mean) and the spread of plausible heights (the standard deviation). Using probability distributions allows us to propagate uncertainty through a model and quantify the uncertainty in the model’s outputs. This provides a more realistic and comprehensive representation of the system’s behavior under uncertainty.

Q 20. What are the different types of probability distributions commonly used in uncertainty analysis?

Many probability distributions are commonly used in uncertainty analysis, each suited to different situations. Some of the most common include:

- Normal (Gaussian): A symmetrical bell-shaped distribution, often used when uncertainties are roughly symmetrical around a mean value.

- Lognormal: The logarithm of the variable follows a normal distribution; used for strictly positive quantities whose uncertainty is right-skewed (a long tail toward higher values), such as concentrations of pollutants.

- Uniform: All values within a specified range have equal probability; used when we have little information about the distribution but know the bounds.

- Triangular: Defined by a minimum, most likely, and maximum value; used when we have some knowledge about the most likely value but less information about the extremes.

- Beta: Defined on the interval [0,1]; commonly used to represent probabilities or proportions.

- Gamma: Used for positive-valued variables, often modelling waiting times or durations.

The choice of distribution depends heavily on the specific context and available information.
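Python's standard `random` module can sample all six distributions above, which makes it easy to sanity-check parameterizations. The sketch compares sample means with their analytic values. Note the argument orders: `triangular(low, high, mode)` puts the mode last, and `gammavariate(alpha, beta)` takes shape and scale. The parameter values themselves are arbitrary examples.

```python
import math
import random
import statistics

rng = random.Random(3)
N = 100_000

samplers = {
    # name: (zero-argument sampler, analytic mean)
    "normal":     (lambda: rng.normalvariate(10.0, 2.0), 10.0),
    "lognormal":  (lambda: rng.lognormvariate(0.0, 0.5), math.exp(0.125)),   # exp(mu + sigma^2 / 2)
    "uniform":    (lambda: rng.uniform(5.0, 15.0), 10.0),
    "triangular": (lambda: rng.triangular(2.0, 8.0, 3.0), (2.0 + 8.0 + 3.0) / 3),  # (low, high, mode)
    "beta":       (lambda: rng.betavariate(2.0, 5.0), 2.0 / 7.0),             # alpha / (alpha + beta)
    "gamma":      (lambda: rng.gammavariate(3.0, 2.0), 6.0),                  # shape * scale
}

sample_means = {name: statistics.fmean(draw() for _ in range(N))
                for name, (draw, _) in samplers.items()}
```

A mismatch between a sample mean and its analytic value is a quick sign that parameters were passed in the wrong order or in the wrong convention (for example, rate versus scale).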

Q 21. How do you select the appropriate probability distribution for a given parameter?

Selecting the appropriate probability distribution for a given parameter requires a combination of judgment, data analysis, and knowledge of the system. The process often involves these steps:

- Data Analysis: Examine available data to understand the shape, central tendency, and spread of the data. Histograms and other graphical representations are valuable here. Look for skewness and other features that suggest specific distributions.

- Expert Elicitation: If data is scarce, consult with experts to gather information about the range of possible values and their relative likelihood. Structured elicitation techniques are crucial here.

- Prior Knowledge: Consider any prior knowledge or information about the parameter. For instance, if a parameter must be positive, distributions like lognormal or gamma might be appropriate.

- Distribution Fitting: Use statistical methods to fit various distributions to the available data (if data is available). Goodness-of-fit tests can be helpful to compare the quality of the fits.

- Sensitivity Analysis: Sometimes, a certain degree of approximation in the choice of distribution is acceptable if the parameter’s influence on the model output is low. A sensitivity analysis helps in identifying such parameters.

Ultimately, the best distribution is one that reasonably represents the uncertainty and is appropriate for the analysis being conducted. It’s important to document the rationale behind the distribution choice.
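The distribution-fitting step can be sketched with the standard library alone. Normal and lognormal candidates are fitted by matching the mean and standard deviation (of the logs, for the lognormal) and then compared using the Kolmogorov-Smirnov statistic D, the largest gap between the empirical and fitted CDFs. The sample data are synthetic. Treat D as a relative ranking of fits only: standard KS p-values are not valid when the parameters are estimated from the same data.

```python
import math
import random
import statistics

def normal_cdf(x, mu, sd):
    return 0.5 * (1.0 + math.erf((x - mu) / (sd * math.sqrt(2.0))))

def ks_statistic(data, cdf):
    """Largest vertical distance between the empirical CDF and a fitted CDF."""
    xs = sorted(data)
    n = len(xs)
    d = 0.0
    for i, x in enumerate(xs):
        f = cdf(x)
        d = max(d, (i + 1) / n - f, f - i / n)   # check both sides of each ECDF step
    return d

def fit_normal(data):
    mu, sd = statistics.fmean(data), statistics.stdev(data)
    return lambda x: normal_cdf(x, mu, sd)

def fit_lognormal(data):
    logs = [math.log(x) for x in data]           # requires strictly positive data
    mu, sd = statistics.fmean(logs), statistics.stdev(logs)
    return lambda x: normal_cdf(math.log(x), mu, sd)
```

On right-skewed positive data, such as draws from `random.lognormvariate`, the lognormal fit should give a clearly smaller D than the normal fit. That matches the graphical check of skewness described in the first step.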

Q 22. Explain the concept of a confidence interval and its interpretation in uncertainty analysis.

A confidence interval in uncertainty analysis quantifies the uncertainty associated with an estimate. Imagine you’re trying to estimate the average height of trees in a forest. You can’t measure every tree, so you take a sample. The confidence interval provides a range within which the true average height is likely to fall, with a specified level of confidence (e.g., 95%).

For example, a 95% confidence interval of 10 to 12 meters for the average tree height is conventionally described as being "95% confident" that the true average lies between 10 and 12 meters. Strictly, this does not mean there is a 95% probability that the true average lies in this particular interval; the true average is a fixed value that either is or is not inside it. Instead, the 95% describes the procedure: if we repeated the sampling process many times and computed an interval each time, about 95% of those intervals would contain the true average height.

The width of the confidence interval reflects the uncertainty: a narrower interval indicates less uncertainty, while a wider interval reflects greater uncertainty. Factors influencing the width include the sample size (larger samples yield narrower intervals; for a mean, the width shrinks roughly in proportion to 1/√n), the variability in the data (more variable data leads to wider intervals), and the chosen confidence level (a 99% interval is wider than a 95% interval).
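The repeated-sampling interpretation can be checked numerically. The sketch below computes a standard two-sided t-interval for a mean and then draws many samples from a hypothetical "forest" whose true mean is known (11 m, SD 2 m, samples of 30 trees; these numbers are invented for illustration). The fraction of intervals that contain the true mean should come out close to 95%.

```python
import numpy as np
from scipy import stats

def mean_confidence_interval(sample, level=0.95):
    """Two-sided t-interval for the population mean (assumes roughly normal data or a large sample)."""
    sample = np.asarray(sample, dtype=float)
    n = sample.size
    mean = sample.mean()
    sem = sample.std(ddof=1) / np.sqrt(n)          # standard error of the mean
    t_crit = stats.t.ppf(0.5 + level / 2, df=n - 1)
    return mean - t_crit * sem, mean + t_crit * sem

# Coverage check: repeat the sampling many times from a population with a known mean
rng = np.random.default_rng(0)
true_mean, true_sd, n = 11.0, 2.0, 30   # hypothetical tree heights in meters
trials = 10_000
hits = 0
for _ in range(trials):
    lo, hi = mean_confidence_interval(rng.normal(true_mean, true_sd, size=n))
    hits += int(lo <= true_mean <= hi)
coverage = hits / trials
print(f"Fraction of intervals containing the true mean: {coverage:.3f}")
```

Any single interval from this loop either contains 11.0 or it does not; the 95% only emerges across the repetitions.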

Q 23. What is the role of sensitivity analysis in model calibration?

Sensitivity analysis plays a crucial role in model calibration by helping us understand which input parameters have the most significant impact on the model’s output. Model calibration involves adjusting model parameters to best fit observed data. Without sensitivity analysis, we might spend considerable time tweaking parameters that have little effect on the overall fit, while neglecting those with a strong influence.

For instance, imagine calibrating a hydrological model to predict river flow. Sensitivity analysis could reveal that parameters related to rainfall intensity have a much stronger impact on the predicted flow than parameters related to soil texture. This knowledge allows us to focus calibration efforts on the more influential parameters, improving efficiency and accuracy.

In essence, sensitivity analysis guides the calibration process by prioritizing the parameters most critical to the model’s performance. Parameters with little influence can be fixed at reasonable values, which shrinks the calibration problem and reduces the risk of adjusting parameters that the observed data cannot constrain.
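A minimal sketch of screening before calibration, assuming a hypothetical exponential model (`toy_model`) and synthetic observations rather than a real hydrological model. It computes scaled one-at-a-time (local) sensitivities at an initial guess, keeps the parameters above an arbitrary 5% threshold, and calibrates only those with `scipy.optimize.least_squares`, leaving the others at the initial guess. Because local sensitivities depend on the starting point, a global method may be preferable when the initial guess is poor.

```python
import numpy as np
from scipy.optimize import least_squares

def toy_model(theta, x):
    """Hypothetical model: two influential parameters and one that barely matters."""
    a, b, c = theta
    return a * np.exp(-b * x) + 1e-3 * c

rng = np.random.default_rng(1)
x = np.linspace(0, 5, 50)
true_theta = np.array([3.0, 0.7, 2.0])
obs = toy_model(true_theta, x) + rng.normal(0, 0.05, x.size)  # synthetic observations

def local_sensitivities(theta, x, rel_step=1e-2):
    """RMS change in output per relative change in each parameter (one at a time)."""
    base = toy_model(theta, x)
    sens = []
    for i in range(len(theta)):
        perturbed = theta.copy()
        perturbed[i] *= 1 + rel_step
        sens.append(np.sqrt(np.mean((toy_model(perturbed, x) - base) ** 2)) / rel_step)
    return np.array(sens)

prior_guess = np.array([2.0, 1.0, 1.0])
s = local_sensitivities(prior_guess, x)
influential = s > 0.05 * s.max()   # screening threshold is an arbitrary choice here
print("sensitivities:", np.round(s, 4), "-> calibrate indices:", np.flatnonzero(influential))

def residuals(free, fixed_theta, mask):
    """Residuals with only the masked (influential) parameters free to change."""
    theta = fixed_theta.copy()
    theta[mask] = free
    return toy_model(theta, x) - obs

fit = least_squares(residuals, prior_guess[influential], args=(prior_guess, influential))
calibrated = prior_guess.copy()
calibrated[influential] = fit.x
print("calibrated parameters:", np.round(calibrated, 3))
```

The third parameter is left at its initial guess, yet the fit of the first two still lands near their true values because the third has almost no effect on the output.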

Q 24. How can you use sensitivity analysis to improve the efficiency of a simulation model?

Sensitivity analysis can significantly improve the efficiency of simulation models by showing where computational effort actually matters. By pinpointing the most influential parameters, we can focus our computational resources on them.

One strategy is to reduce the dimensionality of the problem. If a sensitivity analysis shows that only a few parameters strongly influence the output, the remaining parameters can be fixed at nominal values (often called factor fixing), so fewer simulations are needed to explore the parameter space. Instead of exploring a vast multi-dimensional space, we can concentrate on the dimensions (parameters) that truly matter.

Another way is to improve model design. Understanding which parameters are most sensitive can highlight areas for model simplification. If a less sensitive parameter is computationally expensive to model, we might consider simplifying its representation or even removing it if the impact on the output is negligible. This can dramatically improve the simulation’s speed and efficiency without significantly compromising accuracy.
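Fixing an uninfluential input can be sanity-checked by comparing output distributions with and without it varying. The sketch below uses a hypothetical three-input model with uniform inputs on [0, 1], in which `x3` contributes very little; the model and its coefficients are invented for illustration. If the summary statistics barely change when `x3` is held at its mid-range value, sampling can be restricted to `x1` and `x2`.

```python
import numpy as np

def model(x1, x2, x3):
    """Hypothetical model in which x3 has only a small effect on the output."""
    return x1 ** 2 + 2.0 * x2 + 0.05 * x3

rng = np.random.default_rng(3)
n = 100_000
x1, x2, x3 = (rng.uniform(0, 1, n) for _ in range(3))
y_full = model(x1, x2, x3)
y_fixed = model(x1, x2, np.full(n, 0.5))   # x3 fixed at its nominal (mid-range) value

for label, y in [("all inputs vary", y_full), ("x3 fixed", y_fixed)]:
    print(f"{label:16s} mean={y.mean():.3f} sd={y.std():.3f} "
          f"p5-p95=({np.percentile(y, 5):.3f}, {np.percentile(y, 95):.3f})")
```

In a real study the check would use the sensitivity indices themselves (for example, a near-zero total-order index) rather than eyeballing two summaries.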

Q 25. Describe a situation where you had to deal with significant uncertainty in a project. How did you address it?

During a project involving the design of a new offshore wind farm, we faced considerable uncertainty regarding future electricity prices and the long-term performance of the turbines. These uncertainties could significantly impact the project’s financial viability.

To address this, we employed a probabilistic approach using Monte Carlo simulation. We defined probability distributions for the uncertain parameters (electricity prices, turbine lifespan, maintenance costs, etc.), based on expert judgment and historical data. The Monte Carlo simulation ran thousands of iterations, sampling from these distributions to generate a range of possible project outcomes (e.g., net present value).

This analysis allowed us to quantify the risk associated with the project. We identified scenarios with high financial risk and explored strategies to mitigate these risks, such as hedging against price volatility or investing in more robust turbine technology. The results provided a sounder basis for decision-making, allowing us to weigh potential profits against potential losses before committing to the investment.
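The general shape of such a Monte Carlo NPV analysis can be sketched in a few lines. Every distribution, parameter value, and unit below (price, annual output, maintenance cost, lifespan, discount rate, capital cost) is hypothetical and chosen only to make the example run; none of it comes from the project described above. Each iteration draws one value per uncertain input and discounts a constant annual cash flow over the sampled lifespan.

```python
import numpy as np

def simulate_npv(n_iter, rng, discount_rate=0.07, capex=1_000.0):
    """Monte Carlo NPV sketch; every distribution and number here is hypothetical."""
    price = rng.lognormal(mean=np.log(60.0), sigma=0.25, size=n_iter)  # electricity price per MWh
    energy = rng.normal(3.0, 0.3, size=n_iter)                          # annual output (thousand MWh)
    om_cost = rng.triangular(40.0, 50.0, 70.0, size=n_iter)             # annual maintenance cost
    lifespan = rng.integers(20, 31, size=n_iter)                        # turbine lifespan, 20-30 years
    annual_cash = price * energy - om_cost
    # Present value of a constant annual cash flow received for `lifespan` years
    annuity = (1 - (1 + discount_rate) ** -lifespan.astype(float)) / discount_rate
    return annual_cash * annuity - capex

rng = np.random.default_rng(7)
npv = simulate_npv(50_000, rng)
print(f"mean NPV: {npv.mean():.0f}")
print(f"5th-95th percentile: {np.percentile(npv, 5):.0f} to {np.percentile(npv, 95):.0f}")
print(f"P(NPV < 0): {np.mean(npv < 0):.2%}")
```

The probability of a negative NPV is the kind of risk measure that supports the mitigation discussion above. The sketch treats the inputs as independent; correlated inputs (such as price and output) would need joint sampling.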

Q 26. Explain the difference between deterministic and stochastic models. When is each more appropriate?

Deterministic models assume that all input parameters are known with certainty, yielding a single, predictable output. Stochastic models, on the other hand, explicitly incorporate uncertainty by treating some inputs or processes as random (for example, representing input parameters as probability distributions), leading to a range of possible outputs.

Deterministic models are appropriate when the inputs are well-defined and the system’s behavior is predictable. For example, calculating the area of a rectangle using a known length and width is a deterministic task. In contrast, stochastic models are better suited for situations where uncertainty is inherent, such as predicting the spread of a disease where infection rates and individual susceptibility vary.
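The disease example can be made concrete with a Reed-Frost chain model, used here as a simple stand-in (the population size, infection probability, and number of steps are hypothetical). The deterministic version tracks expected new infections and always gives the same answer; the stochastic version draws new infections from a binomial distribution, so repeated runs produce a spread of outcomes, including outbreaks that die out early.

```python
import numpy as np

def reed_frost_final_size(s0, i0, p, steps, rng=None):
    """Total infections in a Reed-Frost chain model.

    rng=None gives the deterministic version (expected new infections each step);
    passing a numpy Generator draws new infections from a binomial distribution.
    """
    s, i = float(s0), float(i0)
    total = i
    for _ in range(steps):
        prob = 1.0 - (1.0 - p) ** i   # chance a susceptible is infected this step
        if rng is None:
            new_i = s * prob
        else:
            new_i = float(rng.binomial(int(s), prob))
        s, i = s - new_i, new_i
        total += new_i
    return total

deterministic = reed_frost_final_size(200, 1, 0.01, steps=30)
rng = np.random.default_rng(5)
stochastic = np.array([reed_frost_final_size(200, 1, 0.01, steps=30, rng=rng) for _ in range(2000)])
print(f"deterministic final size: {deterministic:.1f}")
print(f"stochastic final size: mean={stochastic.mean():.1f}, "
      f"5th-95th pct=({np.percentile(stochastic, 5):.0f}, {np.percentile(stochastic, 95):.0f}), "
      f"fizzled early={np.mean(stochastic <= 5):.1%}")
```

The deterministic run yields one number, while the stochastic runs show that early extinction is a real possibility, which the single deterministic value cannot reveal.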

Choosing between deterministic and stochastic modeling depends on the context. If uncertainty is negligible or the focus is on a simplified representation, a deterministic model may suffice. However, when uncertainty is significant and critical to understanding potential outcomes, a stochastic model is necessary to provide a more realistic and nuanced assessment.

Q 27. What are some common pitfalls to avoid when conducting uncertainty and sensitivity analysis?

Several pitfalls can compromise the effectiveness of uncertainty and sensitivity analyses. One common mistake is using inappropriate probability distributions for uncertain parameters. The choice of distribution should be based on a sound understanding of the underlying process and available data, avoiding arbitrary choices.

Another pitfall is neglecting to consider correlations between input parameters. Parameters are often not independent; for instance, rainfall and soil moisture are often correlated. Ignoring these correlations can lead to either underestimation or overestimation of the overall uncertainty, depending on the sign of the correlation and how the parameters enter the model.
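A small numerical example shows the effect. For the hypothetical model y = x1 + x2 with standard-normal inputs, Var(y) = 2 + 2ρ, so a positive correlation ρ = 0.8 (an assumed value) widens the output spread compared with treating the inputs as independent. The sketch samples both cases with `numpy` and prints the theoretical values next to the estimates.

```python
import numpy as np

rng = np.random.default_rng(11)
n = 200_000
rho = 0.8   # hypothetical correlation between the two inputs
cov_indep = np.array([[1.0, 0.0], [0.0, 1.0]])
cov_corr = np.array([[1.0, rho], [rho, 1.0]])

def output_sd(cov):
    """Propagate two standard-normal inputs through y = x1 + x2 and return the output SD."""
    x = rng.multivariate_normal(mean=[0.0, 0.0], cov=cov, size=n)
    y = x[:, 0] + x[:, 1]
    return y.std()

sd_indep, sd_corr = output_sd(cov_indep), output_sd(cov_corr)
print(f"independent inputs: sd(y) = {sd_indep:.3f}  (theory: {np.sqrt(2):.3f})")
print(f"correlated inputs:  sd(y) = {sd_corr:.3f}  (theory: {np.sqrt(2 + 2 * rho):.3f})")
```

With a negative ρ the same calculation gives a smaller spread, which is why ignoring correlation can bias the result in either direction.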

Finally, misinterpreting the results is a frequent problem. Sensitivity indices quantify the relative importance of parameters within the model, but they don’t necessarily imply causality in the real system. A highly sensitive parameter might simply be a proxy for a more complex underlying process. Careful interpretation and validation are crucial to avoid misleading conclusions.

Key Topics to Learn for Uncertainty and Sensitivity Analysis Interview

- Probability Distributions: Understanding and applying various probability distributions (e.g., Normal, Uniform, Lognormal) to model uncertainties in input parameters.

- Monte Carlo Simulation: Mastering the principles and practical application of Monte Carlo simulation for quantifying uncertainty propagation through models.

- Sensitivity Analysis Techniques: Familiarity with different methods, such as local sensitivity analysis (e.g., partial derivatives) and global sensitivity analysis (e.g., variance-based Sobol indices or Morris screening), and their appropriate applications.

- Uncertainty Quantification (UQ): Grasping the fundamental concepts of UQ, including characterizing, propagating, and reducing uncertainties in model predictions.

- Practical Applications: Understanding how Uncertainty and Sensitivity Analysis are applied in various fields, such as risk assessment, engineering design, environmental modeling, and financial forecasting. Consider examples from your own experience.

- Software Proficiency: Demonstrating familiarity with relevant software for performing Uncertainty and Sensitivity Analysis (e.g., R, or Python with libraries such as `numpy`, `scipy`, `pandas`).

- Interpreting Results: The ability to clearly and effectively communicate the results of Uncertainty and Sensitivity Analysis, including identifying key uncertainties and their impact on model outputs.

- Model Calibration and Validation: Understanding how to incorporate uncertainty into model calibration and validation processes.
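As a concrete illustration of the variance-based methods listed above, here is a minimal sketch of Sobol index estimation in plain `numpy`. It uses the first-order estimator from Saltelli et al. (2010) and Jansen's total-order estimator on the Ishigami function, a standard benchmark with inputs uniform on [-π, π] and known analytic indices (first-order about 0.314, 0.442, 0; total-order about 0.558, 0.442, 0.244). The sample size and seed are arbitrary choices; dedicated libraries add confidence intervals and more efficient sampling.

```python
import numpy as np

def ishigami(X, a=7.0, b=0.1):
    """Ishigami test function, a standard benchmark for global sensitivity analysis."""
    x1, x2, x3 = X[:, 0], X[:, 1], X[:, 2]
    return np.sin(x1) + a * np.sin(x2) ** 2 + b * x3 ** 4 * np.sin(x1)

def sobol_indices(model, d, n, rng):
    """First-order (Saltelli 2010) and total-order (Jansen) estimators, inputs ~ U(-pi, pi)."""
    A = rng.uniform(-np.pi, np.pi, size=(n, d))
    B = rng.uniform(-np.pi, np.pi, size=(n, d))
    fA, fB = model(A), model(B)
    var_y = np.var(np.concatenate([fA, fB]))
    first, total = np.empty(d), np.empty(d)
    for i in range(d):
        ABi = A.copy()
        ABi[:, i] = B[:, i]   # matrix A with column i taken from B
        fABi = model(ABi)
        first[i] = np.mean(fB * (fABi - fA)) / var_y
        total[i] = 0.5 * np.mean((fA - fABi) ** 2) / var_y
    return first, total

rng = np.random.default_rng(0)
S1, ST = sobol_indices(ishigami, d=3, n=2**16, rng=rng)
print("first-order:", np.round(S1, 3))
print("total-order:", np.round(ST, 3))
```

The gap between the first-order and total-order index of `x3` (near zero versus about 0.24) shows that it matters only through its interaction with `x1`, something a one-at-a-time analysis would miss.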

Next Steps

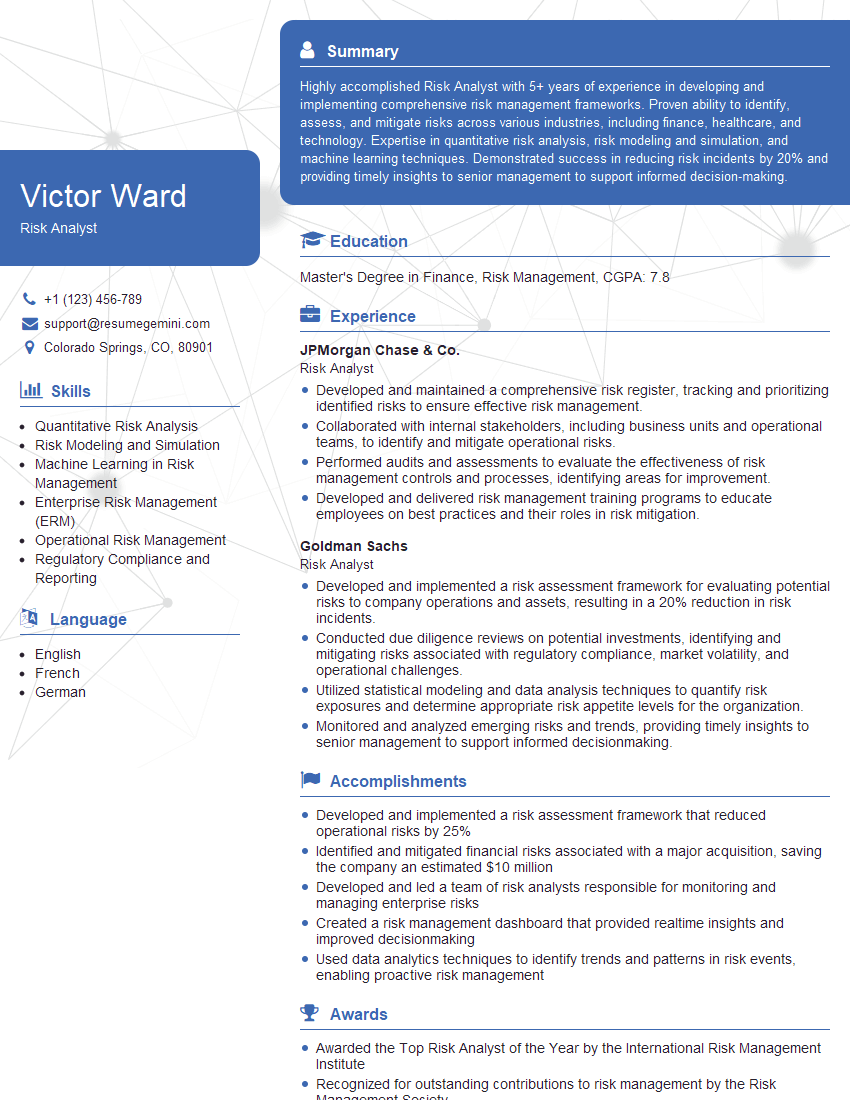

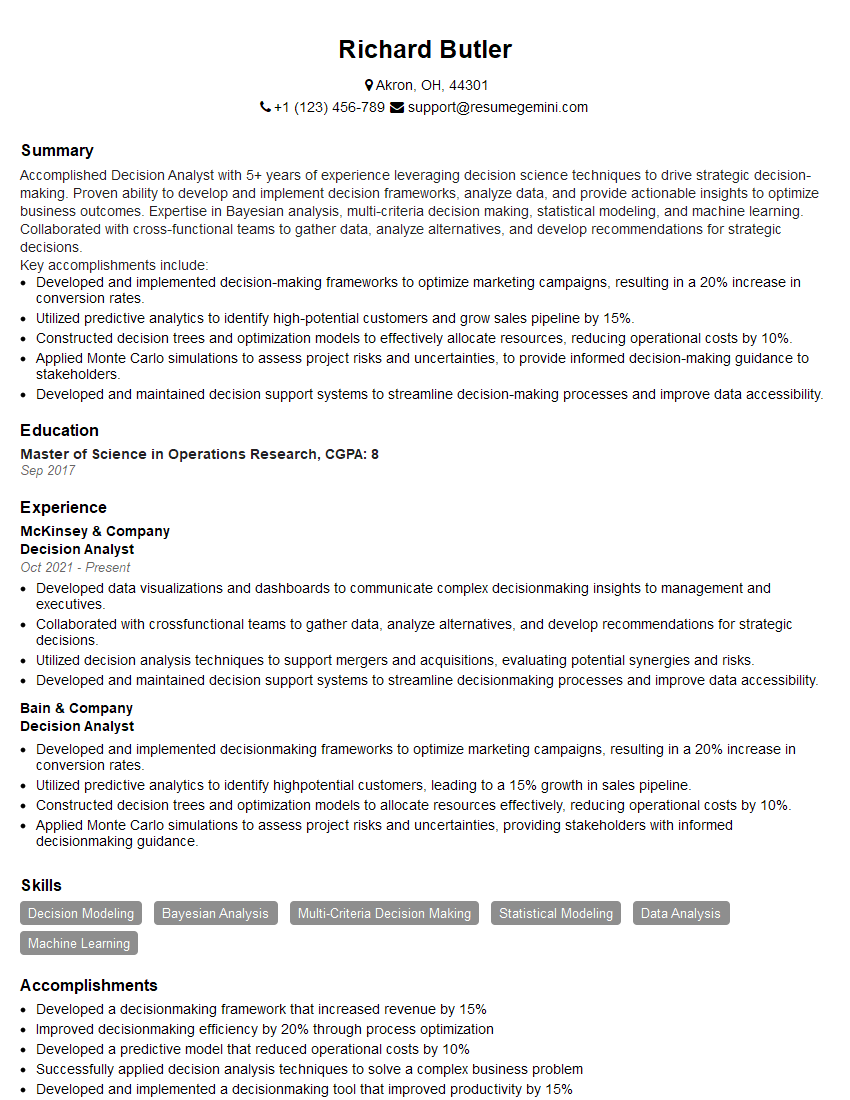

Mastering Uncertainty and Sensitivity Analysis opens doors to exciting career opportunities in diverse fields demanding robust analytical skills. A strong understanding of these techniques significantly enhances your value to potential employers, demonstrating your ability to handle complex problems and make informed decisions under uncertainty. To maximize your job prospects, invest time in crafting a compelling and ATS-friendly resume. ResumeGemini is a trusted resource that can help you build a professional resume that highlights your skills effectively. Examples of resumes tailored to Uncertainty and Sensitivity Analysis are available to guide you.
