Feeling uncertain about what to expect in your upcoming interview? We’ve got you covered! This blog highlights the most important Tool and Workpiece Measurement interview questions and provides actionable advice to help you stand out as the ideal candidate. Let’s pave the way for your success.

Questions Asked in Tool and Workpiece Measurement Interview

Q 1. Explain the difference between accuracy and precision in measurement.

Accuracy and precision are often confused, but they represent distinct aspects of measurement quality. Think of it like shooting arrows at a target.

Accuracy refers to how close a measurement is to the true value. If your arrows consistently hit close to the bullseye, you have high accuracy. In measurement, it’s how close your reading is to the actual dimension of the workpiece or tool.

Precision, on the other hand, refers to how close repeated measurements are to each other. If all your arrows group tightly together, regardless of whether they hit the bullseye, you have high precision. In measurement, this means your instrument gives consistent readings, even if those readings are systematically off from the true value.

For example, a micrometer might consistently read 10.002 mm for a part that is actually 10.000 mm. Its readings are precise (consistent) but inaccurate (offset from the true value by 0.002 mm). Conversely, another measurement tool might give readings of 9.998 mm, 10.001 mm, and 10.005 mm. These readings are less precise (more scattered), but their average of about 10.001 mm is closer to the true value, so the tool is more accurate on average.
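The distinction can be put into numbers. The sketch below is only an illustration: it treats accuracy as the bias of the mean reading and precision as the sample standard deviation. The repeated 10.002 mm readings for the micrometer are an assumption made to represent "consistently reads 10.002 mm".

```python
from statistics import mean, stdev

TRUE_VALUE_MM = 10.000

def bias_and_spread(readings, true_value):
    """Return (bias, spread): mean offset from the true value and sample standard deviation."""
    return mean(readings) - true_value, stdev(readings)

# Assumed repeated readings for the micrometer that always shows 10.002 mm.
micrometer = [10.002, 10.002, 10.002]
# Readings quoted in the example for the second tool.
other_tool = [9.998, 10.001, 10.005]

for name, readings in (("micrometer", micrometer), ("other tool", other_tool)):
    bias, spread = bias_and_spread(readings, TRUE_VALUE_MM)
    print(f"{name}: bias = {bias:+.4f} mm, spread = {spread:.4f} mm")
```

A small bias with a large spread suggests an accurate but imprecise instrument; a large bias with a small spread suggests the opposite.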

Q 2. Describe various types of measuring instruments used for tool and workpiece measurement.

The choice of measuring instrument depends heavily on the application, the required precision, and the geometry of the workpiece or tool. Here are some examples:

- Vernier Calipers: Versatile and relatively inexpensive, they measure linear dimensions with good resolution (typically 0.02 mm on a vernier scale, or 0.01 mm on digital models).

- Micrometers: Offer finer resolution than calipers (typically 0.01 mm, or 0.001 mm on vernier and digital models), ideal for precise measurements of smaller parts.

- Dial Indicators: Used for checking surface flatness, runout, and other geometric characteristics.

- Optical Comparators: Project an enlarged image of the workpiece onto a screen, allowing for detailed inspection of complex shapes and surface features.

- Coordinate Measuring Machines (CMMs): Highly sophisticated instruments capable of measuring three-dimensional coordinates of points on a workpiece, providing comprehensive dimensional data.

- Laser Scanners: Employ laser technology to quickly and accurately measure complex surfaces and shapes, generating point cloud data.

- Gauge Blocks: Precision-ground blocks of known dimensions used for calibrating other measuring instruments.

Each instrument has its strengths and limitations; selecting the right tool is crucial for obtaining reliable measurements.

Q 3. How do you calibrate measuring instruments to ensure accuracy?

Calibration is the process of comparing a measuring instrument’s readings against a known standard to determine its accuracy. This ensures reliable and trustworthy measurements.

The process typically involves:

- Selecting appropriate standards: Use traceable standards with higher accuracy than the instrument being calibrated.

- Following established procedures: Use a method approved by the instrument manufacturer or a recognized standard.

- Comparing readings: Compare the instrument’s readings with the standard’s known values under controlled environmental conditions (temperature, humidity).

- Adjusting or correcting: If the instrument readings deviate from the standards beyond acceptable tolerances, adjustments might be made (if possible) or a correction factor applied.

- Documentation: Record all calibration data, including the date, standards used, and any deviations observed. This documentation forms part of a quality management system.

Regular calibration, at intervals recommended by the manufacturer, is vital for maintaining measurement accuracy. Calibration certificates serve as evidence of the instrument’s accuracy.
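The comparison step can be sketched in a few lines. This is a minimal illustration, not a calibration procedure: the gauge block sizes, the instrument readings, and the 0.003 mm acceptance limit are all hypothetical values, and `calibration_report` is a name invented for this example.

```python
def calibration_report(nominals_mm, readings_mm, tolerance_mm):
    """Compare instrument readings with reference values, one row per check point."""
    rows = []
    for nominal, reading in zip(nominals_mm, readings_mm):
        # Round to 0.1 micrometer so floating-point noise does not affect the verdict.
        deviation = round(reading - nominal, 4)
        rows.append({
            "nominal_mm": nominal,
            "reading_mm": reading,
            "deviation_mm": deviation,
            "correction_mm": -deviation,  # value to add to future readings
            "within_tolerance": abs(deviation) <= tolerance_mm,
        })
    return rows

# Hypothetical gauge block sizes and the readings the instrument gave on them.
report = calibration_report([5.0, 10.0, 25.0], [5.001, 10.002, 25.004], tolerance_mm=0.003)
for row in report:
    print(row)
```

Each row would normally be recorded together with the date, the standards used, and the environmental conditions.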

Q 4. What are the common sources of measurement error, and how can they be minimized?

Several factors can introduce errors into measurements. Understanding these sources is critical for minimizing their impact.

- Environmental Factors: Temperature, humidity, and vibrations can affect instrument performance and workpiece dimensions.

- Instrument Wear: Wear and tear on measuring instruments can lead to inaccuracies. Regular maintenance and calibration are crucial.

- Operator Error: Incorrect handling, improper reading, or misinterpretation of the instrument can introduce significant errors.

- Workpiece Condition: Surface finish, cleanliness, and deformation of the workpiece can affect measurement accuracy.

- Fixture Errors: Improperly designed or used fixtures can lead to inaccuracies, particularly in CMM measurements.

Minimizing errors requires careful attention to detail. This includes using appropriate instruments, controlling environmental factors, following proper measuring procedures, regularly calibrating instruments, and maintaining workpieces in good condition.

Q 5. Explain the principles of geometric dimensioning and tolerancing (GD&T).

Geometric Dimensioning and Tolerancing (GD&T) is a standardized system for specifying the size, shape, orientation, and location of features on a part. It uses symbols and tolerances to define acceptable variations in a part’s geometry.

Unlike traditional tolerancing, which relies solely on size tolerances, GD&T provides a more comprehensive approach. It allows for a clearer understanding of the part’s functional requirements, leading to improved manufacturing efficiency and part interchangeability. It utilizes symbols to describe geometric controls such as:

- Position: Specifies the allowable variation in the location of a feature.

- Orientation: Defines the permissible angular variation of a feature.

- Form: Controls the shape of a feature (straightness, flatness, circularity, cylindricity).

- Profile: Specifies the allowable variation from a defined profile.

- Runout: Controls how much a surface may vary as the part rotates about a datum axis (circular runout and total runout).

GD&T uses a combination of symbols and numerical values to specify tolerances, allowing for a precise definition of acceptable variations in part geometry.

Q 6. How do you interpret GD&T symbols on engineering drawings?

Interpreting GD&T symbols on engineering drawings requires understanding the meaning of each symbol and its associated tolerances. Each symbol represents a specific geometric control, and the numerical values specify the allowable deviation from the ideal geometry. For example:

- Position Symbol (⌖, a circle with crosshairs): Indicates the allowed positional variation of a feature. The value next to the symbol specifies the size of the tolerance zone (often a diameter) within which the feature’s axis or center must lie.

- Perpendicularity Symbol (⊥): Indicates the allowed variation in perpendicularity between a feature and a datum reference plane.

- Flatness Symbol (⏥, a parallelogram): Indicates the allowed variation from a flat surface.

Reference planes (datums) are crucial for interpreting GD&T. Datums are theoretical planes, axes, or points used as reference points for specifying geometric tolerances. Understanding the datum references is essential to correctly interpret the tolerances.

Training and familiarity with the ASME Y14.5 standard (or equivalent international standard) are essential for accurately interpreting GD&T symbols.
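As a rough sketch of how a position callout might be checked against measured data: for a cylindrical tolerance zone, the measured axis location is compared with the true position, and the deviation is reported as a diameter (twice the radial offset). The coordinates and tolerance values below are hypothetical, the measurement is assumed to be already aligned to the datum reference frame, and material condition modifiers (such as bonus tolerance) are ignored.

```python
import math

def position_deviation(actual_xy, nominal_xy):
    """Diametral position deviation: twice the radial offset from the true position."""
    dx = actual_xy[0] - nominal_xy[0]
    dy = actual_xy[1] - nominal_xy[1]
    return 2 * math.hypot(dx, dy)

def within_position_tolerance(actual_xy, nominal_xy, tol_diameter):
    """True if the measured location lies inside the cylindrical tolerance zone."""
    return position_deviation(actual_xy, nominal_xy) <= tol_diameter

# Hypothetical hole: true position (50, 30) mm, measured at (50.03, 29.96) mm.
deviation = position_deviation((50.03, 29.96), (50.0, 30.0))
print(f"position deviation: {deviation:.3f} mm (diametral)")
print("passes 0.15 mm zone:", within_position_tolerance((50.03, 29.96), (50.0, 30.0), 0.15))
print("passes 0.08 mm zone:", within_position_tolerance((50.03, 29.96), (50.0, 30.0), 0.08))
```

The factor of two is the detail that is easiest to miss: a 0.05 mm radial offset consumes 0.10 mm of a diametral tolerance zone.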

Q 7. Describe your experience using Coordinate Measuring Machines (CMMs).

I have extensive experience using Coordinate Measuring Machines (CMMs), both in research and industrial settings. I’m proficient in operating various types of CMMs, including bridge-type, gantry-type, and articulated-arm CMMs.

My experience includes:

- Programming CMMs: Developing and executing measurement programs using various CMM software packages (e.g., PC-DMIS, Calypso).

- Data Acquisition and Analysis: Collecting large amounts of 3D coordinate data, performing statistical analysis, generating reports, and identifying deviations from design specifications.

- Troubleshooting CMM Issues: Diagnosing and resolving hardware and software problems to ensure accurate measurements.

- Part Inspection: Performing complex inspections of various parts ranging from small precision components to large complex assemblies.

- Implementing GD&T in CMM programming: Utilizing GD&T principles to accurately evaluate component geometry compliance.

I’ve worked on projects involving reverse engineering, quality control, and process optimization, using CMM data to improve manufacturing processes and product quality. For example, one project involved identifying the root cause of dimensional inconsistencies in a critical automotive component using CMM data analysis, ultimately leading to improved tooling and a reduction in manufacturing defects.

Q 8. Explain the different types of CMM probes and their applications.

Coordinate Measuring Machines (CMMs) utilize various probes to contact and measure workpiece features. The choice of probe depends heavily on the part’s geometry, material, and the required accuracy.

- Touch Trigger Probes: These are the most common type, using a stylus that triggers a signal upon contact with the workpiece. They’re excellent for measuring points, lines, and surfaces, and are relatively robust. Think of them like a very precise fingertip feeling for points on the part. For instance, they are ideal for inspecting complex shapes on a car part or measuring critical dimensions of a medical implant.

- Scanning Probes: These probes continuously collect data as they move across the workpiece’s surface, creating a point cloud representing the shape. This is much faster than point-by-point measurement with touch trigger probes and perfect for creating a detailed 3D model of a complex shape. Imagine a high-resolution scanner creating a digital replica of a sculpted piece of art. They’re great for capturing freeform surfaces on things like turbine blades or automotive body panels.

- Optical Probes: These use non-contact methods, such as laser or white light, to measure the part’s surface. They’re extremely useful for fragile or delicate parts where physical contact might cause damage. Picture a non-invasive scan of a delicate artifact in a museum.

- Re-entrant Probes: Designed specifically for measuring internal features or recessed areas of the workpiece that are impossible to access with standard probes. These are crucial for inspecting features like holes or cavities within a casting.

The selection process often involves considering factors such as the desired measurement speed, the part’s geometry and material, and the needed accuracy and repeatability. We carefully match the probe type to the application to ensure optimal results.

Q 9. How do you create a CMM inspection program?

Creating a CMM inspection program involves a structured process. It begins with understanding the part’s design and specifications, including tolerances and geometric dimensioning and tolerancing (GD&T) requirements. Then:

- Part Definition: We define the part’s coordinate system and identify all critical features to be measured.

- Probe Selection: The appropriate probe type is chosen based on the part’s geometry and material.

- Feature Measurement Strategy: We determine the best approach for measuring each feature, including the measurement points, scanning parameters (for scanning probes), and the appropriate algorithms.

- Program Creation: The program is written using CMM software, defining the probe path, measurement routines, and data analysis options. This often includes utilizing CAD data to align the virtual model to the actual part and defining measurement points directly from the CAD.

- Program Verification: Before running the program on the actual workpiece, we simulate the program to ensure it’s accurate and efficient. We also perform a trial run on a known ‘good’ part to verify accuracy and identify any issues.

- Program Optimization: We refine the program based on the verification results, optimizing the measurement strategy for speed and efficiency while ensuring accuracy.

Throughout this process, it’s critical to maintain rigorous documentation and traceability. A poorly designed program can lead to inaccurate measurements and incorrect conclusions, which is why this systematic process is crucial.

Q 10. What software are you familiar with for CMM programming and data analysis?

My experience encompasses a range of CMM software packages. I’m proficient in PC-DMIS, a widely used software known for its robust features and comprehensive functionalities for programming and analyzing CMM data. I also have experience with Calypso, another popular option offering advanced capabilities in GD&T analysis. In addition, I am familiar with other platforms like QUINDOS and ZEISS PiWeb, depending on the client’s specific requirements and the CMM being used.

These software packages allow for automation, statistical analysis, and reporting, which are all crucial for efficient and accurate measurement processes.

Q 11. Describe your experience with statistical process control (SPC) in measurement.

Statistical Process Control (SPC) is integral to effective measurement. It provides a structured approach to monitoring and controlling the manufacturing process to minimize variations and improve quality. In my work, I use SPC charts like control charts (X-bar and R charts, for instance) to track the measured values over time. This allows me to identify trends, detect outliers (measurements outside the expected range), and assess the stability of the process.

For example, if we’re measuring the diameter of a shaft, we might collect data from multiple samples throughout the production run. Plotting this data on an X-bar and R chart shows the average diameter and the range of variation within each sample. If points fall outside the control limits, which are calculated from the process data itself rather than taken from the drawing tolerance, the chart flags a potential issue in the manufacturing process and prompts investigation and corrective action. This proactive approach helps to prevent the production of defective parts.
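A minimal sketch of the X-bar and R calculation for the shaft example follows. The diameters are made-up values; limits are computed from a baseline set of subgroups and then used to screen new subgroups. The constants A2, D3, and D4 are the standard Shewhart values for subgroups of five.

```python
from statistics import mean

# Standard Shewhart chart constants for subgroups of five measurements.
A2, D3, D4 = 0.577, 0.0, 2.114

def xbar_r_limits(baseline):
    """Return (LCL, center line, UCL) for the X-bar chart and the R chart."""
    grand_mean = mean(mean(s) for s in baseline)
    r_bar = mean(max(s) - min(s) for s in baseline)
    return {
        "xbar": (grand_mean - A2 * r_bar, grand_mean, grand_mean + A2 * r_bar),
        "range": (D3 * r_bar, r_bar, D4 * r_bar),
    }

def flag_subgroups(subgroups, limits):
    """Indices of subgroups whose mean or range falls outside the limits."""
    x_lcl, _, x_ucl = limits["xbar"]
    r_lcl, _, r_ucl = limits["range"]
    return [
        i for i, s in enumerate(subgroups)
        if not (x_lcl <= mean(s) <= x_ucl and r_lcl <= max(s) - min(s) <= r_ucl)
    ]

# Hypothetical shaft diameters in mm, five parts per subgroup.
baseline = [
    [25.001, 24.999, 25.000, 25.002, 24.998],
    [25.000, 25.001, 24.999, 25.000, 25.000],
    [24.999, 25.002, 25.000, 24.998, 25.001],
    [25.001, 25.000, 24.999, 25.001, 24.999],
]
new_subgroups = [
    [25.000, 25.001, 25.000, 24.999, 25.001],  # looks like the baseline
    [25.010, 25.012, 25.009, 25.011, 25.008],  # mean has shifted upward
]

limits = xbar_r_limits(baseline)
print("X-bar limits:", limits["xbar"])
print("flagged subgroups:", flag_subgroups(new_subgroups, limits))
```

In practice a chart is usually built from 20 or more baseline subgroups; four are used here only to keep the example short.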

Q 12. How do you handle discrepancies between measured values and specifications?

Discrepancies between measured values and specifications require a systematic investigation. The first step is to verify the accuracy and validity of the measurement itself. We check the CMM’s calibration status, the probe’s condition, and the accuracy of the inspection program. We might also re-run the measurement to ensure consistency. If the measurement is valid, the next step is to understand the root cause of the discrepancy.

This could be due to variations in the manufacturing process, tool wear, or errors in the design specifications. We document our findings, investigate the source of the problem, and work with the manufacturing team to implement corrective actions. This might involve adjusting the manufacturing process, replacing worn tooling, or revising the design specifications. Ultimately, the goal is to improve the manufacturing process to minimize future discrepancies.

Q 13. Explain your process for documenting measurement results.

Comprehensive documentation is vital for traceability and quality assurance. My process includes detailed records of:

- Part Identification: Clear identification of the workpiece including part number, revision level, and batch number.

- Measurement Date and Time: Accurate timestamps to track measurements over time.

- CMM and Probe Information: Details about the CMM used, its calibration status, and the specific probe employed.

- Inspection Program Details: Information on the inspection program used, including revision level and any modifications made.

- Measurement Results: Detailed results including all measured values, tolerances, and GD&T compliance.

- Statistical Data: Any statistical data obtained, such as control charts and capability analysis results.

- Analyst’s Signature and Verification: Confirmation of the analysis and any deviations from specifications.

All documentation is stored in a secure, centralized system for easy access and retrieval. This robust system ensures full traceability and allows for comprehensive analysis if issues arise.

Q 14. How do you ensure traceability in your measurement processes?

Traceability in measurement is crucial for ensuring the accuracy and reliability of the results. We achieve this through a combination of methods:

- CMM Calibration: Regular calibration of the CMM against traceable standards ensures its accuracy and reliability. Calibration certificates provide a documented chain of traceability to national or international standards.

- Probe Calibration: Probes are also calibrated regularly, ensuring their accuracy and repeatability. Calibration data should be documented.

- Standard Parts: Using traceable standard parts for verification helps ensure that measurements are accurate and reliable.

- Documented Procedures: Maintaining detailed written procedures for calibration, measurement, and data analysis ensures consistency and repeatability.

- Software Validation: Ensuring the measurement software is properly validated and kept up to date.

- Data Management System: A secure and organized data management system provides easy access to all relevant data, enabling full traceability of each measurement process from start to finish.

By implementing these procedures, we create an unbroken chain of traceability, ensuring the reliability of our measurement results and supporting any audits or investigations.

Q 15. Describe your experience with different types of measuring tools (e.g., micrometers, calipers, dial indicators).

My experience with measuring tools spans a wide range, encompassing both common and specialized instruments. I’m proficient with micrometers, for precise measurements down to thousandths of an inch or micrometers; vernier calipers, offering a balance between precision and speed for external and internal measurements; and dial indicators, crucial for detecting minute variations in surface flatness or shaft runout. I’ve used digital versions of these tools extensively, appreciating their speed and data logging capabilities. Beyond these, I’ve worked with optical comparators for detailed shape inspection, height gauges for precise vertical measurements, and even coordinate measuring machines (CMMs) for complex three-dimensional measurements.

- Micrometers: I’ve used these extensively for measuring the thickness of sheet metal, the diameter of small shafts, and the depth of grooves. The feel and precision of a micrometer are crucial for accurate readings.

- Vernier Calipers: These are my go-to tool for quick measurements in manufacturing, often used for checking part dimensions during production runs. Their versatility for internal, external, and depth measurements is invaluable.

- Dial Indicators: These are indispensable for detecting minute variations in surface flatness or shaft runout, vital in ensuring machinery operates smoothly and efficiently. I’ve used these in machine alignment and quality control processes.

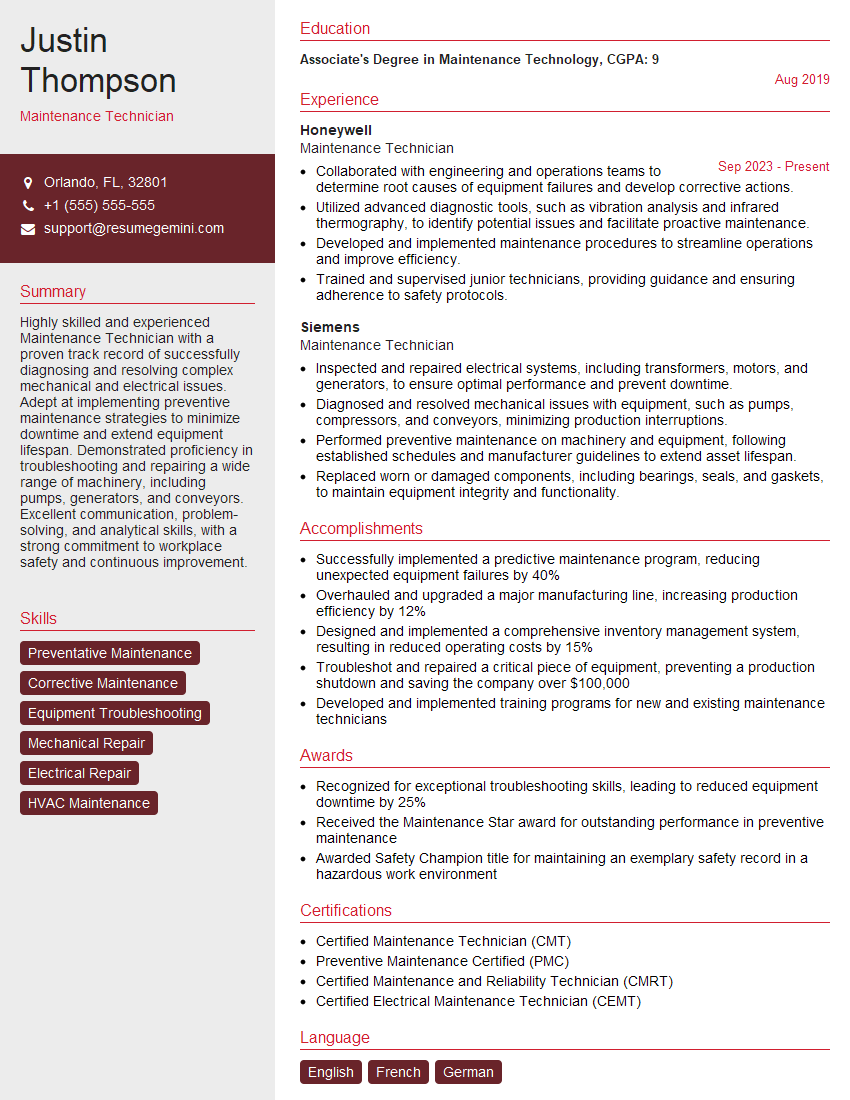

Career Expert Tips:

- Ace those interviews! Prepare effectively by reviewing the Top 50 Most Common Interview Questions on ResumeGemini.

- Navigate your job search with confidence! Explore a wide range of Career Tips on ResumeGemini. Learn about common challenges and recommendations to overcome them.

- Craft the perfect resume! Master the Art of Resume Writing with ResumeGemini’s guide. Showcase your unique qualifications and achievements effectively.

- Don’t miss out on holiday savings! Build your dream resume with ResumeGemini’s ATS optimized templates.

Q 16. How do you select the appropriate measuring instrument for a given task?

Selecting the right measuring instrument is paramount for accurate and efficient work. The choice depends on several key factors:

- Required Precision: For extremely precise measurements (e.g., tolerances of a few micrometers), micrometers or even CMMs are necessary. Less precise measurements can be made with calipers or rulers.

- Type of Measurement: Internal dimensions need inside calipers, external dimensions need outside calipers, and depth measurements necessitate depth gauges or a specific caliper function.

- Size and Shape of the Workpiece: Large parts might require CMMs or other specialized tools, while small parts are suitable for micrometers or calipers.

- Material of the Workpiece: The material can affect the choice of measuring instrument. For instance, measuring soft materials like rubber might require a different technique than measuring hard metal.

- Measurement Speed: If speed is crucial, digital calipers or optical comparators might be preferred over micrometers, though the level of precision needs to be considered.

For example, I wouldn’t use a ruler to measure a small precision bearing; a micrometer would be far more appropriate. Similarly, inspecting the overall geometry of a complex part would call for a CMM, not just calipers.

Q 17. Describe your experience with laser scanning or other advanced measurement techniques.

My experience with advanced measurement techniques includes extensive work with laser scanning. This technology allows for rapid, non-contact measurement of complex shapes and surfaces, creating detailed 3D models. I’ve used laser scanners in reverse engineering applications, recreating CAD models from existing parts. This is particularly useful when dealing with legacy parts where original drawings are unavailable. Additionally, I’ve utilized structured light scanning for similar purposes, finding it particularly helpful in capturing fine details. I also have some experience with photogrammetry, another non-contact measurement technique that uses multiple images to build a 3D model. These methods are superior to traditional contact measurement when dealing with delicate or complex geometries.

For example, when working on a project involving a highly intricate sculpture, laser scanning provided a quick and accurate way to capture its exact dimensions and shape, which was then used to create a precise replica.

Q 18. How do you troubleshoot issues with measuring instruments?

Troubleshooting measuring instruments starts with a systematic approach. First, I check for obvious issues such as damage, loose parts, or dirty surfaces. Next, I verify calibration. Many instruments require regular calibration to ensure accuracy. I use calibrated reference standards (e.g., gauge blocks) to check the instrument’s readings against known values. If the instrument is still inaccurate after calibration, I might check the instrument’s manuals for troubleshooting steps or contact the manufacturer for service or repair. For example, a reading that is consistently off by a fixed amount points to a systematic error that calibration can correct, whereas random variations point to other issues such as instrument damage or operator error.

A practical example: if my micrometer consistently reads 0.002 inches too low, this suggests a calibration issue, which I’d address by recalibrating the instrument using standard gauge blocks.

Q 19. Explain your understanding of measurement uncertainty.

Measurement uncertainty is the unavoidable doubt associated with any measurement. It reflects the range of values within which the true value is likely to lie. It’s not about mistakes, but about the inherent limitations of any measurement system. Several factors contribute to uncertainty, including the resolution of the instrument, environmental conditions (temperature, humidity), operator skill, and the workpiece’s condition. Quantifying uncertainty is crucial for understanding the reliability of measurement results. This is commonly expressed as a ± value around the measured result, ideally with a stated level of confidence (for example, an expanded uncertainty with a coverage factor of k = 2, roughly 95 %). For instance, a measurement reported as 10.000 ± 0.005 mm indicates that the true value likely lies between 9.995 and 10.005 mm.

Understanding measurement uncertainty is essential for making informed decisions. If the uncertainty is a large fraction of the design tolerance, then the measurement may not be reliable enough to assess whether the workpiece meets the requirements.
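One common way to quantify this is a simple uncertainty budget: independent standard uncertainties are combined by root-sum-of-squares and then multiplied by a coverage factor. The sketch below assumes uncorrelated contributions, and every number in it (the budget values and the 0.020 mm tolerance) is a hypothetical placeholder.

```python
import math

def combined_standard_uncertainty(components):
    """Root-sum-of-squares of independent standard uncertainties."""
    return math.sqrt(sum(u ** 2 for u in components))

def expanded_uncertainty(components, k=2.0):
    """Combined uncertainty multiplied by coverage factor k (k = 2 is roughly 95 %)."""
    return k * combined_standard_uncertainty(components)

# Hypothetical budget in mm, each entry already a standard uncertainty.
budget = {
    "resolution": 0.0005 / math.sqrt(3),  # half of a 0.001 mm step, rectangular distribution
    "repeatability": 0.0008,
    "reference standard": 0.0003,
    "temperature": 0.0005,
}

U = expanded_uncertainty(budget.values())
tolerance_band_mm = 0.020  # hypothetical total tolerance, i.e. +/- 0.010 mm
print(f"expanded uncertainty: +/- {U:.4f} mm")
print(f"share of tolerance band: {2 * U / tolerance_band_mm:.0%}")
```

The share of the tolerance band consumed by the uncertainty is what decides whether the measurement can support an accept or reject decision.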

Q 20. How do you interpret measurement data to identify trends and patterns?

Interpreting measurement data involves more than just recording the values; it requires careful analysis to identify trends, patterns, and anomalies. I often use statistical methods, such as calculating averages, standard deviations, and control charts, to assess the consistency and stability of the measurements. This helps to identify potential sources of variation, whether it’s due to instrument error, process variability, or changes in raw materials. Visualizations like histograms and scatter plots are invaluable tools for spotting patterns and outliers in the data. For example, a control chart showing points outside the control limits, or a sustained run of points on one side of the center line, would indicate a problem that needs to be investigated.

Identifying trends can help in predictive maintenance; for example, if a certain dimension on a manufactured part slowly drifts over time, it could suggest wear in a machine tool, allowing for preventative maintenance before it leads to failures.
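A slow drift of this kind can be estimated with a least-squares slope over the sequence of measurements. The sketch below uses invented values and a hypothetical function name; it assumes parts are measured in production order at even intervals.

```python
def drift_per_part(values):
    """Least-squares slope of the measured value against the part index."""
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxy = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    sxx = sum((i - x_mean) ** 2 for i in range(n))
    return sxy / sxx

# Hypothetical dimension in mm, measured on consecutive parts.
measurements = [10.000, 10.001, 10.002, 10.003, 10.004]
slope = drift_per_part(measurements)
print(f"drift: {slope * 1000:.2f} micrometers per part")
```

A slope that stays clearly above zero over many parts is a prompt to inspect the tool before the dimension reaches its limit.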

Q 21. Describe your experience with data analysis software (e.g., Minitab, JMP).

I have extensive experience with data analysis software, including Minitab and JMP. These packages offer powerful tools for statistical analysis, data visualization, and process capability studies. I use Minitab to perform capability analysis, Gage R&R studies (to evaluate measurement system variability), and hypothesis testing. JMP is useful for its robust statistical modeling capabilities and its interactive visualizations. For example, I’ve used Minitab to perform a Gage R&R study on a newly implemented measurement system to ensure that the variability of the measurements is acceptable compared to the overall part tolerances. The result tells me whether the measurement system is capable of measuring the part dimensions reliably and is therefore fit for its intended purpose.

In a real-world scenario, I used JMP to analyze data from a machining process, identifying a correlation between tool wear and part dimensions that led to process improvements and reduced scrap.
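The capability indices that these packages report can be reproduced by hand for a quick check. The sketch below is not Minitab or JMP output; it uses the overall sample standard deviation (some conventions call the resulting indices Pp and Ppk), and the measurements and specification limits are hypothetical.

```python
from statistics import mean, stdev

def process_capability(values, lsl, usl):
    """Return (Cp, Cpk) using the overall sample standard deviation."""
    mu = mean(values)
    sigma = stdev(values)
    cp = (usl - lsl) / (6 * sigma)
    cpk = min(usl - mu, mu - lsl) / (3 * sigma)
    return cp, cpk

# Hypothetical measurements in mm and specification limits of 10.00 +/- 0.03 mm.
values = [9.99, 10.00, 10.01, 10.00, 10.00]
cp, cpk = process_capability(values, lsl=9.97, usl=10.03)
print(f"Cp = {cp:.2f}, Cpk = {cpk:.2f}")
```

Cp compares the tolerance width with the process spread, while Cpk also penalizes a process that is off-center; the two are equal only when the mean sits in the middle of the tolerance.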

Q 22. How do you ensure the safety and proper handling of measuring instruments?

Ensuring the safety and proper handling of measuring instruments is paramount for accurate results and personal well-being. This involves several key practices. First, always handle instruments with care, avoiding drops or impacts that could damage delicate components or calibration. For example, micrometers should be stored in protective cases and handled gently to prevent damage to the anvils and spindle. Second, understand and adhere to the instrument’s specific instructions. This might include guidelines for cleaning, storage temperature and humidity, and the proper use of accessories.

Regular calibration is crucial. A miscalibrated instrument leads to inaccurate measurements, potentially causing costly errors in manufacturing or design. Many instruments require periodic calibration checks using traceable standards to ensure their accuracy. Finally, always wear appropriate personal protective equipment (PPE), such as safety glasses, when using certain instruments, especially those involving lasers or sharp edges. Think of it like driving a car; you wouldn’t drive without a seatbelt, and similarly, responsible use of measuring tools necessitates the appropriate safety precautions.

Q 23. Explain your experience with different types of workpiece materials and their measurement challenges.

My experience encompasses a broad range of workpiece materials, each posing unique measurement challenges. For instance, measuring soft materials like rubber or polymers requires careful consideration to avoid deformation under the measuring probe. Specialized techniques, such as using low-force probes or optical measurement methods, might be necessary. Conversely, hard materials like hardened steel or ceramics can be susceptible to chipping or scratching during measurement, necessitating the use of carbide or diamond tipped probes and careful handling.

Measuring porous materials, such as wood or castings, requires understanding how surface irregularities impact measurements. This often involves surface preparation techniques or using averaging methods to get a representative measurement. Furthermore, the thermal properties of materials are crucial; measuring a hot workpiece with a cold instrument will lead to inaccurate readings due to thermal expansion. This often requires temperature compensation or using instruments specifically designed for high-temperature environments. Each material presents specific nuances, and a skilled metrologist must adapt their techniques accordingly.
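Temperature compensation can be illustrated with the linear expansion relation, where a length measured at temperature T is scaled back to the standard reference temperature of 20 °C. The sketch below corrects for the workpiece only and assumes the instrument itself is at 20 °C; the expansion coefficient is a typical textbook value for steel, and the part length and temperature are hypothetical.

```python
REFERENCE_TEMP_C = 20.0

# Typical linear expansion coefficient for steel, per degree Celsius (approximate).
ALPHA_STEEL = 11.5e-6

def length_at_reference(measured_mm, part_temp_c, alpha_per_c):
    """Scale a length measured on a warm or cold part back to 20 degrees Celsius."""
    return measured_mm / (1 + alpha_per_c * (part_temp_c - REFERENCE_TEMP_C))

# Hypothetical steel part measured at 30 degrees Celsius.
measured = 100.0115
corrected = length_at_reference(measured, part_temp_c=30.0, alpha_per_c=ALPHA_STEEL)
print(f"measured {measured:.4f} mm at 30 C -> {corrected:.4f} mm at 20 C")
```

A 10 °C difference on a 100 mm steel part accounts for about 0.0115 mm, which is larger than many precision tolerances.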

Q 24. How do you handle complex geometries during measurement?

Handling complex geometries during measurement often requires a combination of techniques and instruments. Simple linear measurements are often insufficient; we often employ coordinate measuring machines (CMMs) or 3D scanners for intricate parts. CMMs, through their tactile probes or laser scanning capabilities, allow for the precise measurement of complex features such as curves, tapers, and undercuts.

For highly complex shapes, specialized software is often used to analyze the point cloud data obtained from 3D scanners. This software allows for reverse engineering, creating CAD models from the measured data. This enables detailed analysis of surface finish, deviation from the nominal design, and overall dimensional accuracy. Furthermore, selecting the appropriate probe for the application is critical. Smaller probes are suited for finer details, while larger probes might be needed for robust measurements on larger surfaces. It’s a balancing act between accuracy and practicality.

Q 25. Describe your experience with automated measurement systems.

I have extensive experience with automated measurement systems, including robotic CMMs and automated optical inspection (AOI) systems. These systems significantly improve efficiency and repeatability compared to manual methods. Robotic CMMs, for example, can automatically measure a large batch of parts with consistent accuracy and speed. Programming these systems often involves creating measurement routines using specialized software, defining probe paths, and setting tolerances.

AOI systems use optical sensors and image processing techniques to detect defects and measure features on parts automatically. This is particularly useful for high-volume production environments where speed and consistency are critical. My experience includes the setup, programming, and troubleshooting of these systems, as well as interpreting the data generated. It’s not just about the hardware, but about mastering the software and understanding its limitations to optimize the measurement process.

Q 26. How do you maintain the cleanliness and organization of your measurement workspace?

Maintaining a clean and organized measurement workspace is crucial for accurate and reliable results. This begins with regular cleaning of instruments and work surfaces. Compressed air, lint-free cloths, and appropriate cleaning solutions are essential tools. Instruments must be stored correctly; for instance, micrometers should be stored with the spindle backed slightly away from the anvil, so the measuring faces are not clamped together in storage.

Organization is just as important. Measuring instruments should be stored in designated areas, with clear labeling to avoid confusion. Workspaces should be free of clutter to prevent accidental damage to instruments or workpieces. A well-organized workspace helps increase efficiency by making frequently used tools readily accessible. Think of a surgeon’s operating room; a clean and organized environment is a foundation for precision and accuracy.

Q 27. Describe a time you had to solve a challenging measurement problem.

One particularly challenging measurement problem involved measuring the internal diameter of a very small, intricately shaped bore in a precision aerospace component. Standard methods could not cope with the bore’s tight tolerances and complex geometry, and conventional instruments such as calipers and inside micrometers were too large to access the bore.

My solution involved a multi-step approach. First, I used a fiber optic borescope to visually inspect the bore and confirm its geometry. Then, I used a small, specialized internal probe on a CMM equipped with a high-resolution sensor. Careful probe selection and programming were critical to avoid damaging the delicate component. Finally, I used specialized software to analyze the acquired data and generate accurate dimensional measurements. The process required meticulous planning and execution, and it demonstrated my ability to adapt to unexpected challenges and devise effective solutions.
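The final analysis step, turning probe hits into a diameter, is often a least-squares circle fit. The sketch below uses the algebraic (Kåsa) fit, which is an assumption on my part and not necessarily what a given CMM package does. The probe points are synthetic and generated only for illustration.

```python
import math

def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

def fit_circle(points):
    """Algebraic least-squares circle fit.

    Fits x^2 + y^2 = 2*a*x + 2*b*y + c and returns (a, b, radius),
    where (a, b) is the center and radius = sqrt(c + a^2 + b^2).
    """
    z = [x * x + y * y for x, y in points]
    sx = sum(x for x, _ in points)
    sy = sum(y for _, y in points)
    sxx = sum(x * x for x, _ in points)
    syy = sum(y * y for _, y in points)
    sxy = sum(x * y for x, y in points)
    # Normal equations of the linear least-squares problem
    m = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, len(points)]]
    rhs = [
        sum(x * zi for (x, _), zi in zip(points, z)),
        sum(y * zi for (_, y), zi in zip(points, z)),
        sum(z),
    ]
    d = det3(m)
    if d == 0:
        raise ValueError("points are collinear or too few to define a circle")

    def solve(col):
        # Cramer's rule: replace one column with the right-hand side
        mk = [row[:] for row in m]
        for r in range(3):
            mk[r][col] = rhs[r]
        return det3(mk) / d

    a, b, c = solve(0) / 2, solve(1) / 2, solve(2)
    return a, b, math.sqrt(c + a * a + b * b)

# Synthetic probe hits on a 3.000 mm bore centered at (0.200, -0.100) mm
points = [
    (0.2 + 1.5 * math.cos(math.radians(t)), -0.1 + 1.5 * math.sin(math.radians(t)))
    for t in range(0, 360, 45)
]
cx, cy, radius = fit_circle(points)
print(f"center ({cx:.4f}, {cy:.4f}) mm, diameter {2 * radius:.4f} mm")
```

With real data, the residuals of each point from the fitted circle are worth reporting as well, since they indicate roundness error and help flag a bad probe hit.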

Q 28. How do you stay up-to-date with advancements in measurement technology?

Staying up-to-date with advancements in measurement technology is an ongoing process. I regularly attend industry conferences and workshops to learn about new techniques and instrumentation. I subscribe to relevant professional journals and online resources to keep abreast of the latest developments in CMM technology, optical metrology, and laser scanning techniques.

Participating in professional organizations like ASME (American Society of Mechanical Engineers) provides access to training materials and networking opportunities with other metrologists. Furthermore, I actively seek out training opportunities provided by instrument manufacturers to learn how to best utilize their equipment. Staying current ensures I maintain a high level of expertise and leverage the most effective measurement methodologies in my work.

Key Topics to Learn for Tool and Workpiece Measurement Interview

- Dimensional Metrology: Understand fundamental concepts such as accuracy, precision, tolerance, and repeatability. Practical application includes interpreting engineering drawings and specifications.

- Measurement Instruments & Techniques: Gain proficiency in using various tools such as calipers, micrometers, dial indicators, CMMs (Coordinate Measuring Machines), and optical comparators. Explore measurement techniques such as surface roughness measurement, and learn to interpret geometric dimensioning and tolerancing (GD&T) callouts.

- Statistical Process Control (SPC): Learn how to analyze measurement data using statistical methods to identify trends, variations, and potential process improvements. Practical application includes creating control charts and interpreting capability studies.

- Workpiece Inspection & Quality Control: Develop a strong understanding of inspection procedures, quality control standards, and problem-solving approaches to identify and address measurement discrepancies. This includes understanding different types of inspection reports.

- Calibration & Traceability: Understand the importance of instrument calibration, traceability to national standards, and the impact of calibration uncertainty on measurement results.

- Advanced Measurement Techniques: Explore advanced techniques such as laser scanning, 3D scanning, and non-contact measurement methods. Understand their applications and limitations.

- Problem-Solving & Troubleshooting: Develop skills in identifying and resolving measurement inaccuracies, discrepancies, and equipment malfunctions. This includes understanding root cause analysis.
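For the Statistical Process Control topic above, a small worked example can help when practicing capability questions. The Python sketch below computes Cp and Cpk and simple 3-sigma limits. As a simplification, it uses the overall sample standard deviation as the sigma estimate, whereas formal control charts usually estimate sigma from subgroup or moving ranges. The sample data and specification limits are hypothetical.

```python
import statistics

def capability(samples, lsl, usl):
    """Return (mean, sigma, cp, cpk) for measurements against spec limits."""
    mean = statistics.mean(samples)
    sigma = statistics.stdev(samples)  # sample standard deviation (n - 1)
    cp = (usl - lsl) / (6 * sigma)
    cpk = min(usl - mean, mean - lsl) / (3 * sigma)
    return mean, sigma, cp, cpk

def three_sigma_limits(mean, sigma):
    """Lower and upper limits at mean -/+ 3 sigma."""
    return mean - 3 * sigma, mean + 3 * sigma

# Hypothetical shaft diameters in mm, specification 10.000 +/- 0.010
samples = [10.002, 9.998, 10.001, 10.000, 9.999, 10.003, 9.997, 10.000]
mean, sigma, cp, cpk = capability(samples, lsl=9.990, usl=10.010)
lcl, ucl = three_sigma_limits(mean, sigma)
print(f"mean {mean:.4f} mm, sigma {sigma:.4f} mm")
print(f"Cp {cp:.2f}, Cpk {cpk:.2f}")
print(f"3-sigma limits: {lcl:.4f} to {ucl:.4f} mm")
```

Cp compares the tolerance width to the process spread, while Cpk also penalizes a process that is off-center, so Cpk is never larger than Cp.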

Next Steps

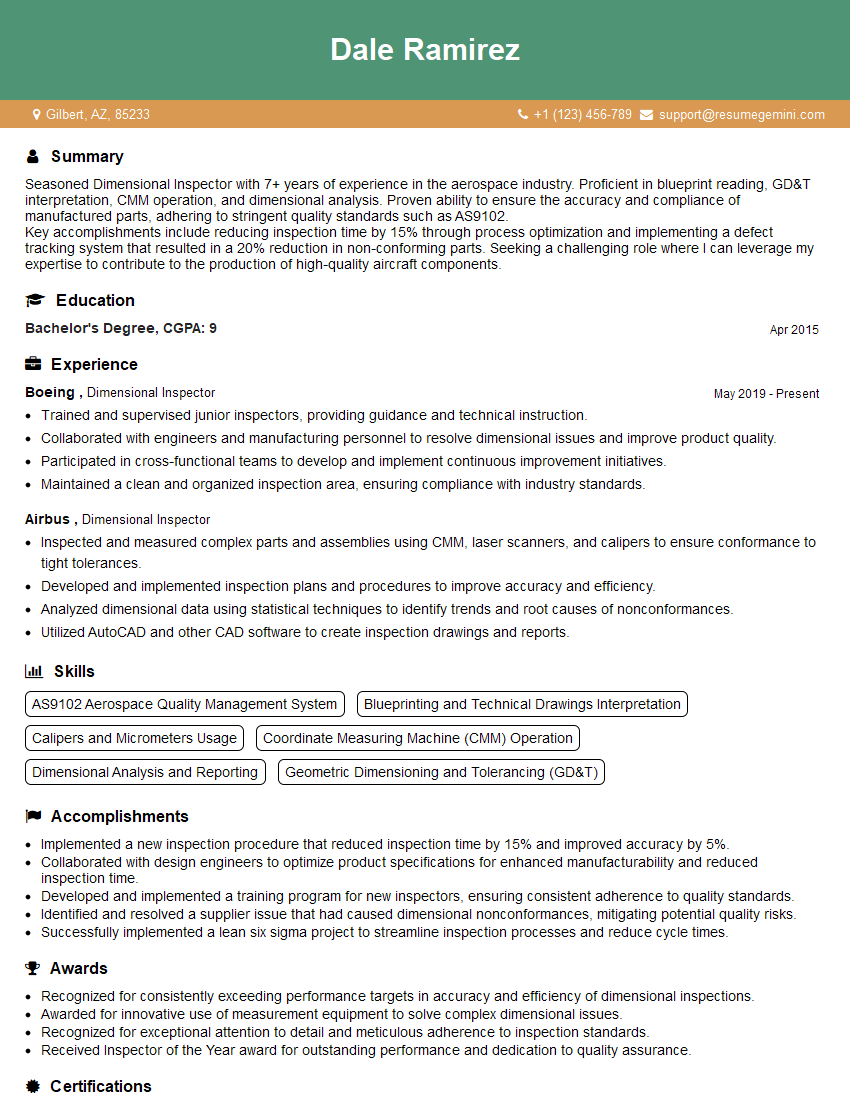

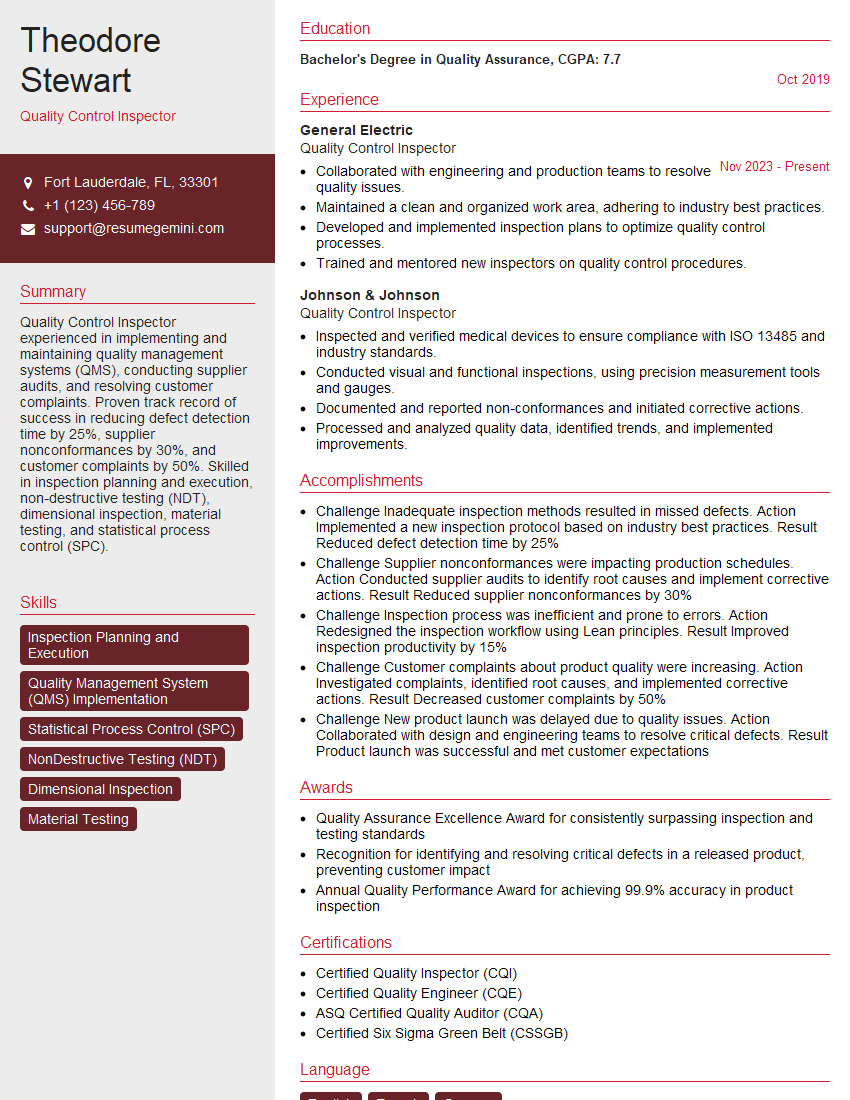

Mastering Tool and Workpiece Measurement is crucial for career advancement in manufacturing, engineering, and quality control. A strong understanding of these principles demonstrates your technical expertise and problem-solving abilities, making you a highly valuable asset to any team. To significantly boost your job prospects, creating an ATS-friendly resume is essential. ResumeGemini can help you build a professional and impactful resume that highlights your skills and experience effectively. ResumeGemini provides examples of resumes tailored to Tool and Workpiece Measurement, helping you present yourself in the best possible light. Take the next step towards your dream career today!

