Cracking a skill-specific interview, like one for Dosimetry and Calibration, requires understanding the nuances of the role. In this blog, we present the questions you’re most likely to encounter, along with insights into how to answer them effectively. Let’s ensure you’re ready to make a strong impression.

Questions Asked in Dosimetry and Calibration Interview

Q 1. Explain the principles of ionizing radiation dosimetry.

Ionizing radiation dosimetry is the science and practice of measuring the amount of ionizing radiation absorbed by a material or a person. It’s based on the principle that ionizing radiation interacts with matter, transferring energy and causing ionization (the removal of electrons from atoms). This energy transfer can lead to biological damage. Dosimetry aims to quantify this energy deposition, typically expressed in units like Gray (Gy) for absorbed dose and Sievert (Sv) for equivalent dose, which accounts for the biological effectiveness of different types of radiation.

The process involves using a dosimeter to measure the radiation field. The dosimeter interacts with the radiation, and the resulting signal (e.g., change in electrical charge, luminescence, or chemical change) is proportional to the radiation dose. Calibration is crucial to ensure the accuracy of the dosimeter’s response and its traceability to national or international standards.

For example, imagine a radiation worker in a nuclear power plant. Their dosimeter, carefully calibrated, measures the radiation they’re exposed to throughout their shift. This data helps ensure their exposure remains within safe limits, protecting their health.

Q 2. Describe different types of dosimeters and their applications.

Various dosimeters exist, each suited for different applications. Some common types include:

- Film Badges: These use photographic film that darkens upon exposure to radiation. They offer a relatively inexpensive and simple method for integrating dose over time but require careful processing and have limited sensitivity.

- Thermoluminescent Dosimeters (TLDs): These use crystals that store energy when exposed to radiation, releasing it as light when heated. TLDs are more sensitive and accurate than film badges and are widely used for personal monitoring.

- Optically Stimulated Luminescence (OSL) Dosimeters: These use materials that release light upon stimulation with laser light after exposure to radiation. They offer high sensitivity and wide dose range, making them ideal for various applications.

- Geiger-Müller (GM) Counters: These detect individual radiation events and provide a count rate, often used for radiation surveys and contamination monitoring, rather than precise dose measurement.

- Ionization Chambers: These directly measure the ionization produced by radiation, providing high accuracy for dose measurements, particularly in high-dose applications like radiation therapy.

Applications range from personal monitoring of radiation workers to environmental monitoring, medical radiation therapy dosimetry, and research in radiation physics and biology.

Q 3. How do you ensure the accuracy and traceability of calibration procedures?

Accuracy and traceability in calibration are paramount. It’s achieved through a multi-step process:

- Using Standardized Sources: Calibration relies on traceable radiation sources, often from national metrology institutes. These sources have their activity precisely determined and documented.

- Calibration Equipment: High-quality, regularly calibrated equipment is essential. This could include electrometers, pulse counters, and other instruments used to measure the dosimeter’s response.

- Environmental Control: Factors like temperature and humidity can affect dosimeter response, hence the importance of controlled calibration environments.

- Documentation: Comprehensive records, including calibration certificates, equipment details, and measurement uncertainties, are crucial for traceability and compliance with regulatory requirements.

- Regular Calibration: Dosimeters require periodic calibration to ensure their response remains accurate. The frequency depends on the type of dosimeter and its usage.

By linking the calibration process to national standards through a chain of calibrations, we ensure that dosimeter readings are meaningful and comparable across different laboratories and institutions.
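Because a source certificate states the activity on a reference date, the value used on the day of calibration has to be decay-corrected. The sketch below shows one way to do that; the function name and the half-life table are illustrative assumptions, and the half-lives are approximate values that should be confirmed against the source certificate.

```python
from datetime import date

# Approximate half-lives in years (assumed values for illustration;
# use the figure stated on the source certificate in practice).
HALF_LIFE_YEARS = {"Cs-137": 30.1, "Co-60": 5.27}


def decayed_activity(a0_bq, nuclide, reference_date, measurement_date):
    """Return the activity on measurement_date, given a0_bq on reference_date."""
    elapsed_years = (measurement_date - reference_date).days / 365.25
    # Exponential decay written in terms of the half-life.
    return a0_bq * 0.5 ** (elapsed_years / HALF_LIFE_YEARS[nuclide])


# Hypothetical 3.7 MBq Cs-137 source certified on 1 Jan 2015, used on 1 Jan 2025.
activity_now = decayed_activity(3.7e6, "Cs-137", date(2015, 1, 1), date(2025, 1, 1))
```

After ten years, roughly a fifth of a Cs-137 source's activity has decayed away, which is far larger than a typical calibration uncertainty, so skipping this step would dominate the error budget.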

Q 4. What are the common sources of error in dosimetry measurements?

Several factors can introduce errors in dosimetry measurements:

- Dosimeter-Specific Errors: Energy dependence (different responses to different radiation energies), temperature dependence, and fading (loss of signal over time).

- Environmental Factors: Temperature, humidity, pressure, and electromagnetic fields can influence dosimeter response.

- Measurement Geometry: The relative positions of the dosimeter and the radiation source significantly affect the measured dose.

- Radiation Scatter: Scattered radiation can reach the dosimeter from unexpected directions, leading to inaccurate readings.

- Calibration Uncertainty: Even with careful calibration, there’s inherent uncertainty associated with the radiation source’s activity and the calibration equipment.

- Human Error: Incorrect handling, recording, or calculation of dosimetry data can lead to significant errors.

Understanding these sources of error is crucial for proper error analysis and quality assurance in dosimetry.

Q 5. Explain the concept of uncertainty in measurement and its importance in dosimetry.

Uncertainty in measurement quantifies the doubt associated with a measurement result. It acknowledges that no measurement is perfectly accurate, and there’s always a range of possible values within which the true value lies. In dosimetry, uncertainty is crucial because it directly affects the reliability of dose assessments and influences decisions about radiation safety.

For example, if a dosimeter reads 1 mSv with an expanded uncertainty of ±0.1 mSv, the true dose is expected to lie between 0.9 and 1.1 mSv at the stated level of confidence (commonly about 95%, corresponding to a coverage factor of k = 2). This uncertainty must be considered when evaluating compliance with dose limits or assessing potential health risks. Uncertainty estimation involves identifying the individual contributions, including those from the dosimeter itself, calibration, and measurement procedures, and combining them into a single value.

The Guide to the Expression of Uncertainty in Measurement (GUM) provides the framework for estimating and reporting uncertainty, and ISO/IEC 17025 requires accredited laboratories to evaluate and report it, emphasizing transparency and rigorous analysis.
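Combining uncertainty contributions can be sketched as a root-sum-square of independent components, followed by multiplication by a coverage factor. The component names and values below are hypothetical, chosen only to illustrate the arithmetic, and the sketch assumes the components are uncorrelated.

```python
import math


def combined_standard_uncertainty(components):
    """Root-sum-square of independent relative standard uncertainties."""
    return math.sqrt(sum(u ** 2 for u in components.values()))


# Hypothetical relative standard uncertainties (as fractions), for illustration only.
budget = {
    "calibration": 0.03,
    "energy_dependence": 0.04,
    "fading": 0.02,
    "reader": 0.01,
}

u_c = combined_standard_uncertainty(budget)  # combined relative standard uncertainty
U = 2 * u_c                                  # expanded uncertainty, coverage factor k = 2

dose_msv = 1.0
interval_msv = (dose_msv * (1 - U), dose_msv * (1 + U))
```

Because the components add in quadrature, the largest one dominates: halving the smallest contribution here would barely change the result, while halving the energy-dependence term would.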

Q 6. How do you perform a calibration of a Geiger-Müller counter?

Calibrating a Geiger-Müller counter involves determining its efficiency in detecting radiation. This typically involves exposing the GM counter to a known radiation source with a precisely known activity.

Procedure:

- Prepare the GM counter: Ensure it is in good working order and has a stable operating voltage.

- Select a standardized radiation source: Choose a source with a known activity and energy appropriate for the intended use of the GM counter (e.g., a 137Cs source).

- Establish a known geometry: Position the source at a fixed distance from the GM counter’s window to maintain consistent geometry during measurements.

- Measure the count rate: Record the number of counts detected by the GM counter over a specific time interval. Repeat this several times to obtain statistically meaningful data.

- Calculate the efficiency: Compare the measured count rate to the known activity of the radiation source to determine the detector efficiency. This will often involve correcting for background radiation (counts detected in the absence of the source).

- Document the results: Record the date, time, source details, environmental conditions, and calculated efficiency. This will ensure traceability and allow for tracking changes in performance over time.

The calibration establishes a relationship between the measured count rate and the actual radiation activity, allowing for more accurate measurements in subsequent radiation surveys.
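The efficiency calculation in the procedure above can be sketched as follows. This is a minimal illustration that assumes Poisson counting statistics and ignores the uncertainty on the source activity and dead-time losses; the function name, parameters, and numbers are hypothetical.

```python
import math


def gm_efficiency(gross_counts, count_time_s, background_counts, background_time_s,
                  activity_bq, emission_probability=1.0):
    """Return (efficiency, standard uncertainty) for a fixed source geometry."""
    gross_rate = gross_counts / count_time_s
    background_rate = background_counts / background_time_s
    net_rate = gross_rate - background_rate
    # For Poisson counts, the variance of a count rate is counts / time**2.
    net_rate_unc = math.sqrt(gross_counts / count_time_s ** 2
                             + background_counts / background_time_s ** 2)
    emitted_per_s = activity_bq * emission_probability
    return net_rate / emitted_per_s, net_rate_unc / emitted_per_s


# Hypothetical run: 12600 counts in 60 s with the source, 600 counts in 600 s without,
# 10 kBq source, and an assumed emission probability of 0.85 per decay.
efficiency, efficiency_unc = gm_efficiency(12600, 60, 600, 600, 10_000, 0.85)
```

The result is an overall efficiency for this particular source-to-detector geometry, which is why the procedure insists on keeping the geometry fixed between calibration and use.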

Q 7. What are the regulatory requirements for dosimetry and calibration in your field?

Regulatory requirements for dosimetry and calibration vary depending on location (country, state, etc.) and the specific application. However, some general principles apply:

- Compliance with national and international standards: Many countries have regulatory bodies overseeing radiation safety. Calibration laboratories often need to be accredited to standards like ISO/IEC 17025 to demonstrate competence.

- Personal Monitoring: Radiation workers in regulated industries must have their radiation exposure monitored using calibrated dosimeters. Dose limits are typically defined by regulatory bodies, such as the NRC (Nuclear Regulatory Commission) in the USA or similar organizations in other countries.

- Equipment Calibration: Instruments used for radiation measurement, including dosimeters and survey meters, must be calibrated regularly to maintain accuracy. Calibration intervals are often specified by regulatory bodies or manufacturers.

- Record Keeping: Detailed records of calibration procedures, results, and uncertainties are required for auditing purposes.

- Quality Assurance: Quality assurance programs are implemented to ensure the accuracy and reliability of dosimetry measurements, often involving regular audits and intercomparisons with other laboratories.

Ignorance of these regulations can have serious consequences, from invalidating results to compromising worker safety.

Q 8. Describe the process of verifying the accuracy of a radiation survey meter.

Verifying the accuracy of a radiation survey meter is crucial for ensuring reliable radiation measurements. This process, often called calibration, involves comparing the meter’s readings to a known, traceable standard. It’s like checking a kitchen scale against certified weights to make sure it’s measuring accurately.

The process typically involves:

- Source Selection: Choosing a calibrated radiation source emitting the type and energy range relevant to the meter’s intended use. This might be a gamma source like Cesium-137 or Cobalt-60, or a beta source depending on the application.

- Controlled Environment: Performing the calibration in a controlled environment, minimizing background radiation and ensuring stable conditions.

- Measurement Comparison: Exposing the survey meter and the standard instrument to the radiation source simultaneously at various intensities. The readings from both are compared and any deviation is documented. We use various reference sources from accredited standards bodies.

- Calibration Factor Determination: Based on the comparison, a calibration factor is determined. This factor adjusts future readings from the survey meter to account for any discrepancies. This factor might be a simple multiplicative constant or could involve a more complex correction based on energy.

- Documentation: Meticulous record-keeping is essential. This includes the date, time, source used, environmental conditions, and the calculated calibration factor. This forms part of the instrument’s traceability to national standards.

Failure to regularly calibrate survey meters can lead to inaccurate measurements, potentially exposing personnel to unsafe radiation levels or causing unnecessary shutdowns due to false-positive readings.
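For the simple multiplicative case, the calibration factor can be sketched as the mean ratio of the reference value to the meter reading across the calibration points. The function names and the readings below are hypothetical, and a real procedure may use per-range or energy-dependent factors instead.

```python
from statistics import mean


def calibration_factor(reference_values, meter_readings):
    """Mean ratio of reference value to meter reading over paired calibration points."""
    if not reference_values or len(reference_values) != len(meter_readings):
        raise ValueError("reference values and readings must be non-empty and paired")
    ratios = [ref / reading for ref, reading in zip(reference_values, meter_readings)]
    return mean(ratios)


def corrected_reading(raw_reading, factor):
    """Apply the calibration factor to a later field reading."""
    return raw_reading * factor


# Hypothetical calibration points in microsieverts per hour.
reference = [10.0, 50.0, 100.0]
readings = [9.6, 48.5, 96.0]
factor = calibration_factor(reference, readings)
```

If the individual ratios differ noticeably from one intensity to the next, a single constant is a poor model and the more complex correction mentioned above is the safer choice.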

Q 9. Explain the difference between direct and indirect dosimetry methods.

Direct and indirect dosimetry methods differ in how they measure radiation dose. Direct methods directly measure the energy deposited in a material (like human tissue), while indirect methods infer the dose from a related effect.

Direct Dosimetry: This involves measuring a quantity that follows directly from the energy deposited in the detector. The standard example is an ionization chamber, which collects the charge produced when radiation ionizes a gas. The collected charge is directly proportional to the energy absorbed in that gas. Think of it as directly weighing the quantity of absorbed radiation.

Indirect Dosimetry: In this method, we measure a secondary effect of the irradiation and then use an established calibration relationship to calculate the dose itself. For instance, a thermoluminescent dosimeter (TLD) is read out by measuring the light it emits when heated after exposure, and that light intensity is converted to dose through calibration. Photographic film dosimetry, now largely historical, measures the darkening of the film, which increases with dose and is converted using a calibration curve. Another example is chemical dosimetry, which measures the change in a specific chemical solution after exposure to radiation.

The choice between direct and indirect methods depends on factors such as the type and energy of radiation, the required accuracy, and the cost and practicality of the measurement.

Q 10. How do you handle and report dosimetry results?

Handling and reporting dosimetry results requires precision and adherence to strict protocols. This involves several key steps:

- Data Acquisition: Carefully collect data from dosimeters, ensuring accurate readings and recording relevant metadata (e.g., individual identification, date, time, location). This data must be handled with the utmost care to avoid errors.

- Data Processing: This includes corrections for background radiation and any calibration factors. Software programs are typically used to automate this process and maintain traceability to national standards.

- Dose Calculation: Based on the processed data, the absorbed dose and equivalent dose (or effective dose) are calculated, adhering to established standards and using appropriate conversion factors.

- Report Generation: A comprehensive report is prepared, including all relevant information (e.g., individual details, measured doses, measurement uncertainties, and any relevant comments). This report must be clear, concise, and easy to understand.

- Quality Assurance: A thorough quality assurance process is crucial to ensure the accuracy and reliability of the results. This might involve internal audits and regular intercomparisons with other dosimetry laboratories.

- Record Keeping: All data and reports are stored securely, in compliance with regulatory requirements, and readily accessible for future reference or audits.

Inaccurate handling and reporting of dosimetry results can have serious consequences, impacting personnel safety and potentially leading to legal issues. Therefore, strict adherence to established guidelines and procedures is non-negotiable.

Q 11. Describe the importance of quality control in dosimetry and calibration.

Quality control (QC) in dosimetry and calibration is paramount to ensuring accurate and reliable radiation measurements. Think of it as the backbone of a reliable dosimetry program, ensuring the integrity of every measurement.

Key aspects of QC include:

- Regular Calibration: Dosimeters and survey meters must be regularly calibrated against traceable standards to ensure accuracy. The frequency of calibration depends on the type of instrument and its usage. This is analogous to regular servicing of a car to maintain performance.

- Instrument Checks: Routine checks of instruments (e.g., linearity checks, energy dependence checks) verify their proper operation and detect potential issues before they affect measurements. This can include pre-use checks before each measuring procedure.

- Traceability: Maintaining a complete chain of traceability to national or international standards ensures comparability and reliability of measurements. This establishes a clear line of evidence demonstrating the accuracy of our measurements.

- Proper Handling and Storage: Dosimeters must be handled and stored correctly to avoid damage or degradation that could compromise measurement accuracy.

- Personnel Training: Proper training of personnel is critical in ensuring competent operation, accurate data acquisition, and data analysis.

- Quality Assurance Audits: Regular internal and external audits provide independent verification of the QC program’s effectiveness.

Neglecting quality control in dosimetry and calibration can lead to inaccurate dose assessments, potentially exposing personnel to unnecessary radiation risks or compromising the safety of radiation facilities.

Q 12. What are the different types of radiation and their effects on human tissue?

Radiation encompasses various types, each with different interactions with human tissue and varying levels of potential harm. The effects depend on factors like radiation type, energy, dose, and exposure time.

Types of Radiation and their Effects:

- Alpha particles: Heavily ionizing but low penetrating power. Primarily a hazard if ingested or inhaled. Can cause severe damage to localized tissue.

- Beta particles: Moderately ionizing and more penetrating than alpha particles. Can cause skin burns and damage to internal organs depending on energy and level of exposure.

- Gamma rays and X-rays: Electromagnetic radiation, highly penetrating. Can damage cells throughout the body, potentially causing long-term health effects like cancer.

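- Neutrons: Uncharged particles with high penetration power that interact directly with atomic nuclei. In tissue they transfer energy mainly through collisions with hydrogen nuclei, producing densely ionizing recoil protons. They can also induce radioactivity in materials.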

The severity of effects depends on the absorbed dose. High doses can lead to acute radiation sickness, while lower doses increase the risk of long-term effects such as cancer.

Q 13. Explain the concept of effective dose and equivalent dose.

Effective dose and equivalent dose are both important quantities in radiation protection, providing different but related perspectives on the biological effects of radiation exposure.

Equivalent Dose (HT): Accounts for the different biological effectiveness of various types of radiation. It’s calculated by multiplying the absorbed dose (in Gray, Gy) by a radiation weighting factor (wR) specific to the type of radiation:

HT = D × wR

The weighting factor reflects how much more damaging a type of radiation is relative to photons (X-rays and gamma rays), for which wR = 1. Equivalent dose is expressed in Sievert (Sv).

Effective Dose (E): Represents the overall health risk from radiation exposure to the entire body, considering the varying sensitivity of different organs and tissues. It is calculated by summing the weighted equivalent doses to individual organs, using tissue weighting factors (wT) that reflect each organ’s relative sensitivity to radiation-induced harm:

E = Σ (wT × HT)

In essence, equivalent dose addresses the differing biological effectiveness of various radiations, while effective dose integrates the organ-specific sensitivity to radiation damage to quantify the overall health risk.
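The two quantities can be sketched in a few lines. The weighting factors below are taken from ICRP Publication 103, but only a subset of tissues is included for brevity, so the result is a partial sum rather than a complete effective dose. The function names and the organ doses are hypothetical.

```python
# Radiation weighting factors (ICRP Publication 103 values for these radiation types).
W_R = {"photon": 1.0, "electron": 1.0, "proton": 2.0, "alpha": 20.0}

# Tissue weighting factors: a subset only. A full calculation covers all tissues,
# whose factors sum to 1.
W_T = {"lung": 0.12, "stomach": 0.12, "thyroid": 0.04, "skin": 0.01}


def equivalent_dose_sv(absorbed_doses_gy):
    """Equivalent dose to one tissue: sum of w_R * D over radiation types."""
    return sum(W_R[radiation] * dose for radiation, dose in absorbed_doses_gy.items())


def effective_dose_sv(organ_doses_gy):
    """Effective dose contribution: sum of w_T * H_T over the listed tissues."""
    return sum(W_T[organ] * equivalent_dose_sv(doses)
               for organ, doses in organ_doses_gy.items())


# Hypothetical absorbed doses in gray, per organ and radiation type.
organ_doses = {
    "lung": {"photon": 0.002, "alpha": 0.0001},
    "thyroid": {"photon": 0.001},
}
lung_equivalent = equivalent_dose_sv(organ_doses["lung"])
effective = effective_dose_sv(organ_doses)
```

Note how the small alpha dose to the lung contributes as much equivalent dose as a photon dose twenty times larger, which is exactly what the radiation weighting factor is meant to capture.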

Q 14. How do you calculate the absorbed dose from a radiation source?

Calculating the absorbed dose from a radiation source involves several steps and relies on several factors.

The basic formula for the absorbed dose (D) from a point source is:

D = (Γ × A × t) / r²

Where:

- D is the absorbed dose (in Gray, Gy).

- A is the activity of the source (in Becquerels, Bq, or Curies, Ci, matching the units of Γ).

- t is the exposure time (in the time unit used by Γ, typically hours).

- Γ is the gamma constant of the source (for example in Gy·m²/(Ci·h), specific to the radionuclide). This constant represents the dose rate at unit distance from a point source of unit activity, so the source geometry is already built into it and no additional 4π factor is needed.

- r is the distance from the source (in meters, m).

This formula applies to an unshielded point source with negligible attenuation and scatter. In reality, we must often account for factors such as shielding, scattering, and the geometry of the source and the irradiated material. More sophisticated calculations might involve Monte Carlo simulations for complex geometries or heterogeneous media.

For example, if you have a 100 mCi (0.1 Ci) Cs-137 source with a gamma constant of approximately 0.003 Gy·m²/(Ci·h), and someone stands 1 meter away for 1 hour, the calculation would be:

D = (0.003 Gy·m²/(Ci·h) × 0.1 Ci × 1 h) / (1 m)² ≈ 0.0003 Gy (0.3 mGy)

Remember to always use consistent units throughout your calculation. This simple example doesn’t account for scattering and attenuation; in practice, these are essential factors to consider for accurate absorbed dose calculations.
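A point-source estimate of this kind can be sketched as below. The sketch assumes the gamma constant already expresses the dose rate at unit distance per unit activity, so only the inverse-square distance term is applied. The function name is hypothetical, and the gamma constant of about 0.003 Gy·m²/(Ci·h) is an approximate air-kerma figure for Cs-137 that should be checked against reference data.

```python
def point_source_dose_gy(activity_ci, gamma_constant, distance_m, time_h):
    """Dose in gray from an unshielded point source.

    gamma_constant must be in Gy*m^2/(Ci*h) so that the units cancel.
    Scatter, attenuation, and shielding are ignored.
    """
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return gamma_constant * activity_ci * time_h / distance_m ** 2


GAMMA_CS137 = 0.003  # Gy*m^2/(Ci*h), approximate value assumed for illustration

dose_1m = point_source_dose_gy(0.1, GAMMA_CS137, 1.0, 1.0)  # 100 mCi, 1 m, 1 h
dose_2m = point_source_dose_gy(0.1, GAMMA_CS137, 2.0, 1.0)  # same source, 2 m
```

Keeping the units in the docstring is a deliberate choice: most mistakes in this calculation are unit mismatches between the activity, the time, and the gamma constant rather than arithmetic errors.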

Q 15. What are the safety precautions you would take when handling radioactive materials?

Handling radioactive materials demands rigorous adherence to safety protocols. The overarching principle is ALARA – As Low As Reasonably Achievable. This means minimizing exposure time, maximizing distance from the source, and using shielding whenever possible.

- Time Minimization: Plan procedures meticulously to reduce the time spent near the source. For instance, rehearsing a procedure before handling radioactive materials helps ensure efficiency and reduces overall exposure.

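- Distance Maximization: For a point source, radiation intensity falls off with the square of the distance (the inverse-square law), so doubling the distance cuts the dose rate to one quarter. Always work at the maximum practical distance from the source. Using remote handling tools is vital when dealing with high-activity materials.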

- Shielding: Appropriate shielding materials, such as lead, concrete, or depleted uranium, should be used to attenuate radiation. The thickness and type of shielding depend on the energy and type of radiation.

- Personal Protective Equipment (PPE): This includes lab coats, gloves, and specialized respirators depending on the material’s form (e.g., solid, liquid, gas). The choice of PPE is crucial for preventing contamination.

- Monitoring: Regular monitoring of radiation levels using instruments like Geiger counters is essential to ensure safety and compliance with regulations. This allows immediate detection of any unexpected increase in radiation.

- Proper Disposal: Radioactive waste must be handled and disposed of according to strict regulations, minimizing risks to the environment and public health.

Remember, working with radioactive materials is inherently risky. A thorough understanding of the material’s properties and rigorous adherence to safety procedures are paramount.
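The interplay of time and distance can be made concrete with a stay-time estimate. The sketch below assumes a point source with inverse-square falloff and no shielding; the function name, dose budget, and dose rates are hypothetical planning figures, not regulatory limits.

```python
def max_stay_time_h(dose_budget_usv, ref_dose_rate_usv_h, ref_distance_m, distance_m):
    """Longest time at distance_m that keeps the dose within dose_budget_usv."""
    # Scale the reference dose rate to the working distance (inverse-square law).
    dose_rate = ref_dose_rate_usv_h * (ref_distance_m / distance_m) ** 2
    return dose_budget_usv / dose_rate


# Hypothetical task: 50 uSv budget, 200 uSv/h measured at 1 m from the source.
stay_at_1m = max_stay_time_h(50.0, 200.0, 1.0, 1.0)
stay_at_2m = max_stay_time_h(50.0, 200.0, 1.0, 2.0)
```

Stepping back from 1 m to 2 m quadruples the available working time for the same dose, which is often a cheaper gain than adding shielding.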

Q 16. Explain the principles of thermoluminescent dosimetry (TLD).

Thermoluminescent dosimetry (TLD) uses the property of certain crystalline materials, like lithium fluoride (LiF), to store energy absorbed from ionizing radiation. This stored energy is released as light (luminescence) when the material is heated. The intensity of the emitted light is proportional to the absorbed radiation dose.

The process involves:

- Exposure: The TLD is exposed to ionizing radiation, causing electrons to be trapped in crystal lattice defects.

- Heating: After exposure, the TLD is heated in a TLD reader. The trapped electrons return to their ground state, releasing energy as visible light.

- Measurement: The intensity of the emitted light is measured by a photomultiplier tube and is directly proportional to the absorbed dose.

TLDs offer several advantages: they are small, durable, relatively insensitive to environmental factors, and have a wide dynamic range. They are frequently used for personnel dosimetry in medical and industrial settings, providing a reliable measure of accumulated radiation exposure.

Q 17. Describe the operation of an ionization chamber.

An ionization chamber is a gas-filled radiation detector that measures ionizing radiation by collecting the ions produced when radiation interacts with the gas inside the chamber.

Here’s how it works:

- Ionization: Incoming radiation ionizes the gas (typically air) inside the chamber, creating ion pairs (positive ions and electrons).

- Charge Collection: An electric field, established by applying a high voltage between electrodes within the chamber, separates these ions. The positive ions move toward the negatively charged electrode, and the electrons move towards the positively charged electrode.

- Current Measurement: The movement of these ions constitutes a small electric current, which is measured by a sensitive electrometer. The magnitude of this current is directly proportional to the radiation intensity.

Ionization chambers are widely used for radiation measurements because of their accuracy and simplicity. They are employed in diverse applications, including radiation protection, environmental monitoring, and calibrating other radiation detection instruments.

A common example is the free-air ionization chamber, used by standards laboratories as a primary standard for low- and medium-energy X-ray beams. In this design, the chamber’s air volume is carefully defined, enabling accurate dose calculations.
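The link between the measured current and the dose rate in the chamber air can be sketched as follows. The sketch uses the mean energy expended per unit charge in dry air (about 33.97 J/C) and a temperature-pressure correction for a vented chamber; it deliberately ignores chamber-specific corrections such as recombination, polarity, and wall effects. The function names and the example chamber are hypothetical.

```python
W_OVER_E_J_PER_C = 33.97   # mean energy per unit charge liberated in dry air (J/C)
AIR_DENSITY_REF = 1.2041   # kg/m^3 for dry air at 20 degC and 101.325 kPa


def temperature_pressure_correction(temp_c, pressure_kpa,
                                    ref_temp_c=20.0, ref_pressure_kpa=101.325):
    """Correction for the air mass in a vented chamber away from reference conditions."""
    return ((273.15 + temp_c) / (273.15 + ref_temp_c)) * (ref_pressure_kpa / pressure_kpa)


def air_dose_rate_gy_per_s(current_a, volume_cm3, temp_c=20.0, pressure_kpa=101.325):
    """Dose rate to the chamber air from the ionization current."""
    mass_ref_kg = AIR_DENSITY_REF * volume_cm3 * 1e-6  # air mass at reference conditions
    k_tp = temperature_pressure_correction(temp_c, pressure_kpa)
    # Charge per unit mass of air, times energy per unit charge, gives J/kg = Gy.
    return current_a * k_tp / mass_ref_kg * W_OVER_E_J_PER_C


# Hypothetical 0.6 cm^3 chamber reading 1 nA at reference conditions.
dose_rate = air_dose_rate_gy_per_s(1e-9, 0.6)
k_warm_day = temperature_pressure_correction(25.0, 100.0)
```

On a warm, low-pressure day the chamber holds less air, so the same radiation field produces less charge; the correction factor above 1 compensates for that.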

Q 18. What are the advantages and disadvantages of different dosimeter types?

Various dosimeter types exist, each with its advantages and disadvantages.

- Film Badges:
  - Advantages: Relatively inexpensive, provides a permanent record.
  - Disadvantages: Energy dependent response, limited dynamic range, requires processing, not suitable for immediate readings.

- Thermoluminescent Dosimeters (TLDs):
  - Advantages: High sensitivity, wide dynamic range, reusable.
  - Disadvantages: Requires a reader, annealing is needed for reuse.

- Optically Stimulated Luminescence Dosimeters (OSLDs):
  - Advantages: High sensitivity, wider dynamic range than TLDs, reusable.
  - Disadvantages: Requires a reader, more expensive than TLDs.

- Electronic Personal Dosimeters (EPDs):
  - Advantages: Provides immediate readings, can measure dose rate, versatile.
  - Disadvantages: More expensive, battery life, can be affected by environmental factors.

The choice of dosimeter depends on the specific application, required accuracy, cost considerations, and need for immediate readout. For example, film badges might suffice for routine monitoring in low-radiation environments, while EPDs are better suited for dynamic situations requiring immediate dose assessment.

Q 19. How do you troubleshoot a malfunctioning dosimeter?

Troubleshooting a malfunctioning dosimeter involves a systematic approach. The first step is to identify the type of dosimeter and review its operational manual.

- Check for Obvious Issues: Begin with the simplest checks: Ensure the device is powered correctly, the battery is functioning, and the sensor is clean and undamaged. For example, a film badge might be damaged, leading to improper readings.

- Calibration Verification: An apparent malfunction may simply be calibration drift or an incorrect calibration. Check the calibration date and certificate, and verify the response against a reference traceable to national or international standards.

- Environmental Factors: Temperature, humidity, and other environmental factors can affect the performance of some dosimeters. Check the operating conditions to ensure they are within specified limits.

- Testing with a Known Source: Expose the dosimeter to a calibrated radiation source with a known dose rate to assess whether the instrument responds appropriately.

- Compare Readings: If possible, compare the readings with another working dosimeter under similar conditions to identify whether the issue is with the instrument or the measurement setup. If multiple dosimeters fail similarly, the issue may be with the radiation source.

- Professional Service: If simple checks and troubleshooting steps fail, contact a qualified technician or the manufacturer for professional repair or replacement.

Accurate dosimetry is paramount in radiation safety. A faulty dosimeter can lead to inaccurate dose assessments and potential health risks.

Q 20. Explain the concept of radiation shielding and its applications.

Radiation shielding aims to reduce the exposure of personnel and equipment to ionizing radiation. It works by absorbing or scattering radiation, reducing its intensity. The effectiveness of shielding depends on the type and energy of the radiation, the shielding material, and its thickness.

Common shielding materials include:

- Lead: Highly effective against gamma rays and X-rays.

- Concrete: Cost-effective for gamma and neutron shielding, especially at higher thicknesses.

- Water: Effective for neutron shielding.

- Depleted Uranium: Excellent shielding for gamma rays, used in specialized applications.

Applications of radiation shielding are wide-ranging, including:

- Nuclear power plants: To protect workers from radiation exposure.

- Medical facilities: To shield patients and staff during radiotherapy and diagnostic imaging procedures.

- Industrial radiography: To protect personnel during industrial inspection using radioactive sources.

- Spacecraft: To protect astronauts from cosmic radiation.

The design of a radiation shield is a complex process that requires careful consideration of the radiation source, the type and energy of the radiation, the required level of protection, and the cost considerations.
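The dependence on thickness can be sketched with half-value layers (HVL), the thickness of material that halves the intensity of a narrow beam. This is a simplified model that ignores scatter buildup in thick shields. The function names are hypothetical, and the HVL of about 0.65 cm of lead for Cs-137 gamma rays is an approximate figure used only for illustration.

```python
import math


def transmission(thickness_cm, hvl_cm):
    """Fraction of narrow-beam intensity transmitted through the shield."""
    return 0.5 ** (thickness_cm / hvl_cm)


def thickness_for_reduction(reduction_factor, hvl_cm):
    """Shield thickness needed to reduce intensity by the given factor."""
    return hvl_cm * math.log2(reduction_factor)


HVL_LEAD_CS137_CM = 0.65  # approximate value, assumed for illustration

fraction_through = transmission(3 * HVL_LEAD_CS137_CM, HVL_LEAD_CS137_CM)
tenfold_thickness = thickness_for_reduction(10, HVL_LEAD_CS137_CM)
```

Each additional half-value layer removes half of what is left, so the first few centimetres of shielding deliver most of the benefit and later additions give diminishing returns.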

Q 21. Describe different calibration standards and their applications.

Calibration standards are essential for ensuring the accuracy and traceability of radiation measurements. They provide a reference point against which dosimeters and other radiation detection instruments are calibrated.

Different calibration standards are used depending on the type of radiation and the energy range:

- Primary standards: These are highly accurate standards maintained by national metrology institutes (e.g., NIST in the US). They are based on fundamental physical principles, often involving sophisticated techniques like calorimetry.

- Secondary standards: These are calibrated against primary standards and are used to calibrate other instruments. They are more readily available and often less expensive than primary standards.

- Transfer standards: These are highly stable and accurately calibrated radiation sources used to transport calibration to other facilities.

Examples of calibration standards include:

- Co-60 sources: Commonly used for gamma ray calibrations.

- Cs-137 sources: Another widely used gamma ray source.

- Am-241 sources: Used for low-energy gamma and X-ray calibrations.

The application of calibration standards ensures that radiation measurements are accurate and reliable, supporting safety and regulatory compliance across diverse applications including medical physics, nuclear power, and radiation protection.

Q 22. How do you maintain traceability in calibration procedures?

Maintaining traceability in calibration procedures is crucial for ensuring the accuracy and reliability of our measurements. It’s like having a chain of custody for your measuring instruments, proving their accuracy can be linked back to national or international standards. We achieve this through a documented chain of calibrations.

This starts with calibrating our instruments against a known standard, usually a higher-order standard traceable to a national metrology institute (NMI) like NIST (National Institute of Standards and Technology) in the US or similar organizations worldwide. Each calibration step is meticulously recorded, including the date, equipment used, calibration results, and the certificate of calibration from the higher-order standard. This creates an unbroken chain of traceability, allowing us to confidently assert the accuracy of our measurements.

For example, if we calibrate a dosimeter, we’ll use a calibrated reference dosimeter traceable to a national standard. That reference dosimeter’s calibration certificate will then be referenced in the certificate generated for our working dosimeter, providing a clear path back to the primary standard. This detailed documentation allows for audits, ensures consistency, and facilitates troubleshooting if inaccuracies are detected.
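The documented chain can be pictured as a linked list of certificates, each pointing to the certificate of the standard it was calibrated against. The sketch below is one hypothetical way to model and check that chain; the class, field names, and identifiers are illustrative and do not reflect any particular laboratory system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Certificate:
    cert_id: str
    instrument: str
    issued_by: str
    reference_cert: Optional["Certificate"] = None  # certificate of the standard used
    is_primary: bool = False                         # True for a primary standard


def traceability_chain(cert):
    """Return certificate IDs from the instrument up to the primary standard.

    Raises ValueError if the chain ends before a primary standard is reached.
    """
    chain = []
    current = cert
    while current is not None:
        chain.append(current.cert_id)
        if current.is_primary:
            return chain
        current = current.reference_cert
    raise ValueError(f"chain for {cert.cert_id} is broken: no primary standard reached")


# Hypothetical three-level chain.
primary = Certificate("NMI-001", "primary standard", "national metrology institute",
                      is_primary=True)
reference = Certificate("REF-042", "reference dosimeter", "accredited lab", primary)
working = Certificate("WRK-317", "working dosimeter", "in-house lab", reference)
chain = traceability_chain(working)
```

Running the check during an audit makes a missing or expired link visible immediately, rather than when an inaccurate reading prompts an investigation.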

Q 23. What are the different types of calibration certificates?

Calibration certificates come in various forms, each with specific information depending on the complexity of the calibration and the standard used. Some common types include:

- Certificate of Calibration (CoC): This is the most common type, providing a detailed record of the calibration procedure, including the instrument’s identification, calibration date, results, uncertainties, and the traceability chain. It’s essentially a formal declaration of the instrument’s accuracy.

- Test Report: Similar to a CoC but often more comprehensive, offering more detailed information on the testing process, including methods and data points. It’s typically used for more complex or critical instruments.

- Data Sheet: A simpler document summarizing the key calibration results, often suitable for instruments with simpler calibration requirements. It may not include detailed traceability information.

The type of certificate issued depends on factors like the instrument’s complexity, regulatory requirements, and client needs. For example, a medical dosimeter would typically require a comprehensive CoC with detailed traceability, while a simpler measuring device might only need a data sheet.

Q 24. Explain the importance of regular calibration checks.

Regular calibration checks are vital for ensuring the accuracy and reliability of our measurements. Imagine a scale that gradually drifts out of calibration: its weight readings would become steadily less accurate. Similarly, uncalibrated dosimeters and other measuring instruments can give inaccurate readings, and depending on the application the consequences can be serious.

In dosimetry, inaccurate readings can lead to incorrect radiation doses for patients in radiotherapy, compromised safety in radiation protection, or unreliable measurements in research. The frequency of calibration checks depends on several factors, including the instrument type, its usage frequency, the environment it operates in, and regulatory requirements. Regular calibration is often described as a form of preventive maintenance. Consistent calibration minimizes the risk of errors and ensures compliance with regulatory standards, safeguarding both patient safety and the integrity of our data.
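A simple way to picture routine checks is a due-date test combined with a constancy check against a known source. The sketch below is illustrative only. The function names, the 30-day warning window, and the 5% tolerance are assumptions made for the example; real intervals and tolerances come from the applicable regulations and the laboratory's own procedures.

```python
from datetime import date, timedelta


def calibration_status(last_calibrated, interval_days, today, warning_days=30):
    """Classify an instrument as 'in calibration', 'due soon', or 'overdue'."""
    due = last_calibrated + timedelta(days=interval_days)
    if today > due:
        return "overdue"
    if today >= due - timedelta(days=warning_days):
        return "due soon"
    return "in calibration"


def within_tolerance(reading, expected, tolerance_fraction=0.05):
    """Constancy check: is the check-source reading close enough to the expected value?"""
    return abs(reading - expected) <= tolerance_fraction * abs(expected)


if __name__ == "__main__":
    # Hypothetical survey meter on an annual calibration interval.
    print(calibration_status(date(2024, 1, 1), 365, date(2024, 12, 15)))
    print(within_tolerance(reading=102.0, expected=100.0))
```

A failed constancy check between scheduled calibrations is usually a reason to take the instrument out of service and recalibrate early.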

Q 25. How do you document calibration procedures and results?

We meticulously document all calibration procedures and results using a robust quality management system. This typically involves a combination of electronic and paper-based records.

For each calibration, we generate a detailed report that includes:

- Instrument identification: Serial number, model, and any other relevant identifiers.

- Calibration date and time.

- Calibration procedure: A reference to the specific procedure used and any deviations.

- Calibration results: Numerical data, charts, and graphs displaying the measurements.

- Uncertainties: Quantified uncertainties associated with the measurements.

- Traceability: Details of the standards used and their traceability chain.

- Calibration technician’s signature and credentials.

This information is stored in a secure, auditable database that allows for easy retrieval and analysis. This rigorous documentation ensures the integrity of our calibration data and facilitates traceability in case of any inquiries or audits.
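The report fields listed above map naturally onto a structured record. This is a rough sketch, assuming a hypothetical `CalibrationRecord` class with invented field names and example values. It also assumes the uncertainty components are uncorrelated, so that they can be combined in quadrature and expanded with a coverage factor of k = 2.

```python
import json
import math
from dataclasses import asdict, dataclass


@dataclass
class CalibrationRecord:
    instrument_id: str                     # serial number, model, other identifiers
    calibrated_at: str                     # ISO 8601 date and time
    procedure_ref: str                     # procedure used
    deviations: str                        # any deviations from the procedure
    results: dict                          # numerical calibration results
    uncertainty_components_percent: dict   # standard uncertainties, in percent
    reference_standard_certificate: str    # traceability link
    technician: str

    def expanded_uncertainty_percent(self, coverage_factor=2.0):
        """Root-sum-square of the components (assumed uncorrelated), times k."""
        combined = math.sqrt(
            sum(u ** 2 for u in self.uncertainty_components_percent.values())
        )
        return coverage_factor * combined

    def to_json(self):
        """Serialize the record, including the derived expanded uncertainty."""
        payload = asdict(self)
        payload["expanded_uncertainty_percent"] = self.expanded_uncertainty_percent()
        return json.dumps(payload, indent=2, sort_keys=True)


if __name__ == "__main__":
    record = CalibrationRecord(
        instrument_id="EPD-12345",
        calibrated_at="2024-05-01T10:30:00",
        procedure_ref="CAL-PROC-007",
        deviations="none",
        results={"calibration_factor": 1.02},
        uncertainty_components_percent={"reference_standard": 1.5, "positioning": 0.8},
        reference_standard_certificate="LAB-0100",
        technician="A. Example",
    )
    print(record.to_json())
```

Storing the individual uncertainty components, rather than only the combined figure, keeps the uncertainty budget auditable.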

Q 26. Describe your experience with different types of calibration equipment.

My experience spans a wide range of calibration equipment, including:

- Ionization chambers: These serve as reference standards for measuring radiation exposure (free-air chambers are used as primary standards at national metrology institutes), requiring meticulous calibration procedures and knowledge of radiation physics.

- Dosimeters (thermoluminescent dosimeters (TLDs), optically stimulated luminescence (OSL) dosimeters, electronic personal dosimeters (EPDs)): I’m experienced in calibrating various types of dosimeters used for radiation protection and medical applications, ensuring accurate personal dose monitoring.

- Electrometers: These are essential for measuring the charge collected by ionization chambers and other radiation detectors, needing careful calibration for precise measurements.

- Reference radiation sources: I have experience handling and calibrating various radioactive sources used in radiation calibration laboratories, following strict safety regulations and procedures.

This diverse experience allows me to understand the unique challenges and requirements associated with each instrument, ensuring accurate and reliable calibrations.

Q 27. How do you ensure compliance with relevant safety regulations?

Ensuring compliance with relevant safety regulations is paramount in our work. We adhere strictly to regulations regarding the handling of radioactive materials, including national and international standards such as those set by the IAEA (International Atomic Energy Agency).

This includes:

- Radiation safety training: All personnel undergo regular training on radiation safety principles and practices.

- Protective equipment: We use appropriate personal protective equipment (PPE), such as lead aprons and gloves, and wear personal dosimeters when handling radioactive materials.

- Proper handling procedures: We follow strict procedures for handling, storage, and disposal of radioactive sources and waste materials.

- Regular safety inspections: Our laboratory undergoes regular safety inspections to ensure compliance with all regulations.

- Emergency response plans: We have comprehensive emergency response plans in place to handle any unforeseen incidents.

Our commitment to safety ensures the well-being of our staff and the protection of the environment.

Q 28. Describe a time you had to troubleshoot a calibration issue.

During a routine calibration of a TLD dosimeter, we observed unexpectedly high readings compared to previous calibrations. Initially, we suspected instrument malfunction.

Our troubleshooting involved a systematic approach:

- Review of the calibration procedure: We carefully reviewed the calibration procedure to ensure all steps were followed correctly.

- Instrument inspection: We visually inspected the dosimeter for any damage or contamination.

- Testing with a different reader: We used a different, independently calibrated TLD reader to rule out any issues with our primary reader.

- Verification of the reference source: We verified the stability and accuracy of the reference radiation source.

- Analysis of environmental factors: We considered possible environmental factors that might have affected the readings.

After meticulously checking each step, we discovered a minor procedural error in the annealing process of the TLDs, leading to incomplete erasure of the previous dose. After correcting this error, subsequent calibrations yielded expected results. This experience highlighted the importance of systematic troubleshooting and careful attention to detail in every step of the calibration process.

Key Topics to Learn for Your Dosimetry and Calibration Interview

- Radiation Physics Fundamentals: Understand the interaction of radiation with matter, including different types of radiation and their properties. Be prepared to discuss concepts like half-life, activity, and dose calculations.

- Dosimetry Techniques: Familiarize yourself with various dosimetry methods, such as thermoluminescent dosimetry (TLD), film dosimetry, and ionization chamber dosimetry. Be ready to compare and contrast their strengths and weaknesses in different applications.

- Calibration Procedures and Standards: Master the principles and practices of instrument calibration, including traceability to national or international standards. Understand the importance of calibration certificates and uncertainty analysis.

- Radiation Safety and Regulations: Demonstrate knowledge of relevant safety regulations and protocols for handling radioactive materials and ensuring radiation protection. This includes understanding ALARA principles.

- Quality Control and Assurance: Discuss the importance of quality control measures in dosimetry and calibration, including error analysis and the implementation of quality assurance programs.

- Practical Applications: Be ready to discuss real-world applications of dosimetry and calibration in various fields, such as medical physics, nuclear power, industrial radiography, or environmental monitoring. Prepare examples of how your skills and knowledge can be applied to solve practical problems.

- Troubleshooting and Problem-Solving: Develop your ability to identify and troubleshoot issues related to dosimetry equipment, calibration procedures, or data analysis. Practice explaining your problem-solving approach in a clear and concise manner.

Next Steps

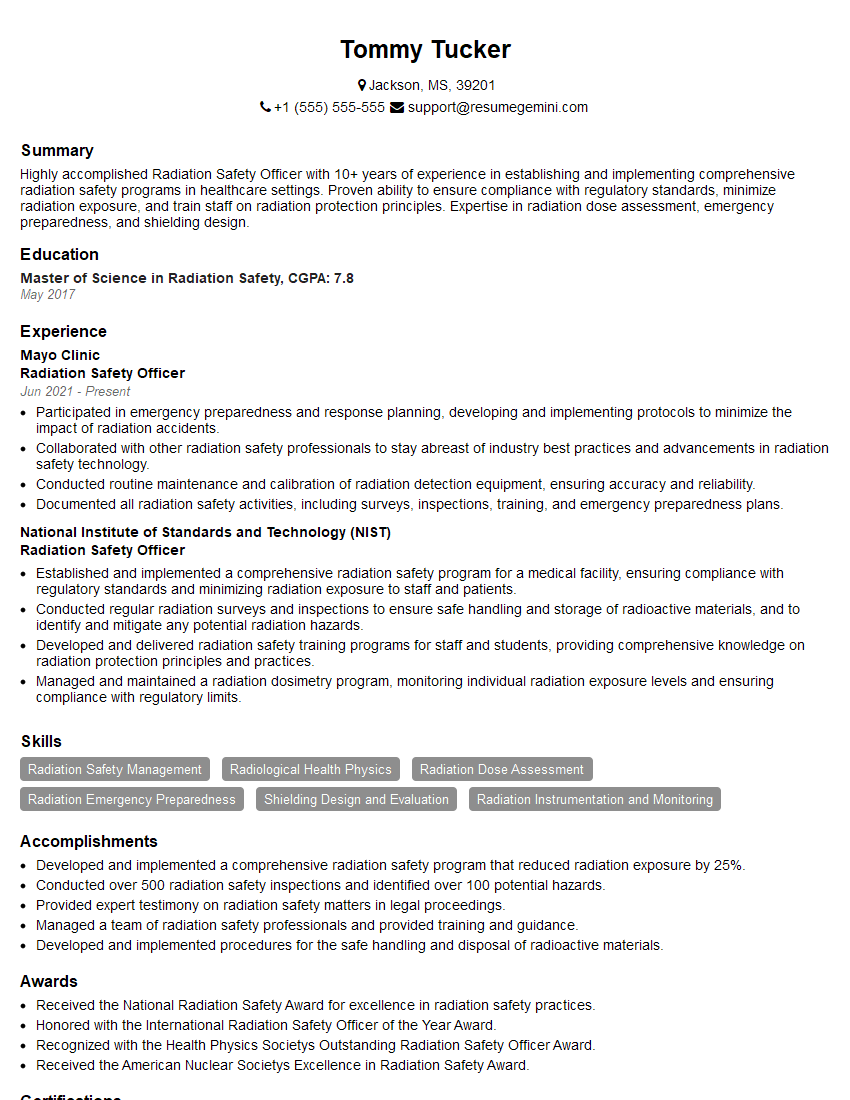

Mastering Dosimetry and Calibration opens doors to exciting and impactful careers in various sectors. A strong understanding of these principles is highly valued by employers, ensuring competitive advantage and career growth opportunities. To maximize your job prospects, it’s crucial to present your skills effectively. An ATS-friendly resume is key to getting your application noticed. Use ResumeGemini to create a professional and impactful resume that highlights your achievements and expertise. ResumeGemini provides examples of resumes tailored to Dosimetry and Calibration, ensuring your application stands out from the crowd. Invest the time to craft a compelling resume – it’s your first impression!
