Interviews are more than just a Q&A session; they’re a chance to prove your worth. This blog post covers essential interview questions about experience with qualitative data analysis software (e.g., NVivo, Atlas.ti), along with expert tips to help you align your answers with what hiring managers are looking for. Start preparing to shine!

Questions Asked in a Qualitative Data Analysis Software (e.g., NVivo, Atlas.ti) Interview

Q 1. What are the key differences between NVivo and Atlas.ti?

NVivo and Atlas.ti are both leading qualitative data analysis software packages, but they differ in emphasis. NVivo is generally considered more user-friendly, particularly for beginners, with an intuitive interface and extensive support resources. It is well suited to large datasets and complex projects and offers powerful features for visualizing relationships between data points. Atlas.ti, on the other hand, is often preferred by researchers who value flexibility and customizable workflows. It places greater emphasis on hands-on coding and analysis, appealing to those who want more direct control over the analytic process. In essence, NVivo prioritizes ease of use and scalability, whereas Atlas.ti prioritizes customization and control.

For example, NVivo’s query tools offer pre-built, wizard-style options (such as text search, word frequency, and matrix coding queries), whereas Atlas.ti encourages building relationships by hand, for instance by linking codes, quotations, and memos in network views. The choice often depends on the specific project requirements and the analyst’s experience level.

Q 2. Describe your experience with coding qualitative data.

Coding qualitative data involves systematically organizing and categorizing textual data to identify patterns, themes, and meaning. My experience encompasses a wide range of coding techniques, from open coding (developing codes inductively from the data) to axial coding (linking codes to develop categories and subcategories) and selective coding (identifying the core category that summarizes the data). I’m proficient in both deductive (theory-driven) and inductive (data-driven) coding approaches, adapting my methodology to the specific research question.

For instance, in a recent project analyzing interview transcripts on customer satisfaction, I initially employed open coding to identify emergent themes. Then, I used axial coding to build relationships between those themes, ultimately refining them into a core category representing the overall customer experience. This process involved constant comparison and refinement of my codes, ensuring they accurately reflected the nuances of the data.

Q 3. How do you manage large datasets in NVivo or Atlas.ti?

Managing large datasets in NVivo or Atlas.ti requires a strategic approach: careful project planning, efficient data import, and effective data management. In NVivo, I frequently use the software’s search and filtering capabilities to locate specific data segments quickly. For instance, combining search terms with Boolean operators lets me narrow results (AND, NOT) or broaden them (OR). Regular data backups are crucial to prevent data loss. I also organize the project with clear, logical folder structures for sources, coded data, and memos.
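The search itself happens inside NVivo or Atlas.ti, but the logic of combining terms is easy to show outside them. The following is a minimal Python sketch, not either program’s API, that applies AND, OR, and NOT conditions to a hypothetical list of transcript segments; the segment IDs, texts, and the `boolean_search` helper are invented for illustration.

```python
# Hypothetical transcript segments; in a real project these live in NVivo or Atlas.ti.
segments = [
    {"id": "P1-03", "text": "The workload is heavy and my manager rarely helps."},
    {"id": "P2-07", "text": "My manager supports flexible hours."},
    {"id": "P3-02", "text": "Workload spikes before deadlines, but pay is fair."},
]


def contains(term, text):
    """Case-insensitive substring match, so 'pay' would also match 'payment'."""
    return term.lower() in text.lower()


def boolean_search(segments, all_of=(), any_of=(), none_of=()):
    """Return IDs of segments that contain every AND term, at least one OR term
    (when OR terms are given), and none of the NOT terms."""
    hits = []
    for seg in segments:
        text = seg["text"]
        if not all(contains(t, text) for t in all_of):
            continue
        if any_of and not any(contains(t, text) for t in any_of):
            continue
        if any(contains(t, text) for t in none_of):
            continue
        hits.append(seg["id"])
    return hits


print(boolean_search(segments, all_of=["workload", "manager"]))        # ['P1-03']
print(boolean_search(segments, any_of=["pay", "flexible"]))            # ['P2-07', 'P3-02']
print(boolean_search(segments, all_of=["workload"], none_of=["pay"]))  # ['P1-03']
```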

Atlas.ti offers similar tools, although their implementation varies slightly. In both programs, efficient coding strategies, such as hierarchical coding schemes and regular memoing, are essential for navigating vast datasets. It’s also beneficial to break a large project down into smaller, more manageable components.

Q 4. Explain the process of creating and using a codebook in your preferred software.

A codebook is a critical tool in qualitative data analysis. It’s essentially a document that defines and explains each code used in the project. In NVivo, I typically create a codebook as a separate Word document or spreadsheet that I link to my project. I meticulously document each code, including its definition, examples from the data, and any relevant theoretical considerations. This ensures consistency and transparency throughout the coding process.

Creating this detailed codebook beforehand (deductive approach) or iteratively (inductive approach) allows for effective collaboration among researchers and facilitates the interpretation of findings. Within NVivo, I use the ‘nodes’ to represent my codes, and the node description acts as the definition in the codebook. This makes it easy to manage the codes and their definitions throughout the analysis process. In Atlas.ti, the process is similar, often utilizing the codebook function built into the software itself.
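Whether the codebook lives in node descriptions or a separate spreadsheet, it helps to keep it in a structured form. Here is a minimal sketch, assuming a hypothetical set of fields (name, definition, inclusion and exclusion criteria, example quote) and exporting to CSV with Python’s standard library; it does not reproduce the codebook export format of NVivo or Atlas.ti.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class Code:
    """One codebook entry; the fields are an assumed, commonly used layout."""
    name: str
    definition: str
    include_when: str
    exclude_when: str
    example: str


codebook = [
    Code("workload", "References to the volume or pace of work.",
         "Participant describes the amount of tasks or time pressure.",
         "Complaints about pay with no mention of work volume.",
         '"I never get through my inbox."'),
    Code("management_support", "Perceived help or guidance from managers.",
         "Manager actions that help or hinder the participant.",
         "Support that comes from peers rather than managers.",
         '"My manager checks in every week."'),
]


def codebook_to_csv(codes):
    """Serialize the codebook as CSV text, one row per code."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(Code)])
    writer.writeheader()
    for code in codes:
        writer.writerow(asdict(code))
    return buffer.getvalue()


print(codebook_to_csv(codebook))
```

Keeping definitions and inclusion/exclusion rules side by side makes it easier for a second coder to apply each code the same way.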

Q 5. How do you ensure the reliability and validity of your qualitative data analysis?

Ensuring the reliability and validity of qualitative data analysis is paramount. Reliability refers to the consistency of the findings: would another researcher reach similar conclusions from the same data? Validity refers to the accuracy and trustworthiness of the interpretations. To enhance reliability, I document my coding decisions meticulously, use a well-defined codebook, and adhere to established coding guidelines. Inter-coder reliability checks, where multiple researchers independently code a subset of the data and compare their results, are also invaluable. Statistics such as Cohen’s Kappa quantify the level of agreement beyond what chance alone would produce.
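As a minimal sketch of how Cohen’s Kappa is calculated (NVivo’s coding comparison query can report it directly), the function below assumes two coders each assigned exactly one label per segment; the example labels are hypothetical. Kappa compares observed agreement p_o with the agreement p_e expected by chance from each coder’s label frequencies: kappa = (p_o - p_e) / (1 - p_e).

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who each assigned one label per segment."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same, non-empty set of segments")
    n = len(coder_a)
    # p_o: proportion of segments where the coders agree
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # p_e: agreement expected by chance from each coder's label frequencies
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1:
        # Both coders used one identical label throughout; kappa is undefined, treated as 1 here.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical: 1 = code "workload" applied to the segment, 0 = not applied.
coder_a = [1, 1, 1, 0, 0, 1, 0, 1, 0, 0]
coder_b = [1, 1, 0, 0, 0, 1, 0, 1, 1, 0]
print(round(cohens_kappa(coder_a, coder_b), 2))  # 0.6
```

Here the coders agree on 80% of segments, but because half of each coder’s labels are 1, chance alone would produce 50% agreement, so kappa comes out at 0.6. That gap is why kappa is usually reported instead of raw percent agreement.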

For validity, I employ strategies such as member checking (returning findings to participants for feedback) and triangulation (using multiple data sources to corroborate findings). I also carefully consider potential biases in my data collection and analysis, employing reflexivity – critically reflecting on my own assumptions and influences.

Q 6. What are some common challenges in qualitative data analysis, and how have you overcome them?

Common challenges in qualitative data analysis include managing large datasets, ensuring inter-coder reliability, and dealing with ambiguous or contradictory data. I’ve addressed these challenges through several strategies. For instance, to manage large datasets, I’ve employed efficient coding strategies, regular backups, and effective search and filtering techniques (as previously mentioned).

To improve inter-coder reliability, I’ve run rigorous training sessions for coders, used detailed codebooks, and held regular meetings to discuss coding decisions and resolve disagreements. When encountering ambiguous data, I’ve drawn on theoretical frameworks, spent extended time with the data, and meticulously documented my interpretations. Software like NVivo or Atlas.ti also streamlines data management, making collaboration and reliability checks easier.

Q 7. Describe your experience with qualitative data visualization techniques.

Qualitative data visualization is essential for communicating complex findings effectively. In NVivo and Atlas.ti, I use techniques such as word clouds to highlight frequently occurring terms, network diagrams to show relationships between codes, and matrices to display relationships between codes and variables. These visualizations help me identify patterns and themes quickly and make them easier to understand for both me and my audience.

For instance, a network diagram in NVivo might illustrate how different themes identified in interview data are interconnected, making complex connections between ideas much easier to see. Similarly, I’ve used word clouds to highlight frequently used terms across large corpora of textual data, providing a quick, impactful visual summary.
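Word clouds are built from word-frequency counts (in NVivo, for example, via a word frequency query). A minimal Python sketch of that counting step, assuming a small hypothetical stop-word list and three invented survey responses:

```python
import re
from collections import Counter

# A small, illustrative stop-word list; real projects usually use a fuller one.
STOP_WORDS = {"the", "a", "and", "i", "to", "of", "is", "it", "my", "was", "but"}


def word_frequencies(texts, top_n=5):
    """Count non-stop-words across texts; a word cloud scales each word by this count."""
    counts = Counter()
    for text in texts:
        words = re.findall(r"[a-z']+", text.lower())
        counts.update(w for w in words if w not in STOP_WORDS)
    return counts.most_common(top_n)


responses = [
    "The support team was slow and the support portal is confusing.",
    "Delivery was fast but support was slow.",
    "I like the product, but support is slow to reply.",
]
print(word_frequencies(responses, top_n=2))  # [('support', 4), ('slow', 3)]
```

Frequency alone says nothing about meaning, which is why a word cloud works best as a quick summary alongside coded analysis rather than as a finding in itself.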

Q 8. How do you handle conflicting or ambiguous data in your analysis?

Conflicting or ambiguous data is inevitable in qualitative research. Think of it like assembling a jigsaw puzzle with some missing pieces and others that seem to fit in multiple places. My approach involves a multi-step process. First, I meticulously document the conflict or ambiguity itself, noting the specific data points and their context within the interview or document. This detailed record is crucial for maintaining transparency and auditability. Next, I explore alternative interpretations, considering different perspectives and theoretical frameworks. For instance, a seemingly contradictory statement might reveal a nuanced understanding once contextualized within the participant’s overall narrative or cultural background. I might use NVivo’s coding features to create separate codes representing these different interpretations, allowing for a comparative analysis later. Finally, I engage in careful reflection on the limitations of the data itself. I acknowledge the ambiguity and discuss it in my analysis, demonstrating transparency and intellectual honesty.

For example, if one interviewee strongly praises a product feature while another criticizes the same feature, instead of dismissing one viewpoint, I would explore the reasons for the discrepancy. Maybe the first participant used the feature differently, or their needs are significantly different from the second participant’s. This approach allows for a richer understanding than simply choosing one ‘correct’ interpretation.

Q 9. How do you integrate qualitative data with quantitative data (if applicable)?

Integrating qualitative and quantitative data enriches the understanding of a research problem. Imagine having a detailed map (qualitative data – rich descriptions and context) and statistical summaries of population distribution (quantitative data – numbers and frequencies). Combining these offers a far more comprehensive view than either alone. I typically employ a mixed-methods approach, using quantitative data to identify patterns and trends, which then inform the selection of qualitative data for in-depth exploration. For example, a quantitative survey might reveal that customer satisfaction is lower among a particular demographic group. I could then conduct qualitative interviews with individuals from that group to understand the reasons behind this lower satisfaction, gaining deeper insights.

In terms of software, I often use NVivo’s ability to import external data sources, such as spreadsheet files containing quantitative results. This lets me link specific quantitative findings (e.g., average satisfaction scores) to relevant qualitative data (e.g., interview excerpts discussing those satisfaction levels). This integration enhances the analysis and allows for a more compelling narrative in the final report. I might visually represent this through charts and tables that illustrate relationships between themes identified through qualitative analysis and corresponding quantitative data.
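Inside NVivo, this kind of comparison is typically done by attaching the scores to participant cases as attributes and running a matrix coding query. The plain-Python sketch below only illustrates the underlying cross-tabulation; the scores, codes, excerpts, and the low/high cut-off are all hypothetical.

```python
from collections import defaultdict

# Hypothetical survey scores (1-5) keyed by participant ID, e.g. from a spreadsheet.
satisfaction = {"P1": 2, "P2": 5, "P3": 1, "P4": 4}

# Hypothetical coded interview excerpts: (participant, code, excerpt).
excerpts = [
    ("P1", "slow_support", "I waited a week for a reply."),
    ("P2", "easy_onboarding", "Setup took ten minutes."),
    ("P3", "slow_support", "Nobody answered my ticket."),
    ("P3", "pricing", "It costs more than it is worth."),
    ("P4", "easy_onboarding", "The tutorial covered everything."),
]


def band(score):
    """Collapse a 1-5 score into the two groups compared in the report (assumed cut-off)."""
    return "low (1-2)" if score <= 2 else "high (3-5)"


def codes_by_band(scores, excerpts):
    """Count how often each code appears in each satisfaction band,
    similar in spirit to a code-by-attribute matrix."""
    table = defaultdict(lambda: defaultdict(int))
    for participant, code, _text in excerpts:
        table[band(scores[participant])][code] += 1
    return {b: dict(codes) for b, codes in table.items()}


print(codes_by_band(satisfaction, excerpts))
```

In this invented data, complaints about slow support cluster in the low-satisfaction band, which is the kind of link between numbers and narratives that the final report can then illustrate with quotes.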

Q 10. Explain your experience with thematic analysis.

Thematic analysis is a widely used qualitative data analysis method, ideal for identifying recurring patterns and themes within a dataset. Think of it like finding the common threads in a tapestry woven from multiple stories. My process usually follows these steps:

1. Familiarization: I immerse myself in the data, repeatedly reading transcripts and documents.

2. Code Generation: I identify initial codes that capture key ideas and concepts, often using NVivo or Atlas.ti to assist. I might start with open coding, generating codes directly from the data.

3. Theme Development: I group related codes into potential themes, organizing similar ideas and concepts in a logical and meaningful way.

4. Reviewing Themes: I examine each theme for coherence and consistency, refining definitions and ensuring that each theme accurately reflects the data.

5. Defining and Naming Themes: I clearly define each theme and assign a concise, descriptive name.

6. Writing Up: I write a report that describes the themes and their relationships, providing evidence from the data to support my findings.

The entire process is iterative; I continually revisit earlier steps as I uncover new insights.

For example, in a study on employee satisfaction, themes like ‘workload,’ ‘management support,’ and ‘compensation’ might emerge. Each theme would be supported by specific quotes and data points from interviews or documents. The software’s query and visualization tools help illustrate the relationship between these themes and even allow a visual representation of the data flow from code to theme.
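To show how code generation, theme development, and theme review connect, here is a minimal sketch that maps hypothetical initial codes to candidate themes and lists which participants support each theme. The code names, theme assignments, and the `theme_support` helper are illustrative assumptions, not output from NVivo or Atlas.ti.

```python
# Hypothetical mapping from initial codes to candidate themes.
code_to_theme = {
    "too_many_tasks": "workload",
    "overtime": "workload",
    "manager_feedback": "management support",
    "manager_unavailable": "management support",
    "salary": "compensation",
}

# Hypothetical coded excerpts: (participant, code).
coded = [
    ("P1", "too_many_tasks"), ("P1", "manager_unavailable"),
    ("P2", "overtime"), ("P2", "salary"),
    ("P3", "too_many_tasks"), ("P3", "manager_feedback"),
]


def theme_support(coded, code_to_theme):
    """For each theme, list the participants whose excerpts support it.
    Codes not yet assigned to a theme are grouped under 'unassigned'."""
    support = {}
    for participant, code in coded:
        theme = code_to_theme.get(code, "unassigned")
        support.setdefault(theme, set()).add(participant)
    return {theme: sorted(people) for theme, people in support.items()}


print(theme_support(coded, code_to_theme))
```

During review, a theme supported by only one participant is a prompt to check whether it is a genuine pattern or should be merged, split, or dropped.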

Q 11. How do you ensure the ethical considerations of qualitative data analysis are met?

Ethical considerations are paramount in qualitative data analysis. Maintaining participant confidentiality is my top priority. This starts before data collection with informed consent, ensuring participants understand how their data will be used and protected. In the analysis phase, I employ several strategies: anonymizing or pseudonymizing participants (replacing names and other identifiers), securing data storage with password-protected files and encryption, adhering strictly to data protection regulations (GDPR, HIPAA, etc.), and limiting data access to authorized personnel only. Furthermore, I take care that my analysis does not misrepresent participants’ views. I maintain reflexivity, acknowledging my own potential biases and how they might influence the interpretation of the data. Finally, I adhere to research integrity standards; my conclusions are based solely on the evidence from the data and are not influenced by external pressures.

For example, I might replace participant names with codes like ‘P1’ and ‘P2’ in my transcripts and analysis documents. I’d also keep a separate, secure document linking these codes to actual identities, accessible only to me or others specifically authorized by the IRB. This keeps participants’ privacy and confidentiality protected throughout the entire research process.
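A minimal sketch of the pseudonymization step described above, assuming the participant names are already known and listed; the `pseudonymize` helper is hypothetical. It returns the cleaned transcripts plus the linking key, which would be stored separately and securely. It only catches the listed names, so other identifiers (places, employers, nicknames) still need a manual pass.

```python
import re


def pseudonymize(transcripts, names):
    """Replace each listed name with P1, P2, ... (in list order).
    Returns the cleaned transcripts and the pseudonym-to-name key."""
    key = {f"P{i}": name for i, name in enumerate(names, start=1)}
    cleaned = []
    for text in transcripts:
        for pseudonym, name in key.items():
            # Whole-word, case-insensitive match so "Ann" does not alter "annual".
            text = re.sub(rf"\b{re.escape(name)}\b", pseudonym, text, flags=re.IGNORECASE)
        cleaned.append(text)
    return cleaned, key


transcripts = ["Ann said the annual review was stressful.", "Ravi agreed with Ann."]
cleaned, key = pseudonymize(transcripts, ["Ann", "Ravi"])
print(cleaned)  # ['P1 said the annual review was stressful.', 'P2 agreed with P1.']
# `key` ({'P1': 'Ann', 'P2': 'Ravi'}) belongs in a separate, access-restricted file.
```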

Q 12. Describe your process for managing and organizing interview transcripts.

Efficient transcript management is crucial for effective qualitative analysis. My process starts with careful transcription, ensuring accuracy and clarity. I utilize professional transcription services when needed to maintain high quality, especially when working with complex or nuanced interviews. I then organize my transcripts systematically, using a consistent file-naming convention (e.g., ‘Interview_P1_20231026.txt’) that incorporates participant ID, date, and any other relevant identifiers. I store the transcripts securely, using cloud storage or a dedicated, password-protected computer, depending on the project requirements. Within NVivo or Atlas.ti, I create a project specifically for that study to store and organize my transcripts. I utilize the software’s features to import the transcripts, ensuring each file is clearly labelled and easily accessible. The software’s search functionalities significantly assist in locating specific sections within lengthy transcripts, speeding up the coding and analysis process.
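A consistent naming convention is easy to enforce with a small script. This sketch assumes exactly the pattern from the example above (`Interview_<participant ID>_<YYYYMMDD>.txt`, with IDs like P1); the helper names are hypothetical.

```python
import re
from datetime import date

# Assumed convention: Interview_<participant ID>_<YYYYMMDD>.txt, e.g. Interview_P1_20231026.txt
PATTERN = re.compile(r"^Interview_(P\d+)_(\d{8})\.txt$")


def transcript_filename(participant_id, interview_date):
    """Build a file name that follows the convention."""
    return f"Interview_{participant_id}_{interview_date:%Y%m%d}.txt"


def parse_filename(filename):
    """Return (participant_id, date), or None if the name breaks the convention."""
    match = PATTERN.match(filename)
    if match is None:
        return None
    participant_id, stamp = match.groups()
    try:
        interview_date = date(int(stamp[:4]), int(stamp[4:6]), int(stamp[6:]))
    except ValueError:  # e.g. month 13 or day 45
        return None
    return participant_id, interview_date


files = ["Interview_P1_20231026.txt", "interview p2.docx", "Interview_P3_20231345.txt"]
print([f for f in files if parse_filename(f) is None])
# ['interview p2.docx', 'Interview_P3_20231345.txt']
```

Running a check like this before import catches misnamed files early, when renaming them is still cheap.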

For large projects, I sometimes use a dedicated project management tool alongside the qualitative data analysis software to maintain an overview of all interview transcripts and associated files. This central record makes it easier to track progress, coordinate team members, and communicate about the study’s status.

Q 13. What are your preferred methods for ensuring data quality and integrity?

Data quality and integrity are vital for credible qualitative research. My approach involves multiple layers of quality control. First, I pay meticulous attention to the quality of data collection, ensuring the interviews are conducted using standardized procedures and clear prompts. Then, transcription accuracy is verified either through double-checking or using quality-assured transcription services. During the analysis phase, I maintain detailed audit trails, documenting all coding decisions and analytical choices. This process is crucial for ensuring the reproducibility of the analysis. I regularly review my codes and themes, seeking feedback from colleagues or supervisors to minimize bias and ensure accuracy. Finally, I adhere to rigorous data management practices, employing version control to track changes made to my analysis documents and maintaining backups regularly to protect against data loss.

In the software, features such as memoing and audit trails allow for a record of my decisions and reasoning. NVivo and Atlas.ti let me easily compare different coding approaches or track changes to coding schemes across time, which greatly facilitates quality control and ensures rigor.
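The software’s memos already record much of this reasoning. As a tool-independent complement, here is a minimal sketch of an append-only audit trail stored as JSON Lines (one JSON object per line). The file name, field names, and action labels are hypothetical.

```python
import json
import os
import tempfile
from datetime import datetime, timezone


def log_decision(log_path, action, code, rationale):
    """Append one coding decision to the audit log; existing entries are never rewritten."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,  # e.g. "create", "merge", "rename"
        "code": code,
        "rationale": rationale,
    }
    with open(log_path, "a", encoding="utf-8") as log:
        log.write(json.dumps(entry) + "\n")


def read_log(log_path):
    """Return all logged decisions in the order they were made."""
    with open(log_path, encoding="utf-8") as log:
        return [json.loads(line) for line in log if line.strip()]


# Usage with a throwaway file; a real project would keep the log beside the project file.
log_path = os.path.join(tempfile.mkdtemp(), "coding_audit_log.jsonl")
log_decision(log_path, "create", "workload", "Recurring complaints about task volume.")
log_decision(log_path, "merge", "overtime -> workload", "Overtime was always described as part of workload.")
for entry in read_log(log_path):
    print(entry["timestamp"], entry["action"], entry["code"])
```

A plain-text log like this also sits comfortably under version control, so changes to the coding scheme can be traced over time.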

Q 14. How do you deal with missing data in qualitative research?

Missing data in qualitative research can be challenging. This could include incomplete transcripts, missing interviews, or gaps in participant responses. My strategy depends on the cause and extent of the missing data. If the missing data is minimal and doesn’t significantly impact the overall analysis, I might simply acknowledge the limitation in my report. However, if the missing data is substantial or could create a bias, I take a more proactive approach. For example, if a participant fails to answer a key question, I may try to clarify by contacting them directly (if ethical and feasible). If the missing data is due to an incomplete transcript, I might arrange for the missing parts to be transcribed. In cases where data is systematically missing, I would explicitly address this limitation in my analysis and discuss how it might influence the interpretations.

For example, if several participants fail to answer a question about a specific policy, I would explain this gap in my report and discuss potential reasons for the non-response. This transparency strengthens the credibility of the analysis and ensures that limitations are acknowledged.
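To tell isolated gaps from systematic ones, it can help to tabulate non-response by question. A minimal sketch with invented responses, where `None` marks an unanswered question and the 50% threshold is an arbitrary illustration rather than a standard:

```python
# Hypothetical responses: None marks a question the participant did not answer.
responses = {
    "P1": {"Q1": "Too slow", "Q2": "Fine", "Q3": None},
    "P2": {"Q1": "Okay", "Q2": None, "Q3": None},
    "P3": {"Q1": "Great", "Q2": "Fine", "Q3": None},
    "P4": {"Q1": "Slow", "Q2": "Good", "Q3": "Unclear policy"},
}


def missing_rate_by_question(responses):
    """Share of participants who left each question unanswered."""
    questions = sorted({q for answers in responses.values() for q in answers})
    total = len(responses)
    return {
        q: sum(answers.get(q) is None for answers in responses.values()) / total
        for q in questions
    }


def flag_systematic(rates, threshold=0.5):
    """Questions whose non-response rate meets the (illustrative) threshold."""
    return [q for q, rate in rates.items() if rate >= threshold]


rates = missing_rate_by_question(responses)
print(rates)                   # {'Q1': 0.0, 'Q2': 0.25, 'Q3': 0.75}
print(flag_systematic(rates))  # ['Q3']
```

A flagged question, such as the policy question in the example above, is a cue to discuss possible reasons for non-response in the report rather than to treat the gap as noise.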

Q 15. Compare and contrast different qualitative data analysis approaches.

Qualitative data analysis approaches vary widely depending on the research question and the nature of the data. Some prominent approaches include:

- Thematic Analysis: This is a widely used method focusing on identifying recurring patterns (themes) within the data. It’s flexible and can be applied across diverse datasets. For example, analyzing interview transcripts to discover themes related to customer satisfaction.

- Grounded Theory: A systematic approach aiming to develop theory inductively from data. It emphasizes constant comparison and iterative refinement of concepts. A study exploring the lived experiences of individuals with a specific illness might use grounded theory to develop a new theory of coping mechanisms.

- Narrative Analysis: Concentrates on the stories individuals tell and the meaning they ascribe to their experiences. It examines how stories are structured and the impact of narrative on meaning-making. Useful for researching personal experiences, for instance, studying the narratives of refugees recounting their journeys.

- Discourse Analysis: This approach examines how language is used to construct meaning and social reality. It explores power dynamics and the ways language shapes understanding. Analyzing political speeches to identify persuasive techniques would be an application of discourse analysis.

- Content Analysis: A systematic approach for categorizing and counting the occurrence of specific words, phrases, or concepts within a text corpus. It can be quantitative (counting frequencies) or qualitative (interpreting the meaning of the content). Analyzing news articles to gauge the frequency of certain terms related to a particular social issue is an example.

The key differences between these approaches lie in their underlying philosophy, the role of theory, and the analytical techniques employed. Thematic analysis is often more flexible, while grounded theory follows a more systematic, prescribed procedure aimed at generating theory from the data. The choice of approach depends heavily on the research question and the researcher’s theoretical orientation.
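To make the content analysis entry concrete, here is a minimal dictionary-based counting sketch: each concept is matched by a hypothetical list of single-word terms, and the counts are reported per document. The concepts, terms, and articles are invented; real coding dictionaries are usually developed and validated for the specific study.

```python
import re

# Hypothetical coding dictionary: each concept is matched by several single-word terms.
CONCEPTS = {
    "housing": ["housing", "rent", "eviction"],
    "employment": ["job", "jobs", "unemployment", "wages"],
}


def concept_counts(documents, concepts):
    """Count whole-word matches of each concept's terms in each document."""
    results = []
    for doc in documents:
        words = re.findall(r"[a-z]+", doc.lower())
        results.append({
            concept: sum(words.count(term) for term in terms)
            for concept, terms in concepts.items()
        })
    return results


articles = [
    "Rent rose again this year, and eviction notices doubled.",
    "New jobs were added, but wages stayed flat and rent kept climbing.",
]
print(concept_counts(articles, CONCEPTS))
# [{'housing': 2, 'employment': 0}, {'housing': 1, 'employment': 2}]
```

This covers only the quantitative side; a qualitative content analysis would go on to interpret how each concept is framed in context.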

Q 16. Explain your experience with using software to identify patterns and themes.

My experience with qualitative data analysis software, primarily NVivo and Atlas.ti, is extensive. I use these tools to manage large datasets efficiently and identify patterns and themes. For instance, in a recent project studying employee engagement, I used NVivo to code interview transcripts. I created codes representing key themes like ‘work-life balance,’ ‘managerial support,’ and ‘career development.’ Then, I used NVivo’s query functions to explore relationships between codes. For example, I could identify how often ‘lack of work-life balance’ co-occurred with ‘high stress levels’ and visualize this relationship in a matrix or network diagram. Atlas.ti, while having a slightly different interface, offers similar functionality; its coding and querying capabilities allowed me to identify emergent themes and patterns more effectively than manual analysis. The software significantly reduced analysis time and allowed for a more systematic and rigorous approach to identifying relationships within the data.
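Co-occurrence is, at its core, a count of how many coded segments share a pair of codes; tools such as NVivo’s matrix coding query or Atlas.ti’s code co-occurrence table compute it inside the software. A minimal Python sketch of the same count, using hypothetical segment coding:

```python
from collections import Counter
from itertools import combinations

# Hypothetical coding: the set of codes applied to each transcript segment.
segment_codes = [
    {"lack of work-life balance", "high stress levels"},
    {"lack of work-life balance", "high stress levels", "managerial support"},
    {"career development"},
    {"managerial support", "career development"},
    {"lack of work-life balance"},
]


def co_occurrence(segment_codes):
    """Count how many segments each pair of codes shares."""
    pairs = Counter()
    for codes in segment_codes:
        # Sort so each pair is always counted under the same (alphabetical) ordering.
        for pair in combinations(sorted(codes), 2):
            pairs[pair] += 1
    return pairs


counts = co_occurrence(segment_codes)
print(counts[("high stress levels", "lack of work-life balance")])  # 2
```

Each pair count could serve as an edge weight in a network diagram, or as a cell in a code-by-code matrix.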

Q 17. How familiar are you with grounded theory methodology?

I am very familiar with grounded theory methodology. It’s a powerful approach for developing theory inductively from data. The process begins with data collection (interviews, observations, documents), followed by open coding (identifying initial codes), axial coding (establishing relationships between codes), and selective coding (developing a core category and integrating the other categories around it). A crucial aspect is constant comparison, where new data is continuously compared with existing codes and categories, refining and developing the emerging theory. Software like NVivo greatly facilitates this iterative process. For example, the ability to create memos linked to specific codes helps in tracking the evolving understanding of concepts. I’ve used grounded theory in several projects, including a study on organizational change where I used NVivo to track the development of theoretical concepts related to resistance and adaptation.

Q 18. How do you use software to manage your research project?

I utilize qualitative data analysis software to manage my research projects comprehensively. This includes:

- Data Organization: Import various data types (transcripts, documents, images) into the software and organize them logically using folders and subfolders.

- Coding and Indexing: Develop a coding framework to systematically analyze the data and apply codes to relevant segments. This allows for efficient retrieval and analysis of data related to specific themes or concepts.

- Memo Writing: Document thoughts, interpretations, and analytical reflections directly linked to coded data segments. This helps in tracking the research process and developing analytical insights.

- Querying and Visualization: Use the software’s querying capabilities to explore relationships between codes, visualize data patterns, and generate reports.

- Collaboration: Share projects with collaborators and facilitate team-based analysis.

In essence, the software acts as a central repository and analytical tool for the entire project, from data organization to the final report. This structured approach supports efficiency and transparency and facilitates the generation of robust findings.

Q 19. Explain the importance of inter-rater reliability in qualitative research.

Inter-rater reliability is crucial in qualitative research to ensure the trustworthiness and consistency of findings. It refers to the extent to which different researchers agree on the coding and interpretation of data. Low inter-rater reliability can signal ambiguous code definitions or subjective biases influencing the analysis. To achieve high inter-rater reliability, I employ several strategies. First, we develop a clear and detailed coding scheme and discuss it thoroughly as a team. Then, we independently code a subset of the data and compare our coding to identify discrepancies. We discuss these disagreements until we reach consensus, refining the coding scheme as needed. Software like NVivo facilitates this process by making it easy to compare codings and calculate inter-rater reliability statistics such as Cohen’s Kappa. Achieving strong inter-rater reliability significantly enhances the credibility and dependability of qualitative research findings.
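The comparison step can be sketched as a function that returns raw percent agreement and the list of segments to discuss in a consensus meeting. This assumes each coder applied one code per segment; the segment IDs, codes, and `compare_coders` helper are hypothetical. Percent agreement does not correct for chance, which is why a statistic such as Cohen’s Kappa is usually reported alongside it.

```python
def compare_coders(segment_ids, coder_a, coder_b):
    """Return raw percent agreement and the segments where two coders disagree."""
    if not (len(segment_ids) == len(coder_a) == len(coder_b)) or not segment_ids:
        raise ValueError("Need one code per segment from each coder")
    disagreements = [
        (seg, a, b) for seg, a, b in zip(segment_ids, coder_a, coder_b) if a != b
    ]
    agreement = 1 - len(disagreements) / len(segment_ids)
    return agreement, disagreements


segment_ids = ["S1", "S2", "S3", "S4"]
coder_a = ["workload", "support", "workload", "pay"]
coder_b = ["workload", "workload", "workload", "pay"]
agreement, to_discuss = compare_coders(segment_ids, coder_a, coder_b)
print(agreement)   # 0.75
print(to_discuss)  # [('S2', 'support', 'workload')]
```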

Q 20. Describe your experience with memo writing in qualitative data analysis.

Memo writing is an integral part of my qualitative data analysis process. Memos are notes, annotations, and theoretical reflections linked to specific data segments or codes. They are crucial for tracking the evolution of my thinking, recording emergent themes, and documenting the rationale behind analytical choices. In NVivo, I can create memos directly linked to codes (nodes), maintaining a clear audit trail of my analytical process, so I can easily revisit prior thinking or trace the genesis of a specific idea. Memos aren’t just a record; they actively contribute to the analytical process, shaping the development of the overarching narrative. For example, a memo could contain initial interpretations of a particular theme, later revisions based on further data analysis, and final thoughts on the significance of this theme within the broader research context.

Q 21. How do you ensure the trustworthiness of your qualitative findings?

Ensuring the trustworthiness of qualitative findings is paramount. I employ several strategies to enhance credibility:

- Prolonged Engagement: Spending sufficient time in the field to develop a deep understanding of the context and participants.

- Triangulation: Using multiple data sources (interviews, observations, documents) to corroborate findings.

- Member Checking: Sharing findings with participants to validate their accuracy and meaningfulness from their perspective.

- Peer Debriefing: Discussing the research process and findings with colleagues to get external feedback and identify potential biases.

- Audit Trail: Maintaining a detailed record of the entire research process, including data collection, analysis, and interpretation. NVivo’s features facilitate a detailed audit trail.

- Reflexivity: Acknowledging and reflecting upon my own biases and assumptions throughout the research process.

By employing these strategies, I aim to provide a transparent, rigorous, and credible account of the research findings, enhancing their trustworthiness and impact.

Q 22. How do you address saturation in your data analysis?

Saturation, in qualitative data analysis, refers to the point where further data collection yields no new insights or themes. Think of it like filling a cup: initially, each addition of water (data) significantly increases the level. However, once the cup is full, adding more water doesn’t change its level. Similarly, when saturation is reached, you’ve gathered enough data to thoroughly explore the research topic.

Addressing saturation requires a careful and iterative approach. I typically start by collecting data until I observe a clear trend of repetition in codes and themes. I then assess whether these repeating themes provide new information. If several consecutive interviews, focus groups, or document reviews fail to add new insights or challenge existing findings, I consider the data saturated. This involves rigorously checking for new codes or categories within the data. I might also utilize software functionalities like NVivo’s matrix coding to visually compare themes across different data sources.

For instance, in a study on employee burnout, I might initially plan to interview 15 employees. If the themes of workload, lack of support, and work-life balance consistently emerge without significant variation from the 10th interview onward, I might consider saturation reached. This doesn’t mean stopping immediately; it suggests that additional data collection may add little. I’d carefully document my rationale for determining saturation in my research report, supporting the decision with evidence from my analysis.
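One way to support a saturation judgment with evidence is to track how many new codes each interview contributes. The sketch below, with invented coding data and an arbitrary window of three interviews, returns the last interview that introduced a new code before a run of interviews that added none. It only captures code-level saturation; whether themes are fully developed remains an analytic judgment.

```python
def saturation_point(codes_per_interview, window=3):
    """Return the 1-based number of the last interview that added a new code,
    once `window` consecutive interviews have added none; otherwise None."""
    seen = set()
    run = 0  # consecutive interviews without new codes
    for number, codes in enumerate(codes_per_interview, start=1):
        new_codes = set(codes) - seen
        seen |= new_codes
        run = 0 if new_codes else run + 1
        if run == window:
            return number - window
    return None


# Hypothetical codes identified in each interview, in the order conducted.
interviews = [
    {"workload", "lack of support"},
    {"workload", "work-life balance"},
    {"lack of support", "unclear roles"},
    {"workload", "work-life balance"},
    {"lack of support"},
    {"workload", "unclear roles"},
]
print(saturation_point(interviews))  # 3
```

A table of new codes per interview, produced this way, is the kind of evidence that can back up the saturation rationale in the research report.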

Q 23. What are the strengths and limitations of using qualitative data analysis software?

Qualitative data analysis software like NVivo and Atlas.ti offers significant strengths but also has limitations. These are powerful tools that greatly enhance the efficiency and rigor of qualitative analysis.

- Strengths: These programs help manage large datasets, facilitating efficient coding, searching, and retrieval of data. They enable the creation of visual representations of data relationships, like networks or matrices, helping researchers identify patterns and connections that might be missed during manual analysis. They also offer robust functionalities for managing different data types (transcripts, images, videos) and creating rich, detailed reports.

- Limitations: Over-reliance on software can lead to a superficial analysis if not used thoughtfully. The process of coding and categorizing data remains interpretive and subjective, even with software assistance. Moreover, the software can be expensive, require training to learn properly, and might not be accessible to all researchers.

In my experience, the software is a tool – a powerful one, but still just a tool. The researcher’s critical thinking, analytical skills, and interpretive ability remain essential for a robust and meaningful analysis. I always strive to maintain a balanced approach, utilizing the software’s capabilities effectively while retaining the human element of qualitative analysis.

Q 24. Explain your experience with using different coding techniques (e.g., in vivo, open, axial).

My experience with coding techniques is extensive. I regularly utilize in vivo, open, and axial coding, often in combination.

- In vivo coding: This involves using the participants’ own words directly as codes. This is crucial for maintaining authenticity and capturing the nuances of their experiences. For instance, if a participant describes feeling “overwhelmed” by their workload, “overwhelmed” becomes a code.

- Open coding: This involves initially reading through the data and developing initial codes based on emerging themes and patterns. It’s a more exploratory and inductive approach. For example, after reading several transcripts, I might identify codes like “work pressure,” “lack of resources,” and “communication breakdown.”

- Axial coding: This involves re-examining and refining codes developed during open coding. It involves connecting categories and themes to develop more complex relationships within the data. I might find that “work pressure” is linked to “lack of resources” and “poor communication,” leading to a higher-order theme of “systemic issues.”

I often start with open coding to identify initial themes, then refine them through axial coding, incorporating in vivo codes where relevant to ground the analysis in the participants’ perspectives. The software aids in managing this iterative process, allowing for easy modification and refinement of codes as the analysis progresses.

Q 25. How do you ensure the confidentiality and anonymity of participants in your research?

Ensuring participant confidentiality and anonymity is paramount. I adhere to strict ethical guidelines throughout the research process.

- Data anonymization: I replace identifying information (names, addresses, etc.) with codes or pseudonyms. This is done immediately after data collection.

- Secure data storage: I store all data in password-protected files on encrypted devices. Access is strictly limited to me and other authorized researchers.

- Informed consent: Participants provide written informed consent, clearly outlining the purpose of the research, how data will be used, and their rights regarding their participation. They are informed about the steps taken to protect their confidentiality and anonymity.

- Data deletion: Once the research is complete and the findings are published, data are deleted or securely archived in accordance with institutional guidelines.

Transparency is key. The methods used to ensure confidentiality are detailed in my research report and ethics approvals. For example, I might describe the anonymization process and the data security measures implemented, reassuring readers of my commitment to ethical research practices.
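The pseudonymization step can be partly automated once the researcher has listed the identifiers to replace. The sketch below is a minimal illustration under stated assumptions: the `KEY` mapping, the names in it, and the helpers `pseudonymize` and `residual_identifiers` are hypothetical, and whole-word regex matching will not catch misspellings, nicknames, or indirect identifiers, so a manual read-through is still needed.

```python
import re

# Hypothetical re-identification key. In practice it would be stored separately
# from the transcripts, under the same access controls as the raw data.
KEY = {
    "Anna Smith": "Participant A",
    "Anna": "Participant A",
    "Riverside Clinic": "[Clinic 1]",
}


def _pattern(identifier):
    # Whole-word, case-insensitive match; assumes identifiers start and end
    # with a letter or digit so that the \b word boundaries apply.
    return re.compile(r"\b" + re.escape(identifier) + r"\b", re.IGNORECASE)


def pseudonymize(text, mapping):
    """Replace every identifier in `mapping` with its pseudonym."""
    # Longest identifiers first, so "Anna Smith" is replaced before "Anna".
    for identifier in sorted(mapping, key=len, reverse=True):
        pseudonym = mapping[identifier]
        text = _pattern(identifier).sub(lambda _m: pseudonym, text)
    return text


def residual_identifiers(text, mapping):
    """List identifiers that still appear in the text (should be empty)."""
    return [i for i in mapping if _pattern(i).search(text)]


raw = "Anna Smith said Anna's manager at Riverside Clinic was supportive."
clean = pseudonymize(raw, KEY)
print(clean)
print(residual_identifiers(clean, KEY))
```

Mapping several variants of a name to the same pseudonym keeps references to one person consistent across transcripts, which matters later when coding by participant.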

Q 26. Describe your experience with data cleaning and preparation in qualitative data analysis.

Data cleaning and preparation are crucial for the success of qualitative analysis. This stage involves organizing, reviewing, and preparing data for analysis.

- Transcription: For audio or video data, I produce accurate, verbatim transcripts and check them against the original recordings.

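- Data import and organization: Once transcribed, I import the data into the software (NVivo or Atlas.ti), bringing in each interview or document as a separate file in NVivo or a separate document in Atlas.ti, and grouping them by attributes such as participant or site (cases and classifications in NVivo, document groups in Atlas.ti). Nodes in NVivo and codes in Atlas.ti are reserved for the themes I code. This structured approach facilitates efficient coding and retrieval.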

- Identifying and managing missing data: I carefully consider any gaps or missing data. I might include notes about missing data sections within the software or in my field notes to ensure transparency.

- Data cleaning: I review the data for errors, inconsistencies, and irrelevant information, and I usually repeat this review several times during coding.

In a recent project, we encountered some incomplete transcripts. Instead of discarding them completely, we documented the missing sections and considered the context while analyzing the available information. We specifically noted within our analysis how the incompleteness might impact the interpretation of findings.
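Documenting missing sections is easier when the transcripts mark gaps consistently. The sketch below assumes a hypothetical transcription convention in which gaps are tagged in square brackets (for example `[inaudible 00:12:31]` or `[crosstalk]`); the tag list and the helpers `find_gaps` and `gap_memo` are illustrative, not part of NVivo or Atlas.ti. The resulting text could be pasted into a memo in either package.

```python
import re

# Assumed (hypothetical) convention: gaps are tagged in square brackets,
# optionally followed by a timestamp, e.g. "[inaudible 00:12:31]".
GAP_PATTERN = re.compile(
    r"\[(?:inaudible|crosstalk|unclear|recording stopped)[^\]]*\]",
    re.IGNORECASE,
)


def find_gaps(transcript):
    """Return (line_number, tag) for every gap tag found in a transcript."""
    gaps = []
    for lineno, line in enumerate(transcript.splitlines(), start=1):
        for match in GAP_PATTERN.finditer(line):
            gaps.append((lineno, match.group(0)))
    return gaps


def gap_memo(name, transcript):
    """Draft a short memo noting where a transcript is incomplete."""
    gaps = find_gaps(transcript)
    if not gaps:
        return f"{name}: no gaps tagged."
    lines = [f"{name}: {len(gaps)} gap(s) tagged; interpret nearby passages with care."]
    lines += [f"  line {n}: {tag}" for n, tag in gaps]
    return "\n".join(lines)


transcript = """I: How has the new schedule affected you?
P: It's been [inaudible 00:12:31], honestly.
P: Handover is [crosstalk] and then we start again."""

print(gap_memo("Interview 04", transcript))
```

The memo only records where the gaps are; judging how they affect interpretation remains the analyst's task.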

Q 27. How familiar are you with the concept of reflexivity in qualitative research?

Reflexivity, in qualitative research, refers to the critical self-reflection on the researcher’s role and influence on the research process. It acknowledges that researchers are not neutral observers; their values, biases, and experiences shape their interpretation of data.

I embrace reflexivity as a vital aspect of qualitative research. I regularly reflect on my own biases and how they might influence my coding, interpretation, and analysis. I document these reflections in a research journal, helping me track my own evolving understanding and interpretations. For example, I might note how my prior experience in a specific field influences my initial coding choices. This reflection helps me remain aware of potential biases and mitigate their impact on the research.

This process enhances trustworthiness and credibility. By acknowledging and addressing my own biases, I make the research more transparent and rigorous, and the findings more robust and believable.

Q 28. What are your strategies for managing bias in qualitative data analysis?

Managing bias in qualitative data analysis is a continuous process, requiring constant vigilance and self-awareness.

- Reflexivity (as discussed above): Regularly reflecting on my own biases and assumptions is crucial. Keeping a reflective journal helps to track these considerations over time.

- Triangulation: Using multiple data sources (interviews, observations, documents) can help to validate findings and reduce bias. Different perspectives can offset individual biases.

- Member checking: Sharing initial findings with participants for feedback helps ensure that the interpretations align with their experiences. This provides a crucial check on potential researcher bias.

- Peer debriefing: Discussing the research process and findings with colleagues provides alternative perspectives and can surface inconsistencies or biases I might have overlooked.

- Auditing the process: Documenting all stages of the research process, from data collection to analysis, enhances transparency and allows for review and scrutiny by others. A detailed audit trail promotes rigor and helps detect potential biases.

In practice, I combine these strategies. For instance, in a study on community perceptions of a new policy, I would employ multiple methods (interviews, focus groups, document analysis), regularly reflect on my own potential biases, and share my initial findings with participants for feedback. The result is a more comprehensive and trustworthy analysis.
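Triangulation across data sources can be supported with a simple cross-check of which themes appear in more than one source. This is a minimal sketch with hypothetical themes and a hypothetical `triangulate` helper; agreement across sources is a prompt for closer scrutiny, not proof, and themes found in only one source are not discarded, just examined further.

```python
from collections import defaultdict


def triangulate(themes_by_source, min_sources=2):
    """Return themes supported by at least `min_sources` distinct data sources."""
    support = defaultdict(set)
    for source, themes in themes_by_source.items():
        for theme in themes:
            support[theme].add(source)
    return {
        theme: sorted(sources)
        for theme, sources in support.items()
        if len(sources) >= min_sources
    }


# Hypothetical themes from a study of community perceptions of a new policy.
themes_by_source = {
    "interviews": {"distrust of consultation", "cost concerns"},
    "focus groups": {"cost concerns", "support for aims"},
    "documents": {"cost concerns", "distrust of consultation"},
}

for theme, sources in triangulate(themes_by_source).items():
    print(f"{theme}: {', '.join(sources)}")
```

Raising `min_sources` to 3 would keep only themes present in every source type, which is stricter than most designs need.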

Key Topics to Learn for Experience with Qualitative Data Analysis Software (e.g., NVivo, Atlas.ti) Interview

- Data Import and Management: Understanding different data import methods (e.g., text files, audio/video transcripts), data organization strategies, and the importance of data cleaning for accurate analysis.

- Coding and Categorization: Mastering techniques for developing robust coding schemes, applying codes to data, and refining codes based on emerging themes. Understanding the difference between deductive and inductive coding approaches.

- Querying and Reporting: Proficiency in generating various reports (e.g., frequency counts, matrices, networks) to visualize and interpret data patterns. Understanding the limitations and strengths of different query types.

- Theoretical Frameworks: Familiarity with grounded theory, thematic analysis, and other qualitative research approaches and how they inform data analysis in NVivo or Atlas.ti.

- Software-Specific Features: Demonstrating a strong understanding of the specific functionalities of NVivo or Atlas.ti, including features like memoing, visualization tools, and data comparison functionalities.

- Data Visualization and Interpretation: Knowing how to present your findings clearly and concisely, using charts, graphs, and other visual aids to support your interpretations. Understanding the importance of evidence-based conclusions.

- Ethical Considerations: Understanding ethical implications related to data privacy, informed consent, and responsible data handling within the context of qualitative research.

- Troubleshooting and Problem-Solving: Ability to identify and resolve common challenges encountered during qualitative data analysis, such as managing large datasets, handling inconsistencies, and addressing potential biases.
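Several of these topics meet in a code-by-case matrix, which cross-tabulates how often each code was applied in each case. The sketch below is similar in spirit to NVivo's matrix coding query or Atlas.ti's Code-Document Table, but it is plain Python and does not use either product's API; the codings and the `code_case_matrix` helper are hypothetical.

```python
from collections import Counter


def code_case_matrix(codings):
    """Cross-tabulate coding references by code (rows) and case (columns).

    codings: iterable of (case, code) pairs, one pair per coded segment.
    Returns (codes, cases, matrix) where matrix[i][j] is the number of
    segments in cases[j] coded with codes[i].
    """
    counts = Counter(codings)
    cases = sorted({case for case, _ in counts})
    codes = sorted({code for _, code in counts})
    # Counter returns 0 for pairs that never occur, so empty cells are filled.
    matrix = [[counts[(case, code)] for case in cases] for code in codes]
    return codes, cases, matrix


codings = [
    ("P1", "work pressure"),
    ("P1", "work pressure"),
    ("P1", "lack of resources"),
    ("P2", "communication breakdown"),
    ("P2", "work pressure"),
]

codes, cases, matrix = code_case_matrix(codings)
width = max(len(code) for code in codes)
print(" " * width, *cases)
for code, row in zip(codes, matrix):
    print(code.ljust(width), *(str(n).rjust(len(c)) for n, c in zip(row, cases)))
```

A frequency matrix shows where a code was applied, not how important it is; a single rich passage can matter more than many brief mentions, which is one of the query limitations worth discussing in an interview.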

Next Steps

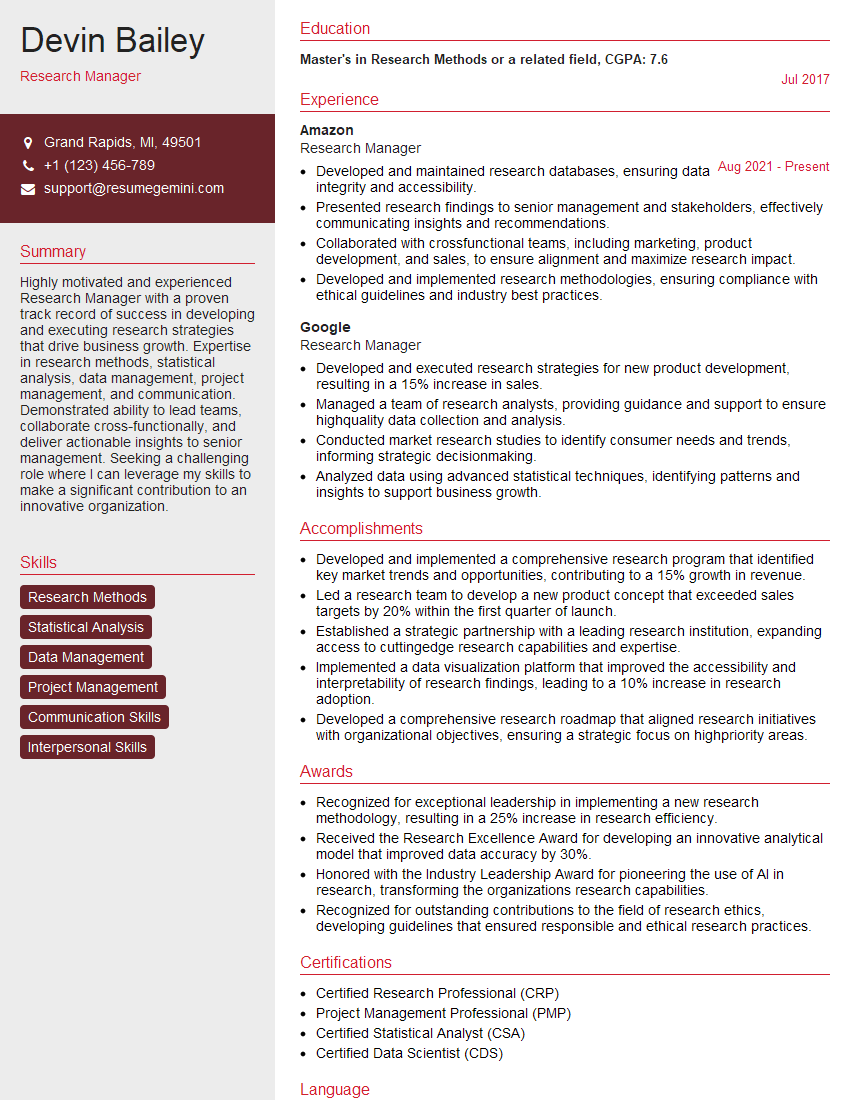

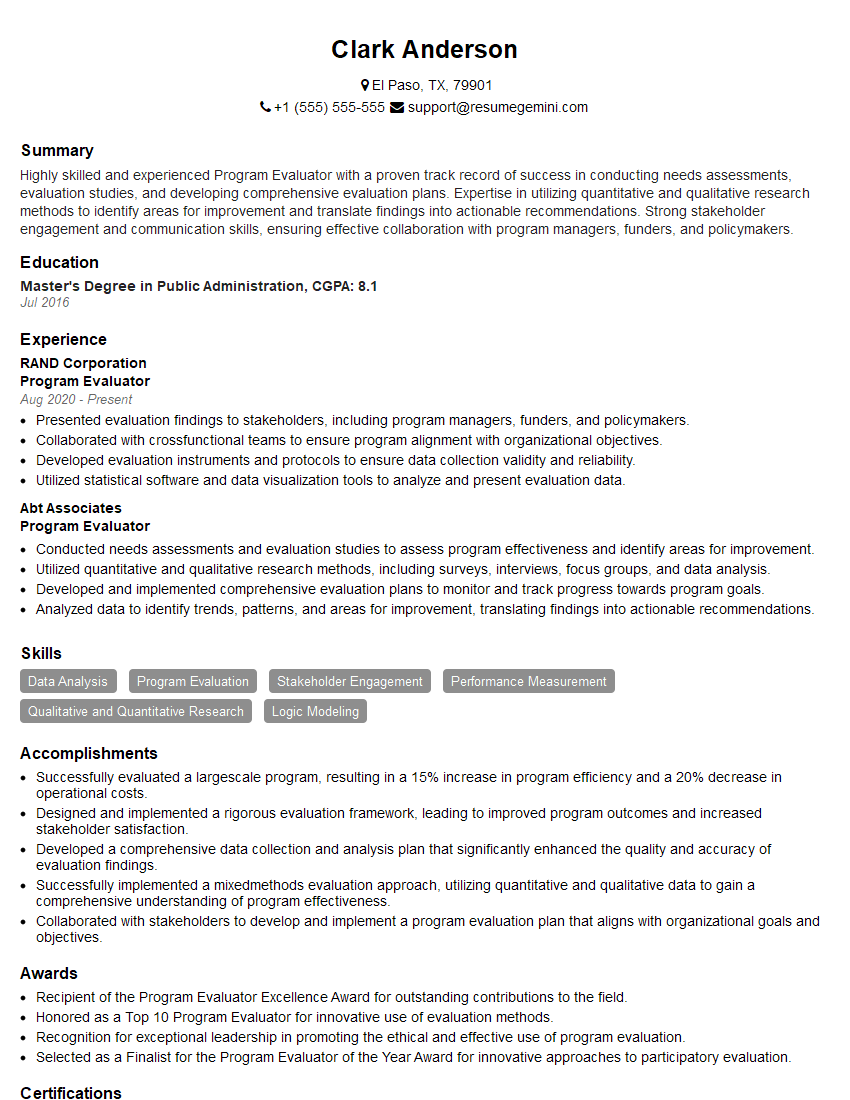

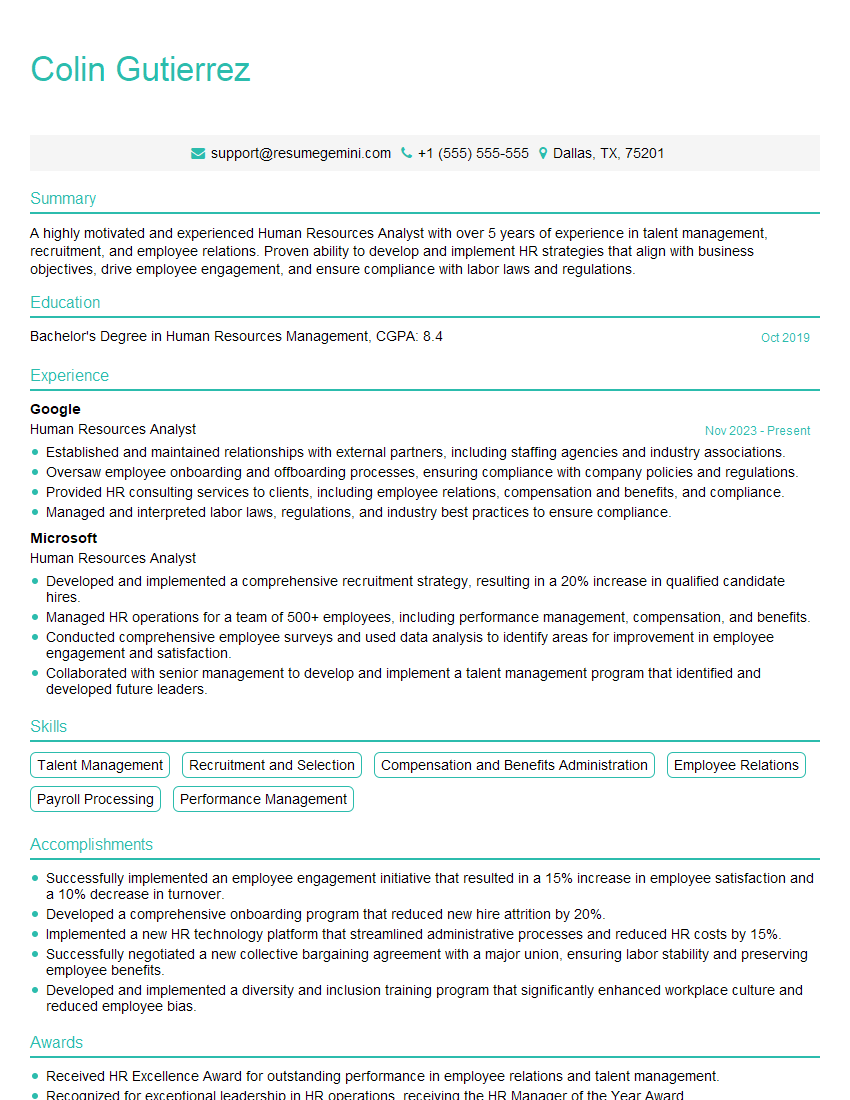

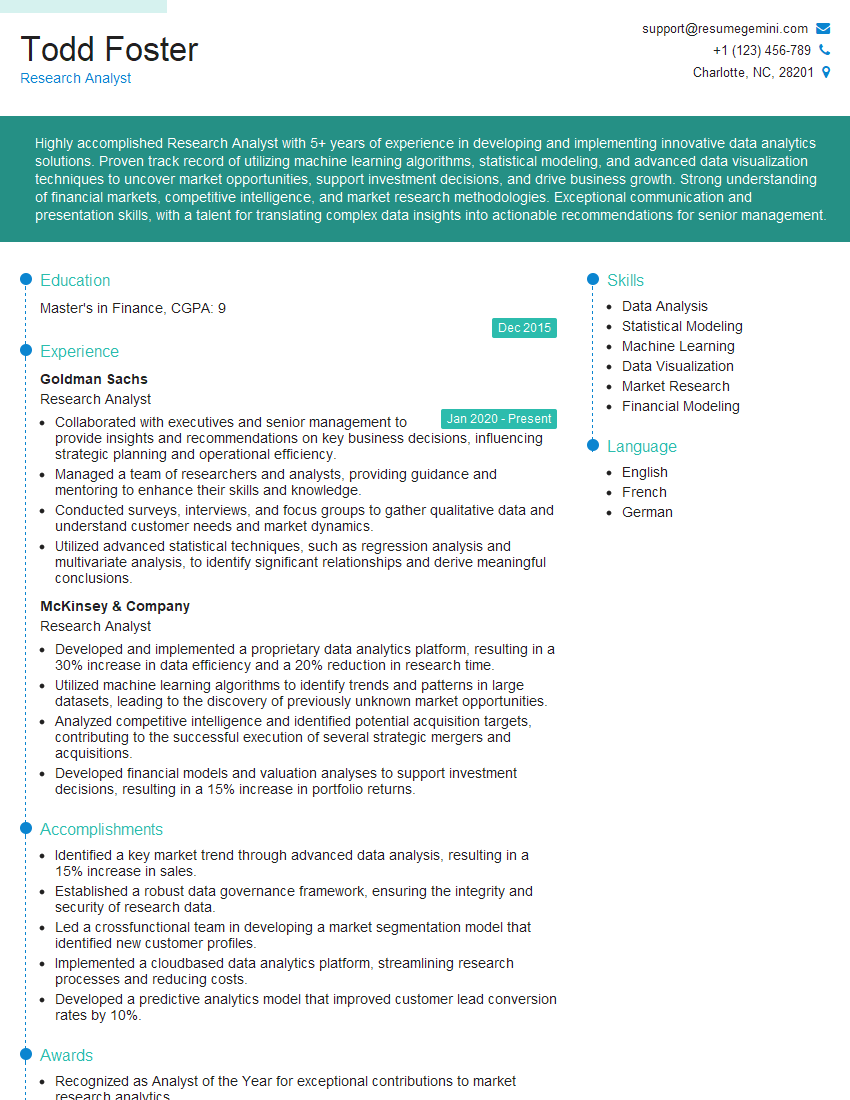

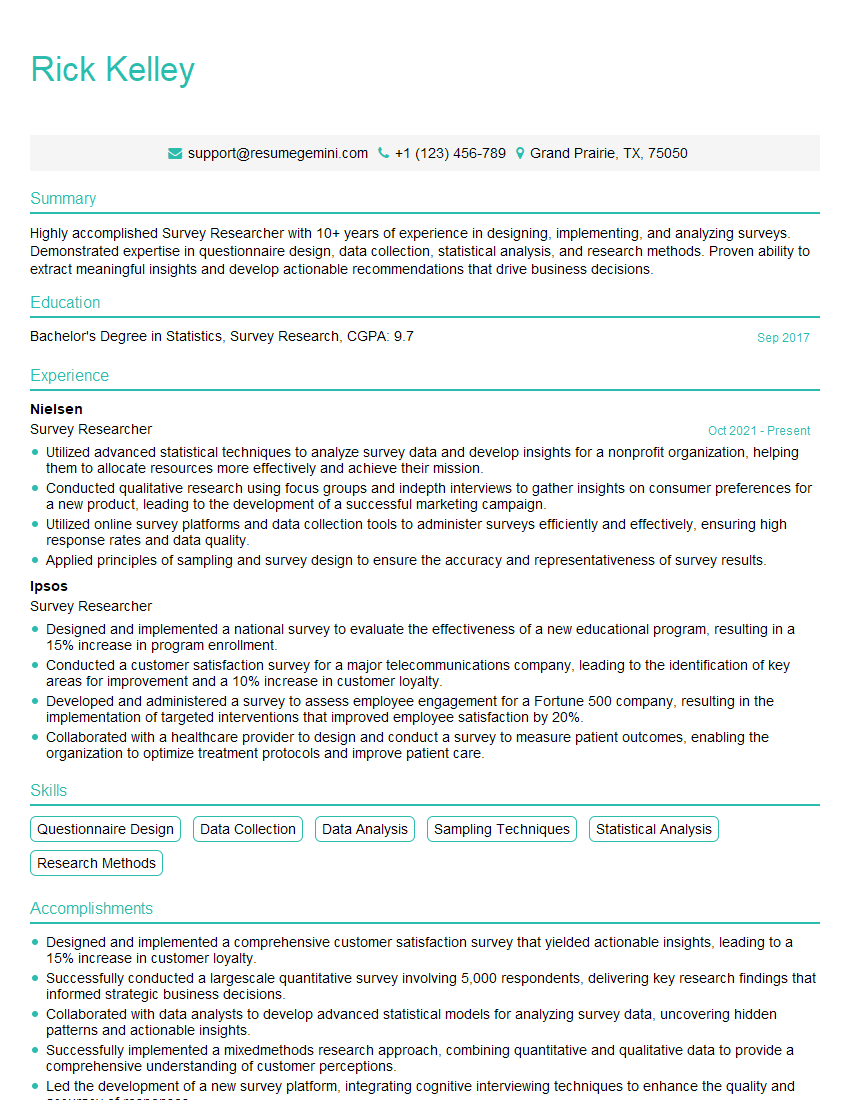

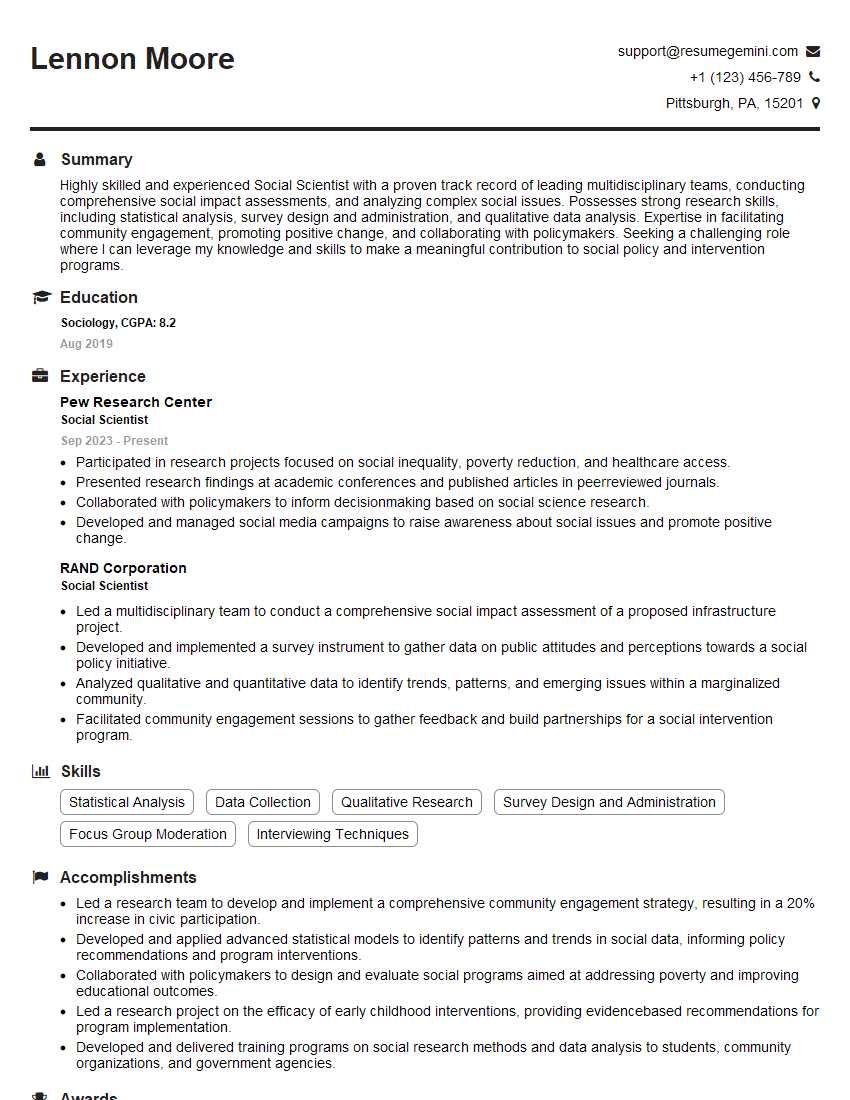

Mastering qualitative data analysis software like NVivo and Atlas.ti is crucial for career advancement in research, social sciences, and many other fields requiring insightful data interpretation. A strong understanding of these tools significantly enhances your ability to conduct rigorous and impactful research, leading to greater career opportunities and competitive advantage. To maximize your job prospects, create an ATS-friendly resume that highlights your skills effectively. Use ResumeGemini, a trusted resource, to build a professional and impactful resume tailored to your specific experience. Examples of resumes tailored to showcasing experience with qualitative data analysis software (e.g., NVivo, Atlas.ti) are available [link to examples would go here in a live application].

