The thought of an interview can be nerve-wracking, but the right preparation can make all the difference. Explore this comprehensive guide to Experimental Design and Implementation interview questions and gain the confidence you need to showcase your abilities and secure the role.

Questions Asked in Experimental Design and Implementation Interview

Q 1. Explain the difference between between-subjects and within-subjects designs.

The core difference between between-subjects and within-subjects experimental designs lies in how participants are assigned to different conditions. In a between-subjects design, each participant is exposed to only one level of the independent variable. Think of it like comparing two separate groups: one receiving a new drug (experimental group) and another receiving a placebo (control group). Each individual contributes data to only one condition. This approach is straightforward but requires a larger number of participants to achieve sufficient statistical power.

Conversely, in a within-subjects design, each participant is exposed to all levels of the independent variable. Imagine testing the effectiveness of different learning techniques. In a within-subjects design, the same participants would try each technique, and their performance would be measured after each one. This approach reduces the influence of individual differences because each person serves as their own control, so it usually needs fewer participants. However, it is susceptible to order effects (the sequence of conditions influencing results), practice effects (participants improving simply through repetition), and carryover effects (an earlier condition influencing responses in a later one). Counterbalancing the order of conditions across participants helps control these.

Example: Let’s say we’re studying the effect of caffeine on reaction time. A between-subjects design would have one group receiving caffeine and another receiving a placebo. A within-subjects design would have each participant complete the reaction time test twice, once after caffeine and once after a placebo, in separate sessions with the order randomized or counterbalanced.

Q 2. Describe the concept of randomization and its importance in experimental design.

Randomization is the process of assigning participants to experimental conditions by chance, so that every participant has a known (usually equal) probability of ending up in each group. Its importance in experimental design is hard to overstate. Random assignment makes the groups comparable on average at the start of the experiment, on both measured and unmeasured characteristics, which minimizes the influence of pre-existing differences that could confound the results.

Imagine trying to assess a new teaching method. If you let teachers choose which class receives the new method, you might inadvertently introduce bias – perhaps more experienced teachers get the new method, resulting in better outcomes irrespective of the method itself. Random assignment prevents this. It’s akin to shuffling a deck of cards before dealing – each hand (group) has an equal chance of receiving any ‘card’ (condition).

Without randomization, it becomes difficult to confidently attribute any observed differences to the independent variable; other factors might be responsible, leading to inaccurate conclusions.
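As a rough illustration of the card-shuffling idea, here is a minimal sketch of random assignment using only the Python standard library. The function name `assign_groups`, the participant IDs, and the condition labels are hypothetical and chosen just for this example.

```python
import random

def assign_groups(participants, conditions, seed=None):
    """Randomly assign participants to conditions with near-equal group sizes."""
    rng = random.Random(seed)      # a seeded generator makes the allocation auditable
    shuffled = list(participants)
    rng.shuffle(shuffled)          # the "shuffle the deck" step
    groups = {c: [] for c in conditions}
    for i, person in enumerate(shuffled):
        # Deal the shuffled participants round-robin into the conditions
        groups[conditions[i % len(conditions)]].append(person)
    return groups

participants = [f"P{i:02d}" for i in range(1, 21)]   # hypothetical participant IDs
groups = assign_groups(participants, ["new_method", "control"], seed=42)
for condition, members in groups.items():
    print(condition, members)
```

Recording the seed is one way to let someone else reproduce the exact allocation later; in a real trial the allocation would typically also be concealed from whoever enrolls participants.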

Q 3. What are confounding variables and how can they be controlled?

Confounding variables are extraneous factors that are related to both the independent variable and the dependent variable, making it difficult to determine the true effect of the independent variable. They’re essentially unwanted guests at the experimental party, muddying the waters and making it hard to isolate the key relationship. For instance, if you’re testing a new fertilizer’s effect on plant growth, a confounding variable could be the amount of sunlight each plant receives: if the fertilized plants also happen to get more sun, you can’t be certain whether better growth is due to the fertilizer or the extra sun.

Confounding variables can be controlled through several methods:

- Randomization: As discussed earlier, randomly assigning participants to conditions helps minimize the impact of confounding variables.

- Matching: Pairing participants based on relevant characteristics (e.g., age, gender, pre-existing conditions) before assigning them to different groups can reduce confounding effects.

- Statistical control: Using statistical techniques like analysis of covariance (ANCOVA) can account for the influence of confounding variables during data analysis.

- Holding variables constant: If feasible, maintaining consistent levels of a potential confounding variable (e.g., using the same type of soil for all plants) eliminates its influence.

Q 4. Explain the importance of blinding in experimental studies.

Blinding refers to concealing the treatment condition from participants (single-blind) and/or researchers (double-blind). This is crucial for minimizing bias. If participants know they’re receiving a treatment, they might exhibit a placebo effect (improvement due to expectation), while researchers might unconsciously bias their observations or interpretations.

Example: In a drug trial, a double-blind study ensures that neither the participants nor the researchers administering the drug and assessing outcomes know who receives the actual drug and who receives the placebo. This does not remove the placebo effect itself; it keeps expectations equal across groups, so any placebo response affects both groups alike, and it prevents researchers from consciously or unconsciously influencing data collection and analysis. Blinding thereby enhances the objectivity and credibility of the research findings.

Q 5. What are the different types of validity in experimental research?

Validity in experimental research refers to the extent to which the study measures what it intends to measure and the generalizability of the findings. There are several types:

- Internal validity: This addresses the question of whether the independent variable truly caused the observed changes in the dependent variable. High internal validity means that confounding variables have been effectively controlled.

- External validity: This concerns the generalizability of the findings to other populations, settings, and times. A study with high external validity has results that can be applied more broadly.

- Construct validity: This refers to the extent to which the operational definitions of the variables accurately reflect the theoretical constructs being studied. For example, does a particular test truly measure intelligence or just a specific type of problem-solving ability?

- Statistical conclusion validity: This focuses on whether the statistical tests used were appropriate and whether the conclusions drawn from the data are justified.

Achieving high validity in all these areas is a major goal in experimental design and analysis.

Q 6. How do you determine the appropriate sample size for an experiment?

Determining the appropriate sample size is critical for ensuring sufficient statistical power. A sample that’s too small might fail to detect a real effect (type II error), while a sample that’s too large wastes resources. Several factors influence sample size determination:

- Effect size: The magnitude of the expected difference between groups. Larger expected effects require smaller sample sizes.

- Significance level (alpha): The probability of rejecting the null hypothesis when it is true (typically set at 0.05). A lower alpha requires a larger sample size.

- Power (1-beta): The probability of rejecting the null hypothesis when it is false. Higher power (e.g., 0.80 or 80%) requires a larger sample size.

- Variability of the data: Greater variability within groups requires larger sample sizes.

Power analysis software or online calculators can help determine the appropriate sample size based on these factors. It’s important to conduct a power analysis before starting the experiment to avoid costly and time-consuming errors.
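To make the relationship between these factors concrete, the sketch below estimates the sample size per group for a two-sided comparison of two means, using the normal approximation n = 2((z_{1-α/2} + z_{power}) / d)², where d is the standardized effect size (mean difference divided by the standard deviation, which folds in the variability factor). The function name `n_per_group` is hypothetical, and dedicated power analysis software based on the t distribution will typically return a slightly larger number.

```python
from math import ceil
from statistics import NormalDist

def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-sample comparison of means.

    Uses the normal approximation; an exact t-based calculation gives
    slightly larger values, especially for small samples.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)   # critical value for the significance level
    z_power = z.inv_cdf(power)           # quantile matching the desired power
    return ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)

for d in (0.2, 0.5, 0.8):
    print(f"d = {d}: about {n_per_group(d)} participants per group")
```

Running this shows the pattern described above: smaller expected effects, a stricter alpha, or higher power all push the required sample size up.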

Q 7. What statistical tests would you use to analyze data from a 2×2 factorial design?

A 2×2 factorial design has two independent variables, each with two levels. The most appropriate statistical test to analyze the data depends on the nature of the dependent variable:

- If the dependent variable is continuous (e.g., weight, height, test scores): A two-way ANOVA (analysis of variance) is the standard approach. This test assesses the main effects of each independent variable and the interaction effect between them.

- If the dependent variable is categorical (e.g., pass/fail, yes/no): A chi-square test can check whether the outcome is associated with the experimental conditions, but it does not separate main effects from the interaction. Logistic regression with both factors and their interaction term is usually more informative, because it estimates the strength of each effect and the role of individual predictors.

The ANOVA will provide F-statistics and p-values for each main effect and the interaction. A significant interaction indicates that the effect of one independent variable depends on the level of the other. Because each factor has only two levels, a significant main effect needs no post-hoc test. A significant interaction is usually followed up with simple-effects analyses or pairwise comparisons of the four cell means (for example, with Tukey’s HSD) to pinpoint where the groups differ.
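For readers who want to see where the F-statistics come from, here is a minimal sketch that computes them by hand for a balanced 2×2 design (equal observations per cell). The data are made up for illustration, and the function name `two_way_anova_2x2` is hypothetical. P-values are omitted because they require the F distribution, which the standard library does not provide; in practice a statistics package would report them.

```python
from statistics import mean

# Hypothetical balanced data: 2 (fertilizer) x 2 (watering) cells, 4 plants each
cells = {
    ("A", "low"):  [10, 12, 11, 11],
    ("A", "high"): [14, 15, 13, 14],
    ("B", "low"):  [11, 10, 12, 11],
    ("B", "high"): [20, 19, 21, 20],
}

def two_way_anova_2x2(cells):
    """F statistics for a balanced two-way design (equal n in every cell)."""
    levels_a = sorted({a for a, _ in cells})
    levels_b = sorted({b for _, b in cells})
    n = len(next(iter(cells.values())))              # observations per cell
    grand = mean(x for obs in cells.values() for x in obs)
    cell_mean = {k: mean(v) for k, v in cells.items()}
    a_mean = {a: mean(cell_mean[(a, b)] for b in levels_b) for a in levels_a}
    b_mean = {b: mean(cell_mean[(a, b)] for a in levels_a) for b in levels_b}

    # Sums of squares for each main effect, the interaction, and the error term
    ss_a = n * len(levels_b) * sum((a_mean[a] - grand) ** 2 for a in levels_a)
    ss_b = n * len(levels_a) * sum((b_mean[b] - grand) ** 2 for b in levels_b)
    ss_ab = n * sum(
        (cell_mean[(a, b)] - a_mean[a] - b_mean[b] + grand) ** 2
        for a in levels_a for b in levels_b
    )
    ss_err = sum((x - cell_mean[k]) ** 2 for k, obs in cells.items() for x in obs)

    df_a, df_b = len(levels_a) - 1, len(levels_b) - 1
    df_err = len(levels_a) * len(levels_b) * (n - 1)
    ms_err = ss_err / df_err
    return {
        "F_A": (ss_a / df_a) / ms_err,
        "F_B": (ss_b / df_b) / ms_err,
        "F_AxB": (ss_ab / (df_a * df_b)) / ms_err,
        "df_error": df_err,
    }

result = two_way_anova_2x2(cells)
print(result)
```

Each F value is compared against an F distribution with 1 and `df_error` degrees of freedom. The formulas here only hold for balanced designs; unbalanced data need a regression-based approach.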

Q 8. Explain Type I and Type II errors in hypothesis testing.

In hypothesis testing, we make decisions based on sample data about a population. Type I and Type II errors represent the risk of making incorrect decisions. A Type I error, also known as a false positive, occurs when we reject a true null hypothesis. Think of it like this: you’re testing a new drug, and your experiment concludes it’s effective (rejecting the null hypothesis that it’s ineffective), when in reality, it’s not. A Type II error, or a false negative, happens when we fail to reject a false null hypothesis. In the same drug example, this would mean your experiment concludes the drug is ineffective (failing to reject the null hypothesis), when, in fact, it is effective.

The probability of making a Type I error is denoted by α (alpha), often set at 0.05 (5%). The probability of making a Type II error is denoted by β (beta). The power of a test (1-β) represents the probability of correctly rejecting a false null hypothesis. Balancing these error types is crucial: for a fixed sample size and effect size, lowering α raises β, so the only way to reduce both is to collect more data or reduce measurement noise.

Q 9. How do you interpret a p-value?

The p-value is the probability of observing results as extreme as, or more extreme than, the ones obtained in your experiment, assuming the null hypothesis is true. It’s a measure of evidence against the null hypothesis. A small p-value (typically less than 0.05) is conventionally treated as sufficient evidence against the null hypothesis, leading us to reject it. However, it’s crucial to understand that a p-value doesn’t measure the probability that the null hypothesis is true or false. It’s simply a probability calculated under the assumption that the null hypothesis is true. For example, a p-value of 0.03 indicates that, if the null hypothesis were true, there would be a 3% chance of observing results at least as extreme as those obtained. This low probability often leads us to reject the null hypothesis, but it doesn’t definitively prove the alternative hypothesis.
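One way to see the "as extreme or more extreme, assuming the null is true" definition in action is an exact permutation test. The sketch below, with made-up reaction times and a hypothetical function name `permutation_p_value`, relabels the observations in every possible way (which is what the null hypothesis of no group difference permits) and counts how often the relabeled difference in means is at least as large as the observed one.

```python
from itertools import combinations
from statistics import mean

def permutation_p_value(group_a, group_b):
    """Exact two-sided permutation p-value for a difference in means."""
    pooled = group_a + group_b
    n_a, n_b = len(group_a), len(group_b)
    observed = abs(mean(group_a) - mean(group_b))
    total = sum(pooled)
    extreme = 0
    n_splits = 0
    # Under the null, every way of splitting the pooled data is equally likely
    for idx in combinations(range(len(pooled)), n_a):
        sum_a = sum(pooled[i] for i in idx)
        diff = abs(sum_a / n_a - (total - sum_a) / n_b)
        n_splits += 1
        if diff >= observed - 1e-12:     # small tolerance for float comparison
            extreme += 1
    return extreme / n_splits

caffeine = [250, 245, 260, 240]   # hypothetical reaction times in ms
placebo = [270, 280, 265, 275]
p = permutation_p_value(caffeine, placebo)
print(f"p = {p:.4f}")
```

With these eight values there are 70 possible splits, and only the observed split and its mirror image are this extreme, so the p-value is 2/70. Enumerating every split is only practical for small samples; larger studies would sample random relabelings instead.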

Q 10. What is the difference between correlation and causation?

Correlation describes a relationship between two variables; they tend to change together. Causation implies that one variable directly influences another. Correlation does not imply causation. Just because two variables are correlated doesn’t mean one causes the other. There might be a third, confounding variable influencing both. For instance, ice cream sales and crime rates might be positively correlated (both increase during summer), but ice cream sales don’t cause crime. The underlying cause is the warmer weather.

To establish causation, we typically need experimental evidence, demonstrating a cause-and-effect relationship through controlled manipulation of the independent variable and observation of its effect on the dependent variable. This often involves randomized controlled trials to minimize confounding variables.

Q 11. Describe your experience with A/B testing methodologies.

I have extensive experience with A/B testing methodologies. I’ve designed and implemented numerous A/B tests to optimize website design, marketing campaigns, and user interfaces. My experience encompasses all stages, from defining the hypothesis and selecting metrics to data collection, analysis, and reporting. For example, in one project, we tested two different call-to-action buttons on a landing page – one using imperative language and the other using a more subtle approach. We used a split-testing approach, randomly assigning users to either version (A or B) and tracking conversion rates. Statistical analysis of the results helped determine which button design led to a statistically significant improvement in conversions.

Beyond simple A/B tests, I’m familiar with more sophisticated techniques like multivariate testing (testing multiple variations of multiple elements simultaneously) and Bayesian A/B testing (incorporating prior knowledge to improve the efficiency of the test). Proper sample size calculation and the use of appropriate statistical tests are crucial aspects of my approach.
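As a sketch of the statistical analysis step for a conversion-rate A/B test like the one described above, the following code runs a two-sided two-proportion z-test with the standard library. The visitor and conversion counts are invented for illustration, and the function name `two_proportion_z_test` is hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the null hypothesis of no difference
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical results: version A converts 200 of 2000 users, version B 250 of 2000
z, p_value = two_proportion_z_test(200, 2000, 250, 2000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

The test assumes the sample size was fixed in advance; repeatedly checking the result and stopping as soon as it looks significant inflates the false positive rate.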

Q 12. How would you design an experiment to test the effectiveness of a new marketing campaign?

To test the effectiveness of a new marketing campaign, I’d design a controlled experiment, likely an A/B test. The experimental group would be exposed to the new campaign, while the control group would receive either the existing campaign or no campaign (depending on the context). The key is to randomly assign participants to either group to minimize bias. I would clearly define the metrics of success beforehand, for example, conversion rates, click-through rates, or brand awareness metrics measured through surveys.

The experiment’s duration needs careful consideration, balancing the need for sufficient data with practical constraints. Throughout the experiment, continuous monitoring would be essential to detect any unexpected issues or significant changes that might affect the results. Finally, robust statistical analysis would be used to determine if the new campaign’s impact is statistically significant compared to the control group. I would also consider using techniques like power analysis to determine the required sample size for sufficient statistical power.

Q 13. Explain the process of data cleaning and preprocessing for experimental data.

Data cleaning and preprocessing are critical steps in experimental data analysis. This process involves several steps, starting with data validation to ensure data accuracy and consistency. I would check for missing values, outliers, and inconsistencies. Handling missing data might involve imputation (filling in missing values using statistical methods) or removal of data points depending on the amount and nature of missing data. Outliers need careful consideration. Sometimes they represent errors, and removal is justified. Other times they might represent genuine extreme values. A thorough investigation is crucial before deciding whether to keep or remove these data points.

Data transformation might be necessary to meet the assumptions of statistical tests. This might involve scaling variables (e.g., using standardization or normalization), transforming non-normal distributions (e.g., using logarithmic transformation) or creating new variables from existing ones (e.g., calculating ratios or interaction terms). Finally, data cleaning also often involves error correction and dealing with inconsistencies in data formats or coding schemes.
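The steps above can be sketched in a few lines. This example flags potential outliers with the 1.5 × IQR rule (one common convention, not the only one), applies a logarithmic transformation, and standardizes the result. The response times and the helper names `iqr_outliers` and `standardize` are hypothetical.

```python
from math import log
from statistics import mean, quantiles, stdev

def iqr_outliers(values, k=1.5):
    """Return values lying more than k * IQR outside the quartiles."""
    q1, _, q3 = quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [x for x in values if x < low or x > high]

def standardize(values):
    """Rescale values to mean 0 and sample standard deviation 1."""
    m, s = mean(values), stdev(values)
    return [(x - m) / s for x in values]

# Hypothetical response times in ms; the last value looks suspicious
response_ms = [310, 295, 330, 305, 320, 300, 315, 1900]

flagged = iqr_outliers(response_ms)          # flag for investigation, do not auto-delete
log_ms = [log(x) for x in response_ms]       # log transform to reduce right skew
z_scores = standardize(log_ms)

print("Flagged for review:", flagged)
print("Standardized log values:", [round(z, 2) for z in z_scores])
```

Flagged values are only candidates for review: whether 1900 ms is a recording error or a genuine slow response is a judgment the analyst still has to make and document.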

Q 14. What are some common challenges encountered in experimental design and implementation?

Several challenges can arise during experimental design and implementation. One common issue is confounding variables – factors other than the independent variable that might influence the dependent variable, thus affecting the interpretation of results. Another issue is ensuring sufficient statistical power to detect significant effects. Small sample sizes can lead to false negatives. Ethical considerations are crucial, especially when involving human subjects; informed consent and data privacy are paramount.

External validity – whether results can be generalized to other contexts – is also a challenge. The experimental setting might not perfectly reflect the real-world scenario. Finally, resource constraints – such as time, budget, or participant availability – can significantly impact the feasibility and quality of experiments. Careful planning and consideration of these factors are crucial for successful experimental design and implementation.

Q 15. How do you handle missing data in an experimental dataset?

Missing data is a common challenge in experimental datasets. The best approach depends heavily on the nature of the missing data: is it missing completely at random (MCAR), missing at random (MAR), or missing not at random (MNAR)? MCAR implies the missingness is unrelated to both observed and unobserved data; MAR means missingness depends only on observed variables; and MNAR, the most problematic, means missingness depends on the unobserved values themselves, even after accounting for the observed data.

- For MCAR data: Simple methods like listwise deletion (removing entire cases with missing data) give unbiased estimates, at the cost of statistical power, and are acceptable if the amount of missing data is small. Single imputation, such as replacing missing values with the mean, median, or mode, is also an option, but it understates variability and weakens correlations between variables, so it should be used with care.

- For MAR data: More sophisticated imputation techniques are needed, such as multiple imputation (creating several plausible imputed datasets and combining results), or maximum likelihood estimation. These methods account for the uncertainty introduced by the imputation.

- For MNAR data: Handling MNAR data is complex and often requires specialized statistical modeling techniques, potentially including model-based imputation or using techniques that explicitly account for the non-random nature of the missingness. Ignoring this type of missing data can lead to severely biased results.

Example: In a clinical trial measuring blood pressure, some participants may miss a follow-up appointment (potentially MAR, if the absence relates to health status that was recorded at earlier visits), while others might refuse to provide blood pressure readings (potentially MNAR, if the refusal is driven by the unrecorded reading itself, for example through anxiety about a high result). The chosen method must address the relevant type of missingness appropriately.
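To illustrate why simple mean imputation needs care, the sketch below compares listwise deletion with mean imputation on a small set of made-up blood pressure readings. The helper names `listwise_delete` and `mean_impute` are hypothetical. It is a demonstration of the variance problem, not a recommended workflow; multiple imputation would normally be done with a dedicated statistics package.

```python
from statistics import mean, variance

def listwise_delete(values):
    """Drop missing observations (None) entirely."""
    return [x for x in values if x is not None]

def mean_impute(values):
    """Replace each missing observation with the mean of the observed ones."""
    fill = mean(x for x in values if x is not None)
    return [fill if x is None else x for x in values]

# Hypothetical systolic readings with two missing follow-ups
systolic = [118, 125, None, 140, 132, None, 121, 150]

complete = listwise_delete(systolic)
imputed = mean_impute(systolic)

print("Mean (deleted vs imputed):    ", mean(complete), mean(imputed))
print("Variance (deleted vs imputed):", variance(complete), round(variance(imputed), 1))
```

The mean is unchanged, but the imputed dataset has a smaller variance because the filled-in values sit exactly at the center. That artificially narrows standard errors, which is the uncertainty that multiple imputation is designed to preserve.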

Q 16. What software packages are you proficient in for statistical analysis?

My statistical analysis skillset is robust and includes proficiency in several widely used software packages. I’m highly experienced with R, leveraging its extensive statistical libraries like dplyr for data manipulation, ggplot2 for visualization, and lme4 for mixed-effects modeling. I also have considerable experience with Python, utilizing packages such as pandas for data analysis, scikit-learn for machine learning, and statsmodels for statistical modeling. Finally, I’m familiar with SPSS, which is valuable for its user-friendly interface and its powerful capabilities in analyzing large datasets.

The choice of software depends on the specific needs of the project. R and Python offer greater flexibility and power for complex analyses and customizability, whereas SPSS might be preferred for its user-friendliness in simpler projects or when working with teams less familiar with coding.

Q 17. Describe your experience with different experimental designs (e.g., randomized controlled trials, factorial designs).

I’ve extensively utilized various experimental designs throughout my career. My experience spans from simple randomized controlled trials (RCTs) to more complex factorial designs and quasi-experimental designs.

- Randomized Controlled Trials (RCTs): The gold standard for establishing causality, RCTs involve randomly assigning participants to treatment and control groups. I’ve worked on numerous RCTs, ensuring rigorous randomization procedures to minimize bias and employing appropriate statistical tests (e.g., t-tests, ANOVA) to compare group outcomes. For example, I helped design an RCT evaluating the effectiveness of a new drug for treating hypertension, carefully considering factors like blinding, sample size, and potential confounders.

- Factorial Designs: These designs allow for investigating the effects of multiple independent variables (factors) and their interactions. I’ve used these designs to assess the combined impact of different factors, such as fertilizer type and watering frequency on crop yield, leading to more efficient resource allocation and optimized outcomes. Analyzing these designs often requires ANOVA or regression models.

- Quasi-Experimental Designs: When random assignment isn’t feasible, quasi-experimental designs are used. These might involve comparing outcomes before and after an intervention, or comparing groups that naturally differ (e.g., comparing academic performance in schools with different funding levels). Careful consideration of potential confounders and using appropriate statistical techniques are crucial here.

Q 18. How do you ensure the reproducibility of your experiments?

Reproducibility is paramount. My approach involves meticulous documentation and version control. I maintain detailed records of the experimental protocol, including participant recruitment methods, data collection procedures, data cleaning steps, and analytical methods. I use version control systems like Git to track changes in code and data, ensuring transparency and the ability to revisit any stage of the process.

Further, I strive for open science practices wherever possible by using open-source software and making data and code available (where appropriate and ethical considerations allow). This enhances the credibility and allows others to verify and build upon the findings.

Finally, I use comprehensive documentation in my reports, including details of the software versions, packages, and settings used, so that any future analysis can use the same environment and generate the same results.
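A minimal sketch of two of these habits, fixing random seeds and recording the software environment, might look like the following. The package names passed to `environment_record`, the seed value, and both function names are placeholders for illustration; a real project would list the packages it actually depends on.

```python
import json
import platform
import random
import sys
from importlib import metadata

def environment_record(packages=()):
    """Collect interpreter, platform, and package versions for the report."""
    record = {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": {},
    }
    for name in packages:
        try:
            record["packages"][name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            record["packages"][name] = "not installed"
    return record

def run_analysis(seed):
    """Stand-in for any step that uses randomness (sampling, bootstrapping, ...)."""
    rng = random.Random(seed)      # local generator, so no hidden global state
    return [rng.random() for _ in range(3)]

SEED = 20240101  # hypothetical value; store it alongside the results
print(json.dumps(environment_record(["numpy", "pandas"]), indent=2))
print(run_analysis(SEED) == run_analysis(SEED))   # same seed, same draws
```

Saving this record next to the outputs, and committing it with the code, gives a later reader what they need to rebuild a matching environment.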

Q 19. How do you communicate the results of your experiments to both technical and non-technical audiences?

Effective communication is vital. When communicating to technical audiences, I focus on detailed methodology, statistical analyses, and nuanced interpretations. I might present findings using graphs, tables, and code, relying on their statistical knowledge. For non-technical audiences, I prioritize clear, concise language, focusing on the key findings and their practical implications. Visual aids like charts and infographics are invaluable in simplifying complex information.

Regardless of the audience, I consistently emphasize the limitations of the study and any potential biases, ensuring transparency and promoting critical thinking. In both cases, storytelling can help to make complex data more relatable and engaging.

For example, when presenting results of a study on customer satisfaction, I might present statistical significance to technical stakeholders, but emphasize the percentage increase in customer happiness and the company’s revenue increase for a non-technical audience.

Q 20. What is power analysis, and why is it important?

Power analysis is a crucial step in experimental design. It’s a statistical method used to determine the sample size needed to detect an effect of a given size with a specified power (typically 80% or 90%) at a chosen significance level. A study with insufficient power might fail to detect a real effect (Type II error), while one that recruits far more participants than needed wastes resources.

Why is it important? Power analysis helps to optimize resource allocation. It prevents wasting time and money on underpowered studies that might yield inconclusive results. It also helps avoid the ethical issues associated with unnecessarily exposing participants to treatments or interventions that may be ineffective or even harmful.

Example: Imagine a study testing a new teaching method. A power analysis helps determine how many students need to participate to confidently detect a meaningful improvement in test scores, given the expected effect size of the new method. Without power analysis, the study might not have enough participants to show the method’s effectiveness even if it really is effective.
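The teaching-method example can be made concrete by asking the question in reverse: given a planned number of students per group, how much power would the study have? The sketch below uses a normal approximation for a two-sided, two-group comparison. The function name `approx_power` and the assumed effect size of d = 0.5 are illustrative assumptions, not values from any real study.

```python
from statistics import NormalDist

def approx_power(effect_size_d, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample comparison of means.

    Normal approximation that ignores the negligible chance of rejecting
    in the wrong direction; exact t-based power is slightly lower.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    # Expected size of the test statistic if the assumed effect is real
    noncentrality = effect_size_d * (n_per_group / 2) ** 0.5
    return 1 - z.cdf(z_crit - noncentrality)

for n in (20, 40, 64, 100):
    print(f"n = {n:3d} per group -> power of about {approx_power(0.5, n):.2f}")
```

With an assumed medium effect, 20 students per group would miss the effect more often than not, while roughly 64 per group reaches the conventional 80% target. Lowering alpha while keeping n fixed reduces power, which is the trade-off behind choosing a significance level.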

Q 21. Explain the concept of effect size and its interpretation.

Effect size quantifies the magnitude of an effect. It tells us ‘how big’ the difference is between groups or the strength of a relationship between variables. Unlike a p-value, which shrinks as the sample grows even when the underlying effect stays the same, an effect size does not systematically change with sample size; a larger sample only estimates it more precisely.

Several measures of effect size exist, depending on the type of analysis. For example:

- Cohen’s d: Used for comparing means between two groups. A Cohen’s d of 0.2 is considered small, 0.5 medium, and 0.8 large.

- Eta-squared (η²): Used in ANOVA to measure the proportion of variance in the dependent variable explained by the independent variable. Values closer to 1 indicate a stronger effect.

- Correlation coefficient (r): Used to measure the strength and direction of the linear relationship between two variables. Ranges from -1 (perfect negative correlation) to +1 (perfect positive correlation).

Interpretation: A large effect size indicates a substantial difference or relationship, suggesting a stronger practical significance. A small effect size, even if statistically significant, might have limited practical implications. Effect sizes complement p-values; considering both is essential for a complete interpretation of experimental results. For instance, a statistically significant (low p-value) effect with a small effect size suggests that while the effect is real, its practical implications may be limited.
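As a small worked example of the first measure above, this sketch computes Cohen’s d with a pooled standard deviation and attaches the conventional label. The test scores are invented, the function names `cohens_d` and `label` are hypothetical, and the "negligible" category for values below 0.2 is an assumption added here for completeness.

```python
from math import sqrt
from statistics import mean, variance

def cohens_d(group_1, group_2):
    """Cohen's d: difference in means divided by the pooled standard deviation."""
    n1, n2 = len(group_1), len(group_2)
    pooled_var = (
        (n1 - 1) * variance(group_1) + (n2 - 1) * variance(group_2)
    ) / (n1 + n2 - 2)
    return (mean(group_1) - mean(group_2)) / sqrt(pooled_var)

def label(d):
    """Conventional benchmarks: 0.2 small, 0.5 medium, 0.8 large."""
    size = abs(d)
    if size < 0.2:
        return "negligible"   # assumed label for values below the 'small' benchmark
    if size < 0.5:
        return "small"
    if size < 0.8:
        return "medium"
    return "large"

treatment = [78, 82, 85, 80, 90]   # hypothetical test scores
control = [75, 79, 80, 77, 84]

d = cohens_d(treatment, control)
print(f"Cohen's d = {d:.2f} ({label(d)})")
```

The benchmarks are rules of thumb; what counts as a meaningful effect depends on the field and on the cost of acting on the result.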

Q 22. How do you choose the appropriate level of significance (alpha) for a hypothesis test?

Choosing the appropriate significance level (alpha) in hypothesis testing is crucial because it directly impacts the risk of making a Type I error – rejecting a true null hypothesis. Think of it like this: alpha represents the probability of falsely concluding there’s an effect when there isn’t one.

The standard alpha level is 0.05, meaning that when the null hypothesis is true there’s a 5% chance of making a Type I error. However, the optimal alpha level depends on the context of the study. In fields like drug development, where the consequences of a Type I error (approving an ineffective drug) are severe, a much lower alpha (e.g., 0.01 or even 0.001) might be preferred. Conversely, in exploratory research where the goal is to generate hypotheses, a slightly higher alpha (e.g., 0.10) might be acceptable.

The choice also depends on the power of the test (the probability of correctly rejecting a false null hypothesis). A lower alpha reduces the power, meaning you’re less likely to detect a real effect if one exists. Therefore, researchers often strive for a balance between minimizing Type I error and maximizing power, considering the specific costs and benefits associated with each type of error in their research domain.

Q 23. What are some ethical considerations in experimental research?

Ethical considerations in experimental research are paramount. They encompass the protection of participants’ rights and well-being, ensuring data integrity, and responsible reporting of findings. Key ethical considerations include:

- Informed Consent: Participants must be fully informed about the study’s purpose, procedures, risks, and benefits before agreeing to participate. They should be free to withdraw at any time without penalty.

- Confidentiality and Anonymity: Participant data must be kept confidential and anonymized whenever possible to protect their privacy.

- Minimizing Risk: Researchers must take steps to minimize any physical, psychological, or social risks to participants. This might involve rigorous safety protocols or psychological support.

- Data Integrity and Transparency: Researchers must adhere to strict data collection and analysis procedures to ensure the accuracy and validity of their findings. They should be transparent about their methods and data.

- Avoiding Bias and Misrepresentation: Researchers should strive to avoid bias in their study design, data analysis, and reporting of results. They should accurately represent their findings without exaggerating or misinterpreting them.

- Beneficence and Non-maleficence: The research should aim to benefit participants and society and should avoid causing harm.

Institutional Review Boards (IRBs) play a crucial role in overseeing the ethical conduct of research.

Q 24. Describe a time you encountered a problem during an experiment. How did you solve it?

During a study on the effectiveness of a new teaching method, we encountered unexpectedly high attrition in the control group. Because dropout differed between groups, this threatened the internal validity of our experiment: the participants remaining in the control group might no longer be comparable to the experimental group or representative of the original sample.

To address this, we first investigated the reasons for the attrition. Through follow-up surveys and interviews, we discovered that the control group’s curriculum was perceived as less engaging compared to the experimental group’s new method. We couldn’t simply replace the dropped participants, as this could introduce further bias. Instead, we adjusted our analysis strategy. We employed multiple imputation to estimate the missing outcomes from the observed characteristics of the participants. This let us adjust for attrition that was related to those observed characteristics, and we acknowledged in our final conclusions that dropout driven by unobserved factors could still bias the results.

Q 25. How do you identify and address potential biases in your experimental design?

Identifying and addressing bias is critical for ensuring the validity of experimental findings. Biases can arise at various stages of the research process, from study design to data analysis. Here’s a systematic approach:

- Randomization: Randomly assigning participants to different groups minimizes selection bias, ensuring that groups are comparable at the outset.

- Blinding: Blinding participants and researchers to group assignments reduces the risk of bias in data collection and interpretation (e.g., placebo effects). Double-blinding is ideal, where neither the participant nor the researcher knows the treatment assignment.

- Control Groups: Using a control group allows for comparison and helps isolate the effect of the independent variable.

- Standardized Procedures: Implementing standardized protocols for data collection and analysis reduces measurement bias and ensures consistency across groups.

- Objective Measures: Using objective measures minimizes subjective interpretation and reduces bias in data analysis.

- Sensitivity Analysis: Performing sensitivity analysis helps assess the robustness of findings to potential biases or deviations from assumptions.

For example, in a study on the effects of a new drug, blinding is crucial to prevent placebo effects. Similarly, standardized data collection procedures ensure consistency in measuring outcomes across participants.

Q 26. How familiar are you with different statistical modeling techniques (e.g., regression, ANOVA)?

I’m highly familiar with various statistical modeling techniques, including linear and logistic regression, analysis of variance (ANOVA), and mixed-effects models. My experience includes applying these techniques in various experimental designs, including:

- Regression: Used to model the relationship between a dependent variable and one or more independent variables. I’m proficient in interpreting regression coefficients, assessing model fit (e.g., R-squared), and dealing with issues like multicollinearity.

- ANOVA: Used to compare means across different groups or conditions. I can conduct one-way, two-way, and repeated-measures ANOVAs and understand post-hoc tests for multiple comparisons.

- Mixed-effects models: Used to analyze data with hierarchical or nested structures, accounting for correlations within groups. This is particularly useful in longitudinal studies or experiments with repeated measures.

My expertise extends to selecting the appropriate statistical model based on the research question, data type, and experimental design. I also have experience in model diagnostics and validation to ensure the reliability and accuracy of the results.
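To make the regression point concrete, here is a minimal sketch of a simple linear regression fitted by ordinary least squares, with R-squared as the fit measure. The `dose` and `response` values are invented for illustration, and in practice a statistics package would usually be used instead of hand-written formulas.

```python
def fit_simple_regression(x, y):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n

    # Slope is the covariance of x and y divided by the variance of x.
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x

    # R-squared: share of the variance in y explained by the fitted line.
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    r_squared = 1 - ss_res / ss_tot
    return slope, intercept, r_squared

# Hypothetical data: response measured at six dose levels.
dose = [0, 1, 2, 3, 4, 5]
response = [2.1, 3.9, 6.2, 7.8, 10.1, 12.0]
slope, intercept, r2 = fit_simple_regression(dose, response)
print(f"slope={slope:.3f}, intercept={intercept:.3f}, R^2={r2:.4f}")
```

The slope is read as the expected change in the response per unit increase in dose, which is the kind of coefficient interpretation an interviewer is likely to probe.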

Q 27. What are some common threats to internal and external validity?

Internal validity refers to the extent to which the observed effects are truly due to the independent variable and not to confounding factors. External validity refers to the generalizability of the findings to other populations, settings, and times. Common threats to these include:

Threats to Internal Validity:

- Confounding variables: Uncontrolled variables that vary along with the independent variable and also influence the dependent variable.

- History: External events that occur during the experiment that influence the results.

- Maturation: Changes in the participants over time that affect the dependent variable.

- Testing effects: The act of testing itself influencing subsequent test results.

- Instrumentation: Changes in measurement instruments or procedures that affect results.

- Regression to the mean: Extreme scores tending toward the average on subsequent measurements.

Threats to External Validity:

- Selection (sampling) bias: Non-representative samples, which limit how far the results generalize to the target population.

- Setting limitations: Results specific to a particular setting or context.

- Time limitations: Results specific to a particular time period.

- Interaction of selection and treatment: Effects that hold only for the particular type of participant studied.

Careful experimental design, appropriate controls, and rigorous data analysis help to mitigate these threats.
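Regression to the mean is often the least intuitive of these threats, so a small simulation may help. The sketch below assumes each observed score is a stable true score plus independent measurement noise; the sample size, means, standard deviations, and seed are arbitrary values picked for illustration.

```python
import random
from statistics import mean

rng = random.Random(7)   # fixed seed for reproducibility
n = 5000

# Assumed model: observed score = stable true score + independent noise.
true_score = [rng.gauss(100, 10) for _ in range(n)]
test1 = [t + rng.gauss(0, 10) for t in true_score]
test2 = [t + rng.gauss(0, 10) for t in true_score]

# Select the top 10% on the first test, as a study recruiting
# "extreme" cases might do. No treatment is applied to anyone.
cutoff = sorted(test1)[int(0.9 * n)]
selected = [i for i in range(n) if test1[i] >= cutoff]

first_mean = mean(test1[i] for i in selected)
retest_mean = mean(test2[i] for i in selected)
print(f"selected group, first test: {first_mean:.1f}")
print(f"selected group, retest:     {retest_mean:.1f}")
```

The selected group scores lower on the retest even though nothing was done to them, which is why a control group selected the same way is needed before attributing such a change to a treatment.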

Q 28. How would you design an experiment to investigate the interaction effect between two independent variables?

To investigate the interaction effect between two independent variables, a factorial design is ideal. Let’s say we want to study the effect of fertilizer type (A and B) and watering frequency (low and high) on plant growth. A 2×2 factorial design would be appropriate.

Design:

- Independent Variables: Fertilizer type (2 levels: A, B) and watering frequency (2 levels: low, high).

- Dependent Variable: Plant growth (e.g., height).

- Groups: Four groups of plants would be created, each receiving a unique combination of fertilizer and watering frequency: (A, low), (A, high), (B, low), (B, high).

- Random Assignment: Plants are randomly assigned to each group.

- Measurements: Plant growth is measured after a set period.

Analysis: A two-way ANOVA would be used to analyze the data. This will show the main effects of each independent variable (fertilizer type and watering frequency) as well as their interaction effect. A significant interaction effect indicates that the effect of one independent variable depends on the level of the other. For instance, fertilizer A might perform better under high watering frequency, while fertilizer B might perform better under low watering frequency.
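The analysis described above can be sketched for a balanced design with the standard sums-of-squares decomposition. The plant heights below are invented, the helper name `two_way_anova_balanced` is hypothetical, and the code assumes every cell has the same number of plants; p-values would come from the F distribution with the corresponding degrees of freedom, typically via a statistics package.

```python
from statistics import mean

# Hypothetical plant heights (cm), three plants per cell.
cells = {
    ("A", "low"): [10, 11, 12],
    ("A", "high"): [18, 19, 20],
    ("B", "low"): [14, 15, 16],
    ("B", "high"): [13, 14, 15],
}

def two_way_anova_balanced(cells):
    """F statistics for a balanced two-factor design (equal n per cell)."""
    a_levels = sorted({a for a, _ in cells})
    b_levels = sorted({b for _, b in cells})
    n = len(next(iter(cells.values())))      # replicates per cell

    cell_mean = {k: mean(v) for k, v in cells.items()}
    grand = mean(list(cell_mean.values()))
    a_mean = {a: mean([cell_mean[(a, b)] for b in b_levels]) for a in a_levels}
    b_mean = {b: mean([cell_mean[(a, b)] for a in a_levels]) for b in b_levels}

    # Main-effect sums of squares from the marginal means.
    ss_a = n * len(b_levels) * sum((m - grand) ** 2 for m in a_mean.values())
    ss_b = n * len(a_levels) * sum((m - grand) ** 2 for m in b_mean.values())
    # Interaction is what remains of the between-cell variation.
    ss_cells = n * sum((m - grand) ** 2 for m in cell_mean.values())
    ss_ab = ss_cells - ss_a - ss_b
    # Error is the variation of plants around their own cell mean.
    ss_error = sum((y - cell_mean[k]) ** 2 for k, v in cells.items() for y in v)

    df_a, df_b = len(a_levels) - 1, len(b_levels) - 1
    df_error = len(cells) * (n - 1)
    ms_error = ss_error / df_error
    return {
        "F_fertilizer": (ss_a / df_a) / ms_error,
        "F_watering": (ss_b / df_b) / ms_error,
        "F_interaction": (ss_ab / (df_a * df_b)) / ms_error,
        "df_error": df_error,
    }

result = two_way_anova_balanced(cells)
print(result)
```

In this made-up data, fertilizer A gains 8 cm from high watering while fertilizer B loses 1 cm, so the interaction term dominates and the main effect of fertilizer alone is small. This is the pattern in which interpreting main effects without the interaction would be misleading.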

Key Topics to Learn for Experimental Design and Implementation Interview

- Defining Research Questions & Hypotheses: Understanding how to formulate clear, testable hypotheses and operationalize variables for rigorous experimentation.

- Experimental Designs: Mastering different experimental designs (e.g., A/B testing, factorial designs, randomized controlled trials) and their appropriate applications. Knowing the strengths and limitations of each design is crucial.

- Data Collection & Measurement: Understanding various data collection methods, ensuring reliability and validity of measurements, and handling missing data appropriately.

- Statistical Analysis & Interpretation: Proficiency in statistical methods relevant to experimental design, including t-tests, ANOVA, regression analysis, and understanding p-values and effect sizes. Being able to communicate statistical findings clearly is essential.

- Ethical Considerations: Demonstrating awareness of ethical principles in research, including informed consent, data privacy, and minimizing bias.

- Practical Application & Case Studies: Being able to discuss real-world applications of experimental design and implementation across various fields (e.g., software development, marketing, healthcare). Preparing examples from your own experience is highly beneficial.

- Troubleshooting & Problem Solving: Understanding common challenges in experimental design and implementation (e.g., confounding variables, sample size limitations) and demonstrating the ability to propose solutions and alternative approaches.

- Communication & Presentation of Results: Clearly articulating experimental design, methodologies, findings, and conclusions to both technical and non-technical audiences. Practice presenting your work concisely and effectively.

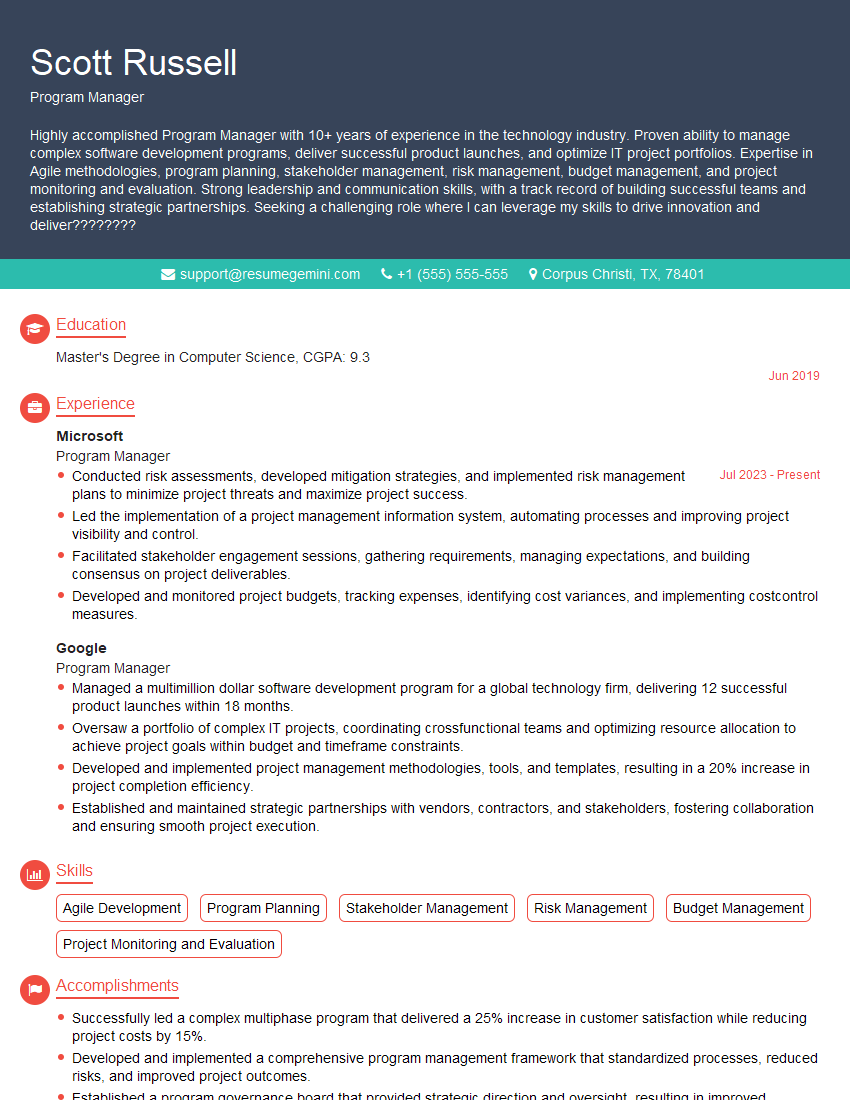

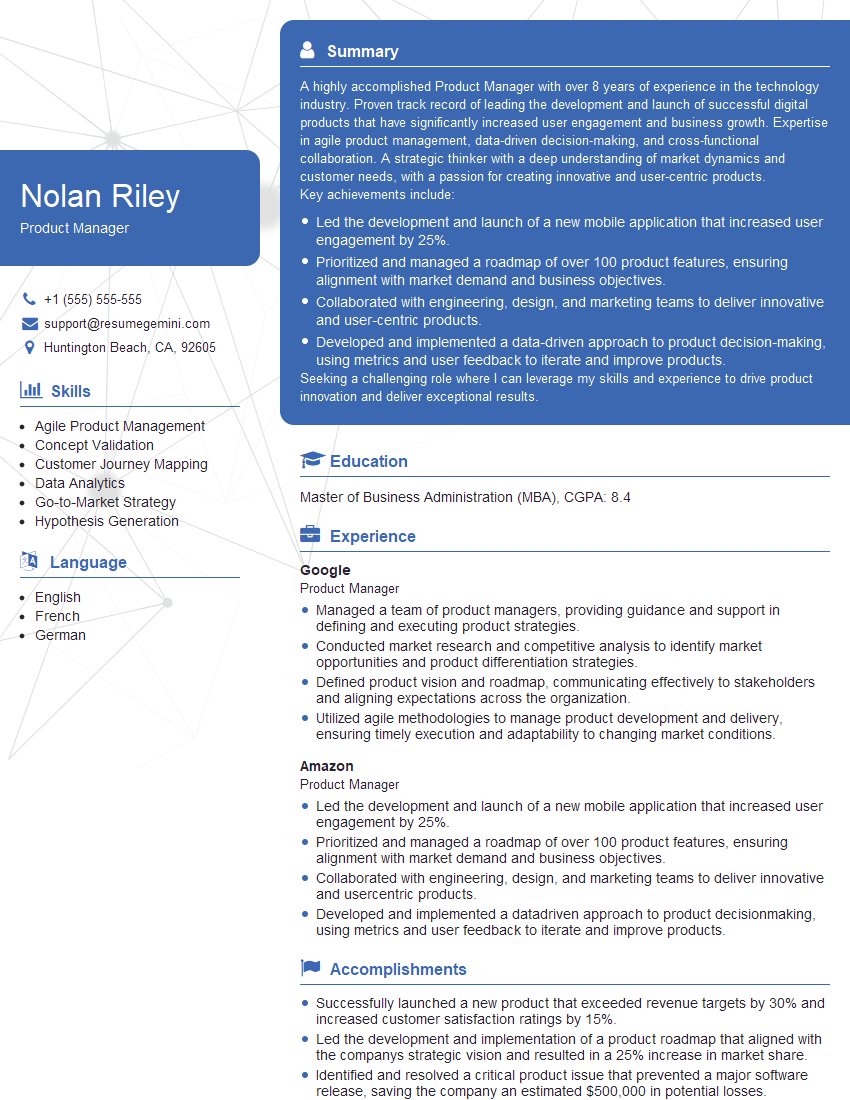

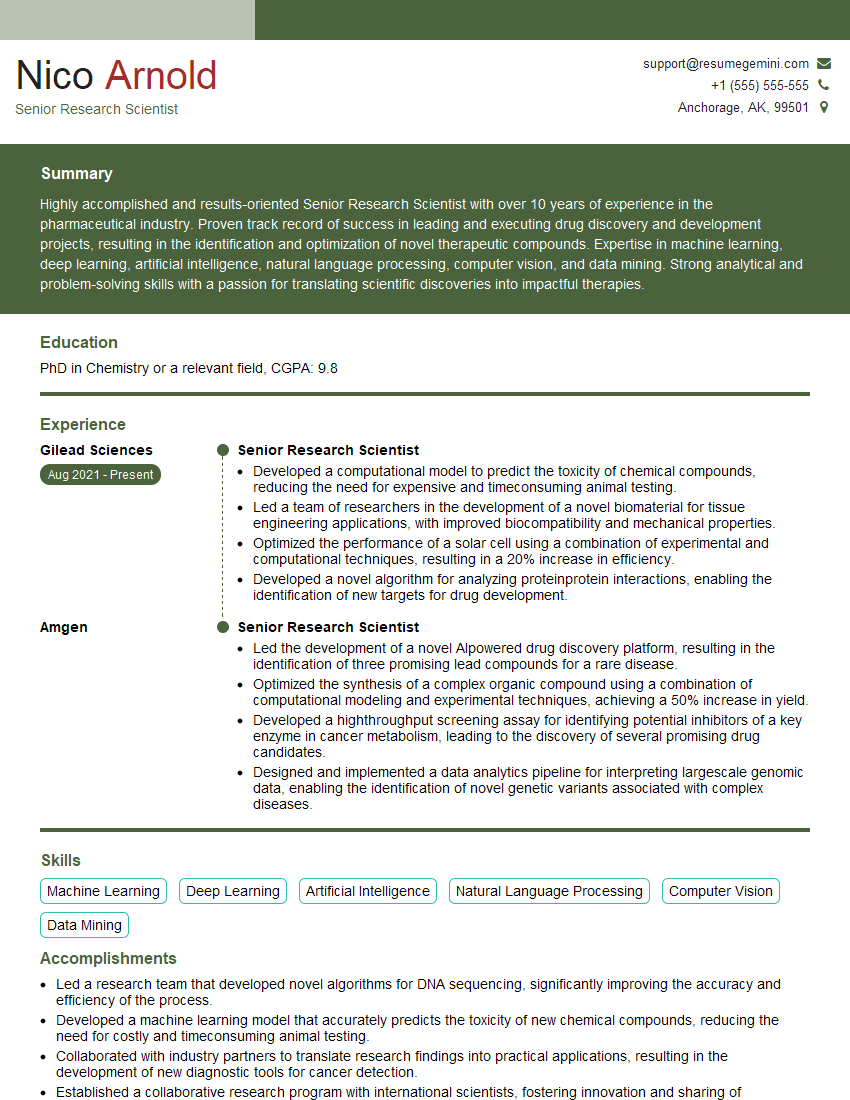

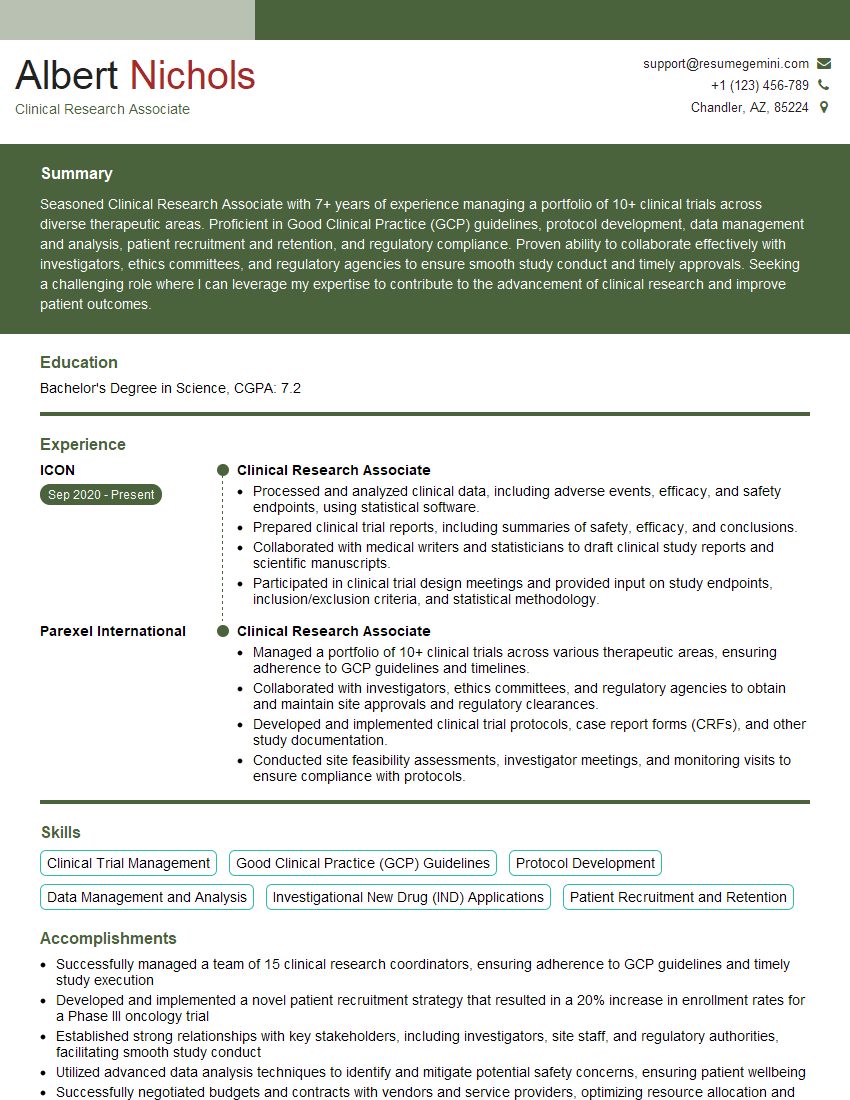

Next Steps

Mastering Experimental Design and Implementation is vital for career advancement in many fields, opening doors to exciting opportunities and higher-level positions. A strong resume is your key to unlocking these opportunities. Creating an ATS-friendly resume that highlights your skills and experience is crucial for getting noticed by recruiters. We highly recommend using ResumeGemini to build a professional and impactful resume tailored to your specific skills and experience. ResumeGemini offers examples of resumes specifically designed for candidates in Experimental Design and Implementation to help you create a compelling application.
