Unlock your full potential by mastering the most common interview questions on reliability and trustworthiness. This blog offers a deep dive into the critical topics, so you’re prepared not just to answer but to excel. With these insights, you’ll approach your interview with clarity and confidence.

Questions Asked in Reliability and Trustworthiness Interviews

Q 1. Describe your experience implementing security protocols to ensure data integrity.

Ensuring data integrity is paramount in any system dealing with sensitive information. My approach involves a multi-layered strategy focusing on prevention, detection, and recovery. This includes implementing robust access control mechanisms, using cryptographic techniques for data protection, and establishing rigorous data validation processes.

Access Control: I utilize role-based access control (RBAC) and least privilege principles to restrict access to data based on individual roles and responsibilities. This minimizes the risk of unauthorized modification or deletion.

Cryptography: Data at rest and in transit is encrypted using industry-standard algorithms like AES-256. Hashing functions are used to verify data integrity, ensuring that it hasn’t been tampered with during storage or transmission.

Data Validation: Input validation and sanitization are crucial to prevent malicious data from entering the system. This includes checks for data type, length, and format, along with escaping special characters to prevent injection attacks. Regular checksums and data comparisons are also used to detect inconsistencies.
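
The type, length, and format checks described above might look like the following minimal Python sketch. The `username` field, the length limits, and the allowed-character pattern are illustrative assumptions, not rules from any particular system.

```python
import re

# Hypothetical rule: 3 to 32 characters, letters, digits, and underscores only.
USERNAME_PATTERN = re.compile(r"[A-Za-z0-9_]{3,32}")


def validate_username(value: object) -> str:
    """Return the username if it passes type, length, and format checks."""
    if not isinstance(value, str):
        raise ValueError("username must be a string")
    if not USERNAME_PATTERN.fullmatch(value):
        raise ValueError("username must be 3-32 letters, digits, or underscores")
    return value
```

An allow-list pattern like this rejects unexpected characters outright, which is usually simpler to reason about than trying to enumerate every dangerous input.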

For example, in a previous project involving a financial database, we encrypted data in transit using TLS 1.3 and data at rest using AES-256. We also implemented robust logging and auditing to track all data access and modifications, allowing for quick identification of any unauthorized activity.
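
The hash-based integrity check mentioned above can be sketched with Python's standard library. This is a minimal illustration, assuming SHA-256 as the hash and a made-up record. The function names are hypothetical.

```python
import hashlib
import hmac


def sha256_digest(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def is_unmodified(data: bytes, expected_digest: str) -> bool:
    """Compare the current digest with the one recorded earlier."""
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(sha256_digest(data), expected_digest)


record = b"account=1234;balance=100.00"   # illustrative record
stored_digest = sha256_digest(record)      # saved when the record is written

print(is_unmodified(record, stored_digest))                          # True
print(is_unmodified(b"account=1234;balance=900.00", stored_digest))  # False
```

A plain hash only detects changes if the stored digest itself is protected. When an attacker could rewrite both, a keyed construction such as HMAC is the usual choice.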

Q 2. Explain a situation where you had to troubleshoot a critical system failure. How did you maintain reliability?

During a recent project involving a high-traffic e-commerce platform, we experienced a critical system failure due to a database overload. The website became unresponsive, causing significant revenue loss and customer dissatisfaction. Maintaining reliability under pressure required a systematic and rapid response.

Immediate Response: The first step was to isolate the problem. We used monitoring tools to identify the bottleneck, confirming that the database server was overwhelmed. Then, we immediately implemented load balancing to distribute the traffic across multiple servers.

Root Cause Analysis: Once the immediate issue was resolved, we conducted a thorough root cause analysis. We examined the database queries, server logs, and application code to identify the underlying cause of the overload. This revealed an inefficient query that was unexpectedly impacting performance.

Solution Implementation: We optimized the inefficient query, and to prevent future overloads, we implemented a caching mechanism to reduce the database load. We also upgraded the database server’s capacity and implemented stricter monitoring thresholds.

Prevention Measures: To avoid similar incidents, we implemented more comprehensive capacity planning, load testing, and introduced automated alerts for critical system parameters. Regular performance testing became part of our standard deployment process.

This experience highlighted the importance of proactive monitoring, effective communication, and rapid response in maintaining system reliability.
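
A caching layer like the one described above could be sketched as a small time-to-live (TTL) cache. This is an assumption-laden illustration: the class name, the `loader` callback, and the TTL value are hypothetical, and a production system would more likely use an existing cache service.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Cache results for a fixed number of seconds to spare the database."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get_or_load(self, key: str, loader: Callable[[], Any]) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]          # still fresh: no database call
        value = loader()             # expired or missing: run the query
        self._entries[key] = (now, value)
        return value
```

Passing the clock in as a parameter keeps the expiry logic testable without real waiting.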

Q 3. How do you prioritize tasks to ensure timely and accurate completion?

Prioritizing tasks effectively is crucial for successful project delivery. I employ a combination of methodologies, adapting my approach based on project specifics and urgency.

MoSCoW Method: I often use the MoSCoW method (Must have, Should have, Could have, Won’t have) to categorize requirements. This allows me to focus on critical tasks first while maintaining a clear understanding of potential trade-offs.

Eisenhower Matrix (Urgent/Important): This matrix helps to categorize tasks based on their urgency and importance. Urgent and important tasks receive immediate attention, while less urgent tasks are scheduled accordingly.

Dependency Mapping: Identifying task dependencies is essential to ensure efficient sequencing. This involves creating a visual representation of the tasks and their relationships, helping to determine the optimal execution order.

For example, in a recent project, we used the MoSCoW method to prioritize features. ‘Must-have’ features, like core functionality and security, were addressed first, followed by ‘Should-have’ features and finally ‘Could-have’ features.
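
Dependency mapping, as described above, amounts to a topological sort of the task graph. Here is a minimal sketch using Python's standard `graphlib` module. The task names are hypothetical.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical tasks: each key depends on the tasks in its set.
dependencies = {
    "design schema": set(),
    "build API": {"design schema"},
    "write tests": {"build API"},
    "deploy": {"build API", "write tests"},
}

# static_order() yields every task after all of its prerequisites.
order = list(TopologicalSorter(dependencies).static_order())
print(order)
```

If the graph contains a cycle, `static_order()` raises `graphlib.CycleError`, which is a useful signal that the plan itself is inconsistent.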

Q 4. What methodologies do you use to ensure the trustworthiness of your work?

Trustworthiness in my work is built on a foundation of transparency, accuracy, and meticulousness. I employ several methodologies to ensure the reliability and validity of my work.

Version Control: I consistently utilize version control systems (like Git) to track changes, enabling collaboration, rollback capabilities, and audit trails. This ensures transparency and allows for easy tracking of modifications.

Code Reviews: Peer reviews are essential for identifying potential errors, improving code quality, and sharing knowledge. This collaborative approach ensures that multiple perspectives are considered and improves the overall trustworthiness of the codebase.

Testing and Validation: Rigorous testing, including unit testing, integration testing, and system testing, is crucial for identifying bugs and ensuring functionality. Automated testing further enhances efficiency and consistency.

Documentation: Clear and concise documentation of the design, implementation, and testing processes is vital for maintainability and reproducibility. This enables others to understand and verify the work.

For example, in a previous project, we used a rigorous testing framework that included automated unit tests, integration tests, and manual user acceptance tests. This process uncovered several critical bugs and ensured that the delivered software met the required specifications.

Q 5. Describe your experience with risk assessment and mitigation strategies.

Risk assessment and mitigation are fundamental to building reliable and trustworthy systems. My approach involves a structured process that identifies, analyzes, and mitigates potential threats.

Risk Identification: I begin by identifying potential risks through brainstorming sessions, threat modeling, and vulnerability assessments. This includes considering both internal and external factors.

Risk Analysis: Each identified risk is analyzed based on its likelihood and impact. This helps to prioritize risks based on their potential severity.

Risk Mitigation: Mitigation strategies are developed and implemented to reduce the likelihood or impact of identified risks. This may involve technical controls (e.g., firewalls, intrusion detection systems), administrative controls (e.g., access control policies), or physical controls (e.g., security cameras).

Monitoring and Review: The effectiveness of implemented mitigation strategies is regularly monitored and reviewed. This allows for adjustments and improvements based on ongoing assessments.

For instance, in a recent project, we conducted a thorough risk assessment, identifying potential security vulnerabilities, data breaches, and system failures. We implemented a multi-layered security approach, including firewalls, intrusion detection systems, and regular security audits to mitigate these risks.
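
The likelihood-and-impact analysis described above is often expressed as a simple score. The sketch below assumes a 1 to 5 scale for both factors and multiplies them. The scale, the class name, and the register entries are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def prioritize(risks):
    """Return risks ordered from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Hypothetical register entries, for illustration only.
register = [
    Risk("Data breach", likelihood=2, impact=5),
    Risk("Disk failure", likelihood=3, impact=3),
    Risk("Expired TLS certificate", likelihood=4, impact=4),
]
for risk in prioritize(register):
    print(f"{risk.score:>2}  {risk.name}")
```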

Q 6. How do you handle conflicting priorities to maintain reliability and meet deadlines?

Conflicting priorities are an inevitable part of many projects. My approach to handling them involves effective communication, prioritization, and negotiation.

Open Communication: I foster open communication with stakeholders to clearly understand their priorities and concerns. This helps identify the root causes of conflicting priorities and explore potential solutions collaboratively.

Prioritization Framework: I utilize prioritization frameworks like the MoSCoW method or Eisenhower Matrix to objectively assess the relative importance of different tasks. This provides a rational basis for making difficult decisions.

Negotiation and Compromise: When necessary, I engage in constructive negotiation with stakeholders to find mutually acceptable solutions. This often involves trade-offs and compromises, balancing the needs of different parties.

Scope Management: Clearly defining and managing the project scope helps to prevent feature creep and unrealistic expectations, reducing the likelihood of conflicting priorities.

For example, in a project with competing deadlines for different features, we used a prioritization matrix to determine which features were most critical to the project’s success. We then negotiated with stakeholders to adjust expectations and timelines to accommodate the prioritized tasks.

Q 7. Explain your understanding of data security best practices.

Data security best practices encompass a wide range of measures designed to protect data from unauthorized access, use, disclosure, disruption, modification, or destruction. My understanding of these practices includes several key areas:

Access Control: Implementing strong passwords, multi-factor authentication (MFA), and role-based access control (RBAC) to restrict access to sensitive data based on individual roles and responsibilities.

Data Encryption: Using encryption both at rest and in transit to protect data from unauthorized access, even if a security breach occurs. This includes using strong encryption algorithms like AES-256.

Data Loss Prevention (DLP): Implementing measures to prevent sensitive data from leaving the organization’s control, such as data loss prevention tools and secure data disposal procedures.

Regular Security Audits and Penetration Testing: Conducting regular security assessments, vulnerability scans, and penetration testing to identify and address security weaknesses.

Security Awareness Training: Educating employees about security threats and best practices to minimize the risk of human error.

Incident Response Plan: Developing and regularly testing an incident response plan to effectively handle security incidents and minimize their impact.

Following these best practices creates a layered defense to protect data and ensure compliance with relevant regulations.
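
The role-based access control listed above can be reduced, at its core, to a lookup that denies by default. This is a minimal sketch. The roles and permissions are hypothetical, and a real system would load them from a policy store.

```python
# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "viewer": {"read"},
    "editor": {"read", "write"},
    "admin": {"read", "write", "delete"},
}


def is_allowed(role: str, action: str) -> bool:
    """Deny by default: unknown roles and unlisted actions are rejected."""
    return action in ROLE_PERMISSIONS.get(role, set())
```

Giving each role only the actions it needs is how least privilege shows up in code.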

Q 8. How do you ensure the accuracy and validity of your work?

Ensuring accuracy and validity in my work is paramount. It’s a multi-faceted process that begins with a deep understanding of the problem and the requirements. I meticulously define the scope of the work, outlining specific, measurable, achievable, relevant, and time-bound (SMART) goals. This prevents scope creep and ensures focus.

Secondly, I employ rigorous testing methodologies throughout the development lifecycle. This includes unit testing, integration testing, system testing, and user acceptance testing (UAT). Each stage verifies specific functionalities and interactions, catching errors early and preventing them from cascading into later stages. For instance, during a recent project involving a financial transaction system, unit tests ensured individual modules functioned correctly before integration, dramatically reducing overall testing time and improving accuracy.

Finally, I leverage version control systems (like Git) and documentation to track changes and maintain a clear audit trail. This allows for easy identification of errors and ensures reproducibility of results. It’s like having a detailed history of every decision made, facilitating debugging and collaborative review.

Q 9. Describe a time you had to recover from a system failure. What steps did you take?

During a critical system failure involving a customer database, I immediately activated our disaster recovery plan. The initial focus was on mitigating further damage and restoring essential services. This involved a rapid assessment of the situation, identifying the root cause (in this case, a hardware failure), and initiating a failover to a redundant system.

Next, we leveraged our monitoring tools to track the system’s recovery and performance. We systematically restored data from our backups, prioritizing critical customer information. Once the primary system was restored, a thorough post-incident review took place to identify vulnerabilities and improve our disaster recovery procedures. For instance, we discovered a weakness in our backup schedule and addressed it immediately, enhancing our resilience against future failures.

The entire process was meticulous and required collaborative effort across teams, underlining the importance of clear communication, pre-defined roles, and comprehensive planning in disaster recovery.

Q 10. How do you build and maintain trust with stakeholders?

Building and maintaining trust with stakeholders requires open communication, transparency, and consistent delivery on promises. I prioritize regular updates, both formal and informal, to keep stakeholders informed of project progress, challenges, and solutions. I actively seek feedback and address concerns promptly, demonstrating responsiveness and accountability. For example, I’ve used regular stand-up meetings to keep teams aligned, and weekly progress reports to keep management informed.

Furthermore, I strive to exceed expectations whenever possible, delivering high-quality work that consistently meets or surpasses performance standards. This demonstrates competence and builds credibility over time. In one instance, proactively identifying and addressing a potential risk to a project ahead of schedule not only prevented a major disruption, but solidified my credibility with the stakeholders involved. Trust is earned through actions and a consistent demonstration of integrity.

Q 11. What experience do you have with compliance regulations (e.g., GDPR, HIPAA)?

I have extensive experience with compliance regulations like GDPR and HIPAA. My understanding goes beyond simple adherence to regulatory text; I focus on implementing robust security measures that support these regulations. For example, understanding GDPR’s requirements for data minimization, processing limitations, and consent management heavily influenced the design and implementation of data handling procedures in several projects.

For HIPAA, my experience includes working with protected health information (PHI), ensuring adherence to security and privacy rules, and implementing access control mechanisms to prevent unauthorized access. This involved close collaboration with legal and compliance teams to ensure we consistently met the strict guidelines. Specific implementations include encryption of PHI both in transit and at rest, and robust auditing capabilities to track data access and modifications.

Q 12. Describe your experience with version control systems and their role in maintaining reliable code.

Version control systems (VCS), primarily Git, are integral to maintaining reliable code. They provide a shared repository for tracking code changes, facilitating collaboration and enabling rollback to previous versions if necessary. (Git itself is distributed: every clone carries the full history, and teams typically agree on one shared remote.) This is analogous to having a detailed history of a document with the ability to revert to any previous revision.

Utilizing branching strategies, like Gitflow, allows for parallel development and testing of features without disrupting the main codebase. This reduces the risk of introducing errors into production code. Code reviews, facilitated by VCS, are essential for detecting errors and inconsistencies before deployment. This collaborative approach improves code quality and knowledge sharing within the team. We use clear commit messages to document changes, improving traceability and understanding.

git checkout -b feature/new-module  # creates a new branch for a new feature and switches to it

Q 13. How do you utilize monitoring tools to ensure system reliability?

Monitoring tools are crucial for maintaining system reliability. They provide real-time insights into system performance, allowing for proactive identification and resolution of potential issues. I utilize a range of tools, including system metrics dashboards, log aggregation systems, and application performance monitoring (APM) platforms. These tools allow us to track key performance indicators (KPIs) like CPU utilization, memory usage, network latency, and database query times.

We set up alerts for critical thresholds, allowing for immediate notification of any anomalies. For example, a sudden spike in database errors triggers an alert, prompting investigation and remediation before it impacts users. Data from these tools is analyzed to identify trends and optimize system performance. This proactive approach minimizes downtime and improves overall system stability.
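
Threshold-based alerting of the kind described above can be sketched in a few lines. The metric names and limits below are hypothetical. Real values depend on the system being monitored, and real alerting is normally configured in the monitoring tool rather than hand-written.

```python
from typing import Dict, List

# Hypothetical thresholds, for illustration only.
THRESHOLDS = {"cpu_percent": 90.0, "db_errors_per_min": 5.0}


def check_thresholds(
    metrics: Dict[str, float],
    thresholds: Dict[str, float] = THRESHOLDS,
) -> List[str]:
    """Return one alert message for every metric above its threshold."""
    alerts = []
    for name, limit in thresholds.items():
        value = metrics.get(name)
        if value is not None and value > limit:
            alerts.append(f"{name}={value} exceeds threshold {limit}")
    return alerts


print(check_thresholds({"cpu_percent": 42.0, "db_errors_per_min": 17.0}))
```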

Q 14. Explain your approach to testing and quality assurance.

My approach to testing and quality assurance is comprehensive and iterative. I believe in employing a multi-layered testing strategy that encompasses different levels of testing. This starts with unit tests, focusing on individual components. Integration tests verify interactions between modules, and system tests validate the overall system functionality. Finally, User Acceptance Testing (UAT) ensures the system meets user requirements.

Beyond functional testing, I emphasize non-functional testing, including performance, security, and usability testing. Automation plays a significant role, automating repetitive testing tasks to increase efficiency and reduce human error. For instance, we use automated test suites that run nightly, providing early detection of regressions. Continuous integration/continuous delivery (CI/CD) pipelines further integrate testing into the development process, ensuring code quality throughout the lifecycle. The goal is to deliver high-quality software that is reliable, secure, and performs as expected.
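
As a small illustration of the unit-test layer described above, here is a sketch using Python's built-in `unittest` module. The `apply_discount` function is a hypothetical unit under test, not code from any project mentioned here.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Return the price reduced by the given percentage."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(200.0, 150)
```

Saved in a file, tests like these can be run with `python -m unittest`, which is also how a nightly job or CI pipeline would typically invoke them.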

Q 15. How do you manage competing demands while maintaining high standards of accuracy?

Managing competing demands while upholding accuracy requires a structured approach. I prioritize tasks based on their impact and urgency, using methodologies like MoSCoW (Must have, Should have, Could have, Won’t have) to categorize requirements. This ensures critical tasks contributing most to accuracy are addressed first. For example, in a recent project involving data analysis, we had competing deadlines for a report and a critical system update. Using the MoSCoW method, we prioritized the system update – ensuring data integrity – before finalizing the report. Regularly reviewing and adjusting priorities is crucial, utilizing tools like Kanban boards to visualize workflow and identify potential bottlenecks before they impact accuracy.

To maintain accuracy, I employ rigorous quality control checks at each stage. This includes regular peer reviews, automated testing, and rigorous validation against known standards. Think of it like building a house – each brick needs to be perfectly placed, and regular inspections ensure the final structure is sound and accurate. Documentation is also key; meticulously recording every step, decision, and change prevents errors and provides a clear audit trail. By combining prioritization with robust quality control, I ensure that high standards of accuracy are consistently met, even under pressure.

Q 16. Describe your experience with incident management and resolution.

My experience with incident management follows a structured, five-step process:

1. Detection and Reporting: Utilizing monitoring tools and proactive alerts, I identify incidents promptly.

2. Analysis and Diagnosis: I systematically investigate the root cause using logs, metrics, and system information, sometimes involving collaborative debugging sessions.

3. Resolution: Based on the diagnosis, I implement the necessary fixes, potentially involving code changes, configuration updates, or escalation to specialized teams.

4. Recovery: I ensure full system functionality is restored and impacted users are informed.

5. Post-Incident Review: I critically analyze the event, identifying preventative measures and documenting lessons learned to improve future responses.

For instance, in a recent incident involving a database outage, I followed this process, pinpointing a faulty configuration setting, restoring the database from a backup, and ultimately implementing a preventative automated check in our monitoring system.

I use a ticketing system to manage and track incidents, ensuring proper documentation and follow-up. Clear communication with stakeholders is crucial throughout the process, keeping them informed of progress and potential impacts. This collaborative and systematic approach ensures timely resolution and minimizes disruption.

Q 17. What strategies do you use to prevent future system failures?

Preventing future system failures involves a proactive, multi-layered approach. This begins with robust design and architecture, utilizing principles like redundancy, failover mechanisms, and load balancing. Imagine a bridge; having multiple support structures ensures stability even if one fails. Regular system health checks, including proactive monitoring and performance testing, reveal potential vulnerabilities before they escalate into failures. This involves using tools to monitor CPU usage, memory consumption, network latency, and other critical metrics.
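
The failover idea described above can be sketched as "try the primary, then fall back to a replica". This is a simplified illustration: the function name is hypothetical, and it assumes that a `ConnectionError` is how an unavailable replica shows up.

```python
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def call_with_failover(replicas: Sequence[Callable[[], T]]) -> T:
    """Try each replica in order and return the first successful result."""
    last_error = None
    for replica in replicas:
        try:
            return replica()
        except ConnectionError as error:  # assumption: this means "replica down"
            last_error = error
    raise RuntimeError("all replicas failed") from last_error
```

Real failover usually also involves health checks and timeouts, which this sketch leaves out to keep the core idea visible.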

We also employ rigorous testing strategies, including unit testing, integration testing, and end-to-end testing, throughout the development lifecycle. Automated testing is paramount, allowing for quicker identification of issues. Furthermore, I champion a culture of continuous improvement, actively participating in post-incident reviews and implementing preventative measures based on lessons learned. Finally, keeping software and infrastructure up-to-date with security patches and updates is crucial, as these often address known vulnerabilities that can lead to system failures.

Q 18. How do you ensure the confidentiality, integrity, and availability of data?

Ensuring the confidentiality, integrity, and availability (CIA triad) of data is fundamental to my work. Confidentiality is achieved through access controls, encryption (both in transit and at rest), and data masking, limiting access to authorized personnel and protecting sensitive information. Integrity is maintained through data validation, checksums, version control, and regular backups, guaranteeing data accuracy and preventing unauthorized modification. Availability is ensured by redundancy, load balancing, disaster recovery planning, and high-availability infrastructure, minimizing downtime and ensuring continuous access to data.

For example, sensitive customer data is encrypted both when stored in our database and when transmitted over the network. We also employ strict access control policies, with different user roles having varying levels of permission. Regular backups allow for quick recovery in case of data loss, ensuring high availability. This layered approach ensures robust protection across all three pillars of the CIA triad.

Q 19. Explain your understanding of different security vulnerabilities and mitigation techniques.

I possess a thorough understanding of various security vulnerabilities, including SQL injection, cross-site scripting (XSS), cross-site request forgery (CSRF), denial-of-service (DoS) attacks, and buffer overflows. My approach to mitigation focuses on both preventative and detective measures.

Preventative measures include secure coding practices, input validation, parameterized queries to prevent SQL injection, using content security policies to mitigate XSS, and implementing robust authentication and authorization mechanisms. Detective measures involve intrusion detection systems (IDS), security information and event management (SIEM) tools, and regular security audits. For instance, we employ web application firewalls (WAFs) to detect and block malicious traffic, and we regularly conduct penetration testing to identify vulnerabilities before attackers can exploit them. My knowledge extends to various compliance frameworks like ISO 27001 and NIST Cybersecurity Framework, further guiding our security strategy and implementation.
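
To illustrate the parameterized queries mentioned above, here is a minimal sketch using Python's built-in `sqlite3` module with an in-memory database. The table and its contents are made up for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
conn.execute("INSERT INTO users VALUES ('alice', 'admin')")


def find_user(connection: sqlite3.Connection, name: str):
    """Look up a user with a bound parameter instead of string formatting."""
    # The ? placeholder makes the driver treat `name` strictly as data.
    cursor = connection.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    )
    return cursor.fetchall()


print(find_user(conn, "alice"))        # [('alice', 'admin')]
print(find_user(conn, "' OR '1'='1"))  # []
```

The second call shows a classic injection string being matched literally as a name, so it returns no rows instead of every row.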

Q 20. Describe a time you had to make a difficult decision that impacted reliability. What was the outcome?

In a previous role, we faced a critical decision regarding a system upgrade. The upgrade promised significant performance improvements but carried a higher risk of downtime during the migration. The deadline was approaching quickly, and choosing to delay would have impacted several key projects. After careful consideration of risks and benefits, including assessing the potential impact of downtime versus the benefits of the upgrade, we decided to proceed with the upgrade during a scheduled maintenance window.

To mitigate risks, we created a detailed rollback plan, thoroughly tested the upgrade in a staging environment, and implemented robust monitoring to detect any issues promptly. The migration was ultimately successful, delivering the promised performance enhancements without any major incidents. The outcome demonstrated the importance of risk assessment, meticulous planning, and proactive mitigation in making difficult reliability-impacting decisions.

Q 21. How do you stay up-to-date with the latest security threats and best practices?

Staying current with the ever-evolving landscape of security threats and best practices requires a multi-pronged strategy. I actively follow industry news sources, security blogs, and research publications, focusing on emerging threats and vulnerabilities. I also participate in online communities and attend industry conferences and webinars, networking with other professionals and learning from shared experiences. Furthermore, I regularly review and update my knowledge of relevant security standards and frameworks, ensuring our security practices remain aligned with best practices.

Certifications like CISSP demonstrate my commitment to continuous learning and professional development in the field. By combining these approaches, I maintain a robust understanding of the latest security threats and best practices, allowing me to proactively protect systems and data.

Q 22. What is your experience with disaster recovery and business continuity planning?

Disaster Recovery (DR) and Business Continuity Planning (BCP) are critical for ensuring organizational resilience. DR focuses on restoring IT systems after a disaster, while BCP encompasses broader strategies to minimize disruptions and maintain essential business functions. My experience spans developing and implementing comprehensive DR and BCP plans for various organizations, including financial institutions and healthcare providers.

For instance, in a previous role, I led the development of a DR plan for a critical database system. This involved identifying potential failure points (e.g., hardware failure, natural disaster), defining Recovery Time Objectives (RTOs) and Recovery Point Objectives (RPOs), and establishing a robust backup and recovery strategy using a combination of on-site and off-site backups. We also conducted regular drills and simulations to ensure the plan’s effectiveness and identify areas for improvement. We used a phased approach to recovery, prioritizing the most crucial systems and data first.
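
The relationship between backup frequency, RPO, and RTO can be made concrete with a small check. This sketch assumes that worst-case data loss is roughly the time between backups. The targets and measurements are hypothetical.

```python
from datetime import timedelta


def meets_rpo(backup_interval: timedelta, rpo: timedelta) -> bool:
    """Worst-case data loss is roughly the time between backups."""
    return backup_interval <= rpo


def meets_rto(measured_restore_time: timedelta, rto: timedelta) -> bool:
    """A recovery drill passes if restoration finished within the RTO."""
    return measured_restore_time <= rto


# Hypothetical targets and drill measurements.
print(meets_rpo(timedelta(hours=24), rpo=timedelta(hours=4)))    # False
print(meets_rto(timedelta(minutes=45), rto=timedelta(hours=1)))  # True
```

In this example, a nightly backup cannot satisfy a four-hour RPO, which is the kind of gap a drill or plan review is meant to surface.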

Another significant project involved creating a BCP for a large-scale e-commerce platform. This plan addressed various scenarios, including cyberattacks, supplier disruptions, and pandemics. It involved establishing alternate communication channels, identifying critical suppliers and developing contingency plans, and implementing a remote work strategy to ensure business continuity in the face of unforeseen circumstances. This involved detailed risk assessments and a rigorous testing process.

Q 23. How do you handle pressure and maintain composure during critical situations?

Maintaining composure under pressure is paramount in my field. I approach high-pressure situations methodically. My first step is to assess the situation calmly and prioritize the most critical tasks. This involves identifying the root cause of the problem, rather than focusing on the symptoms. I rely heavily on clear communication with the team, ensuring everyone understands their roles and responsibilities. We regularly practice incident response, so that under pressure, our processes become second nature.

For example, during a major system outage, I prioritized restoring core functionalities first while keeping stakeholders informed about the progress and anticipated resolution time. I delegated tasks effectively, ensuring everyone was focused and contributing to the solution. This clear leadership and delegation of tasks helps manage stress levels and promotes teamwork, creating a more efficient and effective response to a high-pressure situation.

I also leverage techniques such as deep breathing and mindfulness to manage my own stress and remain focused. Regularly practicing these stress management techniques keeps me well equipped to face critical situations with both composure and efficiency.

Q 24. Describe your experience with automation tools to enhance reliability and efficiency.

Automation is vital for enhancing reliability and efficiency. I have extensive experience using various tools to automate tasks, such as infrastructure provisioning, monitoring, and incident response. I’ve worked with tools like Ansible, Chef, and Puppet for infrastructure automation, and have extensive experience with monitoring tools such as Prometheus and Grafana, as well as cloud-based monitoring services like AWS CloudWatch and Azure Monitor.

In a previous role, we automated the deployment of our application using Jenkins and Docker. This significantly reduced deployment time and minimized the risk of human error. We also automated the monitoring of our systems, enabling us to proactively identify and address potential problems before they impacted our users. Automated incident response workflows have also helped us resolve issues faster and more accurately.

Furthermore, I’ve developed scripts using Python and PowerShell to automate routine administrative tasks, freeing up valuable time for more strategic initiatives. The key benefits of automation have been increased speed, reduced errors, improved consistency, and ultimately, a more reliable and efficient IT infrastructure.

Q 25. Explain your understanding of different types of system architectures and their reliability implications.

Understanding system architectures and their reliability implications is fundamental. Different architectures offer varying levels of fault tolerance and scalability. For instance, microservices architectures offer high scalability and fault isolation, but require more complex management. Monolithic architectures are simpler to manage but can be less resilient to failures.

- Monolithic Architectures: All components are tightly coupled, so a failure in one component can bring down the entire system. Simpler to develop and deploy initially, but harder to scale and maintain over time.

- Microservices Architectures: The application is broken down into smaller, independent services. Failure in one service doesn’t necessarily affect others. More complex to manage but offers improved scalability, resilience, and maintainability.

- Distributed Architectures: Components are spread across multiple servers or data centers. Offers high availability and fault tolerance but introduces complexity in coordination and data consistency.

My experience encompasses designing and implementing systems using various architectures, always considering the reliability trade-offs. The choice of architecture depends heavily on the specific needs and requirements of the application. Factors like scalability needs, cost, and maintenance requirements play crucial roles in this decision.
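One way to make these trade-offs concrete is a back-of-the-envelope availability calculation. The sketch below uses the textbook model in which component failures are independent, which is a simplifying assumption that real systems often violate (shared networks, shared deployments, correlated load). The function names and example figures are illustrative only.

```python
import math


def series_availability(components):
    """Availability of a chain where every component must be up.

    Models a request path with no redundancy: the product of the
    individual availabilities.
    """
    return math.prod(components)


def redundant_availability(replicas):
    """Availability of a group where at least one replica must be up.

    The group is down only when every replica is down at the same time.
    """
    return 1 - math.prod(1 - a for a in replicas)


if __name__ == "__main__":
    # Three dependencies in a row, each 99.9% available.
    print(f"series:    {series_availability([0.999] * 3):.4%}")
    # Two independent replicas of a 99% available service.
    print(f"redundant: {redundant_availability([0.99, 0.99]):.4%}")
```

Under this model, chaining dependencies lowers overall availability, while adding independent replicas raises it. That is the arithmetic behind removing single points of failure, and also a reminder that a long chain of service calls can be less available than any one service in it.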

Q 26. How do you measure and track system reliability and performance?

Measuring and tracking system reliability and performance involves a multi-faceted approach. We use a combination of metrics, monitoring tools, and logging systems to gain insights into system behavior.

- Availability: Measured as uptime percentage (e.g., 99.99%, which allows roughly 52.6 minutes of downtime per year). Tools like Nagios, Zabbix, and Datadog track system availability.

- Latency: Measures the time it takes for a system to respond to a request. Monitoring tools track response times and identify performance bottlenecks.

- Throughput: Measures the number of requests a system can process per unit of time. This helps identify capacity limitations.

- Error Rates: Tracking the frequency of errors helps identify problematic areas and improve system stability. Log analysis tools are crucial here.

We use dashboards to visualize key metrics and set up alerts to notify us of potential problems. Regular performance and load testing help assess system capacity and identify areas for improvement. Together, proactive monitoring, reactive incident management, and analytical review form the foundation of a sound reliability and performance tracking practice.
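To show how the metrics above relate to raw data, here is a minimal Python sketch that derives throughput, error rate, and a 95th-percentile latency from a list of request records, plus the downtime budget implied by an availability target. The `RequestRecord` type, the field names, and the nearest-rank percentile method are assumptions chosen for illustration; monitoring tools compute these values in their own ways.

```python
import math
from dataclasses import dataclass


@dataclass
class RequestRecord:
    latency_ms: float  # time taken to answer the request
    ok: bool           # False if the request ended in an error


def percentile(sorted_values, pct):
    """Nearest-rank percentile of an already sorted, non-empty list."""
    rank = max(1, math.ceil(len(sorted_values) * pct / 100))
    return sorted_values[rank - 1]


def summarize(requests, window_seconds):
    """Summarize one observation window of request records."""
    if not requests:
        raise ValueError("no requests in window")
    latencies = sorted(r.latency_ms for r in requests)
    errors = sum(1 for r in requests if not r.ok)
    return {
        "throughput_rps": len(requests) / window_seconds,
        "error_rate": errors / len(requests),
        "p95_latency_ms": percentile(latencies, 95),
    }


def allowed_downtime_minutes(availability, days=365):
    """Downtime budget implied by an availability target over a period."""
    return (1 - availability) * days * 24 * 60
```

Reporting a high percentile rather than an average is a common choice because a small share of very slow requests can hide behind a healthy-looking mean.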

Q 27. What experience do you have with implementing and managing security audits?

Implementing and managing security audits is crucial for maintaining the confidentiality, integrity, and availability of systems. My experience includes conducting both internal and external security audits, adhering to industry best practices and regulatory compliance standards such as ISO 27001 and SOC 2.

This involves vulnerability assessments, penetration testing, and reviewing security configurations to identify weaknesses and potential threats. We use automated scanning tools and manual reviews to ensure a thorough audit. Following an audit, a remediation plan is developed and implemented to address identified vulnerabilities. These plans may involve patching software, updating security configurations, and implementing security controls. We then conduct follow-up audits to verify that remediation efforts have been successful.

For example, I managed a security audit for a client’s cloud infrastructure. This involved assessing the security of their virtual machines, networks, and storage systems. We identified several vulnerabilities and recommended remediation actions, which were implemented by the client’s IT team. Regular audits are crucial to maintaining a robust security posture and protecting sensitive data.

Q 28. Describe your approach to problem-solving in situations involving system failures.

My approach to problem-solving in situations involving system failures is systematic and data-driven. I follow a structured process to identify the root cause, implement a solution, and prevent future occurrences.

- Identify the problem: Clearly define the nature and scope of the failure, collecting relevant data from logs, monitoring tools, and affected users.

- Isolate the cause: Use diagnostic tools and techniques to pinpoint the root cause of the failure. This may involve examining logs, reviewing system configurations, and conducting network analysis.

- Implement a solution: Develop and implement a solution to address the immediate problem. This might involve restarting a service, deploying a patch, or reverting to a previous configuration.

- Prevent future occurrences: Analyze the root cause to identify the underlying issues that led to the failure, then put measures in place so that similar failures do not recur. This may involve improving system design, implementing better monitoring, or enhancing security controls.

- Document everything: Maintain detailed records of the incident, including the cause, the solution, and any preventative measures taken. This information is crucial for future troubleshooting and incident response.

For instance, when a web application experienced intermittent outages, I used logging and monitoring data to trace the problem to a bottleneck in the database. By optimizing the database queries and increasing server resources, we resolved the issue and prevented further outages. We documented the incident in detail so that similar issues can be resolved faster in the future.
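The first two steps, collecting data from logs and narrowing down the cause, often start with something as simple as counting errors per component. The Python sketch below assumes a hypothetical log format of `timestamp LEVEL service: message`; the format, the regular expression, and the service names are invented for this example and would need to match your real logs.

```python
import re
from collections import Counter

# Hypothetical line format: "<timestamp> <LEVEL> <service>: <message>"
LOG_LINE = re.compile(
    r"^(?P<timestamp>\S+) (?P<level>[A-Z]+) (?P<service>[\w-]+): (?P<message>.*)$"
)


def error_counts_by_service(lines):
    """Count ERROR and CRITICAL lines per service, skipping unparseable lines."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and match["level"] in {"ERROR", "CRITICAL"}:
            counts[match["service"]] += 1
    return counts


if __name__ == "__main__":
    sample = [
        "2024-01-01T10:00:00Z ERROR db: query timeout after 30s",
        "2024-01-01T10:00:01Z INFO web: request served",
        "2024-01-01T10:00:02Z ERROR web: upstream timed out",
        "2024-01-01T10:00:03Z CRITICAL db: connection pool exhausted",
    ]
    # Services with the most errors come first.
    for service, count in error_counts_by_service(sample).most_common():
        print(service, count)
```

A count like this does not prove the root cause, but it points the investigation at the component worth examining first.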

Key Topics to Learn for Reliable and Trustworthy Interview

- Integrity and Ethics: Understanding ethical decision-making in professional contexts, including conflict resolution and adherence to codes of conduct. Practical application involves analyzing case studies and demonstrating ethical reasoning.

- Accountability and Responsibility: Demonstrating ownership of tasks and projects, proactively identifying and addressing potential issues. Practical application involves describing past experiences where you took initiative and responsibility for outcomes.

- Consistency and Dependability: Highlighting your track record of meeting deadlines and commitments, managing workload effectively, and maintaining a high level of performance. Practical application involves providing specific examples showcasing your reliability in past roles.

- Transparency and Open Communication: Explaining how you foster open communication, share information effectively, and build trust with colleagues and clients. Practical application involves discussing scenarios where you proactively communicated challenges or successes.

- Data Security and Confidentiality: Understanding the importance of protecting sensitive information and adhering to data privacy regulations. Practical application involves describing your experience handling confidential data and demonstrating knowledge of relevant security protocols.

- Problem-Solving and Critical Thinking: Demonstrating your ability to approach problems systematically, analyze information objectively, and develop effective solutions. Practical application involves using the STAR method to describe past problem-solving experiences.

Next Steps

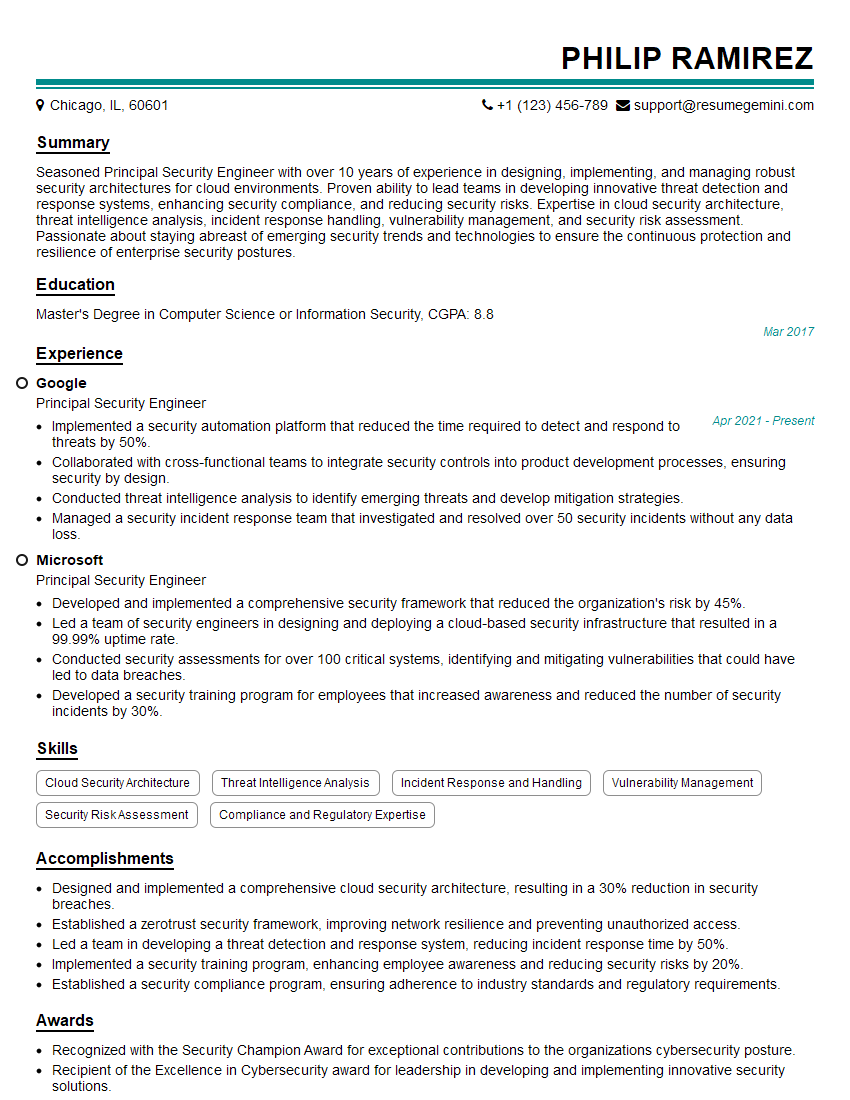

Mastering the concepts of reliability and trustworthiness is crucial for career advancement, opening doors to leadership roles and building strong professional relationships. An ATS-friendly resume is your first impression; it needs to highlight your skills and experience effectively to secure interviews. Use ResumeGemini to craft a compelling resume tailored to showcase your reliability and trustworthiness. Examples of resumes optimized for showcasing these qualities are available below to help guide your resume creation.
