Feeling uncertain about what to expect in your upcoming interview? We’ve got you covered! This blog highlights the most important Change Detection and Time Series Analysis interview questions and provides actionable advice to help you stand out as the ideal candidate. Let’s pave the way for your success.

Questions Asked in Change Detection and Time Series Analysis Interview

Q 1. Explain the difference between stationary and non-stationary time series.

The core difference between stationary and non-stationary time series lies in their statistical properties over time. A stationary time series has constant statistical properties like mean, variance, and autocorrelation regardless of the time period considered. Imagine a perfectly balanced spinning top – its movement is consistent and predictable. In contrast, a non-stationary time series exhibits changes in these properties over time. Think of a rollercoaster – its speed and direction constantly fluctuate.

For example, the daily temperature in a specific location might be considered non-stationary because the average temperature will likely be higher in summer than in winter. However, the daily fluctuations *around* the seasonal average might be approximately stationary.

Stationarity is crucial because many time series models, particularly those used in forecasting, assume stationarity. Non-stationary series often need to be transformed before analysis to avoid spurious correlations and inaccurate predictions.

Q 2. Describe various methods for making a time series stationary.

Several techniques can transform a non-stationary time series into a stationary one. The most common include:

- Differencing: This involves subtracting consecutive data points. First-order differencing subtracts the previous observation from the current one ($y_t - y_{t-1}$). Higher-order differencing can be used if first-order differencing isn’t sufficient. This effectively removes trends; seasonal patterns are handled by seasonal differencing, described in this list.

- Log Transformation: Applying a natural logarithm (ln) to the data can stabilize the variance, especially if the data exhibits exponential growth. This is helpful when the variance changes proportionally to the level of the series.

- Power Transformation (Box-Cox): This is a generalization of the log transformation and can handle a wider range of data characteristics. It involves raising the data to a power (λ). The optimal λ is often determined empirically.

- Seasonal Differencing: Similar to regular differencing, but the subtraction is done with the observation from the same period in the previous season (e.g., subtracting last year’s value from this year’s value for monthly data).

The choice of method depends on the nature of the non-stationarity. Often, a combination of these techniques might be necessary to achieve stationarity. For instance, a series with a trend and seasonality might require differencing to remove the trend and seasonal differencing to remove the seasonality.
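As a rough illustration of how these transformations combine, here is a minimal Python sketch. The helper `difference` and the toy series `raw` (steady growth with a period-4 seasonal bump) are assumptions made up for this example, not part of any library.

```python
import math

def difference(series, lag=1):
    """Return series[t] - series[t - lag] for every t >= lag."""
    return [series[t] - series[t - lag] for t in range(lag, len(series))]

# Hypothetical series: 5% growth per step, with a 20% bump every 4th step.
raw = [100 * 1.05 ** t * (1.2 if t % 4 == 0 else 1.0) for t in range(16)]

logged = [math.log(v) for v in raw]         # log transform: growth becomes linear
deseasoned = difference(logged, lag=4)      # seasonal differencing (period 4)
stationary = difference(deseasoned, lag=1)  # first-order differencing
```

In this toy case the seasonal difference of the logged series is already constant, so the final first-order difference is essentially zero. Real data will be noisier, and not every step is always needed.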

Q 3. What are the assumptions of ARIMA models?

ARIMA (Autoregressive Integrated Moving Average) models make several key assumptions:

- Stationarity: The data, after differencing if necessary, should be stationary. This ensures the model’s parameters remain constant over time.

- Autocorrelation: The data points are correlated with their past values, a key aspect captured by the AR and MA components.

- Normality of Errors: The residuals (the differences between the actual and predicted values) should be normally distributed with a mean of zero and constant variance. This assumption is important for statistical inference.

- Constant Variance (Homoscedasticity): The variance of the error terms should remain constant over time. This is closely related to the normality assumption.

- No significant autocorrelation in the residuals: After fitting the ARIMA model, the residuals should not show any significant autocorrelation; otherwise, it suggests the model has not captured all the important dependencies in the data.

Violation of these assumptions can lead to inaccurate forecasts and unreliable statistical inferences. Diagnostic checks are essential after fitting an ARIMA model to assess the validity of these assumptions.

Q 4. How do you handle missing data in a time series?

Handling missing data in time series is crucial for maintaining data integrity and accurate analysis. Several strategies can be employed:

- Deletion: Simple, but can lead to information loss, especially if there are many missing values. Suitable only for a small number of missing values.

- Imputation: This involves replacing missing values with estimated values. Methods include:

  - Mean/Median imputation: Replacing missing values with the mean or median of the available data. Simple, but doesn’t account for temporal correlations.

  - Linear interpolation: Estimating missing values based on linear interpolation between neighboring data points. Considers temporal context, better than mean/median imputation but still basic.

  - Spline interpolation: Uses more complex curves to interpolate, providing better accuracy than linear interpolation, especially with non-linear trends.

  - Model-based imputation: Using a time series model (like ARIMA) to predict the missing values. This is often the most accurate method, as it considers the underlying patterns in the data.

The best approach depends on the amount of missing data, the nature of the missingness, and the desired level of accuracy. For a large number of missing values, model-based imputation is generally preferred.
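To make the linear interpolation option concrete, here is a small dependency-free sketch. It assumes missing values are encoded as `None`; the function name `interpolate_linear` is chosen for this example only.

```python
def interpolate_linear(series):
    """Fill None gaps by linear interpolation between the nearest known neighbours.

    Leading or trailing gaps have a neighbour on one side only and stay None.
    """
    filled = list(series)
    known = [i for i, v in enumerate(series) if v is not None]
    for left, right in zip(known, known[1:]):
        step = (series[right] - series[left]) / (right - left)
        for i in range(left + 1, right):
            filled[i] = series[left] + step * (i - left)
    return filled

print(interpolate_linear([1.0, None, None, 4.0, None]))
```

The trailing gap is deliberately left unfilled: extrapolating beyond the last known value is a forecasting problem, which is where model-based imputation would come in.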

Q 5. Explain different approaches for outlier detection in time series data.

Outlier detection in time series data requires careful consideration of the temporal dependencies. Methods include:

- Boxplot method: Simple visualization technique to identify outliers based on the interquartile range. However, doesn’t directly account for time dependencies.

- Moving average method: Comparing each data point to its moving average. Points significantly deviating from the average could be considered outliers.

- Seasonal Hybrid ESD (Extreme Studentized Deviate): A robust method that accounts for both trend and seasonality. It’s particularly useful for detecting outliers in seasonal time series.

- Autoregressive models (AR): Using AR models to predict the expected values, and then comparing those predictions to the actual values. Large differences can indicate outliers.

- Isolation Forest: A machine learning-based method that isolates anomalies by randomly partitioning the data. Effective even for high-dimensional data.

It’s crucial to investigate the cause of detected outliers. They might represent genuine anomalies or data errors. Always visually inspect the data before making conclusions.
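The moving average method above can be sketched in a few lines. This version compares each point with the mean and standard deviation of the preceding window; the window length and the 3-sigma threshold are illustrative assumptions, not recommended defaults.

```python
from statistics import mean, pstdev

def rolling_outliers(series, window=5, threshold=3.0):
    """Return indices whose value lies more than `threshold` standard deviations
    from the mean of the preceding `window` observations."""
    flagged = []
    for t in range(window, len(series)):
        history = series[t - window:t]
        mu, sigma = mean(history), pstdev(history)
        # A zero-variance window gives no scale to judge deviations, so skip it.
        if sigma > 0 and abs(series[t] - mu) > threshold * sigma:
            flagged.append(t)
    return flagged

readings = [10, 11, 10, 12, 11, 10, 30, 11, 10, 12, 11]
print(rolling_outliers(readings))
```

One caveat of this simple approach: once an outlier enters the window it inflates the standard deviation, which can mask further outliers for the next few points. Robust statistics such as the median are a common remedy.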

Q 6. Compare and contrast different forecasting methods (e.g., ARIMA, Prophet, Exponential Smoothing).

Let’s compare ARIMA, Prophet, and Exponential Smoothing:

| Method | Description | Strengths | Weaknesses |
|---|---|---|---|
| ARIMA | Statistical model capturing autocorrelations in data. | Strong theoretical foundation, good for stationary data. | Requires stationarity, parameter tuning can be complex, struggles with seasonality changes. |
| Prophet | Developed by Facebook, robust to missing data and trend changes. | Handles seasonality well, flexible, tolerates missing data. | Uses additive seasonality by default (multiplicative seasonality must be requested explicitly), might not perform well with short time series. |
| Exponential Smoothing | A family of methods assigning weights to past observations, decaying exponentially. | Simple to implement, adaptable to different patterns. | Less accurate than ARIMA or Prophet for complex patterns, parameter tuning can be subjective. |

ARIMA excels with stationary data showing clear autocorrelations. Prophet is best for data with strong seasonality and trend changes. Exponential smoothing offers a simpler alternative for less complex patterns, with variations like Holt-Winters for seasonality.

The choice depends on the characteristics of the data and the specific forecasting needs. Sometimes, hybrid approaches combining elements from different models yield superior results.
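To show what “weights decaying exponentially” means in practice, here is a minimal sketch of simple exponential smoothing, the most basic member of the family (no trend or seasonal terms). Initialising the level with the first observation is one common convention, assumed here for simplicity.

```python
def simple_exponential_smoothing(series, alpha):
    """Return the smoothed level after each observation.

    The last level doubles as the one-step-ahead forecast.
    """
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = series[0]  # assumed initialisation: start from the first observation
    levels = [level]
    for y in series[1:]:
        # New level = weighted blend of the newest value and the previous level.
        level = alpha * y + (1 - alpha) * level
        levels.append(level)
    return levels

print(simple_exponential_smoothing([10, 20, 30], alpha=0.5))
```

A larger `alpha` reacts faster to recent observations, while a smaller one smooths more heavily. Holt-Winters extends the same recursion with additional trend and seasonal components.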

Q 7. How do you evaluate the accuracy of a time series forecasting model?

Evaluating the accuracy of a time series forecasting model involves comparing predicted values to actual values. Common metrics include:

- Mean Absolute Error (MAE): The average absolute difference between predicted and actual values. Easy to interpret but less sensitive to large errors.

- Root Mean Squared Error (RMSE): The square root of the average squared differences. More sensitive to large errors than MAE.

- Mean Absolute Percentage Error (MAPE): The average absolute percentage difference. Useful for comparing models across different scales.

- Symmetric Mean Absolute Percentage Error (sMAPE): A modified version of MAPE that addresses issues when actual values are close to zero.

In addition to these metrics, visual inspection of the residuals (the differences between the actual and predicted values) is crucial. Plots of residuals against time and against predicted values can reveal patterns or heteroscedasticity (unequal variance) that indicate model inadequacy. Autocorrelation tests on residuals can detect remaining temporal dependencies not captured by the model.

Finally, consider the context. A model performing well on past data might not generalize well to future data, especially if underlying patterns change. Regular model updates and monitoring are essential.
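The four metrics listed above are straightforward to compute by hand. The sketch below uses plain Python. Note that sMAPE has several definitions in the literature; the variant shown, bounded by 200%, is only one of them.

```python
import math

def mae(actual, predicted):
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)

def rmse(actual, predicted):
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))

def mape(actual, predicted):
    """Percentage error; raises ZeroDivisionError if any actual value is zero."""
    return 100 * sum(abs((a - p) / a) for a, p in zip(actual, predicted)) / len(actual)

def smape(actual, predicted):
    """One common sMAPE variant; fails if actual and predicted are both zero."""
    return 100 * sum(2 * abs(a - p) / (abs(a) + abs(p))
                     for a, p in zip(actual, predicted)) / len(actual)

actual = [100, 200, 400]
predicted = [110, 180, 400]
print(mae(actual, predicted), rmse(actual, predicted))
print(mape(actual, predicted), smape(actual, predicted))
```

RMSE is never smaller than MAE on the same errors, and the gap between the two widens as a few large errors come to dominate.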

Q 8. What are some common metrics used to evaluate time series models?

Evaluating the performance of a time series model requires a suite of metrics, tailored to the specific goals of the model and the nature of the data. Commonly used metrics fall into categories assessing accuracy, precision, and the model’s ability to capture the underlying time series dynamics.

- Mean Absolute Error (MAE): This measures the average absolute difference between the predicted and actual values. Lower MAE indicates better accuracy. It’s easy to interpret and less sensitive to outliers than MSE.

- Mean Squared Error (MSE): The average of the squared differences between predicted and actual values. MSE penalizes larger errors more heavily than MAE. It’s frequently used due to its mathematical properties but can be sensitive to outliers.

- Root Mean Squared Error (RMSE): The square root of MSE. It’s expressed in the same units as the original data, making it easier to interpret than MSE. It also penalizes larger errors more heavily.

- Mean Absolute Percentage Error (MAPE): Expresses the error as a percentage of the actual value. Useful for comparing models across datasets with different scales. However, it’s undefined when the actual value is zero.

- R-squared (R²): Represents the proportion of variance in the dependent variable explained by the model. A higher R² (closer to 1) indicates a better fit. However, it doesn’t necessarily imply a good predictive model, especially with complex time series.

- Adjusted R-squared: A modification of R² that adjusts for the number of predictors in the model. It penalizes the inclusion of unnecessary variables, offering a more robust measure of model fit.

The choice of metrics depends on the application. For example, in forecasting stock prices, RMSE might be preferred due to its sensitivity to large errors, while in weather forecasting, MAE might be more suitable because outliers (extreme weather events) are common and shouldn’t disproportionately influence the evaluation.

Q 9. Explain the concept of autocorrelation and partial autocorrelation.

Autocorrelation and partial autocorrelation are crucial concepts in time series analysis that describe the correlation between data points at different time lags.

Autocorrelation measures the linear correlation between a time series and a lagged version of itself. A significant autocorrelation at lag k suggests a relationship between the value at time t and the value at time t-k. For example, a strong positive autocorrelation at lag 1 means that if the value is high at time t, it’s likely to be high at time t+1.

Partial autocorrelation measures the correlation between a time series and its lagged version, while controlling for the effects of intermediate lags. It helps isolate the direct correlation between points separated by a specific lag, removing the influence of correlations at shorter lags. For instance, a significant partial autocorrelation at lag 3, but not at lags 1 and 2, indicates a direct relationship between observations separated by three time units, independent of any relationships at shorter lags.

Imagine analyzing monthly sales data. High autocorrelation at lag 12 might indicate strong yearly seasonality. Partial autocorrelation helps determine if this seasonality is a direct effect or indirectly influenced by shorter-term trends.

Both autocorrelation and partial autocorrelation functions (ACF and PACF) are visually represented as plots, which are vital for identifying the order of autoregressive (AR) and moving average (MA) models in ARIMA modelling.
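As a sketch of what an ACF plot is built from, the function below computes the sample autocorrelation at each lag using the usual biased estimator (dividing by the lag-0 sum of squares). The PACF needs an extra regression or recursion step and is left out here; libraries such as statsmodels provide both.

```python
def autocorrelation(series, max_lag):
    """Sample autocorrelation for lags 0..max_lag.

    Assumes the series is not constant (otherwise the variance is zero).
    """
    n = len(series)
    mu = sum(series) / n
    centred = [x - mu for x in series]
    variance = sum(c * c for c in centred)
    return [
        sum(centred[t] * centred[t - k] for t in range(k, n)) / variance
        for k in range(max_lag + 1)
    ]

# Hypothetical series that repeats every 4 steps.
acf = autocorrelation([1, 2, 3, 2] * 6, max_lag=4)
print([round(r, 3) for r in acf])
```

For this period-4 toy series the autocorrelation is strongly positive at lag 4 and strongly negative at lag 2, which is the kind of signature a seasonal pattern leaves in an ACF plot.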

Q 10. How do you identify seasonality and trend in a time series?

Identifying seasonality and trend in a time series is a key step in effective time series modeling. These components represent systematic patterns in the data that need to be accounted for to accurately model the remaining variability (noise).

Seasonality refers to recurring patterns within fixed time periods. For example, ice cream sales might peak in summer and be low in winter, exhibiting a yearly seasonality. We can identify seasonality using:

- Visual inspection: Plotting the time series often reveals clear seasonal patterns.

- ACF and PACF plots: Significant autocorrelations at specific lags (e.g., 12 for monthly data with yearly seasonality) indicate seasonal effects.

- Decomposition methods: Statistical methods like classical decomposition can separate the time series into trend, seasonal, and residual components.

Trend refers to the long-term direction of the time series. It could be upward (increasing), downward (decreasing), or flat (no consistent direction). We can identify the trend using:

- Visual inspection: Observing the overall direction of the data over time.

- Moving averages: Smoothing the data by averaging values across a specific window helps highlight the underlying trend.

- Regression models: Fitting a regression model with time as the independent variable can capture the trend.

For example, analyzing electricity consumption data, we might observe a clear upward trend over several years due to population growth, coupled with a daily seasonality reflecting peak usage during daytime hours. Correctly identifying and modeling these components is critical for accurate forecasting and anomaly detection.
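The moving average approach to trend extraction can be sketched as follows. The toy series (a linear trend plus a period-4 seasonal pattern that sums to zero) is an assumption made up for this example.

```python
def moving_average(series, window):
    """Trailing moving average; positions without a full window are omitted."""
    return [
        sum(series[t - window + 1:t + 1]) / window
        for t in range(window - 1, len(series))
    ]

seasonal = [2, -1, -2, 1]                        # hypothetical seasonal effects
series = [t + seasonal[t % 4] for t in range(12)]
trend = moving_average(series, window=4)
print(trend)
```

Choosing a window equal to the seasonal period makes the seasonal effects cancel within each window, so what remains follows the underlying trend (here shifted by 1.5 steps because the average is trailing rather than centred).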

Q 11. Describe different change point detection methods.

Change point detection methods aim to identify points in time where the statistical properties of a time series change abruptly. These methods are crucial in various applications, such as fraud detection, environmental monitoring, and medical diagnosis.

Methods can be broadly classified as:

- Rule-based methods: These rely on predefined thresholds or rules to identify changes. Simple but can be less sensitive to subtle changes and require careful parameter tuning.

- Statistical methods: These use statistical tests or models to identify changes. Examples include CUSUM, Bayesian methods, and change point models.

- Machine learning methods: These employ machine learning algorithms to learn patterns and detect changes. This approach is powerful but may need significant labeled data for training.

Specific examples:

- CUSUM (Cumulative Sum): Tracks the cumulative sum of deviations from a baseline. Large deviations indicate changes.

- Bayesian Online Changepoint Detection: Uses Bayesian methods to infer the probability of a change point at each time step.

- Pruned Exact Linear Time (PELT): An efficient algorithm for finding multiple change points in a time series.

The choice of method depends on the nature of the data, the type of change being detected, and computational constraints. For example, rule-based methods might suffice for simple scenarios, while Bayesian methods are better suited for complex data with uncertainty.

Q 12. What are the advantages and disadvantages of using rule-based change detection methods?

Rule-based change detection methods offer a straightforward approach to identifying changes in time series data. They rely on defining specific rules or thresholds based on observed data characteristics.

Advantages:

- Simplicity and ease of implementation: Rule-based methods are relatively easy to understand, implement, and interpret.

- Computational efficiency: They are typically faster than statistical or machine learning methods.

- Intuitive interpretation: The rules themselves often provide clear explanations for detected changes.

Disadvantages:

- Sensitivity to parameter tuning: The performance of rule-based methods heavily depends on the choice of thresholds and rules. Poorly chosen parameters can lead to missed changes or false positives.

- Lack of flexibility: Rule-based methods might struggle with complex or unexpected patterns that fall outside their pre-defined rules.

- Limited adaptability: They often lack the ability to learn from data and adapt to changing conditions.

Consider a system monitoring network traffic. A simple rule might be to flag an anomaly if the number of packets per second exceeds a predefined threshold. This is easy to implement but might miss subtle changes or generate false positives due to temporary network fluctuations. More sophisticated methods would be needed to accurately detect true anomalies.

Q 13. Explain how to use CUSUM for change point detection.

The Cumulative Sum (CUSUM) method is a powerful and widely used technique for change point detection. It’s particularly effective for detecting small, gradual shifts in the mean of a time series.

How CUSUM works:

- Establish a baseline: First, determine a baseline value for the time series, often the mean of an initial segment of the data.

- Calculate cumulative sums: For each subsequent data point, calculate the cumulative sum of the deviations from the baseline. Two separate cumulative sums are maintained, one for positive deviations ($S_t^+$) and one for negative deviations ($S_t^-$). Starting from $S_0^+ = S_0^- = 0$, these are updated recursively:

$$S_t^+ = \max(0,\; S_{t-1}^+ + x_t - \mu_0 - k)$$

$$S_t^- = \max(0,\; S_{t-1}^- + \mu_0 - x_t - k)$$

Here $x_t$ is the current data point, $\mu_0$ is the baseline mean, and $k$ is a control parameter (often called the allowance or slack). $k$ determines the sensitivity; a smaller $k$ makes the algorithm more sensitive to smaller changes. The $\max(0, \ldots)$ resets a sum to zero whenever it would turn negative, so a sum only builds up while deviations exceed $k$.

- Detect change points: When either $S_t^+$ or $S_t^-$ exceeds a predefined threshold ($h$), a change point is detected. $h$ is another important parameter: it sets how much cumulative deviation is required before a change is declared.

Example (Conceptual): Imagine monitoring a production line’s output. The baseline might be the average output rate. CUSUM will track the cumulative deviations from this baseline. If the cumulative deviations persistently increase, indicating a systematic decrease or increase in production, it will trigger an alert, signaling a potential change point.

CUSUM’s strength lies in its ability to detect small, gradual shifts that other methods might miss. The choice of k and h requires careful consideration, often involving experimentation to find the optimal balance between sensitivity and false alarms.
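The recursion described above translates almost directly into code. This is a minimal sketch that stops at the first alarm; the sample readings and the values chosen for `k` and `h` are illustrative assumptions only.

```python
def cusum(series, baseline, k, h):
    """Two-sided CUSUM.

    Returns (index, direction) for the first alarm, or None if neither
    cumulative sum ever exceeds the threshold h.
    """
    s_pos = s_neg = 0.0
    for t, x in enumerate(series):
        s_pos = max(0.0, s_pos + x - baseline - k)  # accumulates upward shifts
        s_neg = max(0.0, s_neg + baseline - x - k)  # accumulates downward shifts
        if s_pos > h:
            return t, "up"
        if s_neg > h:
            return t, "down"
    return None

# Hypothetical readings: the mean shifts from about 10 to about 11 at index 5.
readings = [10, 10.2, 9.8, 10.1, 9.9, 11, 11.1, 10.9, 11.2, 11.0]
print(cusum(readings, baseline=10, k=0.5, h=2.0))
```

With these settings the alarm fires a few steps after the shift actually begins. That detection delay is the price of a higher `h`, which in turn reduces false alarms.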

Q 14. Describe Bayesian change point detection methods.

Bayesian change point detection methods offer a powerful and flexible framework for identifying changes in time series. Unlike frequentist methods, Bayesian approaches explicitly model uncertainty in the change points’ locations and the parameters of the time series segments.

Key features:

- Probability distributions: Bayesian methods assign probability distributions to model parameters and change points. This allows for quantifying uncertainty about the exact location and timing of changes.

- Prior knowledge: Prior knowledge about the time series (e.g., expected frequency of changes) can be incorporated into the analysis via prior distributions.

- Posterior inference: Bayesian inference updates the probability distributions based on observed data, resulting in a posterior distribution over change points.

Common approaches:

- Bayesian online change point detection: This method sequentially processes data and updates the posterior probability of a change point at each time step. It’s particularly useful for online monitoring scenarios where data arrives in a stream.

- Bayesian change point models: These models use Bayesian inference to estimate the parameters and location of change points within the time series, allowing for greater flexibility than online methods.

Example: Consider analyzing sensor data from a manufacturing process. A Bayesian change point method could model the sensor readings as coming from different distributions before and after a change in the manufacturing process. The method will not only detect when the change occurred but also provide the probability distribution over the possible times of this change.

The main advantage of Bayesian methods is their ability to handle uncertainty explicitly and incorporate prior knowledge. However, they can be computationally more intensive than frequentist methods, especially for long time series.

Q 15. What are some common challenges in applying change detection techniques?

Change detection, the process of identifying differences between two or more datasets representing the same area over time, faces several significant challenges. These challenges often intertwine and require careful consideration.

- Noise and Uncertainty: Real-world data is rarely pristine. Sensor noise, atmospheric conditions (for satellite imagery), and variations in data acquisition methods can introduce artifacts that mask genuine changes or falsely identify changes where none exist. Imagine trying to spot a small wildfire in a satellite image obscured by cloud cover – that’s the challenge of noise.

- Spectral and Spatial Variability: The appearance of a feature can change significantly due to seasonal variations (e.g., vegetation changes color), illumination differences (shadows), or even the angle of the sensor. This variability can make it difficult to reliably differentiate between actual changes and natural fluctuations.

- Computational Cost: Processing large datasets, especially high-resolution imagery or massive time series, can be computationally expensive, particularly for sophisticated change detection algorithms. This can limit the feasibility of applying certain methods in real-time or for very large areas.

- Defining ‘Change’: Clearly defining what constitutes a ‘change’ is crucial and highly context-dependent. A change that’s significant in one application (e.g., deforestation) might be insignificant in another (e.g., minor variations in a grassland). Defining appropriate thresholds and metrics is a critical step.

- Data Availability and Quality: Inconsistent data acquisition schedules, missing data, and variations in data quality across different time points can hinder the accuracy and reliability of change detection results. Imagine trying to track urban growth with inconsistent satellite images taken over several years—gaps in the data make accurate tracking near impossible.

Q 16. How do you handle high-dimensional time series data?

High-dimensional time series data presents unique challenges. The curse of dimensionality, where the volume of the feature space grows exponentially with the number of features and the available data become sparse, increases computational complexity and the risk of overfitting. Effective strategies are needed to manage this complexity.

- Dimensionality Reduction: Techniques like Principal Component Analysis (PCA) can reduce the number of features while preserving important information. PCA finds principal components that capture the most variance in the data, allowing us to focus on the most informative directions. t-distributed Stochastic Neighbor Embedding (t-SNE) is another option, though it is mainly used for visualization rather than as input to a model.

- Feature Selection: This involves identifying the most relevant features for the analysis, often using techniques like recursive feature elimination or correlation analysis. We can select features with high correlation to the target variable, discarding less informative ones.

- Regularization Techniques: Methods like LASSO (L1 regularization) or Ridge regression (L2 regularization) can help prevent overfitting by adding penalty terms to the model’s loss function. These penalties discourage the model from relying too heavily on individual features.

- Ensemble Methods: Combining predictions from multiple models trained on different subsets of features or using different algorithms (e.g., bagging, boosting) can improve generalization performance and robustness to high dimensionality.

The choice of the best strategy depends on the specific dataset and the nature of the time series. Often, a combination of these techniques provides the most effective solution. For instance, you might first apply PCA for dimensionality reduction, then perform feature selection on the principal components before training a regularized model.

Q 17. Explain the concept of dynamic time warping (DTW).

Dynamic Time Warping (DTW) is an algorithm for measuring similarity between two temporal sequences that may vary in speed. Unlike simple Euclidean distance, DTW allows for non-linear alignment of the sequences, making it robust to variations in timing or rhythm. Think of it as stretching and compressing one time series to best match another, minimizing the distance between them.

Imagine comparing the heartbeats of two individuals. One might have a slightly faster or slower heart rate than the other, yet their underlying heart rhythm might be very similar. Simple Euclidean distance would likely indicate a large difference, while DTW would capture the similarity by allowing for temporal stretching and compression.

DTW works by finding the optimal warping path between two time series. This path represents the best alignment between the two sequences, minimizing the cumulative distance between corresponding points. The algorithm uses dynamic programming to efficiently find this optimal path.

Conceptually (this is not actual code), a call such as DTW(series1, series2) returns the minimum distance together with the warping path.

DTW is particularly useful when dealing with time series data that exhibits variations in speed or timing, such as speech recognition, gesture recognition, and medical signal analysis.
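The dynamic programming idea behind DTW can be sketched in plain Python. This version uses the absolute difference as the local cost and has no window constraint; both are simplifying assumptions, and production libraries typically add constraints and optimisations.

```python
def dtw(a, b):
    """Classic O(len(a) * len(b)) DTW. Returns (distance, warping_path)."""
    inf = float("inf")
    n, m = len(a), len(b)
    # cost[i][j] = minimal cumulative cost of aligning a[:i] with b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = abs(a[i - 1] - b[j - 1])
            cost[i][j] = local + min(cost[i - 1][j - 1],  # advance in both
                                     cost[i - 1][j],      # advance in a only
                                     cost[i][j - 1])      # advance in b only
    # Backtrack from the end to recover one optimal warping path.
    path, i, j = [], n, m
    while i > 0 and j > 0:
        path.append((i - 1, j - 1))
        _, i, j = min((cost[i - 1][j - 1], i - 1, j - 1),
                      (cost[i - 1][j], i - 1, j),
                      (cost[i][j - 1], i, j - 1))
    path.reverse()
    return cost[n][m], path

distance, path = dtw([1, 2, 3, 4], [1, 1, 2, 3, 3, 4])
print(distance, path)
```

The second sequence here is just a “slowed down” copy of the first, so the DTW distance is zero even though the two lists have different lengths and a point-by-point comparison would not even be defined.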

Q 18. How do you select the appropriate model for a given time series?

Selecting the appropriate time series model depends heavily on the characteristics of the data and the goals of the analysis. There’s no one-size-fits-all answer, but a systematic approach is crucial.

- Data Exploration: Begin by visualizing the data using plots (time plots, autocorrelation plots, etc.) to identify trends, seasonality, and stationarity (whether the statistical properties of the data remain constant over time).

- Stationarity Check: Many classic time series models (e.g., ARIMA) assume stationarity. If the data isn’t stationary, differencing or transformations might be necessary to achieve stationarity.

- Model Selection Criteria: Based on the data’s characteristics (trends, seasonality, autocorrelation), consider appropriate models:

  - ARIMA (Autoregressive Integrated Moving Average): For stationary series with autocorrelation.

  - SARIMA (Seasonal ARIMA): For series with seasonality.

  - Exponential Smoothing: For forecasting, particularly when trend and seasonality are present.

  - Prophet (from Facebook): For business time series with strong seasonality and trend.

  - Recurrent Neural Networks (RNNs): Powerful for complex, non-linear relationships, but require substantial data.

- Model Evaluation: Use metrics like Mean Absolute Error (MAE), Root Mean Squared Error (RMSE), and Mean Absolute Percentage Error (MAPE) to compare the performance of different models. Cross-validation is essential to avoid overfitting.

The process is iterative. You might start with simpler models (e.g., exponential smoothing) and gradually move to more complex ones (e.g., ARIMA or RNNs) if needed, always carefully evaluating their performance.

Q 19. How do you deal with overfitting in time series models?

Overfitting in time series models occurs when the model learns the training data too well, including its noise and random fluctuations, resulting in poor generalization to unseen data. This often happens with complex models and limited data.

- Cross-validation: Employing time series cross-validation techniques (e.g., rolling-origin or expanding window) helps assess generalization performance more accurately than a simple train-test split.

- Regularization: Adding penalty terms to the model’s loss function discourages overly complex models. For ARIMA-type models, the analogous safeguard is usually an information criterion such as AIC or BIC, which penalizes extra parameters when choosing the model order.

- Feature Engineering: Instead of relying on a large number of raw features, create informative, aggregated features (e.g., rolling averages, lagged variables) that capture essential patterns while reducing noise.

- Model Simplification: If a complex model is overfitting, try simplifying it by reducing the number of parameters or using a simpler model altogether. Sometimes a simpler model, even if less accurate on training data, will generalize better to unseen data.

- Early Stopping (for Neural Networks): For neural network models, monitor performance on a validation set during training and stop training when the validation performance starts to degrade. This prevents the model from continuing to fit the training noise.

Careful model selection and evaluation are key to avoiding overfitting. The balance between model complexity and data size is crucial. More data usually helps mitigate overfitting, but effective regularization and cross-validation remain critical even with abundant data.

Q 20. Explain the concept of cross-validation in the context of time series.

Cross-validation in time series analysis differs from standard cross-validation because maintaining the temporal order of data is crucial. Shuffling data, as done in typical cross-validation, would destroy the temporal dependencies.

Common time series cross-validation approaches include:

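- Rolling-origin evaluation: The forecast origin (the last time point available for training) is moved forward through the series. At each origin, the model is trained only on data up to that point, and its performance is evaluated on a subsequent, non-overlapping window. This simulates the real-world scenario where predictions are made on future data, using only past data for training.

- Expanding window: A rolling-origin variant in which the training set always starts at the first observation and grows with each iteration, while the test set is the block immediately after it. (The alternative, a sliding window, keeps the training length fixed and drops the oldest data.)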

- k-fold forward chaining: The data is split into k folds, and the model is trained on a growing number of folds, with each subsequent fold used as a testing set. This approach balances the computational cost of rolling origin with the increased sample size.

The choice of cross-validation method depends on factors such as data volume, computational resources, and the desired level of realism in the evaluation. For instance, rolling-origin schemes with many origins give the most realistic picture of accuracy on new data but require refitting the model at every origin, while forward chaining over a small number of folds is cheaper to run.
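To illustrate how such splits preserve temporal order, here is a small sketch of an expanding-window split generator. The function name and its parameters (`initial_train`, `horizon`, `step`) are hypothetical choices for this example; scikit-learn’s `TimeSeriesSplit` offers a ready-made alternative.

```python
def expanding_window_splits(n_samples, initial_train, horizon, step=None):
    """Yield (train_indices, test_indices) with the training set growing each round.

    Every test window lies strictly after its training window, so no future
    observation leaks into training.
    """
    step = step or horizon
    origin = initial_train
    while origin + horizon <= n_samples:
        yield range(0, origin), range(origin, origin + horizon)
        origin += step

for train, test in expanding_window_splits(n_samples=10, initial_train=4, horizon=2):
    print(list(train), list(test))
```

Each iteration trains on everything before the forecast origin and tests on the next `horizon` points. Replacing `range(0, origin)` with a fixed-length range would turn this into a sliding-window scheme.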

Q 21. How do you interpret the results of a time series analysis?

Interpreting time series analysis results involves several key steps:

- Model Performance Assessment: Start by evaluating the model’s error metrics (MAE, RMSE, MAPE) on held-out data. Are the results clearly better than a naive baseline (e.g., repeating the last observed value, or the value from the same season one period earlier)? Where models are close, check whether the difference is statistically significant.

- Trend Analysis: Identify and interpret any trends revealed by the model. Is there a clear upward or downward trend? Is the trend linear or non-linear? What are the potential drivers of this trend?

- Seasonality Identification: Examine the model’s ability to capture seasonal patterns. Are there significant seasonal fluctuations? What is the period of the seasonality (e.g., monthly, quarterly, yearly)?

- Forecasting Evaluation: If forecasting was involved, evaluate the forecast accuracy and uncertainty. Consider the confidence intervals around the forecasts. Are the forecasts reliable, and how sensitive are they to changes in input parameters?

- Anomaly Detection: If anomaly detection was a goal, examine the identified anomalies. Are they meaningful? Do they align with external knowledge or domain expertise?

- Causality Considerations: Be cautious about inferring causality from correlation. Time series analysis can reveal associations between variables, but it doesn’t prove causality. Further investigation might be needed to establish causal relationships.

The interpretation should always be done in the context of the specific application and the limitations of the model used. Clearly communicating the uncertainties and limitations of the analysis is crucial for responsible interpretation.
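The comparison against a naive baseline mentioned in the first step can be sketched as follows. The data values and variable names are invented for illustration; the naive forecast used here simply repeats the previous observation.

```python
import math


def mae(actual, predicted):
    """Mean absolute error."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def rmse(actual, predicted):
    """Root mean squared error; penalizes large errors more than MAE."""
    return math.sqrt(
        sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)
    )


def mape(actual, predicted):
    """Mean absolute percentage error; undefined if any actual value is 0."""
    return 100.0 * sum(abs((a - p) / a) for a, p in zip(actual, predicted)) / len(actual)


# Hypothetical one-step-ahead forecasts on a held-out period.
last_training_value = 100.0
actual = [102.0, 104.0, 103.0, 107.0]
model_forecast = [101.0, 103.0, 105.0, 106.0]
# Naive baseline: each forecast is the previous observed value.
naive_forecast = [last_training_value] + actual[:-1]

print("model MAE:", mae(actual, model_forecast))   # 1.25
print("naive MAE:", mae(actual, naive_forecast))   # 2.25
print("naive RMSE:", rmse(actual, naive_forecast)) # 2.5
print("MAE ratio (model / naive):", mae(actual, model_forecast) / mae(actual, naive_forecast))
```

A ratio below 1 means the model beats the baseline on this sample. With only four points the comparison is purely illustrative; a real evaluation would use many forecast origins.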

Q 22. What are some common applications of time series analysis?

Time series analysis is a powerful technique used to analyze data points collected over time. Think of it like studying a movie frame by frame, instead of just looking at a single still image. This allows us to understand trends, patterns, and seasonality within the data. Common applications span numerous fields:

- Finance: Predicting stock prices, detecting market trends, and managing risk.

- Weather Forecasting: Analyzing historical weather data to predict future weather patterns.

- Healthcare: Monitoring patient vital signs, identifying disease outbreaks, and personalizing treatment plans.

- Sales Forecasting: Predicting future sales based on past sales data to optimize inventory and resource allocation.

- Manufacturing: Monitoring equipment performance, predicting machine failures, and optimizing production processes.

Essentially, any domain that collects data sequentially over time can benefit from time series analysis.

Q 23. How can you apply time series analysis to anomaly detection?

Anomaly detection in time series aims to identify unusual or unexpected data points that deviate significantly from the normal pattern. Time series analysis provides various methods for this. For instance, we can use:

- Moving Average: Comparing each data point to a moving average of its preceding points. A significant deviation suggests an anomaly.

- Exponential Smoothing: Assigns exponentially decreasing weights to older observations, so the smoothed value tracks recent behavior closely. A point that falls far from the smoothed one-step-ahead forecast is flagged as a potential anomaly.

- ARIMA models: Autoregressive Integrated Moving Average models can capture complex patterns and forecast future values. Anomalies would be points that significantly deviate from these predictions.

- Change Point Detection: Algorithms designed to detect abrupt shifts in the underlying statistical properties of the time series. These shifts might indicate anomalies.

For example, imagine monitoring server CPU usage. A sudden spike above the expected range, as identified by a moving average, could indicate an anomaly — perhaps a DDoS attack or a software bug.
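As a rough sketch of the moving-average approach, the snippet below flags any point that lies more than a chosen number of standard deviations from the mean of the preceding window. The function name, the window length, the threshold of 3, and the CPU readings are all assumptions for this example.

```python
import statistics


def rolling_anomalies(values, window=5, threshold=3.0):
    """Return indices of points far from the mean of the preceding window.

    A point is flagged when its distance from the mean of the previous
    `window` points exceeds `threshold` sample standard deviations.
    """
    anomalies = []
    for i in range(window, len(values)):
        past = values[i - window:i]
        mean = statistics.fmean(past)
        std = statistics.stdev(past)
        if std == 0:
            continue  # flat window: no scale to compare against
        if abs(values[i] - mean) > threshold * std:
            anomalies.append(i)
    return anomalies


# Hypothetical CPU usage (%), with a spike at index 7.
cpu = [31, 30, 33, 32, 29, 31, 30, 95, 32, 31, 30, 33]
print(rolling_anomalies(cpu))  # [7]
```

One limitation of this simple version is that the spike enters the following windows and inflates their standard deviation, which can mask a second anomaly shortly afterwards. Using a median-based window or excluding flagged points from later windows reduces that effect.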

Q 24. Explain the concept of recursive partitioning in time series analysis.

Recursive partitioning, in the context of time series analysis, involves repeatedly dividing the time series into smaller segments based on some criteria. This is akin to building a decision tree, but for time-dependent data. Each partition represents a distinct regime or pattern in the data. This approach is particularly useful for identifying change points or regime shifts in the time series.

Imagine a time series representing stock prices. Recursive partitioning might identify periods of high volatility followed by periods of low volatility. The algorithm would recursively split the series at points where the volatility changes significantly. A well-known algorithm built on this idea is binary segmentation, which repeatedly splits a segment at its most likely change point. Tree-based methods such as CART (Classification and Regression Trees) follow the same principle and can be adapted to temporal data, for example by splitting on the time index or on lagged values.
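One simple form of recursive partitioning for change points is binary segmentation on the mean. The sketch below splits a series where the split most reduces the squared error around the segment means, then recurses into each half. The stopping rule (`min_gain`) and minimum segment length (`min_size`) are hypothetical tuning parameters chosen for this illustration, and the data is invented.

```python
def sse(segment):
    """Sum of squared deviations from the segment mean."""
    mean = sum(segment) / len(segment)
    return sum((x - mean) ** 2 for x in segment)


def best_split(values, min_size):
    """Return (index, gain) of the split that most reduces squared error."""
    total = sse(values)
    best_index, best_gain = None, 0.0
    for k in range(min_size, len(values) - min_size + 1):
        gain = total - sse(values[:k]) - sse(values[k:])
        if gain > best_gain:
            best_index, best_gain = k, gain
    return best_index, best_gain


def binary_segmentation(values, min_size=3, min_gain=10.0, offset=0):
    """Recursively split `values`; return change-point indices in order."""
    if len(values) < 2 * min_size:
        return []
    k, gain = best_split(values, min_size)
    if k is None or gain < min_gain:
        return []  # no split is worthwhile: treat the segment as one regime
    left = binary_segmentation(values[:k], min_size, min_gain, offset)
    right = binary_segmentation(values[k:], min_size, min_gain, offset + k)
    return left + [offset + k] + right


# Hypothetical series with three regimes (means near 10, 20, and 5).
series = [10, 11, 9, 10, 10, 20, 21, 19, 20, 20, 5, 6, 4, 5, 5]
print(binary_segmentation(series))  # [5, 10]
```

Each returned index is the first observation of a new regime. To detect volatility shifts rather than mean shifts, as in the stock-price example, the cost function would be replaced by one based on segment variance.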

Q 25. Describe the use of neural networks for time series forecasting.

Neural networks, especially Recurrent Neural Networks (RNNs) and Long Short-Term Memory networks (LSTMs), are well-suited for time series forecasting because they are designed to model dependencies in sequential data. Unlike traditional linear methods like ARIMA, which can struggle with complex, non-linear patterns, neural networks can learn intricate relationships, provided enough training data is available.

LSTMs, in particular, are designed to address the vanishing gradient problem in RNNs, allowing them to learn long-term dependencies effectively. For example, an LSTM could be trained on historical sales data to predict future sales, accounting for seasonality, trends, and external factors like promotional campaigns.

The process involves preparing the data (e.g., normalization, feature engineering), designing the neural network architecture (number of layers, neurons, etc.), training the network on the historical data, and then using the trained model to make predictions on new data.
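The data-preparation step is often where mistakes creep in, so here is a small sketch of it: scaling with statistics taken from the training portion only, then turning the series into fixed-length input windows and next-step targets. The helper names, the lag count, and the sales figures are assumptions for this example; the resulting lists would then be fed to whichever network library is in use.

```python
def min_max_scale(values, lo, hi):
    """Scale values to [0, 1] using bounds computed on the training data."""
    return [(v - lo) / (hi - lo) for v in values]


def make_windows(series, n_lags, horizon=1):
    """Turn a 1-D series into (inputs, targets) for supervised learning.

    Each input is `n_lags` consecutive values; the target is the value
    `horizon` steps after the end of that window.
    """
    inputs, targets = [], []
    for start in range(len(series) - n_lags - horizon + 1):
        inputs.append(series[start:start + n_lags])
        targets.append(series[start + n_lags + horizon - 1])
    return inputs, targets


# Hypothetical monthly sales; the last two points are held out for testing.
sales = [120, 130, 125, 140, 150, 145, 160, 170]
train, test = sales[:6], sales[6:]

# Fit the scaling on the training split only to avoid leaking future data.
lo, hi = min(train), max(train)
train_scaled = min_max_scale(train, lo, hi)

X, y = make_windows(train_scaled, n_lags=3)
print(len(X), X[0], y[0])
```

Computing `lo` and `hi` from the full series would leak information about the test period into training, which inflates the apparent accuracy.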

Q 26. How can you apply change detection to anomaly detection in streaming data?

Change detection techniques are vital for anomaly detection in streaming data, as they can identify deviations from the expected patterns in real-time. For instance, we can employ cumulative sum (CUSUM) charts or Bayesian Online Change Point Detection (BOCPD) algorithms.

CUSUM continuously accumulates deviations from a reference value, typically the expected in-control mean, and resets toward zero when the deviations are small. When the cumulative sum exceeds a chosen threshold, it signals a potential change point. BOCPD uses a Bayesian framework to detect change points probabilistically, maintaining a distribution over the time elapsed since the last change and therefore providing a measure of uncertainty alongside the change point locations. These methods are particularly valuable in applications like network security monitoring, where rapid identification of anomalies is critical.

Imagine monitoring network traffic. A sudden increase in the number of failed login attempts, as detected by a CUSUM chart, could signal a potential intrusion attempt.
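A one-sided CUSUM detector for upward shifts can be sketched in a few lines, processing one observation at a time as a stream would deliver them. The class name and the values chosen for `target`, `slack`, and `threshold` are assumptions for this illustration; in practice they are tuned from historical in-control data.

```python
class CusumDetector:
    """One-sided CUSUM for detecting an upward shift in a stream."""

    def __init__(self, target, slack, threshold):
        self.target = target        # expected in-control mean
        self.slack = slack          # deviations smaller than this are ignored
        self.threshold = threshold  # alarm level for the cumulative sum
        self.cumulative = 0.0

    def update(self, value):
        """Consume one observation; return True if a shift is signalled."""
        self.cumulative = max(
            0.0, self.cumulative + value - self.target - self.slack
        )
        if self.cumulative > self.threshold:
            self.cumulative = 0.0  # restart monitoring after an alarm
            return True
        return False


# Hypothetical failed-login counts per minute; the level jumps at index 6.
failed_logins = [2, 3, 1, 2, 4, 2, 9, 11, 10, 12, 3, 2]
detector = CusumDetector(target=2.0, slack=1.0, threshold=10.0)
alarms = [t for t, x in enumerate(failed_logins) if detector.update(x)]
print(alarms)  # [7, 9]
```

The first alarm arrives one step after the shift begins because evidence has to accumulate past the threshold. Lowering the threshold shortens that delay at the cost of more false alarms.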

Q 27. Compare and contrast supervised and unsupervised change detection methods.

Both supervised and unsupervised change detection methods aim to identify changes in data patterns, but they differ in how they approach the problem:

- Supervised methods require labeled data, meaning you need to have examples of change points already identified. Algorithms like Support Vector Machines (SVMs) or neural networks can be trained on this labeled data to detect changes in new, unlabeled data. The advantage is higher accuracy if you have sufficient labeled data. The disadvantage is the need for labeling, which can be expensive and time-consuming.

- Unsupervised methods do not require labeled data. They identify changes based on statistical properties of the data itself. Examples include CUSUM charts, change point detection algorithms based on statistical tests or model-selection criteria (e.g., likelihood-ratio tests or the Bayesian Information Criterion), and clustering techniques. They are easier to apply but might be less accurate or require more careful parameter tuning.

The choice between supervised and unsupervised methods depends on the availability of labeled data and the desired level of accuracy.

Q 28. What are the ethical considerations of using time series analysis and change detection in real-world applications?

Ethical considerations in using time series analysis and change detection are crucial. These techniques can have significant societal impact, raising several concerns:

- Bias and Fairness: Data used to train these models might reflect existing societal biases, leading to unfair or discriminatory outcomes. For example, a predictive policing model trained on biased data could perpetuate inequalities.

- Privacy: Analyzing personal time-series data, such as health or financial records, raises significant privacy concerns. Appropriate anonymization and data security measures are essential.

- Transparency and Explainability: Complex models like neural networks can be ‘black boxes,’ making it difficult to understand their decision-making process. This lack of transparency can hinder accountability and trust.

- Misuse and Manipulation: These techniques can be misused to manipulate public opinion, spread misinformation, or engage in unfair competitive practices. For example, deliberately falsifying reported figures to influence stock prices is market manipulation, which is illegal in most jurisdictions.

Responsible use of these techniques requires careful consideration of these ethical implications, employing rigorous validation methods, and prioritizing transparency and fairness.

Key Topics to Learn for Change Detection and Time Series Analysis Interview

- Change Detection Fundamentals: Understanding different change detection methods (e.g., pixel-based, object-based), image differencing techniques, and the impact of various factors like noise and resolution.

- Time Series Analysis Basics: Exploring fundamental concepts such as stationarity, autocorrelation, and different time series decomposition methods (additive vs. multiplicative).

- Statistical Models for Time Series: Familiarizing yourself with ARIMA, SARIMA, and exponential smoothing models, including their assumptions and limitations.

- Change Point Detection: Mastering algorithms and techniques used to identify abrupt changes or shifts in time series data, and their practical implications.

- Forecasting Techniques: Understanding various forecasting methods, including their strengths and weaknesses, and how to select appropriate methods based on data characteristics.

- Practical Applications: Exploring real-world applications of change detection and time series analysis in fields like environmental monitoring, finance, and healthcare. Consider examples like anomaly detection in sensor data or predicting stock prices.

- Model Evaluation and Selection: Understanding key metrics for evaluating model performance (e.g., RMSE, MAE, accuracy) and techniques for model selection and comparison.

- Data Preprocessing and Feature Engineering: Mastering techniques for handling missing data, outliers, and transforming data to improve model performance.

- Software Proficiency: Demonstrating familiarity with relevant software packages like Python (with libraries such as Pandas, NumPy, Scikit-learn, Statsmodels) or R.

Next Steps

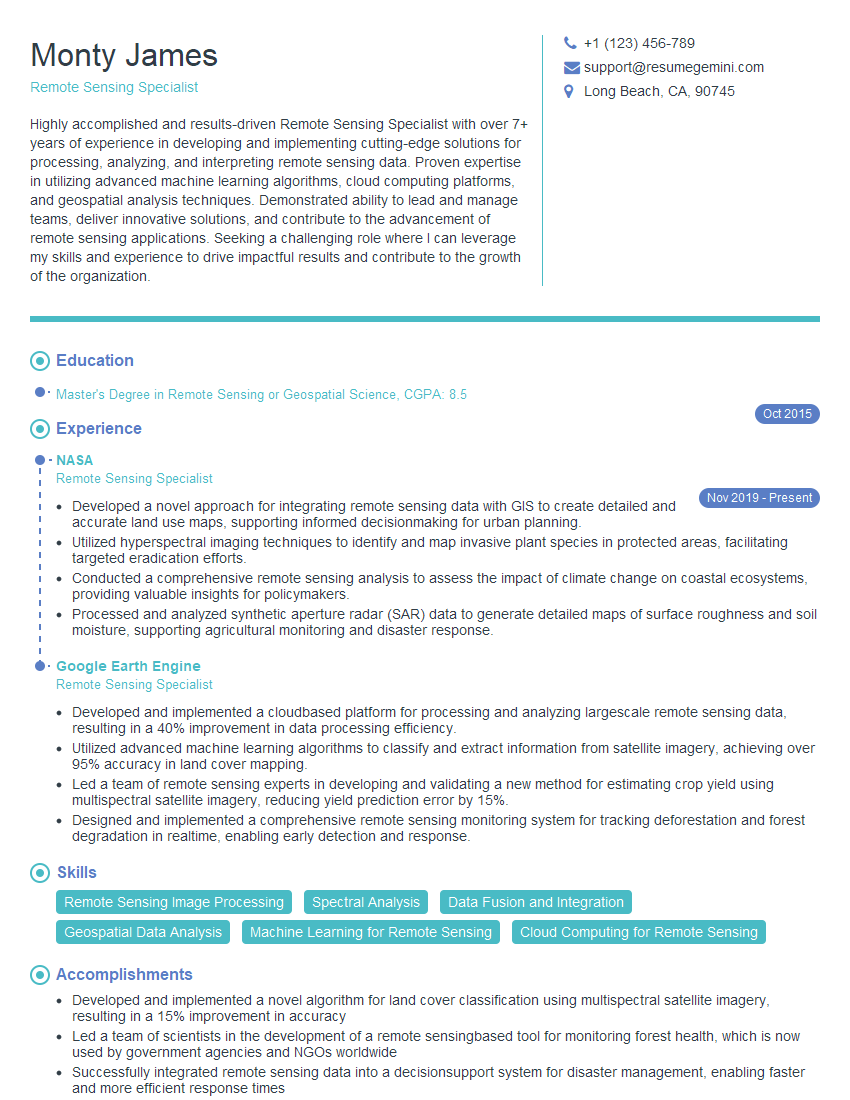

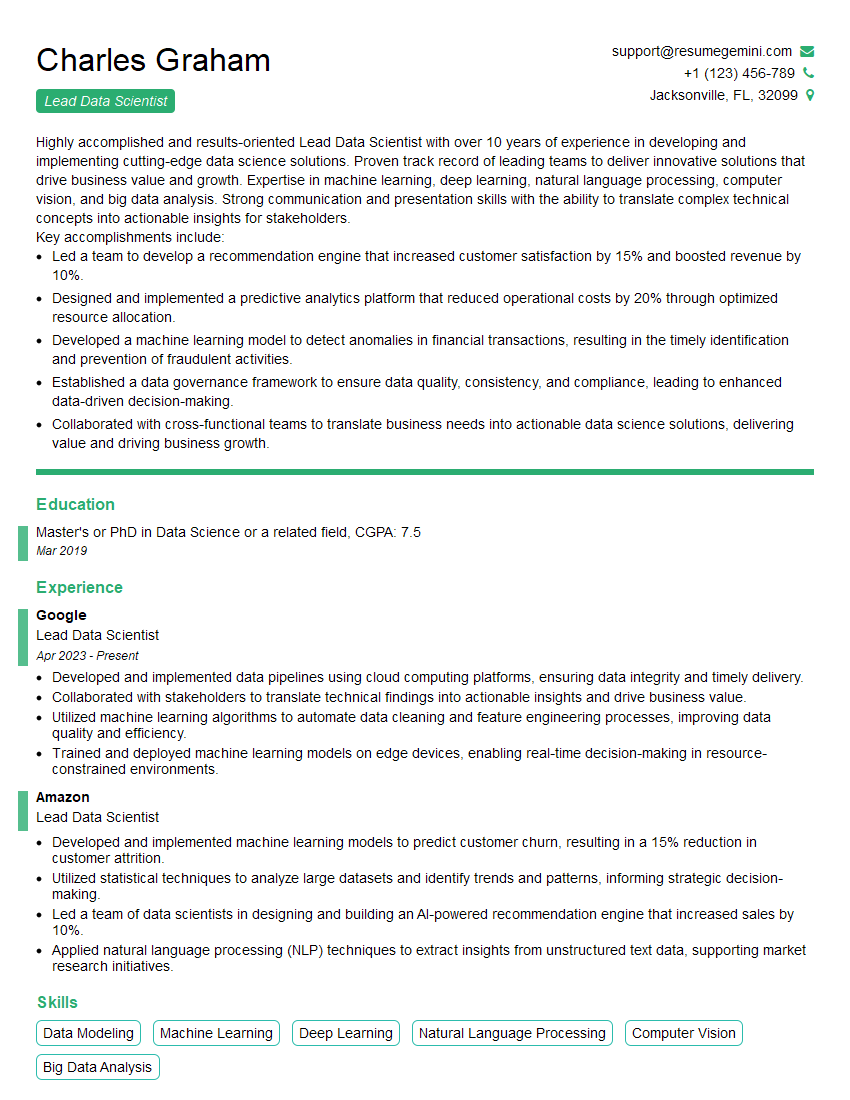

Mastering Change Detection and Time Series Analysis significantly enhances your prospects in data science, environmental science, finance, and many other fields. These skills are highly sought after, leading to exciting career opportunities and professional growth. To maximize your chances of landing your dream role, it’s crucial to present your skills effectively. Building an ATS-friendly resume is key to getting your application noticed. ResumeGemini is a trusted resource to help you craft a professional and impactful resume that highlights your expertise. Examples of resumes tailored to Change Detection and Time Series Analysis are available to guide you.
