Interviews are more than just a Q&A session—they’re a chance to prove your worth. This blog dives into essential Electro-Optical and Infrared Image Analysis interview questions and expert tips to help you align your answers with what hiring managers are looking for. Start preparing to shine!

Questions Asked in Electro-Optical and Infrared Image Analysis Interview

Q 1. Explain the differences between various infrared wavelength bands (SWIR, MWIR, LWIR).

Infrared (IR) radiation is divided into bands by wavelength, each offering unique advantages for different applications. The primary distinctions lie in the atmospheric transmission characteristics and in whether the sensor sees mostly reflected light or thermally emitted radiation.

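- Shortwave Infrared (SWIR): Ranges from approximately 0.9 to 3 micrometers. Unlike the longer bands, SWIR imaging of ambient-temperature scenes relies mainly on reflected light rather than thermal emission, so images resemble visible photographs. It passes through ordinary glass and often penetrates haze better than visible light, although water vapor absorbs strongly near 1.4 and 1.9 micrometers. It's frequently used in applications where high resolution and penetration of certain materials (like glass) are crucial, such as precision agriculture, surveillance, and mineral exploration.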

- Midwave Infrared (MWIR): Spans roughly 3 to 5 micrometers. MWIR offers a good balance between atmospheric transmission and thermal sensitivity, making it suitable for a wide range of applications, including target detection and recognition in military and security contexts, as well as industrial inspection and medical imaging. The atmospheric window around 4 micrometers is particularly favorable.

- Longwave Infrared (LWIR): Extends from roughly 8 to 15 micrometers. LWIR is highly sensitive to thermal emissions from objects at typical ambient temperatures, making it ideal for thermal imaging applications, such as night vision, building inspections, and predictive maintenance. However, it’s more susceptible to atmospheric attenuation, particularly from water vapor, limiting its range.

Imagine it like this: SWIR is like seeing by reflected light, with detail and shadows much like a daylight photo; MWIR picks up the glow of hot objects such as engines and exhaust; while LWIR is like seeing in the dark using heat as the light source: powerful, but visibility can be affected by atmospheric conditions (fog, rain etc.).
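
One way to see why LWIR suits ambient-temperature scenes while MWIR suits hotter targets is Wien's displacement law, which gives the wavelength of peak blackbody emission as b / T. The sketch below is illustrative only: the function names and example temperatures are assumptions, and the band limits are the approximate ones quoted above.

```python
# Wien's displacement constant in micrometer-kelvin.
WIEN_B_UM_K = 2897.77

def peak_wavelength_um(temperature_k):
    """Wavelength (micrometers) at which a blackbody at this temperature emits most strongly."""
    return WIEN_B_UM_K / temperature_k

def band_for_wavelength(wavelength_um):
    """Map a wavelength to the approximate band limits used in this article."""
    if 0.9 <= wavelength_um < 3.0:
        return "SWIR"
    if 3.0 <= wavelength_um <= 5.0:
        return "MWIR"
    if 8.0 <= wavelength_um <= 15.0:
        return "LWIR"
    return "outside the three bands listed"

# Hypothetical example temperatures: a room-temperature scene and a hot exhaust.
for label, temp_k in [("ambient scene", 300.0), ("hot exhaust", 800.0)]:
    peak = peak_wavelength_um(temp_k)
    print(f"{label}: {temp_k:.0f} K peaks near {peak:.1f} um ({band_for_wavelength(peak)})")
```

A 300 K scene peaks inside the LWIR band, while an 800 K source peaks in the MWIR band, which matches the typical use of each.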

Q 2. Describe the principles of thermal imaging and its applications.

Thermal imaging leverages the principle that all objects above absolute zero (-273.15°C) emit infrared radiation. The emitted power rises steeply with temperature: for an ideal blackbody, the total radiated power scales with the fourth power of absolute temperature (the Stefan-Boltzmann law), and real surfaces emit a fraction of that determined by their emissivity. A thermal camera detects this emitted infrared radiation and converts it into a visible image, where different temperatures are represented by different colors or grayscale values. Depending on the chosen palette, hotter objects typically appear brighter (or in warmer colors), while colder objects appear darker (or in cooler colors).

Applications are incredibly diverse:

- Military and Security: Target acquisition, surveillance, and navigation in low-light or nighttime conditions.

- Industrial Inspection: Detecting overheating equipment, thermal leaks, and structural anomalies.

- Medical Diagnostics: Detecting inflammation, tumors, and other anomalies through changes in temperature patterns.

- Building Inspection: Identifying energy loss through insulation deficiencies and locating hidden moisture.

- Automotive: Testing engines, cooling systems, and braking systems for heat-related issues.

For example, a thermal image of a circuit board might reveal a faulty component emitting excessive heat before it malfunctions, helping to prevent catastrophic failures. In medicine, thermal imaging has been explored as an adjunct for revealing temperature variations in breast tissue, although it is not a substitute for mammography.

Q 3. What are the common noise sources in EO/IR systems, and how are they mitigated?

Noise in EO/IR systems degrades image quality and reduces the accuracy of measurements. Common sources include:

- Detector Noise: Includes dark current (electrons generated in the detector even without illumination), read noise (noise introduced during signal readout), and shot noise (random fluctuations in the number of photons detected).

- Background Noise: This stems from ambient light sources, atmospheric emissions, and scattering of radiation within the system.

- System Noise: Can be generated by electronic components such as amplifiers and signal processing units.

Mitigation strategies involve:

- Careful Detector Selection: Choosing detectors with low dark current and read noise. Cooled detectors are often used to reduce dark current.

- Optical Filtering: Using narrow-band filters to reduce background noise and unwanted wavelengths.

- Signal Processing Techniques: Applying various filtering algorithms (like median filters, Wiener filters) to remove or reduce noise in the image data.

- Calibration and Compensation: Employing calibration techniques to correct for systematic errors and biases within the system.

- Cooling Systems: Cryogenic cooling drastically minimizes the thermal effects which generate noise.

For instance, using a cooled detector significantly reduces dark current noise, thereby improving the signal-to-noise ratio and the overall image quality, especially in low-light conditions.

Q 4. Explain the concept of atmospheric transmission and its impact on EO/IR imaging.

Atmospheric transmission refers to the fraction of electromagnetic radiation that passes through the atmosphere without being absorbed or scattered. This is crucial in EO/IR imaging because the atmosphere can significantly attenuate IR radiation depending on the wavelength, weather conditions, and distance to the target. Different wavelengths are absorbed differently; for instance, water vapor strongly absorbs certain wavelengths in the MWIR and LWIR bands.

The impact on EO/IR imaging can be severe:

- Reduced Range: Strong absorption can limit the maximum detection range of the system.

- Image Degradation: Scattering can cause blurring and reduce image contrast.

- False Alarms: Atmospheric emissions can be misinterpreted as targets.

Atmospheric models and compensation techniques are essential to correct for these effects. These models predict the transmission properties of the atmosphere at different wavelengths, allowing for more accurate interpretation of the images and improving the overall performance of EO/IR systems. A simple example is the difficulty in using LWIR cameras in foggy or rainy conditions due to the scattering and absorption of the radiation by water droplets.
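
The range-limiting effect can be sketched with the Beer-Lambert law, where transmission over a uniform path falls off as exp(-extinction × range). This is a deliberately simplified model, not a substitute for a full atmospheric code, and the extinction coefficients below are hypothetical values chosen only to show the trend.

```python
import math

def transmission(extinction_per_km, range_km):
    """Beer-Lambert law: fraction of radiation surviving a homogeneous path."""
    return math.exp(-extinction_per_km * range_km)

def max_range_km(extinction_per_km, min_transmission):
    """Range at which transmission drops to the given minimum."""
    return -math.log(min_transmission) / extinction_per_km

# Hypothetical extinction coefficients (per km), for illustration only.
conditions = {"clear": 0.1, "haze": 0.5, "fog": 5.0}
for name, sigma in conditions.items():
    print(f"{name}: transmission over 2 km = {transmission(sigma, 2.0):.3f}, "
          f"range to 10% transmission = {max_range_km(sigma, 0.1):.2f} km")
```

Even in this toy model, moving from clear air to fog shrinks the usable range by more than an order of magnitude.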

Q 5. Discuss different image registration techniques used in EO/IR image processing.

Image registration is the process of aligning multiple images of the same scene taken from different viewpoints or at different times. This is critical in EO/IR image processing for tasks like change detection, image fusion, and creating 3D models. Several techniques exist:

- Feature-based Registration: This involves identifying common features (e.g., corners, edges) in the images and using these features to estimate the transformation (translation, rotation, scaling) needed to align the images. Algorithms like Scale-Invariant Feature Transform (SIFT) and Speeded-Up Robust Features (SURF) are commonly used.

- Intensity-based Registration: This method directly compares the intensity values of pixels in the images to find the optimal alignment. Techniques like cross-correlation and mutual information are utilized.

- Model-based Registration: This involves using a priori knowledge of the scene geometry or sensor parameters to predict the transformation parameters. For example, knowing the sensor’s position and orientation.

For example, in change detection applications, registering images from different times allows for accurate comparison to highlight changes in the scene. In creating 3D models from aerial or satellite imagery, robust image registration is fundamental to building accurate representations of the earth’s surface. The choice of technique depends on the characteristics of the images and the specific application.
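
As a small illustration of intensity-based registration, the sketch below estimates a pure translation with phase correlation, a normalized form of cross-correlation computed with FFTs. It assumes the two images differ only by an integer, wrap-around shift; the function name and the synthetic test image are illustrative, and real imagery would also need handling of rotation, scale, and sub-pixel offsets.

```python
import numpy as np

def estimate_shift(reference, moving):
    """Estimate the integer (row, col) shift that maps `reference` onto `moving`
    using phase correlation (assumes a pure circular translation)."""
    f_ref = np.fft.fft2(reference)
    f_mov = np.fft.fft2(moving)
    cross_power = f_mov * np.conj(f_ref)
    cross_power /= np.abs(cross_power) + 1e-12   # keep phase only
    correlation = np.fft.ifft2(cross_power).real
    peak = np.unravel_index(np.argmax(correlation), correlation.shape)
    # Peaks past the midpoint correspond to negative shifts (FFT wrap-around).
    return tuple(int(p) if p <= s // 2 else int(p) - s
                 for p, s in zip(peak, correlation.shape))

# Synthetic test: shift a random image by a known amount and recover it.
rng = np.random.default_rng(0)
reference = rng.random((64, 64))
moving = np.roll(reference, shift=(3, -5), axis=(0, 1))
print(estimate_shift(reference, moving))
```

The printed shift should match the applied (3, -5) offset, after which the moving image can be rolled back into alignment.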

Q 6. How do you perform image enhancement and restoration in EO/IR imagery?

Image enhancement and restoration aim to improve the visual quality and information content of EO/IR imagery, often counteracting the effects of noise, atmospheric attenuation, and sensor limitations.

Enhancement techniques focus on improving the visual appearance of an image without necessarily changing the underlying information. Common techniques include:

- Contrast stretching: Expanding the range of intensity values to enhance contrast and visibility of details.

- Histogram equalization: Redistributing the intensity values to improve the overall contrast.

- Sharpening filters: Enhancing edges and details by increasing high-frequency components.

Restoration techniques attempt to reconstruct the original, uncorrupted image by removing or reducing degradation caused by noise or blurring. Common techniques include:

- Noise filtering: Removing noise while preserving important image details. This could involve median filtering, Wiener filtering, or wavelet denoising.

- Deconvolution: Recovering the sharp image from a blurred version by estimating and reversing the blurring process. This requires knowledge of the point spread function (PSF) which models the blurring process.

- Atmospheric compensation: Correcting for the effects of atmospheric absorption and scattering.

For example, deconvolution could improve the resolution of a blurry image captured through a hazy atmosphere, while a median filter could remove salt-and-pepper noise caused by the detector without significantly affecting the image details.
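
Two of the enhancement techniques above, contrast stretching and histogram equalization, are short enough to sketch in NumPy. The percentile limits, bin count, and function names are assumptions made for this example rather than recommended settings.

```python
import numpy as np

def contrast_stretch(image, low_pct=2.0, high_pct=98.0):
    """Linearly map the [low_pct, high_pct] percentile range to [0, 1], clipping outliers."""
    lo, hi = np.percentile(image, [low_pct, high_pct])
    if hi <= lo:
        return np.zeros_like(image, dtype=float)
    return np.clip((image - lo) / (hi - lo), 0.0, 1.0)

def equalize_histogram(image, bins=256):
    """Map intensities through the normalized cumulative histogram (output in [0, 1])."""
    hist, edges = np.histogram(image, bins=bins)
    cdf = np.cumsum(hist).astype(float)
    cdf /= cdf[-1]
    centers = 0.5 * (edges[:-1] + edges[1:])
    return np.interp(image, centers, cdf)

# Synthetic low-contrast frame: values squeezed into a narrow band.
rng = np.random.default_rng(1)
frame = 100.0 + 5.0 * rng.random((32, 32))
stretched = contrast_stretch(frame)
equalized = equalize_histogram(frame)
print(stretched.min(), stretched.max(), equalized.min(), equalized.max())
```

Both functions leave the scene content alone and only remap intensities, which is why they count as enhancement rather than restoration.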

Q 7. What are the advantages and disadvantages of different types of IR detectors?

Several types of IR detectors exist, each with its strengths and weaknesses:

- Photovoltaic (PV) detectors: These generate a current proportional to the incident radiation. They are known for their high sensitivity and speed, but typically have lower dynamic range compared to other types.

- Photoconductive (PC) detectors: These change their electrical resistance in proportion to incident radiation. They are generally more sensitive at longer wavelengths, but typically slower and noisier than PV detectors.

- Microbolometer detectors: These are uncooled thermal detectors that measure the temperature change caused by incident radiation. They are relatively inexpensive, rugged, and easily integrated but are generally less sensitive than cooled photodetectors.

- Quantum well infrared photodetectors (QWIPs): These devices use intersubband transitions in semiconductor quantum wells to detect infrared radiation. They can achieve high sensitivity in the LWIR range and are well suited to large, uniform arrays. They are more complex than other detector types and typically require cryogenic cooling.

Advantages and disadvantages summary:

| Detector Type | Advantages | Disadvantages |
|---|---|---|
| Photovoltaic (PV) | High sensitivity, fast response | Lower dynamic range |
| Photoconductive (PC) | Sensitive at longer wavelengths | Slower response, noisier |
| Microbolometer | Uncooled, inexpensive, rugged | Lower sensitivity |
| QWIP | High sensitivity in LWIR, array integration potential | More complex, often require cooling |

The choice of detector depends on the specific application requirements. For example, a high-speed application may benefit from a PV detector, while a low-cost thermal imaging system might use a microbolometer. Applications demanding high sensitivity in the LWIR often select QWIPs despite the complexity and associated costs.

Q 8. Explain the concept of spatial resolution and its importance in EO/IR systems.

Spatial resolution in Electro-Optical (EO) and Infrared (IR) imaging refers to the smallest discernible detail in an image. Think of it like the pixel density of a digital camera; higher resolution means smaller pixels and more detail. In EO/IR systems, it’s usually measured in terms of Ground Sample Distance (GSD), which represents the size of the area on the ground covered by a single pixel in the image. A smaller GSD indicates higher spatial resolution, enabling the detection of smaller and finer objects.

The importance of spatial resolution is paramount because it directly impacts the system’s ability to perform its tasks. For instance, in military applications, high spatial resolution is crucial for identifying individual soldiers or vehicles. In medical imaging (thermal imaging), high spatial resolution ensures the accurate detection of subtle temperature variations, aiding in disease diagnosis. A low spatial resolution image might blur details, hindering accurate identification or analysis, leading to incorrect conclusions.

For example, imagine trying to identify a specific type of aircraft. A low-resolution image might only show a blurry shape, making identification impossible. A high-resolution image, however, would reveal distinct features like the aircraft’s wings, tail, and markings, enabling accurate identification.
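
For a nadir-looking sensor, GSD follows from simple geometry: altitude multiplied by pixel pitch, divided by focal length. The sketch below assumes a flat scene directly beneath the sensor, and the sensor parameters are hypothetical.

```python
def ground_sample_distance(altitude_m, pixel_pitch_um, focal_length_mm):
    """GSD in meters for a nadir-looking sensor: altitude * pixel pitch / focal length."""
    pixel_pitch_m = pixel_pitch_um * 1e-6
    focal_length_m = focal_length_mm * 1e-3
    return altitude_m * pixel_pitch_m / focal_length_m

# Hypothetical sensor: 15 um pixels behind a 100 mm lens, flown at 1000 m.
gsd = ground_sample_distance(altitude_m=1000.0, pixel_pitch_um=15.0, focal_length_mm=100.0)
print(f"GSD = {gsd:.2f} m per pixel")
```

Halving the altitude or doubling the focal length halves the GSD, which is the usual trade against field of view.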

Q 9. Describe different target detection and recognition algorithms.

Target detection and recognition algorithms are crucial for automatically identifying objects of interest within EO/IR imagery. Several approaches exist:

- Template Matching: This involves comparing a known object’s template with the image to find similar patterns. It’s simple but sensitive to variations in scale, rotation, and viewpoint.

- Feature-based methods: These methods extract characteristic features (edges, corners, textures) from the target and image, then compare them. Examples include Scale-Invariant Feature Transform (SIFT) and Speeded-Up Robust Features (SURF). These are more robust to variations in the target’s appearance.

- Object Detection using Deep Learning: Convolutional Neural Networks (CNNs) excel at object detection. They learn to directly map image regions to object classes. Pre-trained models like YOLO (You Only Look Once) and Faster R-CNN are widely used, offering high accuracy and efficiency.

- Change Detection: This technique compares images taken at different times to highlight changes, indicating the presence of new targets. This is particularly useful for monitoring activities or identifying moving objects.

The choice of algorithm depends on factors such as computational resources, real-time requirements, and the complexity of the targets and background. For example, a simpler template matching approach might suffice for detecting a known, static object, whereas a deep learning model would be better suited for complex scenes with numerous objects.
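
Template matching is the easiest of these to show in a few lines. The sketch below is a brute-force normalized cross-correlation search; it is written for clarity rather than speed, and the function name and synthetic data are assumptions for illustration.

```python
import numpy as np

def match_template(image, template):
    """Return the (row, col) of the best match and its normalized cross-correlation score."""
    th, tw = template.shape
    t = template - template.mean()
    t_norm = np.sqrt((t ** 2).sum())
    best_score, best_pos = -np.inf, (0, 0)
    for r in range(image.shape[0] - th + 1):
        for c in range(image.shape[1] - tw + 1):
            patch = image[r:r + th, c:c + tw]
            p = patch - patch.mean()
            denom = t_norm * np.sqrt((p ** 2).sum())
            if denom == 0:
                continue  # flat patch or flat template: correlation undefined
            score = float((p * t).sum() / denom)
            if score > best_score:
                best_score, best_pos = score, (r, c)
    return best_pos, best_score

# Synthetic test: cut a patch out of a random image and find it again.
rng = np.random.default_rng(2)
scene = rng.random((40, 40))
target = scene[10:18, 22:30].copy()
position, score = match_template(scene, target)
print(position, round(score, 3))
```

Because the score is normalized, it tolerates uniform brightness and gain changes, but it still fails under rotation or scale changes, which is the weakness noted above.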

Q 10. How do you handle geometric distortions in EO/IR images?

Geometric distortions in EO/IR images are common due to factors like lens imperfections, sensor misalignment, and platform motion. These distortions can affect accuracy and interpretation. Addressing them involves geometric correction using techniques like:

- Orthorectification: This process corrects for geometric distortions caused by terrain relief and sensor perspective, transforming the image to a map projection. It requires Digital Elevation Models (DEMs) for accurate terrain representation.

- Polynomial Transformation: This involves fitting a polynomial function to the control points (known locations) in the distorted and corrected images. This corrects for various types of distortions, but the order of the polynomial affects accuracy and complexity.

- Sensor Model-based Correction: This method uses a mathematical model of the sensor’s geometry to predict and correct the distortions. This approach requires detailed knowledge of the sensor’s internal parameters.

The choice of method depends on the type and severity of distortion and available information. For example, orthorectification is best for images affected by terrain relief, while polynomial transformation is suitable for general geometric distortions. Often a combination of methods might be used for optimal results. Tools like ENVI, ERDAS IMAGINE, and ArcGIS provide functionalities for implementing these methods.
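
The polynomial approach can be sketched for its simplest case, a first-order (affine) fit to control points using least squares. The control points and coefficients here are made up for the example, and higher-order terms would be added as extra columns of the design matrix.

```python
import numpy as np

def _design_matrix(points):
    """Rows of [1, x, y] for each (x, y) point."""
    pts = np.asarray(points, dtype=float)
    return np.column_stack([np.ones(len(pts)), pts[:, 0], pts[:, 1]])

def fit_affine(src_points, dst_points):
    """Least-squares first-order polynomial mapping src (x, y) to dst (x, y).
    Returns a (3, 2) array whose columns hold the x' and y' coefficients."""
    coeffs, *_ = np.linalg.lstsq(_design_matrix(src_points),
                                 np.asarray(dst_points, dtype=float), rcond=None)
    return coeffs

def apply_affine(coeffs, points):
    """Map (x, y) points through the fitted transform."""
    return _design_matrix(points) @ coeffs

# Hypothetical control points related by a known shift, scale, and shear.
src = [(0, 0), (100, 0), (0, 100), (100, 100)]
dst = [(10 + 1.02 * x + 0.05 * y, -4 - 0.03 * x + 0.98 * y) for x, y in src]
coeffs = fit_affine(src, dst)
print(apply_affine(coeffs, [(50, 50)]))
```

With more control points than unknowns, the least-squares residuals also give a quick check on how well the chosen polynomial order describes the distortion.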

Q 11. What is the role of image segmentation in EO/IR image analysis?

Image segmentation in EO/IR image analysis is the process of partitioning an image into meaningful regions based on similarity in pixel characteristics (intensity, texture, color). This is analogous to labeling different regions of a map – roads, buildings, forests, etc. In EO/IR analysis, segmentation is essential for isolating objects of interest from the background to facilitate subsequent analysis and interpretation.

Various segmentation methods exist:

- Thresholding: This is a simple method that partitions an image into regions based on pixel intensity levels. It’s effective for images with clear contrast between the target and background.

- Region-based methods: These methods group pixels based on their similarity in characteristics, growing regions iteratively. Examples include region growing and watershed segmentation.

- Edge-based methods: These methods detect edges or boundaries between different regions and use them to segment the image. Examples include Canny edge detection and Sobel operator.

- Deep Learning based methods: Deep learning techniques, particularly U-Net and Mask R-CNN, have become very popular due to their ability to handle complex scenes and automatically learn relevant features for segmentation.

Segmentation is critical for various applications, such as target detection, object recognition, and change detection. For instance, segmenting an infrared image could help in isolating a potential heat source (e.g., a fire) from the surrounding environment.
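
For the hot-spot case, thresholding is often enough. The sketch below picks the threshold automatically with Otsu's method, which maximizes the between-class variance of the histogram. The synthetic scene and function name are assumptions for illustration.

```python
import numpy as np

def otsu_threshold(image, bins=256):
    """Threshold (a histogram bin edge) that maximizes between-class variance."""
    hist, edges = np.histogram(image, bins=bins)
    centers = 0.5 * (edges[:-1] + edges[1:])
    weights = hist / hist.sum()
    w0 = np.cumsum(weights)                  # probability of the lower class
    w1 = 1.0 - w0                            # probability of the upper class
    cum_mean = np.cumsum(weights * centers)
    total_mean = cum_mean[-1]
    with np.errstate(divide="ignore", invalid="ignore"):
        between = (total_mean * w0 - cum_mean) ** 2 / (w0 * w1)
    between = np.nan_to_num(between, nan=0.0, posinf=0.0, neginf=0.0)
    # Use the upper edge of the best bin so the lower class is "value < threshold".
    return edges[np.argmax(between) + 1]

# Synthetic thermal scene: ~20 degree background with a 10x10 hot spot near 80 degrees.
rng = np.random.default_rng(3)
scene = 20.0 + rng.normal(0.0, 1.0, (64, 64))
scene[20:30, 20:30] += 60.0
threshold = otsu_threshold(scene)
hot_mask = scene >= threshold
print(f"threshold = {threshold:.1f}, hot pixels = {int(hot_mask.sum())}")
```

The resulting mask can then be passed to later stages such as region labeling or feature extraction.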

Q 12. Explain the concept of feature extraction in EO/IR image processing.

Feature extraction in EO/IR image processing is the process of selecting and quantifying the key characteristics of objects or regions within an image. These features act as a compact representation of the original image data, reducing dimensionality and complexity while preserving important information. Think of it as summarizing the main points of a long report to extract the key findings.

Features can be categorized into several types:

- Spectral features: These are based on the intensity values at different wavelengths (e.g., different spectral bands in multispectral or hyperspectral imagery). They are critical in distinguishing materials based on their spectral signatures.

- Spatial features: These are based on the spatial arrangement of pixels, such as texture, shape, and size. For example, the fractal dimension of a texture can be a useful feature.

- Geometric features: These relate to the object’s size, orientation, and shape (e.g., perimeter, area, aspect ratio).

- Transform-based features: These are derived from image transforms like Fourier, Wavelet, or Gabor transforms. They capture features related to frequency content or texture.

Feature extraction is a critical step because the right features significantly impact the accuracy of subsequent processing stages such as classification or object recognition. Poor feature selection can lead to misleading results. For instance, in detecting camouflage, features related to texture and spectral characteristics might be more relevant than simple geometric shape.
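
A minimal sketch of geometric and intensity feature extraction for one segmented region is shown below. It assumes a boolean mask is already available from a segmentation step; the function name and chosen features are illustrative.

```python
import numpy as np

def region_features(image, mask):
    """Compact description of one region given a boolean mask of its pixels."""
    rows, cols = np.nonzero(mask)
    height = rows.max() - rows.min() + 1     # bounding-box height in pixels
    width = cols.max() - cols.min() + 1      # bounding-box width in pixels
    values = image[mask]
    return {
        "area_px": int(mask.sum()),
        "centroid": (float(rows.mean()), float(cols.mean())),
        "aspect_ratio": float(width / height),
        "mean_intensity": float(values.mean()),
        "std_intensity": float(values.std()),
    }

# Synthetic example: a 4 x 8 pixel warm rectangle on a cooler background.
image = np.full((32, 32), 20.0)
mask = np.zeros((32, 32), dtype=bool)
mask[10:14, 5:13] = True
image[mask] = 35.0
features = region_features(image, mask)
print(features)
```

A feature vector like this is far smaller than the raw pixels and can be fed directly to a classifier.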

Q 13. Discuss the application of machine learning techniques in EO/IR image analysis.

Machine learning (ML) techniques are revolutionizing EO/IR image analysis by enabling automated and more accurate analysis of complex scenes. Various ML methods find extensive applications:

- Classification: ML algorithms are used to classify pixels or objects based on their features (e.g., land cover classification, target identification).

- Object Detection: Deep learning models (CNNs) provide highly accurate and efficient object detection capabilities. They learn to directly identify and locate objects in images.

- Regression: This is used to estimate continuous values from image data, such as temperature estimation in thermal imagery or estimating the size of an object from its image.

- Anomaly Detection: ML algorithms can effectively identify unusual patterns or anomalies in EO/IR images, aiding in applications such as detecting security breaches or identifying defects in manufactured components.

The success of ML methods depends on having sufficient labeled data for training. Transfer learning, where a pre-trained model is fine-tuned on a smaller dataset, can address data scarcity issues. For example, a pre-trained CNN could be fine-tuned to identify specific types of military vehicles in satellite imagery.

Q 14. How do you evaluate the performance of an EO/IR system?

Evaluating the performance of an EO/IR system is crucial for ensuring its effectiveness and reliability. Evaluation methods depend on the system’s intended application and involve multiple aspects:

- Spatial Resolution: Measured by GSD, it dictates the level of detail captured.

- Spectral Resolution: The number of spectral bands and their wavelengths determine the system’s ability to distinguish materials based on their spectral signatures.

- Radiometric Resolution: The number of bits used to represent the intensity values, impacting the sensitivity and dynamic range of the sensor.

- Detection Probability: The probability of correctly identifying the presence of a target.

- False Alarm Rate: The probability of incorrectly identifying a non-target as a target.

- Classification Accuracy: For systems performing classification, accuracy is assessed using metrics like precision, recall, and F1-score.

- Geometric Accuracy: Assessed by comparing the system’s output with ground truth data to determine the accuracy of geometric parameters.

Real-world performance evaluation may also involve field tests under various operating conditions to assess the system’s robustness and reliability. Quantitative metrics, alongside qualitative assessment of image quality and usability, are necessary to provide a holistic evaluation.
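
The detection and classification metrics above all derive from four confusion counts. A minimal sketch follows, with hypothetical counts standing in for a labeled test set.

```python
def detection_metrics(tp, fp, fn, tn):
    """Summary metrics from confusion counts (true/false positives and negatives)."""
    def ratio(numerator, denominator):
        return numerator / denominator if denominator else 0.0

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)              # also the detection probability
    return {
        "detection_probability": recall,
        "false_alarm_rate": ratio(fp, fp + tn),
        "precision": precision,
        "recall": recall,
        "f1": ratio(2 * precision * recall, precision + recall),
    }

# Hypothetical test results: 100 real targets and 900 non-target samples.
metrics = detection_metrics(tp=90, fp=10, fn=10, tn=890)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Reporting detection probability together with false alarm rate matters because either one can be improved trivially at the expense of the other.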

Q 15. Describe your experience with specific EO/IR software packages (e.g., ENVI, IDL).

My experience with EO/IR software packages is extensive. I’ve worked extensively with ENVI, a powerful platform for image processing, analysis, and visualization. I’ve utilized its capabilities for tasks ranging from atmospheric correction and orthorectification to feature extraction and classification using various algorithms. For example, I used ENVI’s spectral library to identify different materials in a hyperspectral image of a mine site. I’m also proficient in IDL (Interactive Data Language), particularly for developing custom algorithms and automating complex processing workflows. IDL’s strength lies in its scripting capabilities and efficient handling of large datasets. A project I worked on involved creating a custom IDL routine for real-time processing of thermal imagery from an unmanned aerial vehicle (UAV), allowing for immediate identification of potential hotspots.

Beyond ENVI and IDL, I have experience with other relevant software, including ArcGIS for geospatial analysis and integration of EO/IR data with geographic information systems (GIS), and MATLAB for signal processing and algorithm development. This diverse software skillset allows me to approach challenges from various perspectives and choose the best tool for the job.

Q 16. Explain the concept of radiometric calibration in EO/IR imaging.

Radiometric calibration in EO/IR imaging is crucial for converting the sensor’s raw digital numbers (DN) into physically meaningful units, typically radiance or temperature. Imagine taking a photo with an uncalibrated camera – the colors would be distorted and unreliable. Similarly, uncalibrated EO/IR data provides inaccurate measurements. The process typically involves several steps. First, we need to understand the sensor’s response function which maps the incoming radiation to the recorded DN. This is often provided by the manufacturer. Then, we apply calibration coefficients to convert the DNs into radiance, accounting for factors like sensor gain, offset, and the sensor’s spectral response. Finally, for thermal imaging, we use Planck’s law to convert radiance to temperature, considering the emissivity of the target. This ensures that temperature measurements are accurate and comparable across different images and sensors. In a practical example, accurately calibrating a thermal image of a building would allow us to identify areas of heat loss, crucial for energy efficiency assessments.

Q 17. How do you address problems with image artifacts in EO/IR images?

Image artifacts in EO/IR images can significantly impact the accuracy and reliability of analysis. These artifacts can manifest in various forms, including noise, striping, blooming, and geometric distortions. My approach to addressing these issues is multifaceted and depends on the nature of the artifact. For instance, noise reduction can be achieved through techniques like median filtering or wavelet denoising. Striping, often caused by sensor inconsistencies, may require specialized algorithms such as destriping filters. Geometric distortions, resulting from lens imperfections or platform motion, can be corrected using geometric rectification techniques, often involving ground control points. Blooming, where bright objects overwhelm the sensor, may require non-linear correction methods. Each approach requires careful consideration, as aggressive artifact removal can also introduce unwanted effects. My process involves a thorough visual inspection, followed by a targeted application of appropriate techniques, carefully assessing the impact of each step to maintain data integrity.

Q 18. Describe your experience with various types of IR cameras and their functionalities.

My experience encompasses a range of IR cameras, including microbolometer-based uncooled cameras, which are widely used for their low cost and ease of use. I’ve worked with these in various applications, such as building inspections and security systems. I also have experience with cooled IR cameras, featuring Mercury Cadmium Telluride (MCT) or Indium Antimonide (InSb) detectors. These offer higher sensitivity and resolution, essential for long-range detection and high-precision measurements. For example, I’ve utilized cooled cameras in atmospheric research, measuring subtle temperature variations in cloud formations. Furthermore, I have experience working with hyperspectral IR cameras, enabling the acquisition of detailed spectral information from targets. The choice of IR camera depends heavily on the application’s specific requirements, considering factors such as sensitivity, resolution, field of view, and operating temperature range.

Q 19. What is the difference between active and passive EO/IR sensors?

The key difference between active and passive EO/IR sensors lies in their method of illumination. Passive sensors, like thermal cameras, detect naturally emitted radiation from objects, such as infrared radiation from heat. Think of it like seeing in the dark using only your eyes; you’re relying on the available light. Active sensors, on the other hand, emit their own radiation (e.g., laser) and measure the reflected or backscattered signal. It’s like using a flashlight to illuminate an object in the dark. Examples of active sensors include LiDAR (Light Detection and Ranging) and laser rangefinders. Passive sensors are advantageous for covert observation, while active sensors offer precise range measurements and better control over the illumination conditions. The choice between the two depends heavily on the specific application and the information required.

Q 20. Discuss the challenges of working with high-resolution EO/IR data.

High-resolution EO/IR data presents unique challenges. The sheer volume of data requires significant processing power and storage capacity. Processing time increases dramatically, and specialized algorithms are often necessary to efficiently handle the data. Furthermore, the increased detail can highlight minor artifacts or noise that might be less apparent in lower-resolution data, requiring more careful preprocessing and noise reduction techniques. Data management becomes critical, requiring robust storage solutions and efficient data organization strategies. Finally, visual interpretation of high-resolution imagery can be time-consuming and requires expertise to effectively extract meaningful information. To manage these challenges, I rely on parallel processing techniques, optimized algorithms, and advanced data management strategies. Careful planning, including the design of efficient workflows and the selection of appropriate analysis techniques, is crucial to effectively manage these datasets.

Q 21. How do you handle data compression and storage for large EO/IR datasets?

Handling the compression and storage of large EO/IR datasets is a critical aspect of working with this type of data. Lossless compression, such as JPEG 2000 in its lossless mode, is preferred to preserve data integrity, although it achieves lower compression ratios than lossy methods. Lossy compression might be acceptable for certain applications where minor data loss is tolerable. To optimize storage, I typically employ hierarchical data structures and utilize cloud storage solutions to handle large datasets effectively. Metadata management is also paramount; meticulous record-keeping of data acquisition parameters, processing steps, and analysis results ensures data traceability and reproducibility. The choice of compression method and storage strategy depends on the specific project requirements, balancing data integrity with storage efficiency and accessibility. For archiving, robust and secure long-term storage solutions are necessary to ensure data preservation.
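
The key property of lossless compression is that the restored data is bit-for-bit identical to the original. The sketch below demonstrates that round trip using Python's built-in zlib as a stand-in, not JPEG 2000; the frame size and helper names are assumptions for illustration.

```python
import zlib
import numpy as np

def compress_frame(frame, level=6):
    """Losslessly compress a frame; returns bytes plus the metadata needed to restore it."""
    contiguous = np.ascontiguousarray(frame)
    meta = {"shape": contiguous.shape, "dtype": str(contiguous.dtype)}
    return zlib.compress(contiguous.tobytes(), level), meta

def decompress_frame(payload, meta):
    """Rebuild the original array from compressed bytes and stored metadata."""
    flat = np.frombuffer(zlib.decompress(payload), dtype=meta["dtype"])
    return flat.reshape(meta["shape"])

# Synthetic 16-bit frame with a smooth gradient, which compresses well.
frame = np.tile(np.arange(640, dtype=np.uint16), (480, 1))
payload, meta = compress_frame(frame)
restored = decompress_frame(payload, meta)
print(f"{frame.nbytes} bytes -> {len(payload)} bytes, identical: {np.array_equal(frame, restored)}")
```

Storing the shape and data type alongside the payload is a small instance of the metadata discipline described above: without them the bytes cannot be interpreted.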

Q 22. Explain the concept of signal-to-noise ratio (SNR) and its importance in EO/IR imaging.

Signal-to-Noise Ratio (SNR) is a crucial metric in electro-optical (EO) and infrared (IR) imaging that quantifies the strength of the desired signal relative to the background noise. A high SNR indicates a clear, strong signal easily distinguishable from the noise, while a low SNR suggests a weak signal obscured by noise, leading to poor image quality.

Imagine trying to hear a faint whisper in a noisy room. The whisper is your signal, and the room noise is the noise. A high SNR would be like having a clear, loud voice in a quiet room – easy to understand. A low SNR is like trying to decipher that whisper in a crowded, noisy bar – very difficult.

In EO/IR imaging, the signal represents the information we want to capture (e.g., the thermal signature of an object), and the noise can stem from various sources such as sensor noise, atmospheric interference, or electronic noise in the system. A high SNR is essential for accurate object detection, identification, and tracking. It directly impacts the image’s clarity, contrast, and overall interpretability. Applications demanding high SNR include medical imaging, surveillance, and satellite remote sensing.
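
One common working definition of SNR for a target against a background is the mean target-minus-background difference divided by the background standard deviation. The sketch below uses that definition and the 20·log10 convention for decibels; both are assumptions, since conventions vary between communities.

```python
import numpy as np

def snr(target_pixels, background_pixels):
    """Contrast SNR: mean target-minus-background signal over background noise (std)."""
    signal = np.mean(target_pixels) - np.mean(background_pixels)
    noise = np.std(background_pixels)
    return float(signal / noise)

def snr_db(ratio):
    """Express an amplitude SNR in decibels."""
    return float(20.0 * np.log10(ratio))

# Synthetic example: a target 5 K warmer than a background with 0.5 K of noise.
rng = np.random.default_rng(4)
background = 300.0 + rng.normal(0.0, 0.5, 5000)
target = 305.0 + rng.normal(0.0, 0.5, 200)
ratio = snr(target, background)
print(f"SNR = {ratio:.1f} ({snr_db(ratio):.1f} dB)")
```

With these synthetic numbers the SNR comes out near 10, since the 5 K contrast is about ten times the 0.5 K noise.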

Q 23. Describe your experience with developing or implementing algorithms for EO/IR systems.

My experience encompasses the development and implementation of numerous algorithms for EO/IR systems. I’ve worked extensively on image processing techniques such as noise reduction using wavelet transforms and median filtering, image registration for aligning images taken at different times or perspectives, and target detection algorithms based on machine learning techniques like convolutional neural networks (CNNs).

For instance, in one project, I developed a real-time object tracking algorithm for an airborne surveillance system using a combination of Kalman filtering for prediction and correlation-based tracking for confirmation. This required optimizing the algorithm for speed and accuracy to ensure reliable target tracking despite variations in atmospheric conditions and sensor noise. Another project involved developing a background subtraction algorithm for thermal imagery, improving the detection of anomalies by suppressing stationary background components. The implementation used a Gaussian mixture model of the background pixels to adapt robustly to varying lighting and thermal conditions. The algorithm substantially reduced the false-positive detection rate, which improved situational awareness.
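The prediction step of such a tracker can be sketched with a constant-velocity Kalman filter. This is a generic textbook form, not the project's actual implementation; the state layout `[x, y, vx, vy]`, the noise levels `q` and `r`, and the function name `kalman_step` are assumptions chosen for the example.

```python
import numpy as np

def kalman_step(x, P, z, dt=1.0, q=1e-2, r=1.0):
    """One predict/update cycle for state [x, y, vx, vy] and measurement [x, y]."""
    F = np.array([[1, 0, dt, 0],
                  [0, 1, 0, dt],
                  [0, 0, 1, 0],
                  [0, 0, 0, 1]], dtype=float)
    H = np.array([[1, 0, 0, 0],
                  [0, 1, 0, 0]], dtype=float)
    Q = q * np.eye(4)   # assumed process noise
    R = r * np.eye(2)   # assumed measurement noise

    # Predict the state and covariance one frame ahead
    x_pred = F @ x
    P_pred = F @ P @ F.T + Q

    # Update with the measured target position
    innovation = z - H @ x_pred
    S = H @ P_pred @ H.T + R
    K = P_pred @ H.T @ np.linalg.inv(S)
    x_new = x_pred + K @ innovation
    P_new = (np.eye(4) - K @ H) @ P_pred
    return x_new, P_new

# Hypothetical target moving at (1.0, 0.5) pixels per frame with noisy detections
rng = np.random.default_rng(1)
true_velocity = np.array([1.0, 0.5])
x, P = np.zeros(4), 100.0 * np.eye(4)
for k in range(1, 51):
    z = true_velocity * k + rng.normal(0.0, 0.5, size=2)
    x, P = kalman_step(x, P, z)
estimated_velocity = x[2:]
```

In a real tracker, the predicted position would define the search window for the correlation step, and the correlation peak would supply the measurement `z`.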

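Example code snippet (Python): a simplified median filter for noise reduction.

```python
import numpy as np

def median_filter(image, kernel_size):
    # kernel_size is assumed odd; edges are padded by replicating border pixels
    pad = kernel_size // 2
    padded = np.pad(image, pad, mode="edge")
    windows = np.lib.stride_tricks.sliding_window_view(padded, (kernel_size, kernel_size))
    return np.median(windows, axis=(-2, -1))
```

Q 24. What are the ethical considerations related to the application of EO/IR technology?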

The ethical considerations surrounding EO/IR technology are significant and multifaceted. Privacy concerns are paramount, as EO/IR systems can capture images with high resolution and thermal information that can reveal sensitive details about individuals without their knowledge or consent. This raises ethical questions about surveillance, data storage, and potential misuse.

Furthermore, the use of EO/IR in military applications necessitates careful consideration of the potential for collateral damage and civilian casualties. Algorithmic bias can also affect EO/IR applications, leading to inaccurate or discriminatory outcomes. For example, facial recognition systems using EO/IR might show biases depending on the skin tone of individuals. Transparent development processes, robust testing, and clear guidelines are critical to mitigating these risks. Ensuring responsible development and deployment requires careful consideration of these issues and a strong commitment to ethical guidelines and legal frameworks.

Q 25. How would you approach a problem with image blurring in EO/IR imagery?

Image blurring in EO/IR imagery can result from various factors, including atmospheric turbulence, defocus, motion blur, or sensor limitations. Addressing this requires a multi-faceted approach.

- Identify the cause: Analyze the image and its metadata to determine the likely cause of blurring. For instance, atmospheric turbulence typically leads to a more random blur, while motion blur manifests as streaks.

- Image restoration techniques: Various image processing techniques can help mitigate blurring. For motion blur, deconvolution algorithms can be effective, while Wiener filtering or other adaptive filtering methods help when noise accompanies the blur. If the blur is due to defocus, one can attempt to digitally refocus the image using algorithms that treat restoration as an inverse problem, though this is not always successful.

- Improve acquisition parameters: In future image acquisitions, adjust parameters like exposure time, aperture settings, and stabilization techniques to minimize blurring. Better atmospheric compensation techniques during acquisition can also be employed.

- Super-resolution techniques: For significantly degraded images, advanced super-resolution methods can attempt to reconstruct a higher-resolution image from the blurry one, although this approach is more computationally expensive.

The choice of technique depends heavily on the specific type and severity of blurring. In many cases, a combination of methods might be necessary for optimal results. The process is iterative: I evaluate the result of each step and refine the choice of technique accordingly.
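As an illustration of the restoration step, the following sketch applies frequency-domain Wiener deconvolution to a synthetic image with horizontal motion blur. It assumes the point spread function (PSF) is known and uses a constant noise-to-signal ratio `nsr` as a tuning parameter; the helper names, the 9-pixel blur length, and the test image are all assumptions made for the example.

```python
import numpy as np

def psf_to_otf(psf, shape):
    """Zero-pad the PSF to the image shape, center it at the origin, and take its FFT."""
    padded = np.zeros(shape, dtype=float)
    kh, kw = psf.shape
    padded[:kh, :kw] = psf
    padded = np.roll(padded, (-(kh // 2), -(kw // 2)), axis=(0, 1))
    return np.fft.fft2(padded)

def wiener_deconvolve(blurred, psf, nsr=1e-3):
    """Wiener deconvolution with a constant noise-to-signal ratio (assumed, not estimated)."""
    H = psf_to_otf(psf, blurred.shape)
    G = np.fft.fft2(blurred)
    W = np.conj(H) / (np.abs(H) ** 2 + nsr)
    return np.real(np.fft.ifft2(W * G))

# Synthetic scene: a bright square blurred by 9-pixel horizontal motion (circular convolution)
sharp = np.zeros((64, 64))
sharp[24:40, 24:40] = 1.0
psf = np.full((1, 9), 1.0 / 9.0)
blurred = np.real(np.fft.ifft2(np.fft.fft2(sharp) * psf_to_otf(psf, sharp.shape)))

restored = wiener_deconvolve(blurred, psf)
err_blurred = np.mean((blurred - sharp) ** 2)
err_restored = np.mean((restored - sharp) ** 2)
```

With real imagery the PSF usually has to be estimated, and `nsr` should be raised as sensor noise increases to avoid amplifying it.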

Q 26. Explain the concept of modulation transfer function (MTF) and its relevance.

The Modulation Transfer Function (MTF) is a critical metric that characterizes the ability of an optical system, including sensors, to reproduce the contrast of different spatial frequencies. Essentially, it quantifies how well the system transfers the spatial frequencies of an object onto the image plane.

Think of it as a measure of the system’s sharpness. A high MTF at high spatial frequencies means the system can accurately reproduce fine details (high sharpness), while a low MTF indicates a loss of contrast and detail (blurriness). It’s usually plotted as a graph showing MTF (usually expressed as a percentage of the ideal value) against spatial frequency (typically expressed in cycles per millimeter).

The MTF is crucial for several reasons. It enables the assessment and comparison of the performance of different optical systems. It’s used in designing and optimizing systems to meet specific resolution requirements. In quality control, the MTF is essential for characterizing the performance of the delivered system and ensuring it satisfies the specifications. It’s a valuable tool for predicting the image quality in a real-world scenario and for troubleshooting image quality issues, as low MTF values in specific frequency ranges suggest degradation in certain parts of the system or image acquisition process.
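One standard way to obtain an MTF curve numerically is to take the magnitude of the Fourier transform of the line spread function (LSF) and normalize it to 1 at zero frequency. The sketch below does this for a synthetic Gaussian LSF; the 15 µm pixel pitch, the blur width, and the function name `mtf_from_lsf` are assumed values for illustration.

```python
import numpy as np

def mtf_from_lsf(lsf, pixel_pitch_mm):
    """Return spatial frequencies (cycles/mm) and the normalized MTF of a sampled LSF."""
    lsf = np.asarray(lsf, dtype=float)
    spectrum = np.abs(np.fft.rfft(lsf))
    freqs = np.fft.rfftfreq(lsf.size, d=pixel_pitch_mm)
    return freqs, spectrum / spectrum[0]

# Synthetic Gaussian LSF: sigma of 2 pixels on a detector with 15 µm pitch (assumed)
n, sigma_px, pitch_mm = 128, 2.0, 0.015
pixels = np.arange(n) - n // 2
lsf = np.exp(-0.5 * (pixels / sigma_px) ** 2)

freqs, mtf = mtf_from_lsf(lsf, pitch_mm)
nyquist = freqs[-1]  # 1 / (2 * pitch), the highest frequency the detector samples
```

For a Gaussian LSF the result follows the analytic form exp(-2π²σ²f²), with f in cycles per pixel, which makes it a convenient sanity check for the measurement code.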

Q 27. Describe your experience with designing and testing optical systems.

My experience in designing and testing optical systems includes both theoretical modeling and hands-on experimentation. I’ve used optical design software (e.g., Zemax, Code V) to model and simulate the performance of various optical systems for different applications, ranging from telescopes to microscopic imaging systems. This often involves optimizing design parameters such as lens curvatures, spacing, and material selection to minimize aberrations and maximize image quality and MTF.

The testing phase involves both laboratory-based measurements and field tests. I’ve conducted extensive testing of optical systems using techniques such as MTF measurements, spot diagrams, and wavefront analysis to validate the theoretical designs. For instance, while testing a compact infrared camera system, I used a calibrated resolution target and a controlled environmental chamber to systematically measure MTF and other relevant parameters under different operating temperatures and humidity conditions. This allowed me to characterize system performance over the environmental range.

Q 28. How would you troubleshoot a malfunctioning EO/IR sensor?

Troubleshooting a malfunctioning EO/IR sensor requires a systematic approach:

- Check environmental conditions: Ensure that temperature, humidity, and power supply are within the sensor’s operational limits.

- Inspect cabling and connectors: Look for loose connections, damage to cables, or other physical issues.

- Review sensor data and logs: Examine the sensor’s output for anomalies, such as unusual noise levels, image artifacts, or error messages.

- Isolate the problem: Determine if the issue is with the sensor itself, the associated electronics, or external factors (e.g., interference).

- Perform calibration checks: Verify if the sensor’s calibration is valid and accurate. If needed, recalibrate the sensor to correct for any drifts or offsets.

- Consult technical documentation: Review the sensor’s specifications and troubleshooting guides. Some sophisticated systems have self-diagnostic tools.

- Contact manufacturer support: If the problem persists, contact the manufacturer for technical assistance.

A methodical approach combining visual inspection, data analysis, and technical knowledge can often pinpoint the root cause and enable efficient resolution of sensor malfunction. The specifics would naturally depend on the sensor type, its integration within the larger system, and the nature of the malfunction itself. The availability of self-diagnostic information from the system, as well as access to specialized equipment for detailed testing (e.g., oscilloscopes, spectrum analyzers), would affect the process.
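The data-review step in the list above can be partly automated. The sketch below is one possible approach, not a vendor procedure: it takes a stack of dark frames and flags pixels whose temporal mean or temporal noise is a strong outlier relative to the rest of the array, using a robust median and MAD threshold. The threshold `k`, the synthetic frame values, and the function name `flag_bad_pixels` are assumptions.

```python
import numpy as np

def flag_bad_pixels(dark_frames, k=6.0):
    """Flag pixels whose temporal mean or noise deviates strongly from the array median."""
    stack = np.asarray(dark_frames, dtype=float)
    mean_map = stack.mean(axis=0)
    noise_map = stack.std(axis=0, ddof=1)

    def outliers(values):
        med = np.median(values)
        # Scaled median absolute deviation as a robust estimate of spread
        mad = 1.4826 * np.median(np.abs(values - med)) + 1e-12
        return np.abs(values - med) > k * mad

    return outliers(mean_map) | outliers(noise_map)

# Synthetic dark frames (assumed values) with one hot and one dead pixel injected
rng = np.random.default_rng(2)
frames = 100.0 + rng.normal(0.0, 1.0, size=(32, 64, 64))
frames[:, 10, 10] = 200.0  # hot pixel stuck high
frames[:, 20, 20] = 0.0    # dead pixel stuck at zero

bad = flag_bad_pixels(frames)
```

A sudden increase in the number of flagged pixels, or in the median of the noise map between sessions, is the kind of anomaly that would prompt a closer look at cooling, power, or calibration.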

Key Topics to Learn for Electro-Optical and Infrared Image Analysis Interview

- Electromagnetic Spectrum and Sensor Physics: Understand the principles of light interaction with matter, the characteristics of different spectral bands (visible, near-infrared, mid-infrared, etc.), and the operation of various electro-optical sensors (CCD, CMOS, InGaAs, etc.). Consider the limitations of each sensor type.

- Image Formation and Acquisition: Grasp the processes involved in image formation, including lens design, optical aberrations, and the effects of atmospheric scattering. Explore different imaging modalities like passive and active sensing, and understand the nuances of data acquisition.

- Image Processing and Enhancement Techniques: Familiarize yourself with common image processing algorithms such as noise reduction (filtering), contrast enhancement, geometric correction, and image registration. Understanding the practical applications and limitations of each technique is crucial.

- Infrared Thermography and Applications: Explore the principles of thermal imaging, including the relationship between temperature and emitted infrared radiation. Understand the applications of infrared thermography in various fields like defense, industrial inspection, and medical diagnostics.

- Target Detection and Recognition: Develop a strong understanding of algorithms and techniques used for object detection, classification, and tracking in electro-optical and infrared imagery. This includes feature extraction, pattern recognition, and machine learning approaches.

- Data Analysis and Interpretation: Master the skills needed to interpret and extract meaningful information from processed images. This includes understanding various data formats, analyzing statistical properties, and presenting findings effectively.

- Calibration and Validation: Understand the importance of sensor calibration and data validation techniques to ensure accuracy and reliability of the analysis results. Explore different methods and their impact on data integrity.
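For the thermography topic above, a quick way to internalize the link between temperature and emitted infrared radiation is Wien's displacement law, which gives the wavelength of peak blackbody emission. The short sketch below evaluates it for two example temperatures; the temperatures and the function name are chosen for illustration, and real objects are not perfect blackbodies.

```python
WIEN_B_UM_K = 2897.77  # Wien displacement constant in µm·K

def peak_wavelength_um(temperature_k):
    """Wavelength of peak blackbody emission, in micrometers."""
    return WIEN_B_UM_K / temperature_k

ambient_peak = peak_wavelength_um(300.0)  # about 9.66 µm, inside the LWIR band
hot_peak = peak_wavelength_um(800.0)      # about 3.62 µm, inside the MWIR band
```

This is why LWIR sensors suit objects near ambient temperature, while hotter sources shift their peak emission toward MWIR.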

Next Steps

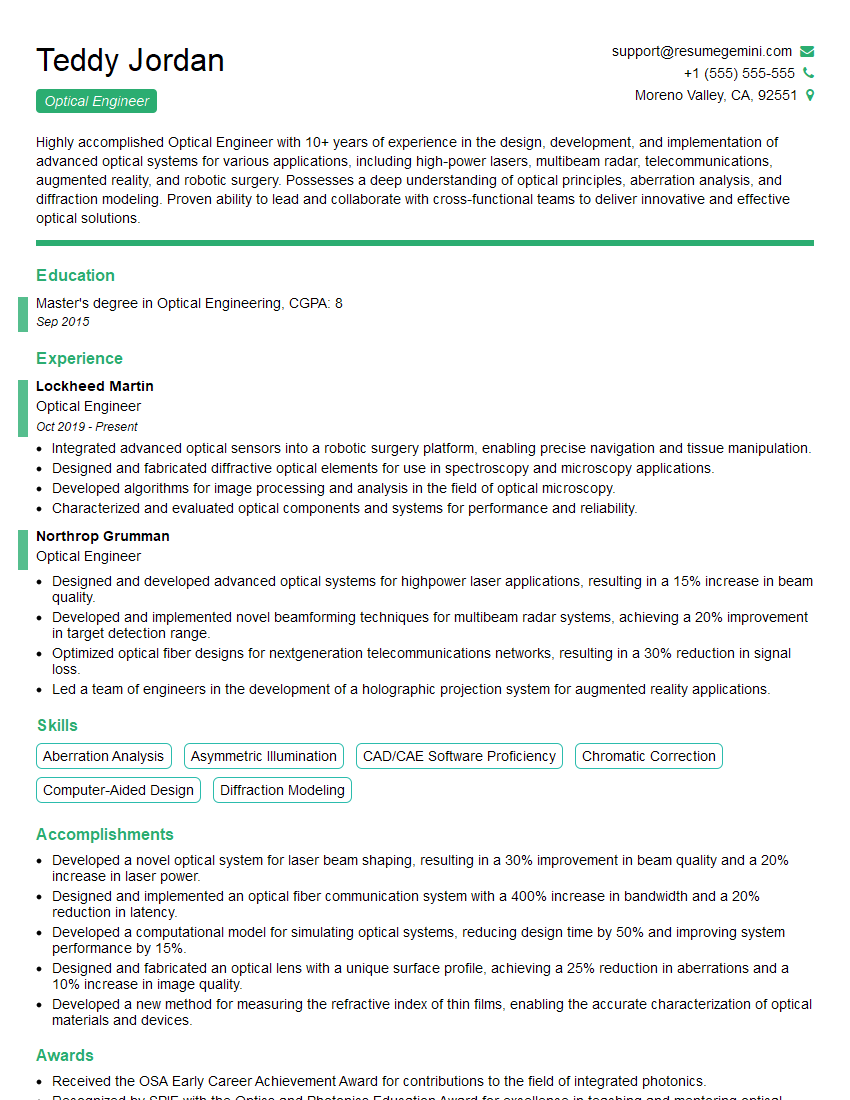

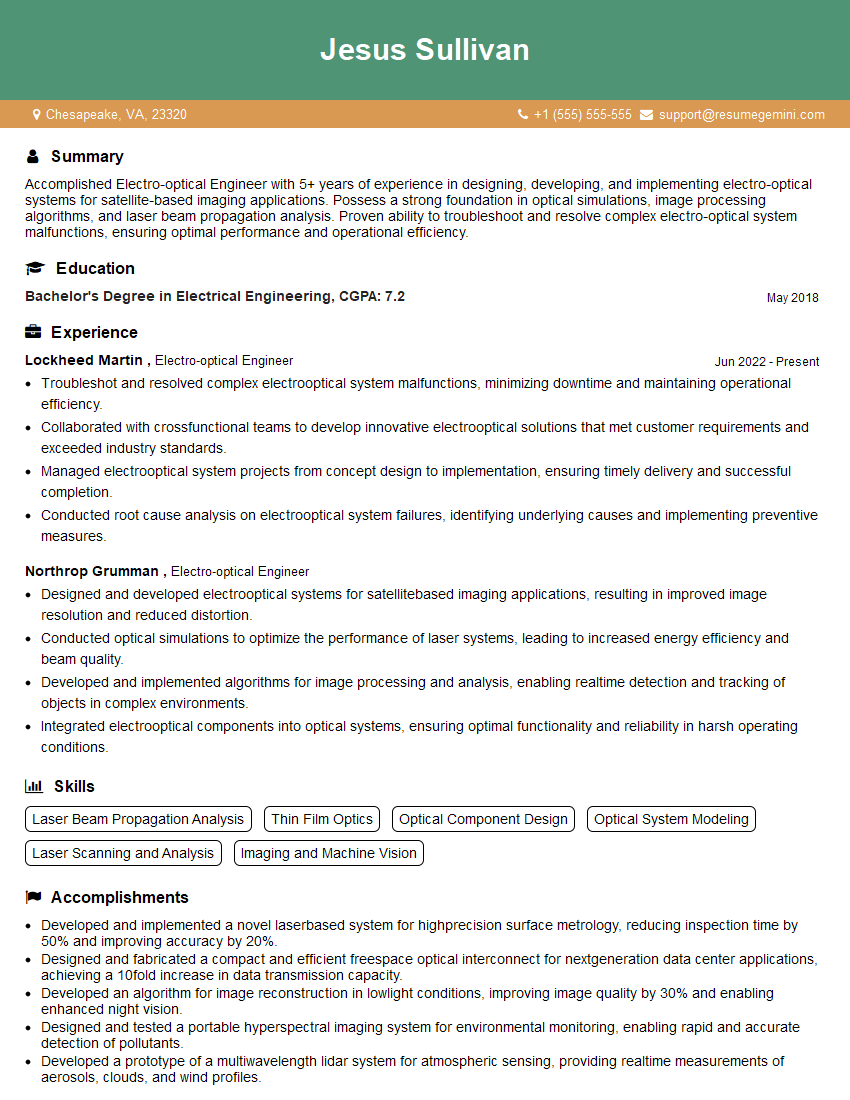

Mastering Electro-Optical and Infrared Image Analysis opens doors to exciting and rewarding careers in diverse fields. Developing a strong foundation in these skills significantly enhances your job prospects and allows you to contribute meaningfully to cutting-edge technologies. To increase your chances of landing your dream role, it’s essential to craft a compelling and ATS-friendly resume that showcases your expertise effectively. ResumeGemini is a trusted resource that can help you build a professional and impactful resume tailored to your specific skills and experience. We provide examples of resumes specifically designed for Electro-Optical and Infrared Image Analysis professionals to guide you through the process. Let ResumeGemini help you present your qualifications in the best possible light.
