Unlock your full potential by mastering the most common Machine Learning for Remote Sensing interview questions. This blog offers a deep dive into the critical topics, ensuring you’re not only prepared to answer but to excel. With these insights, you’ll approach your interview with clarity and confidence.

Questions Asked in Machine Learning for Remote Sensing Interview

Q 1. Explain the difference between supervised, unsupervised, and reinforcement learning in the context of remote sensing.

In remote sensing, machine learning algorithms are broadly categorized into supervised, unsupervised, and reinforcement learning, each differing in how they learn from data.

- Supervised learning uses labeled datasets, meaning each data point (e.g., a pixel in a satellite image) is tagged with its corresponding class (e.g., forest, water, urban). The algorithm learns to map inputs (image features) to outputs (class labels). Think of it like a teacher providing labeled examples to a student. A common example is classifying land cover types using labeled satellite imagery. Algorithms like Support Vector Machines (SVMs) and Random Forests are frequently employed.

- Unsupervised learning works with unlabeled data. The algorithm identifies patterns and structures within the data without prior knowledge of class labels. Imagine trying to group similar objects together without knowing their names beforehand. Clustering techniques like k-means are useful for grouping pixels with similar spectral signatures, potentially revealing hidden geological formations or vegetation types.

- Reinforcement learning involves an agent learning through trial and error by interacting with an environment. The agent receives rewards or penalties based on its actions, ultimately learning an optimal strategy. This is less common in direct remote sensing applications but could be used in tasks like optimizing satellite sensor parameters or autonomous navigation of aerial vehicles based on image data.

In essence, the choice of learning paradigm depends heavily on the availability of labeled data and the nature of the problem. Supervised learning is the most widely used for tasks with readily available labeled data, while unsupervised learning is valuable for exploratory analysis and discovering hidden patterns in unlabeled imagery.
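To make the contrast concrete, here is a minimal sketch on synthetic data. The two-band reflectance values, class means, and sample sizes are illustrative assumptions, not measurements from any real sensor. The supervised model is given labels, while k-means only sees the spectra.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(0)

# Hypothetical two-band (red, NIR) reflectance for two land cover classes.
water = rng.normal(loc=[0.05, 0.02], scale=0.01, size=(200, 2))
forest = rng.normal(loc=[0.04, 0.45], scale=0.03, size=(200, 2))
pixels = np.vstack([water, forest])
labels = np.array([0] * 200 + [1] * 200)  # 0 = water, 1 = forest

# Supervised: the classifier is given the labels during training.
clf = RandomForestClassifier(n_estimators=50, random_state=0)
clf.fit(pixels[::2], labels[::2])                # train on every other pixel
supervised_acc = clf.score(pixels[1::2], labels[1::2])

# Unsupervised: k-means sees only the spectra and returns arbitrary cluster ids.
cluster_ids = KMeans(n_clusters=2, n_init=10, random_state=0).fit_predict(pixels)

# Cluster ids carry no class names, so agreement is checked up to relabelling.
agreement = max(np.mean(cluster_ids == labels), np.mean(cluster_ids != labels))
```

Note that the clusters still have to be interpreted by an analyst: k-means can separate the two groups, but it cannot say which one is water.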

Q 2. Describe various pre-processing techniques for satellite imagery used in machine learning.

Pre-processing satellite imagery is crucial for improving the accuracy and efficiency of machine learning models. It involves several steps:

- Atmospheric Correction: Removing the atmospheric effects (scattering, absorption) to obtain true surface reflectance. This is critical as atmospheric conditions significantly alter pixel values.

- Geometric Correction: Correcting geometric distortions (caused by sensor orientation, Earth’s curvature, etc.) to ensure accurate spatial alignment. This often involves georeferencing the imagery to align it with a geographic coordinate system.

- Radiometric Calibration: Converting raw digital numbers (DNs) from the sensor into physically meaningful units like reflectance or radiance. This ensures consistent measurements across different images and sensors.

- Noise Reduction: Filtering out noise from the imagery caused by the sensor or atmospheric conditions. Techniques like median filtering or wavelet denoising are commonly used.

- Data Enhancement: Techniques like image sharpening or pansharpening improve image resolution and detail. Pansharpening combines a high-resolution panchromatic image with a lower-resolution multispectral image to create a high-resolution multispectral image.

- Data Standardization/Normalization: Rescaling pixel values, for example standardizing each band to zero mean and unit variance or min-max normalizing it to a fixed range, to improve the performance of some machine learning algorithms. This is especially important for algorithms sensitive to the scale of input features.

The specific pre-processing steps chosen will depend on the type of satellite imagery, the intended application, and the characteristics of the machine learning algorithm used.
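Two of these steps, radiometric calibration and standardization, can be sketched in a few lines of NumPy. The gain and offset below are placeholder values chosen for illustration; real coefficients come from the sensor's metadata, and the function names are hypothetical.

```python
import numpy as np

def dn_to_reflectance(dn, gain, offset):
    """Linear radiometric rescaling: reflectance = gain * DN + offset."""
    return gain * dn.astype(np.float64) + offset

def standardize_bands(image):
    """Scale each band of a (bands, rows, cols) array to zero mean, unit variance."""
    mean = image.mean(axis=(1, 2), keepdims=True)
    std = image.std(axis=(1, 2), keepdims=True)
    # Guard against constant bands, which would otherwise divide by zero.
    return (image - mean) / np.where(std == 0, 1.0, std)

rng = np.random.default_rng(0)
dn = rng.integers(0, 10000, size=(4, 64, 64))                # synthetic 4-band scene
reflectance = dn_to_reflectance(dn, gain=1e-4, offset=0.0)   # placeholder coefficients
scaled = standardize_bands(reflectance)
```

In practice the band statistics would be computed on the training data only and then reused for validation and test imagery.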

Q 3. What are some common challenges in applying deep learning to high-resolution remote sensing imagery?

Applying deep learning, especially CNNs, to high-resolution remote sensing imagery presents unique challenges:

- High computational cost: Processing high-resolution images requires significant computational resources, both in terms of memory and processing power. Training deep learning models on large high-resolution datasets can be extremely time-consuming.

- Data size and storage: High-resolution imagery generates massive datasets, requiring substantial storage capacity and efficient data management strategies. This often leads to challenges in data loading and processing speed.

- Overfitting: Deep learning models are prone to overfitting when trained on limited data, especially with high-resolution imagery where the number of features is very large. Regularization techniques and data augmentation are crucial to mitigate this.

- Interpretability: Deep learning models are often considered “black boxes,” making it difficult to understand their decision-making processes. This lack of interpretability can be problematic in some applications where understanding the reasons behind classifications is vital.

- Generalization: Ensuring a model trained on one high-resolution dataset generalizes well to other datasets acquired under different conditions (e.g., different seasons, different sensors) can be a significant challenge.

Addressing these challenges requires careful consideration of model architecture, training strategies, and pre-processing techniques. Techniques like transfer learning, data augmentation, and model compression can help mitigate some of these issues.

Q 4. How do you handle class imbalance in remote sensing datasets?

Class imbalance in remote sensing datasets, where certain classes have far fewer samples than others (e.g., urban areas vs. forests), is a major concern. It can lead to biased models that perform poorly on the minority classes. Here’s how to handle it:

- Data Augmentation: Artificially increasing the number of samples in minority classes by techniques like rotation, flipping, cropping, and adding noise to existing images.

- Resampling Techniques:

  - Oversampling: Duplicating samples from the minority class or creating synthetic samples using techniques like SMOTE (Synthetic Minority Over-sampling Technique).

  - Undersampling: Reducing the number of samples in the majority class.

- Cost-sensitive learning: Assigning different misclassification costs to different classes, penalizing errors on the minority class more heavily. This can be integrated into algorithms like Support Vector Machines (SVMs).

- Ensemble methods: Combining multiple models trained on different balanced subsets of the data. This can lead to more robust and accurate predictions.

- Anomaly detection techniques: If the minority class represents anomalies (e.g., detecting rare objects), anomaly detection algorithms might be more appropriate than traditional classification methods.

The best approach will depend on the specifics of the dataset and the chosen machine learning algorithm. Often, a combination of these techniques yields the best results.
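As a rough illustration of cost-sensitive weighting and random oversampling, the sketch below uses a synthetic label vector with a 95/5 split. The helper names are hypothetical. The weight formula, n_samples / (n_classes * class_count), is the same heuristic scikit-learn applies for `class_weight="balanced"`.

```python
import numpy as np

def balanced_class_weights(y):
    """Weight each class by n_samples / (n_classes * class_count)."""
    classes, counts = np.unique(y, return_counts=True)
    weights = len(y) / (len(classes) * counts)
    return dict(zip(classes.tolist(), weights.tolist()))

def random_oversample(X, y, rng):
    """Resample every class with replacement up to the majority class size."""
    classes, counts = np.unique(y, return_counts=True)
    target = counts.max()
    idx = np.concatenate([
        rng.choice(np.flatnonzero(y == c), size=target, replace=True)
        for c in classes
    ])
    return X[idx], y[idx]

rng = np.random.default_rng(0)
y = np.array([0] * 950 + [1] * 50)     # 0 = forest, 1 = urban (rare class)
X = rng.normal(size=(1000, 4))          # synthetic 4-band features
weights = balanced_class_weights(y)     # the rare class gets the larger weight
X_bal, y_bal = random_oversample(X, y, rng)
```

The resulting dictionary can be passed as `class_weight` to scikit-learn classifiers such as `RandomForestClassifier` or `SVC`. Oversampling should be applied to the training split only, so that duplicated samples do not leak into the test set.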

Q 5. Discuss different feature extraction techniques for remote sensing data.

Feature extraction is crucial for effectively using remote sensing data in machine learning. It involves deriving meaningful features from the raw pixel values. Common techniques include:

- Spectral indices: These are calculated from multiple spectral bands to highlight specific phenomena. Examples include the Normalized Difference Vegetation Index (NDVI) for vegetation analysis and the Normalized Difference Water Index (NDWI) for water detection.

  NDVI = (NIR - Red) / (NIR + Red)

- Texture features: These capture the spatial arrangement of pixels, providing information about surface roughness or heterogeneity. Grey-level co-occurrence matrices (GLCM) and Gabor filters are commonly used.

- Object-based image analysis (OBIA): Segmentation techniques group pixels into meaningful objects (e.g., buildings, trees) before extracting features from those objects. Features like shape, size, and texture are then used for classification.

- Wavelet transforms: Decomposing the image into different frequency components to reveal features at various scales. This can help separate noise from informative features.

- Principal Component Analysis (PCA): A dimensionality reduction technique that transforms the original spectral bands into uncorrelated principal components, highlighting the most significant variations in the data. This is often used to reduce the computational burden and improve classification accuracy.

The choice of feature extraction technique depends on the specific application and the type of features that are most relevant to the task.
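The spectral indices mentioned above are simple band arithmetic. The sketch below computes NDVI and NDWI on a tiny hand-made array. The reflectance values are invented for illustration, and NDWI is assumed here to be the green/NIR variant, (Green - NIR) / (Green + NIR).

```python
import numpy as np

def normalized_difference(a, b):
    """Return (a - b) / (a + b), with 0 where the denominator is 0."""
    a = a.astype(np.float64)
    b = b.astype(np.float64)
    denom = a + b
    out = np.zeros_like(denom)
    np.divide(a - b, denom, out=out, where=denom != 0)
    return out

# Illustrative 2x2 reflectance patches (the last pixel is a no-data zero).
green = np.array([[0.08, 0.10], [0.06, 0.00]])
red = np.array([[0.05, 0.20], [0.04, 0.00]])
nir = np.array([[0.45, 0.25], [0.02, 0.00]])

ndvi = normalized_difference(nir, red)     # high for dense vegetation
ndwi = normalized_difference(green, nir)   # high for open water
```

The zero-denominator guard matters in practice because masked or no-data pixels are often stored as zeros in every band.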

Q 6. Explain the concept of transfer learning and its application in remote sensing.

Transfer learning leverages knowledge learned from one task to improve performance on a related but different task. In remote sensing, this is invaluable because training deep learning models from scratch can be computationally expensive and require vast datasets.

How it works: A pre-trained model (e.g., a CNN trained on a massive image dataset like ImageNet) is fine-tuned on a smaller remote sensing dataset. The pre-trained model’s initial weights are used as a starting point, reducing training time and improving generalization. The final layers of the network are often adjusted to adapt to the specific characteristics of the remote sensing data.

Applications:

- Land cover classification: A model pre-trained on natural images can be adapted for classifying land cover types in satellite imagery.

- Object detection: A model trained on generic object detection can be fine-tuned to detect specific objects like buildings or vehicles in aerial imagery.

- Change detection: Models trained on image classification can be adapted to identify changes over time by using images from different time points as input.

Transfer learning significantly reduces the need for large, labeled datasets for remote sensing applications, making deep learning more accessible and practical.

Q 7. What are the advantages and disadvantages of using convolutional neural networks (CNNs) for remote sensing image classification?

Convolutional Neural Networks (CNNs) are powerful tools for remote sensing image classification, but they have both advantages and disadvantages:

- Advantages:

  - Automatic feature extraction: CNNs automatically learn relevant features from the raw image data, eliminating the need for manual feature engineering.

  - High accuracy: CNNs have demonstrated state-of-the-art accuracy in various remote sensing tasks.

  - Handling spatial context: CNNs excel at capturing spatial relationships between pixels, crucial for understanding contextual information in remote sensing imagery.

- Disadvantages:

  - Computational cost: Training deep CNNs can be computationally expensive, requiring significant resources and time.

  - Data requirements: CNNs often require large, labeled datasets for optimal performance.

  - Black box nature: Understanding the reasoning behind CNN predictions can be challenging, impacting interpretability.

  - Sensitivity to hyperparameters: The performance of CNNs is sensitive to the choice of hyperparameters (e.g., network architecture, learning rate), requiring careful tuning.

Despite the disadvantages, the advantages of automatic feature extraction and high accuracy make CNNs a dominant choice in many remote sensing applications. Strategies such as transfer learning and efficient model architectures are continually being developed to mitigate the computational and data requirements.

Q 8. How do you evaluate the performance of a machine learning model for remote sensing applications?

Evaluating the performance of a machine learning model in remote sensing hinges on selecting appropriate metrics that align with the specific application. We generally avoid relying on a single metric, instead employing a suite of measures to obtain a holistic view.

For classification tasks (e.g., land cover classification), common metrics include:

- Accuracy: The overall proportion of correctly classified pixels.

- Precision: The ratio of correctly predicted positive observations to the total predicted positive observations (indicates how trustworthy a predicted class label is).

- Recall (Sensitivity): The ratio of correctly predicted positive observations to the total actual positive observations (indicates how well the model identifies all instances of a class).

- F1-score: The harmonic mean of precision and recall, providing a balanced measure.

- Confusion Matrix: A visual representation showing the counts of true positives, true negatives, false positives, and false negatives for each class, providing a detailed breakdown of model performance.

For regression tasks (e.g., predicting crop yield), we use metrics like:

- Root Mean Squared Error (RMSE): The square root of the mean squared difference between predicted and actual values. It is expressed in the units of the target variable and penalizes large errors more heavily than small ones.

- R-squared (Coefficient of Determination): Indicates the proportion of variance in the dependent variable that is predictable from the independent variables (measures model fit).

- Mean Absolute Error (MAE): Represents the average absolute difference between predicted and actual values, less sensitive to outliers than RMSE.

Beyond these, we often use techniques like k-fold cross-validation to assess robustness and estimate how well the model generalizes to unseen data, which helps reveal overfitting to the training dataset. Visual inspection of the model’s output, for example by overlaying the classification map on satellite imagery, is also crucial for detecting systematic errors or biases. In a land cover classification project, a visual check can help identify areas where the model consistently misclassifies certain features, revealing potential issues with data quality or model selection.
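For the regression metrics, a small worked example shows why RMSE and MAE are usually reported together. The yield values below are made up for illustration, and `regression_metrics` is a hypothetical helper, not a library function.

```python
import numpy as np

def regression_metrics(y_true, y_pred):
    """Return (RMSE, MAE, R-squared) for two equal-length sequences."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    err = y_pred - y_true
    rmse = np.sqrt(np.mean(err ** 2))
    mae = np.mean(np.abs(err))
    ss_res = np.sum(err ** 2)                          # residual sum of squares
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)     # total sum of squares
    r2 = 1.0 - ss_res / ss_tot
    return rmse, mae, r2

y_true = [3.0, 4.0, 5.0, 6.0]                          # e.g. crop yield in t/ha
clean = regression_metrics(y_true, [3.5, 3.5, 5.5, 5.5])
outlier = regression_metrics(y_true, [3.5, 3.5, 5.5, 10.0])
```

With every error equal to 0.5, RMSE and MAE coincide. Once a single prediction is off by 4.0, RMSE rises faster than MAE, which is the outlier sensitivity described above.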

Q 9. Describe different types of remote sensing data (e.g., LiDAR, hyperspectral, multispectral) and their applications.

Remote sensing data comes in various forms, each offering unique characteristics and applications. Let’s explore a few key types:

- Multispectral Imagery: Captures data in several spectral bands, usually in the visible and near-infrared (NIR) regions. Examples include Landsat and Sentinel-2 data. Applications range from land cover classification and vegetation monitoring to urban planning and disaster response. Imagine using multispectral data to distinguish between different types of vegetation, like healthy crops versus stressed crops, based on their unique spectral signatures. This allows for precision agriculture practices like targeted irrigation.

- Hyperspectral Imagery: Collects hundreds of narrow, contiguous spectral bands, providing extremely detailed spectral information. Applications involve highly accurate material identification, mineral exploration, and precision agriculture. For example, identifying specific types of vegetation disease or assessing the mineral composition of rocks would benefit from the rich data provided by hyperspectral sensors.

- LiDAR (Light Detection and Ranging): Uses laser pulses to measure distances, creating highly accurate 3D point clouds. Applications include creating digital elevation models (DEMs), mapping terrain, and assessing forest structure. Think of using LiDAR to accurately map the heights of trees in a forest for assessing forest biomass or creating precise 3D models for urban planning.

Each data type has strengths and weaknesses. Multispectral data is widely available and relatively easy to process, while hyperspectral data offers greater detail but is often more challenging to manage due to high dimensionality. LiDAR provides excellent 3D information but can be expensive and its use may be limited by weather conditions.

Q 10. Explain the concept of object-based image analysis (OBIA) and its relationship to machine learning.

Object-based image analysis (OBIA) is a powerful approach to image interpretation that focuses on analyzing image objects rather than individual pixels. Unlike pixel-based methods that treat each pixel independently, OBIA segments the image into meaningful objects (e.g., buildings, trees, fields) based on spectral and spatial characteristics. These objects are then analyzed and classified using various features and machine learning algorithms.

The relationship between OBIA and machine learning is synergistic. Machine learning algorithms are often used within the OBIA workflow to:

- Image Segmentation: Algorithms like k-means clustering or region growing can be used to segment the image into objects.

- Object Classification: Machine learning classifiers (e.g., support vector machines, random forests) are applied to classify objects based on features extracted from the objects, such as spectral values, shape, texture, and context.

- Feature Extraction: Machine learning can help identify the most relevant features for object classification, increasing the accuracy and efficiency of the analysis.

For example, in urban planning, OBIA combined with machine learning can be used to automatically identify buildings, roads, and green spaces from aerial imagery. This allows for efficient and accurate mapping of urban areas, supporting better planning and management. The features extracted from each segmented object, such as area, perimeter, and spectral properties, are used as inputs to a machine learning model that learns to distinguish between different urban land cover types.
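The step from segments to per-object features can be sketched as follows. This assumes segmentation has already produced an integer label image; the tiny arrays and the `object_features` helper are hypothetical.

```python
import numpy as np

def object_features(band, segments):
    """Return per-segment area (pixel count) and mean band value."""
    ids = segments.ravel()
    area = np.bincount(ids)
    total = np.bincount(ids, weights=band.ravel())
    mean = total / np.maximum(area, 1)   # avoid division by zero for unused ids
    return area, mean

# Label image from a prior segmentation step: three objects with ids 0, 1, 2.
segments = np.array([[0, 0, 1],
                     [0, 1, 1],
                     [2, 2, 2]])
nir = np.array([[0.1, 0.1, 0.5],
                [0.1, 0.5, 0.5],
                [0.3, 0.3, 0.6]])

area, mean_nir = object_features(nir, segments)
# One feature row per object, ready to feed to a classifier.
features = np.column_stack([area, mean_nir])
```

Each row of `features` describes one object rather than one pixel, which is what the classifier in an OBIA workflow is trained on.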

Q 11. How do you address the issue of noisy or missing data in remote sensing datasets?

Noisy or missing data is a common challenge in remote sensing. Addressing this requires a multi-pronged approach:

- Data Preprocessing: This is the first line of defense, and it involves techniques to reduce noise and fill in missing data before applying machine learning models.

  - Noise Reduction Techniques: Filters like median filters or Gaussian filters can smooth out noise. More advanced techniques, such as wavelet transforms, can remove noise while preserving important image details.

  - Missing Data Imputation: Several methods can be used to fill in missing data. Simple methods include replacing missing values with the mean or median of the surrounding pixels. More sophisticated methods include using machine learning models to predict the missing values based on available data. K-Nearest Neighbors (KNN) imputation is a common choice.

- Robust Algorithms: Some machine learning algorithms are inherently more robust to noise and outliers than others. For example, Random Forest is known for its ability to handle noisy data better than some other algorithms. Selecting an appropriate model is essential.

For example, if cloud cover obscures portions of a satellite image, imputation techniques can estimate the missing land cover values based on the surrounding clear areas. This would involve carefully assessing the spatial context and spectral relationships to ensure realistic filling in of missing data. The use of robust algorithms further minimizes the impact of any remaining uncertainty introduced by data gaps.
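The simplest imputation mentioned above, filling a gap with the mean of surrounding pixels, might look like this in NumPy. It assumes missing pixels are marked as NaN (for example after cloud masking). The function name and the 3x3 window are illustrative choices.

```python
import numpy as np

def fill_gaps_with_neighbor_mean(band, max_iter=10):
    """Fill NaN pixels with the mean of their valid 3x3 neighbours, iteratively."""
    filled = band.astype(np.float64).copy()
    rows, cols = filled.shape
    for _ in range(max_iter):
        missing = np.isnan(filled)
        if not missing.any():
            break
        padded = np.pad(filled, 1, constant_values=np.nan)
        # Stack the nine shifted views that make up each pixel's 3x3 window.
        windows = np.stack([
            padded[r:r + rows, c:c + cols]
            for r in range(3) for c in range(3)
        ])
        valid = ~np.isnan(windows)
        counts = valid.sum(axis=0)
        sums = np.where(valid, windows, 0.0).sum(axis=0)
        # Only fill pixels that have at least one valid neighbour this pass.
        fillable = missing & (counts > 0)
        filled[fillable] = sums[fillable] / counts[fillable]
    return filled

band = np.array([[1.0, 1.0, 1.0],
                 [1.0, np.nan, 1.0],
                 [1.0, 1.0, 3.0]])
filled = fill_gaps_with_neighbor_mean(band)
```

Repeating the pass lets larger gaps fill inward from their edges, although values far from any valid pixel become increasingly uncertain and are better handled with multi-temporal data where available.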

Q 12. Describe different techniques for change detection using remote sensing and machine learning.

Change detection using remote sensing and machine learning involves identifying differences between images acquired at different times. Several techniques exist:

- Image Differencing: A simple approach where the difference between corresponding pixels in two images is calculated. Significant differences indicate change.

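- Image Ratioing: The ratio of corresponding pixel values in the same band of two images is calculated. Ratios close to 1 indicate little change, while ratios far from 1 indicate change. Compared with differencing, ratioing can be less affected by multiplicative effects such as illumination differences between acquisition dates.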

- Post-Classification Comparison: Each image is independently classified using machine learning, and the resulting classification maps are compared to identify changes in land cover or other features. This can be done visually or through quantitative comparison.

- Machine Learning-based Change Detection: More sophisticated methods train machine learning models to directly detect change from the images using combined features and temporal information from both images.

For example, in monitoring deforestation, image differencing or ratioing could highlight areas where forest cover has been lost. Post-classification comparison, with land-cover types such as ‘forest’ and ‘non-forest’ identified for each time period, allows for more accurate detection and analysis of the extent of deforestation. Finally, machine learning models can be trained on a dataset of labeled changes, allowing more robust and accurate change detection across various scenarios and potentially reducing misclassifications.
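Image differencing with a statistical threshold can be sketched as below. The NDVI rasters are synthetic, and the choice of flagging pixels more than `k` standard deviations from the mean difference is one common heuristic, used here as an assumption rather than a recommended setting.

```python
import numpy as np

def difference_change_mask(before, after, k=2.0):
    """Flag pixels whose difference deviates from the mean by more than k std."""
    diff = after.astype(np.float64) - before.astype(np.float64)
    threshold = k * diff.std()
    return np.abs(diff - diff.mean()) > threshold

rng = np.random.default_rng(0)
before = 0.6 + rng.normal(0, 0.01, size=(50, 50))     # synthetic NDVI at time 1
after = before + rng.normal(0, 0.01, size=(50, 50))   # time 2, mostly unchanged
after[10:15, 10:15] -= 0.4                            # simulated forest clearing

mask = difference_change_mask(before, after)
changed_pixels = int(mask.sum())
```

Both images are assumed to be co-registered and radiometrically comparable; without that, differences in geometry or illumination show up as false change.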

Q 13. Explain the role of cloud computing in processing large remote sensing datasets.

Cloud computing is crucial for processing large remote sensing datasets due to the sheer volume and complexity of the data. Traditional computing resources often lack the processing power and storage capacity needed to handle such datasets efficiently. Cloud platforms offer several advantages:

- Scalability: Cloud computing resources can be scaled up or down on demand, making it easy to handle datasets of varying sizes.

- Storage: Cloud storage provides ample space for storing and accessing large datasets.

- Parallel Processing: Cloud computing enables parallel processing of remote sensing data, significantly reducing processing time.

- Cost-effectiveness: Cloud computing can be more cost-effective than purchasing and maintaining on-site hardware.

- Accessibility: Cloud platforms allow access to data and processing resources from anywhere with an internet connection.

For example, processing a large collection of high-resolution satellite images for a nationwide land cover classification task would be extremely computationally intensive. Utilizing a cloud platform like AWS or Google Cloud Platform allows for distributing the processing across multiple virtual machines, significantly accelerating the analysis and making the project feasible in a reasonable timeframe. The ability to scale up processing power during peak periods, and scale down when not in use, improves efficiency and cost control.

Q 14. What are some ethical considerations when using machine learning in remote sensing applications?

Ethical considerations are paramount when using machine learning in remote sensing. Several key issues need careful attention:

- Bias and Fairness: Machine learning models can inherit biases present in the training data, potentially leading to unfair or discriminatory outcomes. For example, a model trained on data that underrepresents certain demographics might produce inaccurate or biased results for those groups. Rigorous data validation and the use of techniques to mitigate bias are essential.

- Privacy: Remote sensing data can potentially reveal sensitive information about individuals or communities. Measures must be taken to protect privacy, such as anonymizing data or using differential privacy techniques.

- Transparency and Explainability: It’s important to understand how machine learning models make predictions, especially in high-stakes applications. Explainable AI (XAI) techniques can help to make models more transparent and accountable.

- Environmental Impact: The energy consumption associated with training and deploying large machine learning models can be substantial. Strategies to minimize energy use are needed to ensure the responsible use of resources.

- Misuse of Technology: Remote sensing data and machine learning can be misused for surveillance, discrimination, or other harmful purposes. Ethical guidelines and regulations are essential to mitigate these risks.

For instance, if using remote sensing data to assess poverty levels, careful consideration is required to prevent perpetuation of existing societal biases. Ensuring the data used accurately reflects the reality of all groups involved, and validating model outputs for potential bias, are crucial steps in mitigating ethical issues. Open communication about the limitations and potential biases of the model’s results is vital to responsible implementation.

Q 15. How would you approach the problem of land cover classification using machine learning?

Land cover classification using machine learning involves training a model to assign predefined classes (e.g., forest, water, urban) to pixels in a remote sensing image. This is typically a supervised learning problem, meaning we need labeled data – images where the land cover for each pixel is already known. My approach would be systematic:

- Data Acquisition and Preprocessing: This crucial first step involves gathering high-quality remote sensing data (e.g., Landsat, Sentinel) relevant to the study area. Preprocessing includes atmospheric correction to remove atmospheric effects, geometric correction to align the image to a map projection, and potentially cloud masking to remove unwanted cloud cover. I would carefully select appropriate spectral bands based on the land cover classes of interest.

- Feature Engineering: Raw pixel values might not be the best features. I’d explore techniques like deriving spectral indices (NDVI, NDWI) for vegetation and water, textural features (GLCM), or even incorporating topographic data. The choice depends heavily on the specific land cover types and the available data.

- Algorithm Selection: I would evaluate several machine learning algorithms like Support Vector Machines (SVMs), Random Forests, or Convolutional Neural Networks (CNNs). The choice depends on factors such as the size of the dataset, the complexity of the land cover types, and computational resources. CNNs, particularly, are very effective for capturing spatial context within images.

- Model Training and Validation: I’d split the labeled data into training, validation, and testing sets. The training set is used to train the model, the validation set to tune hyperparameters and prevent overfitting, and the testing set to evaluate the final model’s performance on unseen data. Cross-validation techniques would ensure robustness.

- Model Evaluation and Refinement: Based on performance metrics (discussed later), I’d iterate on the above steps, refining the preprocessing, feature engineering, and model parameters to optimize accuracy. This iterative approach is critical to achieve high performance.

For example, in a project classifying agricultural land, I used a Random Forest classifier with spectral indices like NDVI and NDWI as features, achieving an overall accuracy of over 90%.
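The training, validation, and testing split described above could look roughly like this with scikit-learn. The features are synthetic NDVI/NDWI pairs with invented class centers, and the small hyperparameter grid is only a placeholder.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)

# Synthetic [NDVI, NDWI] features for three classes: forest, water, urban.
centers = np.array([[0.7, -0.6], [-0.1, 0.5], [0.1, -0.1]])
y = rng.integers(0, 3, size=900)
X = centers[y] + rng.normal(0, 0.08, size=(900, 2))

# 60% train, 20% validation, 20% test, stratified by class.
X_trainval, X_test, y_trainval, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X_trainval, y_trainval, test_size=0.25, stratify=y_trainval, random_state=0)

# Tune one hyperparameter on the validation set only.
best_score, best_model = -1.0, None
for n_estimators in (10, 50, 100):
    model = RandomForestClassifier(n_estimators=n_estimators, random_state=0)
    model.fit(X_train, y_train)
    score = accuracy_score(y_val, model.predict(X_val))
    if score > best_score:
        best_score, best_model = score, model

# The test set is touched once, after model selection is finished.
test_accuracy = accuracy_score(y_test, best_model.predict(X_test))
```

With real imagery, splitting by spatial block or by scene rather than by random pixel is usually safer, because neighboring pixels are highly correlated and a random split can inflate the test accuracy.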

Q 16. Describe your experience with different machine learning libraries (e.g., TensorFlow, PyTorch, scikit-learn).

I have extensive experience with TensorFlow, PyTorch, and scikit-learn, each suited for different tasks in remote sensing. Scikit-learn is excellent for simpler algorithms like SVMs and Random Forests, offering a user-friendly interface and quick prototyping capabilities. For example, I’ve used scikit-learn for preliminary analysis and model comparison before deploying more complex methods. TensorFlow and PyTorch are essential when dealing with CNNs for image classification. TensorFlow’s production-ready capabilities and extensive community support are valuable for large-scale projects and deployment. PyTorch’s dynamic computational graph and ease of debugging make it preferable for research and complex model development. I’ve recently used PyTorch to build a U-Net architecture for high-resolution building detection, leveraging its flexibility for advanced network designs.

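# Example scikit-learn code snippet (Random Forest).
# X_train, y_train, and X_test are assumed to be existing arrays of
# per-pixel features and class labels.

from sklearn.ensemble import RandomForestClassifier

# A fixed random_state keeps results reproducible between runs.
rf = RandomForestClassifier(n_estimators=100, random_state=0)

rf.fit(X_train, y_train)

y_pred = rf.predict(X_test)

Q 17. How do you handle spatial autocorrelation in remote sensing data?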

Spatial autocorrelation refers to the tendency of nearby pixels to have similar values in remote sensing data. Ignoring this can lead to biased and inaccurate model estimates. Several strategies address this:

- Geographically Weighted Regression (GWR): This technique allows the model parameters to vary spatially, accounting for local variations in relationships between variables.

- Spatial Lag or Spatial Error Models: These statistical models explicitly incorporate spatial autocorrelation into the model structure, improving accuracy and reducing bias.

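- Spatial Filtering: Techniques like moving averages or median filters can suppress pixel-level noise. Note that smoothing tends to increase, rather than remove, spatial autocorrelation, so filtering is better treated as a noise-reduction step than as a correction for spatial dependence.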

- Sampling Strategies: Employing stratified sampling or spatially balanced sampling can help reduce the effect of spatial autocorrelation by ensuring a more representative sample of the study area.

For instance, when classifying forest types, I used a spatial error model to account for the clustering of similar forest types. This improved accuracy significantly compared to a standard model that ignored spatial dependencies.
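Before choosing a strategy it helps to quantify how strong the autocorrelation is. A common statistic is Moran's I. The sketch below computes it for a raster using rook (edge-sharing) neighbors with equal weights, which is one assumption among several possible neighborhood definitions.

```python
import numpy as np

def morans_i(grid):
    """Moran's I for a 2D raster with binary rook-contiguity weights."""
    z = grid.astype(np.float64) - grid.mean()
    # Products of deviations for horizontally and vertically adjacent pixels.
    horiz = (z[:, :-1] * z[:, 1:]).sum()
    vert = (z[:-1, :] * z[1:, :]).sum()
    n_pairs = z[:, :-1].size + z[:-1, :].size
    # Each pair appears twice in the symmetric weight matrix, so the
    # factor of 2 cancels between the numerator and the weight sum.
    return (z.size / n_pairs) * (horiz + vert) / (z ** 2).sum()

gradient = np.tile(np.arange(8), (8, 1))            # smooth east-west trend
checker = np.indices((4, 4)).sum(axis=0) % 2 * 2 - 1  # alternating -1 / +1

i_gradient = morans_i(gradient)   # strongly positive
i_checker = morans_i(checker)     # perfectly negative
```

Values near +1 mean neighboring pixels are similar, values near 0 suggest no spatial structure, and negative values mean neighbors tend to differ. Computing the statistic on model residuals is a quick check of whether spatial dependence remains unexplained.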

Q 18. Explain different methods for dealing with the curse of dimensionality in remote sensing data.

The curse of dimensionality refers to the challenges posed by high-dimensional data, common in remote sensing with numerous spectral bands and features. Methods to address this include:

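- Feature Selection: Keeping only the most relevant original bands or features, using techniques like recursive feature elimination or feature importance scores from tree-based models. Principal Component Analysis (PCA) is often listed alongside these methods, but strictly it is a transformation that builds new components rather than selecting existing features; it reduces dimensionality while retaining most of the variance in the data.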

- Feature Extraction: Creating new, more informative features from existing ones, such as spectral indices or textural features. This often reduces dimensionality while improving model performance.

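- Dimensionality Reduction Techniques: Besides PCA, techniques like t-SNE (t-distributed Stochastic Neighbor Embedding) can reduce dimensionality while preserving local neighborhood structure in feature space. t-SNE is mainly used for visualizing high-dimensional data in two or three dimensions, since the standard algorithm does not provide a mapping that can be applied directly to new samples.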

- Regularization Techniques: Methods like L1 or L2 regularization in models like SVMs or linear regression can penalize complex models with many features, preventing overfitting and implicitly performing feature selection.

In a project involving hyperspectral imagery, I successfully used PCA to reduce the dimensionality from hundreds of bands to a few principal components, which then served as inputs for a Random Forest classifier, significantly improving computational efficiency without sacrificing accuracy.
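A PCA reduction of this kind can be sketched with scikit-learn. The cube below is synthetic: 100 bands generated from three hypothetical endmember spectra plus a little noise, so most of the variance should sit in the first few components.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(0)
rows, cols, bands = 20, 20, 100

# Synthetic cube: three underlying materials mixed per pixel, plus small noise.
endmembers = rng.random((3, bands))
abundances = rng.random((rows * cols, 3))
pixels = abundances @ endmembers + rng.normal(0, 0.001, size=(rows * cols, bands))

# PCA expects samples as rows, so the cube is handled as (pixels, bands).
pca = PCA(n_components=5)
components = pca.fit_transform(pixels)                 # shape (400, 5)
explained = pca.explained_variance_ratio_.cumsum()     # cumulative variance

# Restore the spatial layout so the result can be used like an image.
reduced_cube = components.reshape(rows, cols, 5)
```

Inspecting `explained` is a simple way to decide how many components to keep. As with other transforms, the PCA should be fitted on training pixels and then applied unchanged to held-out data.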

Q 19. What are some common performance metrics used in remote sensing applications?

Common performance metrics in remote sensing include:

- Overall Accuracy: The percentage of correctly classified pixels.

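- Producer’s Accuracy and User’s Accuracy: Producer’s accuracy is the probability that a reference pixel belonging to a certain class is correctly classified as such (equivalent to recall; its complement is the omission error). User’s accuracy is the probability that a pixel classified as a certain class actually belongs to that class (equivalent to precision; its complement is the commission error).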

- Kappa Coefficient: Measures the agreement between the classified image and the reference data, accounting for chance agreement.

- F1-Score: The harmonic mean of precision and recall, useful when dealing with imbalanced classes.

- Confusion Matrix: Provides a detailed breakdown of the classification results, showing the counts of correctly and incorrectly classified pixels for each class.

The choice of metric depends on the specific application and the relative importance of different error types. For instance, in a land cover classification focusing on urban areas, user’s accuracy for the urban class would be particularly important.
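The metrics listed above can all be derived from the confusion matrix. The following is a minimal sketch, assuming rows hold the reference classes and columns hold the mapped classes (some tools use the transposed layout) and that every class appears at least once in both. The two-class matrix is made up for illustration.

```python
def accuracy_metrics(cm):
    """cm[i][j] = number of pixels with reference class i that were mapped as class j."""
    n = len(cm)
    total = sum(sum(row) for row in cm)
    row_totals = [sum(cm[i]) for i in range(n)]                       # reference totals
    col_totals = [sum(cm[i][j] for i in range(n)) for j in range(n)]  # map totals
    diag = [cm[i][i] for i in range(n)]

    overall = sum(diag) / total
    producers = [diag[i] / row_totals[i] for i in range(n)]  # recall per class
    users = [diag[j] / col_totals[j] for j in range(n)]      # precision per class

    # Agreement expected by chance, from the row and column marginals
    expected = sum(row_totals[i] * col_totals[i] for i in range(n)) / total ** 2
    kappa = (overall - expected) / (1 - expected)
    return overall, producers, users, kappa


# Hypothetical counts: class 0 = urban, class 1 = non-urban
cm = [
    [40, 10],
    [20, 30],
]
overall, producers, users, kappa = accuracy_metrics(cm)
print(f"overall={overall:.2f} kappa={kappa:.2f}")
print("producer's:", [round(p, 2) for p in producers])
print("user's:", [round(u, 2) for u in users])
```

In this example 80% of the true urban pixels are found (producer’s accuracy), but only about 67% of the pixels mapped as urban really are urban (user’s accuracy), which is the figure a map user would care about.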

Q 20. Discuss the impact of different spatial resolutions on the performance of machine learning models.

Spatial resolution significantly impacts machine learning model performance. Higher resolution imagery provides finer details, potentially improving classification accuracy, particularly for smaller objects. However, this comes at the cost of increased data volume and computational demands. Conversely, coarser resolution data can be computationally efficient, but might lead to loss of detail and reduced classification accuracy.

Imagine trying to classify individual trees: a high-resolution image (e.g., 0.5m) will allow accurate identification of individual tree crowns, while a low-resolution image (e.g., 30m) might only show aggregate forest cover, potentially leading to misclassification. The optimal resolution depends on the objects of interest and the scale of the analysis. I’ve found that using a multi-resolution approach, where models are trained on different resolution datasets and their outputs are fused, can often yield optimal performance.
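The loss of detail at coarser resolution can be illustrated with a toy example. This sketch averages non-overlapping blocks of a fine grid to mimic a coarser sensor; it assumes the grid dimensions are divisible by the aggregation factor, and the 4x4 grid with a single "tree crown" pixel is hypothetical.

```python
def aggregate(grid, factor):
    """Average non-overlapping factor x factor blocks to mimic a coarser pixel size."""
    rows, cols = len(grid), len(grid[0])
    coarse = []
    for r in range(0, rows, factor):
        coarse_row = []
        for c in range(0, cols, factor):
            block = [
                grid[i][j]
                for i in range(r, r + factor)
                for j in range(c, c + factor)
            ]
            coarse_row.append(sum(block) / len(block))
        coarse.append(coarse_row)
    return coarse


# Fine grid: 1 = tree crown, 0 = background
fine = [
    [0, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]

coarse = aggregate(fine, 2)       # pixels twice as large
very_coarse = aggregate(fine, 4)  # one pixel covering the whole scene
print(coarse)
print(very_coarse)
```

At the coarser scales the tree no longer occupies a pixel of its own; it survives only as a fractional contribution to a mixed pixel, which is why per-object tasks degrade as resolution drops.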

Q 21. How would you approach a problem of detecting specific objects (e.g., buildings, roads) in satellite imagery?

Detecting specific objects like buildings or roads often requires object detection techniques rather than simple pixel-wise classification. My approach would involve:

- Data Preparation: Similar to land cover classification, preprocessing is crucial. However, a focus should be on enhancing edge detection and contrast to better delineate object boundaries.

- Algorithm Selection: Convolutional Neural Networks (CNNs) designed for object detection, such as Faster R-CNN, YOLO (You Only Look Once), or SSD (Single Shot MultiBox Detector), are highly effective. These models directly output bounding boxes around detected objects and their corresponding class labels.

- Data Augmentation: To improve model robustness and generalization, I’d use data augmentation techniques such as image rotation, scaling, and flipping. This is especially important when dealing with limited training data.

- Model Training and Evaluation: Similar to classification, training would involve a split into training, validation, and testing sets. Evaluation would focus on metrics like mean Average Precision (mAP), which computes the Average Precision (the area under the precision-recall curve at a given IoU threshold) for each object class and then averages it across all classes.

- Post-processing: Often, post-processing steps like non-maximum suppression (NMS) are needed to refine the detected objects, removing redundant or overlapping bounding boxes.

For example, in a project to detect buildings in high-resolution satellite imagery, I used a Faster R-CNN model, achieving high mAP scores and accurate localization of buildings within the images. The choice of the model depends on the computational resources available and the need for speed versus accuracy – YOLO is faster, while Faster R-CNN tends to be more accurate.
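The post-processing step in the list above can be sketched in a few lines. This is a minimal, greedy version of non-maximum suppression for a single class, written in plain Python for readability; detection frameworks ship their own optimized implementations. The boxes, scores, and the 0.5 IoU threshold are illustrative assumptions.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(detections, iou_threshold=0.5):
    """Greedy NMS over (box, score) pairs that all belong to one class."""
    remaining = sorted(detections, key=lambda d: d[1], reverse=True)
    kept = []
    while remaining:
        best = remaining.pop(0)  # highest-scoring box still in play
        kept.append(best)
        # Drop every box that overlaps the kept one too strongly
        remaining = [d for d in remaining if iou(best[0], d[0]) <= iou_threshold]
    return kept


# Hypothetical building detections: (box, confidence)
detections = [
    ((10, 10, 50, 50), 0.90),
    ((12, 12, 52, 52), 0.75),      # near-duplicate of the first box
    ((100, 100, 140, 140), 0.80),  # a separate building
]

kept = nms(detections)
for box, score in kept:
    print(box, score)
```

The near-duplicate box overlaps the top-scoring one with an IoU of roughly 0.82, so it is suppressed, while the distant box is kept.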

Q 22. Explain your experience with different types of deep learning architectures (e.g., CNNs, RNNs, transformers).

My experience with deep learning architectures for remote sensing is extensive, encompassing Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), and Transformers.

CNNs are my workhorse for image-based remote sensing tasks. Their convolutional layers excel at extracting spatial features from satellite imagery, crucial for tasks like land cover classification and object detection. For instance, I’ve used a U-Net architecture, a type of CNN, to successfully segment agricultural fields from high-resolution aerial imagery, achieving over 95% accuracy.

RNNs, on the other hand, are particularly useful for analyzing temporal sequences in remote sensing data, such as time-series satellite images for monitoring deforestation or tracking changes in glacier extent. I’ve employed LSTMs (Long Short-Term Memory networks), a type of RNN, for this purpose.

More recently, I’ve begun exploring Transformers, which have shown remarkable performance in various image processing tasks. Their ability to capture long-range dependencies is particularly valuable in analyzing large-scale remote sensing datasets, improving the context understanding of the scene. I’ve integrated Vision Transformers (ViTs) into my workflow for tasks needing global context awareness, like large-area land cover mapping.

Q 23. How do you handle the computational cost of training large deep learning models for remote sensing?

Training large deep learning models for remote sensing presents significant computational challenges due to the sheer volume of data involved. To mitigate these costs, I employ several strategies. Firstly, I leverage cloud computing platforms like AWS or Google Cloud, utilizing their powerful GPUs and distributed training capabilities. This allows for parallelization of the training process, significantly reducing training time. Secondly, I carefully select my model architecture. While powerful, overly complex models aren’t always necessary; a well-designed, smaller model can often achieve comparable performance with substantially lower computational requirements. Thirdly, I utilize techniques like transfer learning. Starting from a model pre-trained on a large, publicly available dataset and fine-tuning it on a smaller, specific remote sensing dataset dramatically reduces training time and data needs. Finally, data augmentation is crucial. By artificially increasing the variety of my training dataset through techniques like rotation, flipping, and adding noise, I can improve model generalization without needing to acquire and label more data. Augmentation does not make training itself cheaper, but it lowers the overall project cost by reducing how much labeled data must be collected.
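As a rough illustration of the augmentation step, the sketch below applies flips and a 90-degree rotation to a tiny single-band tile stored as nested lists. The helper names and the 2x2 tile are hypothetical; in practice these transforms are applied to full image arrays, and for segmentation the same transform must also be applied to the label mask.

```python
def flip_horizontal(tile):
    """Mirror the tile left to right."""
    return [row[::-1] for row in tile]


def flip_vertical(tile):
    """Mirror the tile top to bottom."""
    return [row[:] for row in tile[::-1]]


def rotate_90_clockwise(tile):
    """Rotate the tile by 90 degrees clockwise."""
    return [list(col) for col in zip(*tile[::-1])]


def augment(tile):
    """Return the original tile plus three geometrically transformed copies."""
    return [
        tile,
        flip_horizontal(tile),
        flip_vertical(tile),
        rotate_90_clockwise(tile),
    ]


# Hypothetical 2x2 single-band tile
tile = [
    [1, 2],
    [3, 4],
]

variants = augment(tile)
for variant in variants:
    print(variant)
```

Nadir-looking satellite imagery has no canonical "up" direction, which is why flips and right-angle rotations are usually safe augmentations for it.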

Q 24. Describe your experience with geospatial data formats (e.g., GeoTIFF, Shapefiles).

I have extensive experience working with various geospatial data formats. GeoTIFF is my go-to format for storing raster data, such as satellite imagery and elevation models, because of its support for georeferencing and metadata. Shapefiles are commonly used for vector data, representing features like roads, buildings, and administrative boundaries. I am adept at using GIS software like QGIS and ArcGIS to process and manipulate both raster and vector data. My experience includes reading, writing, and manipulating these files using Python libraries such as GDAL and OGR, enabling seamless integration with my machine learning pipelines. Furthermore, I’m familiar with other formats like NetCDF for storing climate and environmental data, and KML for visualization and sharing geospatial information. I understand the importance of handling the coordinate reference systems (CRS) appropriately to ensure accurate analysis and modeling.
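Georeferencing in a raster format like GeoTIFF boils down to an affine mapping from pixel indices to map coordinates. The sketch below follows the six-coefficient geotransform convention used by GDAL, but deliberately avoids calling the library so it runs anywhere; the raster origin and 30 m pixel size are made-up values.

```python
def pixel_to_map(geotransform, col, row):
    """Map coordinates of the top-left corner of pixel (col, row)."""
    (x_origin, pixel_width, row_rotation,
     y_origin, col_rotation, pixel_height) = geotransform
    x = x_origin + col * pixel_width + row * row_rotation
    y = y_origin + col * col_rotation + row * pixel_height
    return x, y


def pixel_center_to_map(geotransform, col, row):
    """Map coordinates of the center of pixel (col, row)."""
    return pixel_to_map(geotransform, col + 0.5, row + 0.5)


# Hypothetical north-up raster in a projected CRS (units: meters):
# top-left corner at (500000, 4200000), 30 m pixels, no rotation.
# pixel_height is negative because row numbers increase southward.
gt = (500000.0, 30.0, 0.0, 4200000.0, 0.0, -30.0)

print(pixel_to_map(gt, 0, 0))
print(pixel_to_map(gt, 10, 20))
print(pixel_center_to_map(gt, 0, 0))
```

The resulting coordinates are only meaningful together with the raster’s CRS, which is why mixing layers with different CRSs without reprojection leads to misaligned training labels.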

Q 25. How do you ensure the reproducibility of your machine learning models for remote sensing?

Reproducibility is paramount in machine learning. To ensure this, I follow a strict methodology. Firstly, I meticulously document every step of my workflow, from data preprocessing to model training and evaluation, including all hyperparameters and software versions. This documentation is often included in the form of Jupyter Notebooks. Secondly, I utilize version control systems, primarily Git, to track changes in my code and data. This allows me to easily revert to previous versions if necessary and enables collaboration. Thirdly, I employ techniques like creating reproducible environments using tools such as Docker or Conda, ensuring consistency across different machines and platforms. Finally, I set seed values for random number generators in my code so that repeated runs produce the same results wherever possible; some GPU operations are nondeterministic by default, so I also enable the framework’s deterministic settings when exact repeatability is required.
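A small sketch of the seeding and documentation habits described above, using only the Python standard library. The seed value, the `split_indices` helper, and the fields of `run_record` are illustrative choices; numerical and deep learning libraries keep their own random state and need to be seeded separately.

```python
import platform
import random
import sys

SEED = 42  # arbitrary value, recorded alongside the results


def split_indices(n_samples, test_fraction, seed):
    """Shuffle sample indices with a dedicated seeded generator, then split them."""
    rng = random.Random(seed)  # local generator, so global state is untouched
    indices = list(range(n_samples))
    rng.shuffle(indices)
    n_test = int(n_samples * test_fraction)
    return indices[n_test:], indices[:n_test]


# Two independent calls with the same seed give the same split
train_a, test_a = split_indices(100, 0.2, SEED)
train_b, test_b = split_indices(100, 0.2, SEED)
print("identical splits:", train_a == train_b and test_a == test_b)

# Minimal run metadata worth storing next to model outputs
run_record = {
    "seed": SEED,
    "python": sys.version.split()[0],
    "platform": platform.platform(),
}
print(run_record)
```

Using a dedicated generator instead of the global one keeps the split stable even if other parts of the code draw random numbers in a different order.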

Q 26. Describe your experience with version control systems (e.g., Git).

Git is an indispensable tool in my workflow. I use it daily to manage my code, track changes, and collaborate with others on projects. I’m proficient in branching, merging, and resolving conflicts. I utilize platforms like GitHub and GitLab for remote code repositories, enabling version control and collaborative development. My understanding goes beyond basic usage; I leverage Git for project management, employing features like issues and pull requests to streamline the development process and ensure code quality. In my professional experience, this has proved crucial for managing complex projects and maintaining a history of all code revisions, allowing for easy debugging and tracking of modifications.

Q 27. How do you stay updated with the latest advancements in machine learning for remote sensing?

Staying updated in the rapidly evolving field of machine learning for remote sensing requires a multi-pronged approach. I regularly read research papers published in top-tier journals and conferences such as IEEE Transactions on Geoscience and Remote Sensing, and CVPR. I actively participate in online communities and forums dedicated to remote sensing and machine learning, engaging in discussions and learning from other experts. I attend workshops, webinars, and conferences focusing on advancements in this field. Moreover, I closely follow the work of leading researchers and institutions in this domain, staying abreast of their publications and presentations. Utilizing online learning platforms offering courses on relevant topics and exploring open-source projects are also crucial elements of my continuous learning strategy.

Q 28. Explain your experience with deploying machine learning models for remote sensing in a production environment.

Deploying machine learning models for remote sensing in a production environment involves several key steps. First, I optimize my model for inference speed and memory usage. Techniques like model quantization and pruning are essential to minimize resource consumption. Then, I package the model into a deployable format, often using serving frameworks like TensorFlow Serving or TorchServe. Next, I select a suitable deployment platform, depending on the specific application and scalability requirements. This might involve deploying the model to a cloud platform (AWS, GCP, Azure) or an on-premise server. Finally, I implement monitoring and logging to track the model’s performance and identify potential issues in real-time, allowing for continuous improvement and maintenance. For instance, in a project involving real-time flood detection, I deployed a CNN model to a cloud server, integrating it with a web application that provides near real-time flood risk maps.
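To give a feel for what quantization does, here is a minimal sketch of symmetric 8-bit quantization of a list of weights. It illustrates the general idea only and is not the procedure of any particular framework, which typically adds calibration, per-channel scales, and quantized kernels. The weight values are made up.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical layer weights
weights = [0.50, -1.27, 0.003, 1.00]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("int8 values:", q)
print(f"scale: {scale:.4f}, max round-trip error: {max_error:.4f}")
```

Each weight now needs one byte instead of four, at the price of a rounding error of at most half the scale; very small weights, such as 0.003 here, collapse to zero.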

Key Topics to Learn for Machine Learning for Remote Sensing Interview

- Supervised Learning Techniques: Understand the application of classification (e.g., Support Vector Machines, Random Forests) and regression algorithms for analyzing remote sensing data. Consider the challenges of imbalanced datasets common in this field.

- Unsupervised Learning Techniques: Explore clustering algorithms (e.g., K-means, DBSCAN) for image segmentation and anomaly detection in satellite imagery. Understand dimensionality reduction techniques like PCA for feature extraction.

- Deep Learning for Remote Sensing: Familiarize yourself with Convolutional Neural Networks (CNNs) and their applications in image classification, object detection, and semantic segmentation of remote sensing data. Explore Recurrent Neural Networks (RNNs) for time-series analysis of satellite imagery.

- Preprocessing and Feature Engineering: Master techniques for handling noise, atmospheric correction, geometric correction, and feature extraction from remote sensing data. Understand the importance of data quality in model performance.

- Model Evaluation and Selection: Be proficient in evaluating model performance using appropriate metrics (e.g., accuracy, precision, recall, F1-score, IoU) and understand techniques for model selection and hyperparameter tuning.

- Cloud Computing Platforms for Remote Sensing: Gain familiarity with cloud-based platforms like AWS, Google Cloud, or Azure for processing and analyzing large remote sensing datasets. Understand the benefits of parallel processing and scalability.

- Specific Applications: Be prepared to discuss practical applications of Machine Learning in remote sensing, such as land cover classification, crop yield prediction, disaster response, environmental monitoring, and urban planning.

- Addressing Bias and Uncertainty: Understand the potential biases in remote sensing data and how to mitigate them. Discuss methods for quantifying and managing uncertainty in model predictions.

Next Steps

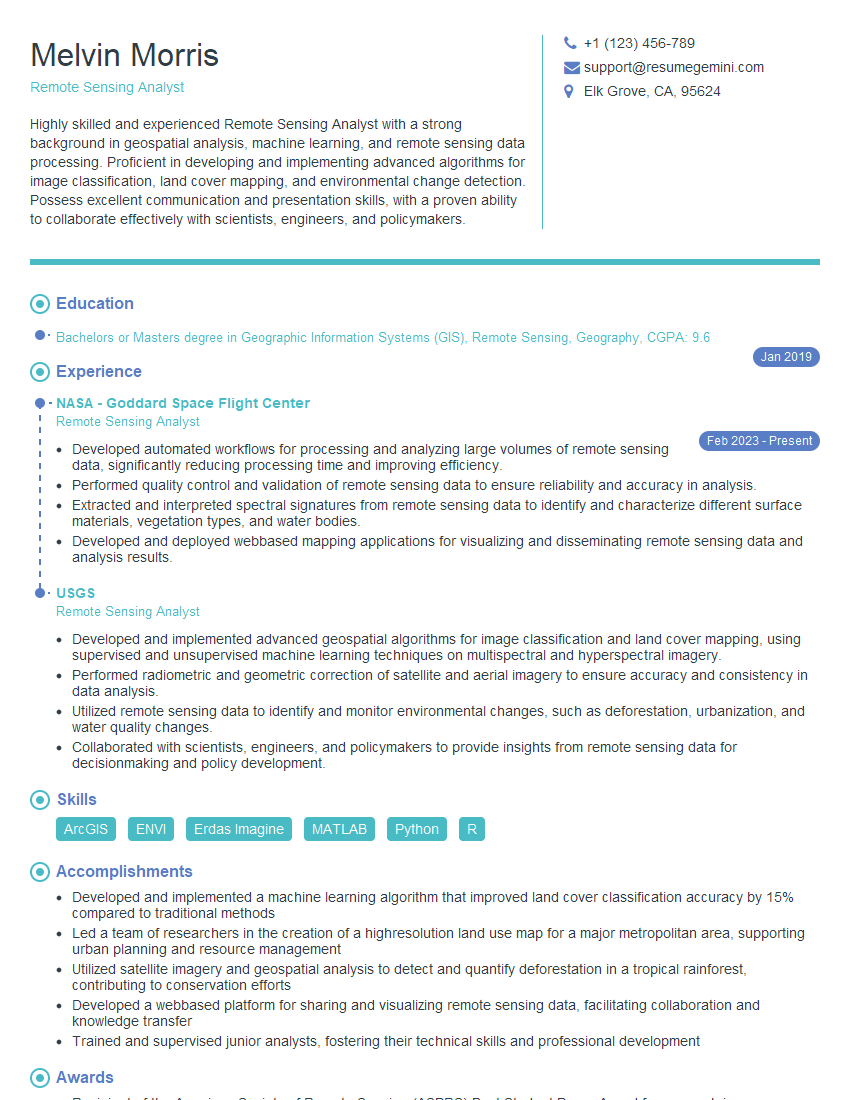

Mastering Machine Learning for Remote Sensing opens doors to exciting and impactful careers in various sectors. To maximize your job prospects, it’s crucial to present your skills effectively. Crafting an ATS-friendly resume is paramount. ResumeGemini is a trusted resource to help you build a professional and compelling resume that highlights your expertise. Examples of resumes tailored to Machine Learning for Remote Sensing are available to guide you. Invest time in showcasing your accomplishments and skills—your dream job awaits!
