Interviews are more than just a Q&A session—they’re a chance to prove your worth. This blog dives into essential Sampling and Field Data Collection interview questions and expert tips to help you align your answers with what hiring managers are looking for. Start preparing to shine!

Questions Asked in Sampling and Field Data Collection Interview

Q 1. Explain the difference between probability and non-probability sampling.

The core difference between probability and non-probability sampling lies in how the sample is selected. In probability sampling, every member of the population has a known, non-zero chance of being selected. This allows for generalizations about the population from the sample. Conversely, in non-probability sampling, the probability of selection for each population member is unknown, making generalizations less reliable. Think of it like drawing names from a hat (probability) versus grabbing a handful of names you see on a list (non-probability).

Q 2. Describe different types of probability sampling (e.g., simple random, stratified, cluster).

Probability sampling techniques offer various approaches to ensure a representative sample. Here are some key methods:

- Simple Random Sampling: Every member has an equal chance of selection. Imagine using a random number generator to select participants from a numbered list of all students at a university.

- Stratified Sampling: The population is divided into strata (subgroups) based on relevant characteristics (e.g., age, gender, income), and random samples are drawn from each stratum. This ensures representation from all subgroups. For instance, if surveying consumer preferences for a product, you might stratify by age groups (18-25, 26-35, etc.) to get a balanced perspective.

- Cluster Sampling: The population is divided into clusters (e.g., geographical areas, schools), and a random sample of clusters is selected. All members within the selected clusters are then included in the sample. This is often more cost-effective than sampling individuals across the whole population, because fieldwork is concentrated in fewer places. For example, studying teacher effectiveness might involve randomly selecting schools (clusters) and then surveying all teachers in those schools.
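
The three designs above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library. The population of teachers, the field names, and the helper functions are all made up for the example, and the stratified version assumes proportional allocation (the same fraction from every stratum).

```python
import random
from collections import defaultdict

def simple_random_sample(population, n, rng):
    """Draw n units without replacement; every unit is equally likely."""
    return rng.sample(population, n)

def stratified_sample(population, stratum_of, fraction, rng):
    """Draw the same fraction at random from every stratum."""
    strata = defaultdict(list)
    for unit in population:
        strata[stratum_of(unit)].append(unit)
    sample = []
    for members in strata.values():
        k = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, k))
    return sample

def cluster_sample(population, cluster_of, n_clusters, rng):
    """Pick whole clusters at random and keep every unit inside them."""
    clusters = defaultdict(list)
    for unit in population:
        clusters[cluster_of(unit)].append(unit)
    chosen = rng.sample(sorted(clusters), n_clusters)
    return [unit for c in chosen for unit in clusters[c]]

# Hypothetical population: 1,000 teachers in 20 schools and 4 age bands.
rng = random.Random(42)
teachers = [{"id": i, "school": i % 20, "age_band": i % 4} for i in range(1000)]

srs = simple_random_sample(teachers, 100, rng)
strat = stratified_sample(teachers, lambda t: t["age_band"], 0.10, rng)
clus = cluster_sample(teachers, lambda t: t["school"], 2, rng)
```

All three calls return 100 teachers here, but the stratified sample is guaranteed to contain 25 from each age band, while the cluster sample comes from only two schools.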

Q 3. What are the advantages and disadvantages of using systematic sampling?

Systematic sampling, where every kth element is selected from a list (after a random starting point), offers several advantages and disadvantages:

- Advantages: Simple to implement, less time-consuming than simple random sampling, can be more efficient in large populations.

- Disadvantages: Susceptible to bias if the list has a hidden periodic pattern that lines up with the sampling interval. For example, if houses along a street alternate between large and small, selecting every 10th house would pick only one of the two types. In such cases a different interval, or another design such as stratified sampling, may work better. Like any sampling method, it also doesn’t guarantee a perfectly representative sample.

Imagine surveying customers at a store. Using systematic sampling, you could pick every 5th customer that walks through the door after a random starting point. This is easy to do, but if there’s a pattern (e.g., every 5th customer is a senior citizen), your results could be skewed.
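
The periodicity problem is easy to reproduce. The sketch below assumes a made-up street of 100 houses that strictly alternate between large and small, and compares an interval that matches the pattern (10) with one that does not (9). The function name and data are illustrative only.

```python
import random

def systematic_sample(items, k, rng):
    """Take every k-th item after a random start in [0, k)."""
    start = rng.randrange(k)
    return items[start::k]

# Hypothetical street: houses alternate large, small, large, small, ...
houses = ["large" if i % 2 == 0 else "small" for i in range(100)]

rng = random.Random(7)
every_10th = systematic_sample(houses, 10, rng)  # interval is a multiple of the period
every_9th = systematic_sample(houses, 9, rng)    # interval is not a multiple of the period

share_large_10 = every_10th.count("large") / len(every_10th)
share_large_9 = every_9th.count("large") / len(every_9th)
```

With an interval of 10, every selected house has the same size, so the share of large houses is either 0 or 1 depending on the starting point. With an interval of 9, the sample alternates between the two types and the share stays close to one half.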

Q 4. Explain different types of non-probability sampling (e.g., convenience, quota, snowball).

Non-probability sampling methods are less rigorous but often easier and cheaper to conduct. They are suitable when generalizability to a broader population is not the primary goal:

- Convenience Sampling: Selecting participants readily available. An example would be surveying students in your class to gauge their opinions. Clearly, it wouldn’t be generalizable to all students.

- Quota Sampling: Similar to stratified sampling, but selection within strata isn’t random. Researchers might aim to interview a specific number of men and women, but the selection of individuals within those groups isn’t random. For example, interviewing a set number of people from various demographics at a shopping mall.

- Snowball Sampling: Participants recruit additional participants. This is useful for hard-to-reach populations (e.g., studying a rare disease). Someone diagnosed with the disease might be asked to refer others with the same condition.

Q 5. How do you determine the appropriate sample size for a study?

Determining the appropriate sample size involves a balance between cost and accuracy. There’s no single magic number. It depends heavily on the study’s objectives, the desired precision, the variability within the population, and the acceptable margin of error. Several methods exist, including:

- Power analysis: A statistical method used to determine the minimum sample size needed to detect an effect of a given size with a chosen probability (the power), at a chosen significance level.

- Sample size calculators: Available online, these tools require you to input parameters like confidence level, margin of error, and population variability.

- Rule of thumb: Some researchers use guidelines (e.g., a minimum of 30 participants per group in experimental research). However, these are very rough estimates.

It’s best to consult a statistician for complex studies.
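
As a rough illustration of what a power analysis computes, the sketch below uses the common normal-approximation formula for comparing the means of two equal-sized groups: n per group = 2 × ((z for alpha/2 + z for power) × SD / effect)². The function name and default values are assumptions chosen for the example, and dedicated tools that use the t distribution will give slightly larger numbers.

```python
import math
from statistics import NormalDist

def n_per_group(effect, sd, alpha=0.05, power=0.80):
    """Approximate sample size per group for a two-sided, two-sample comparison of means."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value for the significance level
    z_power = z.inv_cdf(power)          # quantile matching the desired power
    return math.ceil(2 * ((z_alpha + z_power) * sd / effect) ** 2)

# Hypothetical inputs: detect a difference of half a standard deviation,
# then a difference of a quarter of a standard deviation.
n_medium_effect = n_per_group(effect=0.5, sd=1.0)
n_small_effect = n_per_group(effect=0.25, sd=1.0)
```

Halving the effect you want to detect roughly quadruples the required sample, which is why the expected effect size is usually the most important input.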

Q 6. What factors influence sample size determination?

Several factors influence sample size determination:

- Population size: This matters mainly for small populations. Once the population is much larger than the sample, the required sample size barely changes as the population grows.

- Desired precision: A smaller margin of error (higher precision) requires a larger sample size.

- Confidence level: A higher confidence level (e.g., 99% instead of 95%) necessitates a larger sample size.

- Population variability: Higher variability (more heterogeneity) requires a larger sample size to capture the diversity effectively.

- Type of research: The study design and planned analysis matter. Detecting small effects or comparing several subgroups requires more observations than estimating a single overall value.

- Resources: Budget and time constraints will also impact the feasible sample size.
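
Several of these factors can be seen at work in the standard formula for estimating a proportion, n0 = z² × p × (1 − p) / e², with a finite population correction for small populations. The sketch below is one possible implementation. The function name and the example inputs are assumptions, and p = 0.5 is used as the default because it is the most conservative choice.

```python
import math
from statistics import NormalDist

def sample_size_for_proportion(margin_of_error, confidence=0.95,
                               proportion=0.5, population=None):
    """Sample size needed to estimate a proportion within +/- margin_of_error."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    n0 = z ** 2 * proportion * (1 - proportion) / margin_of_error ** 2
    if population is not None:
        # Finite population correction: matters only when n0 is a
        # noticeable fraction of the population.
        n0 = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n0)

baseline = sample_size_for_proportion(0.05)
tighter = sample_size_for_proportion(0.025)                          # more precision
more_confident = sample_size_for_proportion(0.05, confidence=0.99)   # higher confidence
less_variable = sample_size_for_proportion(0.05, proportion=0.1)     # less variability
small_population = sample_size_for_proportion(0.05, population=1_000)
large_population = sample_size_for_proportion(0.05, population=1_000_000)
```

Comparing the results shows the pattern described above: precision and confidence drive the sample size up quickly, while going from a population of one thousand to one million changes it far less than proportionally.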

Q 7. Explain the concept of sampling error and how to minimize it.

Sampling error is the difference between the characteristics of a sample and the characteristics of the population it represents. It’s inherent in any sampling process, because a sample is only part of the population. A larger sampling error means that your sample is less representative of the population. For instance, if your sample happens to contain a noticeably higher percentage of women than the overall population purely by chance, that gap is sampling error.

Minimizing sampling error involves:

- Increasing sample size: A larger sample size reduces the likelihood of extreme deviations from the population parameters.

- Using appropriate sampling techniques: Probability sampling methods make it possible to quantify sampling error and generally avoid the selection bias that non-probability methods can introduce.

- Careful sample selection: Eliminating biases in the sampling process is crucial.

- Stratification: If you know the population is heterogeneous, stratifying and drawing samples from each stratum helps balance representation.

- Using statistical analysis: Calculating confidence intervals can help quantify the uncertainty associated with sampling error.
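
To make the link between sample size and uncertainty concrete, the sketch below draws a small and a large simple random sample from a simulated population and computes a 95% confidence interval for the mean of each. The population values, sample sizes, and function name are invented for the example, and the interval uses the normal approximation.

```python
import math
import random
from statistics import NormalDist, mean, stdev

def mean_ci(sample, confidence=0.95):
    """Normal-approximation confidence interval for the mean of a sample."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    standard_error = stdev(sample) / math.sqrt(len(sample))
    centre = mean(sample)
    return centre - z * standard_error, centre + z * standard_error

# Simulated population (hypothetical measurement with mean 50 and SD 10).
rng = random.Random(1)
population = [rng.gauss(50, 10) for _ in range(100_000)]

ci_small = mean_ci(rng.sample(population, 25))
ci_large = mean_ci(rng.sample(population, 2500))

width_small = ci_small[1] - ci_small[0]
width_large = ci_large[1] - ci_large[0]
```

The interval from the larger sample is much narrower. Because the standard error shrinks with the square root of the sample size, a sample 100 times larger gives an interval roughly 10 times narrower.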

Q 8. Describe different methods for collecting field data (e.g., GPS, sensors, manual measurements).

Field data collection employs a variety of methods, each suited to different data types and research objectives. Think of it like assembling a puzzle – you need different tools for different pieces.

GPS (Global Positioning System): GPS receivers provide precise geographical coordinates (latitude, longitude, and elevation). This is crucial for spatial data, mapping, and understanding location-based variations. For example, in a soil sampling project, GPS helps pinpoint the exact location of each sample, enabling accurate mapping of soil properties.

Sensors: These automated devices measure various environmental parameters. Imagine sensors as tireless assistants constantly collecting data. Examples include temperature sensors (measuring air or soil temperature), humidity sensors, rain gauges (measuring rainfall), and water level sensors. Data from these sensors can be remotely collected and transmitted, enabling continuous monitoring.

Manual Measurements: Sometimes, direct human observation and measurement are indispensable. This could involve measuring tree height with a clinometer, recording water flow with a flow meter, or taking soil samples with a shovel and analyzing them in a lab. While potentially more time-consuming, these methods provide valuable ground-truth data and are often necessary when automated tools aren’t sufficient.

Remote Sensing: Techniques like aerial photography or satellite imagery provide broader-scale data collection. For instance, assessing deforestation over large areas would be impractical with only ground-based methods. Remote sensing offers a valuable perspective that complements ground-level data.

Q 9. How do you ensure the accuracy and precision of your field data?

Ensuring data accuracy and precision is paramount. It’s like baking a cake – if your measurements are off, the result will be unsatisfactory. We employ several strategies:

Calibration and Validation: All equipment (sensors, GPS receivers, measuring tools) is meticulously calibrated before and after data collection. This ensures consistent and reliable readings. We also validate our measurements by comparing them to established standards or known values.

Multiple Measurements and Replication: We take multiple measurements at each location to reduce random error. Replication is vital; for instance, taking multiple soil samples from the same area helps assess the variability within the sample site itself.

Standard Operating Procedures (SOPs): Clearly defined SOPs minimize variability between different data collectors. Everyone follows the same protocols, ensuring consistency and minimizing potential biases.

Quality Control Checks: Regular checks on data consistency and plausibility identify errors early. We use data visualization and statistical methods to flag outliers or impossible values. For example, a temperature reading of 150 degrees Celsius in a temperate forest would trigger an investigation.

Data Logging and Metadata: We maintain detailed records of each measurement, including date, time, location (GPS coordinates), equipment used, and any relevant observations. This rich metadata is crucial for tracing errors and understanding potential biases.

Q 10. Explain the importance of data validation and quality control in field data collection.

Data validation and quality control are integral to the credibility of field data. Think of it as the editing process for a scientific paper – it’s essential for ensuring the accuracy and reliability of the findings. Without proper validation, our data becomes unreliable and potentially misleading.

Range Checks: Values outside acceptable ranges (e.g., negative rainfall) are flagged for review.

Consistency Checks: Checking for inconsistencies between related data points (e.g., a high temperature reading but low evaporation).

Completeness Checks: Ensuring all required data fields are filled.

Plausibility Checks: Verifying data’s logical consistency with known facts or expectations.

Duplicate Checks: Identifying and resolving duplicate entries.

These checks not only improve data quality but also facilitate error detection and correction. A robust quality control process increases the confidence in the reliability of our conclusions.
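
A few of these checks can be automated with very little code. The sketch below covers completeness, range, and duplicate checks on a list of records. The field names, the acceptable ranges, and the sample rows are assumptions made for illustration, and real limits would come from the project's own protocol.

```python
REQUIRED = ("site_id", "timestamp", "rainfall_mm", "air_temp_c")
RANGES = {"rainfall_mm": (0.0, 500.0), "air_temp_c": (-40.0, 55.0)}  # assumed limits

def validate(records):
    """Return (row_index, problem) tuples; an empty list means nothing was flagged."""
    problems = []
    seen = set()
    for i, rec in enumerate(records):
        # Completeness check: every required field must be filled.
        for field in REQUIRED:
            if rec.get(field) in (None, ""):
                problems.append((i, f"missing {field}"))
        # Range check: numeric values must fall inside the accepted limits.
        for field, (low, high) in RANGES.items():
            value = rec.get(field)
            if isinstance(value, (int, float)) and not low <= value <= high:
                problems.append((i, f"{field} out of range"))
        # Duplicate check: one record per site and timestamp.
        key = (rec.get("site_id"), rec.get("timestamp"))
        if key in seen:
            problems.append((i, "duplicate site/timestamp"))
        seen.add(key)
    return problems

records = [
    {"site_id": "A1", "timestamp": "2024-05-01T09:00", "rainfall_mm": 2.4, "air_temp_c": 14.2},
    {"site_id": "A1", "timestamp": "2024-05-01T09:00", "rainfall_mm": 2.4, "air_temp_c": 14.2},
    {"site_id": "B7", "timestamp": "2024-05-01T09:05", "rainfall_mm": -1.0, "air_temp_c": 150.0},
    {"site_id": "C3", "timestamp": "2024-05-01T09:10", "rainfall_mm": None, "air_temp_c": 13.8},
]
flags = validate(records)
```

The function only flags rows for review. Deciding whether a flagged value is an error or a genuine extreme still needs someone who knows the site.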

Q 11. How do you handle missing data in your dataset?

Missing data is an inevitable challenge in field work. It’s like having a puzzle with some missing pieces. We address this strategically:

Identify the Cause: Before handling missing data, we establish *why* data is missing. Was it equipment malfunction, inaccessibility, or human error? The cause guides the choice of strategy.

Handling Methods: Depending on the cause and data characteristics, different methods are applied:

- Deletion: If missing data is minimal and randomly distributed, complete case deletion is sometimes acceptable. However, it reduces sample size and can bias results when the data is not missing at random.

- Mean/Median Imputation: A simple method that replaces missing values with the mean or median of the available data. Suitable only if the data is missing completely at random, and it understates variability because every filled value is identical.

- Regression Imputation: Predicting missing values using a regression model based on other variables. This works only when those variables are strongly correlated with the one being imputed.

- Multiple Imputation: Creating multiple plausible imputed datasets, analyzing each, and combining the results to account for uncertainty in the imputed values.

Sensitivity Analysis: We analyze the impact of missing data and different imputation methods on the results. If the results change significantly based on different imputation techniques, this suggests the uncertainty introduced by missing data needs further consideration.

The best approach is context-specific. A thorough understanding of the missing data mechanism and the subsequent impact on analysis is crucial.
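
The trade-off between deletion and simple imputation can be shown with a handful of values. The sketch below uses made-up soil pH readings with two gaps, and the helper names are hypothetical.

```python
from statistics import mean, median, stdev

def complete_cases(values):
    """Deletion: keep only the observed values."""
    return [v for v in values if v is not None]

def impute(values, how="mean"):
    """Fill every gap with the mean or median of the observed values."""
    observed = complete_cases(values)
    fill = mean(observed) if how == "mean" else median(observed)
    return [fill if v is None else v for v in values]

# Hypothetical soil pH readings; None marks a missing measurement.
soil_ph = [6.1, 6.4, None, 5.9, 7.2, None, 6.3, 6.0]

observed = complete_cases(soil_ph)
mean_filled = impute(soil_ph, "mean")
median_filled = impute(soil_ph, "median")

spread_observed = stdev(observed)
spread_mean_filled = stdev(mean_filled)
```

Mean imputation keeps all eight rows and leaves the mean unchanged, but the standard deviation of the filled series is smaller than that of the observed values. That artificial loss of variability is one reason a sensitivity analysis across methods is worthwhile.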

Q 12. Describe your experience with data entry and management software.

My experience encompasses various data entry and management software. Choosing the right software is like choosing the right tool for a job – efficiency and accuracy hinge on it.

ArcGIS: Extensive experience with ArcGIS for geospatial data management, integrating GPS data, and creating maps. I’m proficient in data visualization, spatial analysis, and geodatabase management.

R and Python: Proficient in statistical programming with R and Python for data cleaning, manipulation, analysis, and visualization. Packages like dplyr, tidyr, and ggplot2 (in R) and pandas, numpy, and matplotlib (in Python) are my regular companions.

Spreadsheets (Excel, Google Sheets): While not ideal for large datasets, spreadsheets are used for basic data entry, initial cleaning, and simple calculations.

Database Management Systems (DBMS): Experience with relational databases (e.g., MySQL, PostgreSQL) for structured data management and querying complex datasets.

I adapt my software choices to the specific requirements of each project, ensuring data integrity and efficient workflow.

Q 13. What are some common challenges encountered during field data collection?

Field data collection is fraught with challenges. It’s not always smooth sailing. Some common hurdles include:

Weather conditions: Extreme weather (rain, snow, heat) can halt data collection and affect equipment performance.

Accessibility: Reaching remote or difficult-to-access locations can pose significant logistical challenges.

Equipment malfunctions: Unexpected equipment failure in the field necessitates backup plans and troubleshooting skills.

Data loss or corruption: Data loss due to accidental deletion, hardware failure, or software errors is a serious concern.

Human error: Incorrect measurements, inaccurate recording, or data entry mistakes are unavoidable but need to be minimized through careful planning and quality control.

Safety concerns: Working in remote or hazardous environments requires adhering to strict safety protocols.

Q 14. How do you address logistical challenges in field data collection?

Addressing logistical challenges requires meticulous planning and resourcefulness. It’s like planning a complex expedition.

Pre-field planning: Thorough planning includes site reconnaissance, obtaining necessary permits, securing equipment and supplies, arranging transportation, and establishing communication protocols.

Teamwork and Communication: Effective teamwork and clear communication among team members are crucial, especially when facing unexpected issues.

Backup plans: Having contingency plans for equipment failure, weather disruptions, or safety concerns helps mitigate risks.

Resource management: Efficient use of time, personnel, and resources is essential for efficient and cost-effective field operations.

Technology utilization: Employing technology such as GPS tracking, satellite communication, and cloud-based data storage enhances efficiency and data security.

Proactive planning and problem-solving skills are vital for smooth and successful field data collection.

Q 15. Explain your experience with using GIS software for data visualization and analysis.

GIS software is indispensable for visualizing and analyzing spatial data collected in the field. My experience spans several years, working with ArcGIS, QGIS, and MapInfo Pro. I’ve used these platforms extensively to create thematic maps, perform spatial analyses like overlaying different datasets (e.g., soil type and land use), and generate insightful visualizations from GPS tracks and point data. For instance, in a recent project studying water quality, I used ArcGIS to map sampling locations, plot contaminant levels, and perform spatial interpolation to estimate concentrations in unsampled areas. This helped identify pollution hotspots and inform remediation strategies.

Beyond basic mapping, I am proficient in geoprocessing tools to conduct tasks like raster analysis (e.g., calculating NDVI from satellite imagery to assess vegetation health), network analysis (e.g., determining optimal routes for field sampling), and data management using geodatabases. I also have experience in integrating GIS data with other data sources for comprehensive analysis.
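
For readers unfamiliar with NDVI, it is computed for each pixel as (NIR − Red) / (NIR + Red), where NIR and Red are the reflectances in the near-infrared and red bands. The sketch below applies that formula to two tiny grids of made-up reflectance values using plain Python lists. In practice the calculation would be run in GIS software or with a raster library, and the function name here is only illustrative.

```python
def ndvi(nir, red):
    """Cell-by-cell NDVI for two equally sized grids; None where both bands are zero."""
    result = []
    for nir_row, red_row in zip(nir, red):
        row = []
        for n, r in zip(nir_row, red_row):
            total = n + r
            row.append((n - r) / total if total else None)
        result.append(row)
    return result

# Hypothetical 2 x 2 reflectance grids (values between 0 and 1).
nir_band = [[0.5, 0.2], [0.0, 0.3]]
red_band = [[0.1, 0.2], [0.0, 0.1]]

index = ndvi(nir_band, red_band)
```

Values near 1 indicate dense, healthy vegetation, values near 0 indicate bare ground, and cells with no signal in either band are left empty rather than being given a misleading number.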

Q 16. How do you maintain safety protocols during field data collection?

Safety is paramount during field data collection. My approach is proactive and multifaceted, starting with a thorough risk assessment before each project. This involves identifying potential hazards like hazardous materials, wildlife encounters, extreme weather conditions, and unsafe terrain. Based on the risk assessment, we develop a comprehensive safety plan which includes:

- Personal Protective Equipment (PPE): Ensuring appropriate PPE such as safety helmets, high-visibility vests, gloves, and safety footwear is available and used consistently.

- Emergency Procedures: Establishing clear communication protocols, designating emergency contacts, and providing first aid training to the team.

- Vehicle Safety: Using appropriately maintained vehicles, employing safe driving practices, and carrying emergency supplies.

- Environmental Awareness: Following guidelines to minimize environmental impact, respecting wildlife habitats, and leaving the site cleaner than we found it.

- Weather Monitoring: Closely monitoring weather forecasts and adjusting field activities to avoid dangerous conditions.

Regular safety briefings and toolbox talks are held to reinforce safety procedures and address any concerns.

Q 17. How familiar are you with different types of sampling equipment?

My familiarity with sampling equipment is extensive, covering various methodologies and applications. I’ve worked with a wide array of tools, including:

- Soil sampling: Augers, soil cores, probes, and hand trowels for various soil depths and sample sizes.

- Water sampling: Water samplers (both grab and integrated), flow meters, and turbidity meters for collecting accurate water samples at various depths and measuring water quality parameters.

- Air sampling: Passive and active air samplers, particulate matter monitors, and gas detectors for monitoring air quality.

- Biological sampling: Nets, traps, pitfall traps, and other specialized equipment for collecting plant and animal specimens.

- GPS equipment: Handheld and differential GPS units for accurate georeferencing of samples.

The selection of appropriate equipment always depends on the specific project requirements, the nature of the sample, and the desired precision and accuracy. I have the skills to select, operate, and maintain this equipment effectively.

Q 18. Describe your experience with data cleaning and preprocessing.

Data cleaning and preprocessing is a critical step that significantly influences the validity and reliability of any analysis. This often involves dealing with incomplete, inconsistent, and erroneous data. My experience includes:

- Identifying and handling missing values: Employing techniques like imputation (replacing missing values based on statistical methods) or, when only a few values are missing at random, removing the affected records.

- Detecting and correcting outliers: Using visual inspection of data plots (boxplots, scatterplots), statistical methods (e.g., Z-scores), or domain knowledge to identify and handle outliers.

- Data transformation: Applying transformations such as log transformations to reduce skew and stabilize variance, or standardization to put variables on a common scale.

- Data consistency checks: Ensuring consistency in data units, formats, and coding schemes.

- Error detection and correction: Identifying and correcting errors through manual review and utilizing automated quality control checks within data management software.

For instance, in a forestry project, I detected outliers in tree diameter data likely caused by measurement errors. By removing these outliers, I was able to obtain a more accurate representation of the forest structure.
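
Two of the outlier rules mentioned above can be compared directly. The sketch below applies a Z-score rule and the 1.5 × IQR rule (the one behind boxplot whiskers) to made-up tree diameter readings in which one value looks like a misplaced decimal point. The thresholds and function names are assumptions for the example.

```python
from statistics import mean, quantiles, stdev

def zscore_outliers(values, threshold=3.0):
    """Flag values more than `threshold` standard deviations from the mean."""
    centre, spread = mean(values), stdev(values)
    return [v for v in values if abs(v - centre) / spread > threshold]

def iqr_outliers(values, k=1.5):
    """Flag values beyond k * IQR outside the first and third quartiles."""
    q1, _, q3 = quantiles(values, n=4)
    low, high = q1 - k * (q3 - q1), q3 + k * (q3 - q1)
    return [v for v in values if v < low or v > high]

# Hypothetical diameters at breast height in cm; 230.0 is probably 23.0 mistyped.
dbh_cm = [22.1, 24.8, 19.5, 23.0, 21.7, 25.2, 20.9, 230.0, 22.6, 24.1]

flagged_by_z = zscore_outliers(dbh_cm)
flagged_by_iqr = iqr_outliers(dbh_cm)
```

In this small sample the Z-score rule flags nothing, because the extreme value inflates the standard deviation it is measured against, while the IQR rule catches it. This is why visual inspection and domain knowledge are worth combining with any single statistical rule.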

Q 19. How do you ensure the confidentiality and security of collected data?

Confidentiality and data security are paramount. My approach is based on adhering to strict protocols and best practices:

- Data anonymization: Removing personally identifiable information (PII) wherever possible to protect the privacy of individuals.

- Secure data storage: Using encrypted storage solutions, both locally and on cloud platforms, to protect data from unauthorized access.

- Access control: Implementing access control measures that limit access to data only to authorized personnel on a need-to-know basis.

- Data encryption: Encrypting data both in transit and at rest.

- Data backup and recovery: Regularly backing up data to prevent data loss.

- Compliance with regulations: Adhering to relevant data privacy regulations like GDPR and HIPAA, depending on the project context.

Documentation is crucial, maintaining a detailed audit trail of data access and modifications.
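
One way to remove direct identifiers while still being able to link records from the same respondent is to replace names with a keyed hash. The sketch below uses HMAC-SHA256 from the Python standard library. The field names, the sample records, and the key are placeholders, and in a real project the key would be stored separately from the data.

```python
import hashlib
import hmac

def pseudonymize(identifier, secret_key):
    """Replace an identifier with a keyed hash, truncated for readability."""
    mac = hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256)
    return mac.hexdigest()[:16]

SECRET_KEY = b"replace-with-a-key-stored-outside-the-dataset"  # placeholder value

# Hypothetical survey responses containing a direct identifier.
responses = [
    {"name": "Jane Doe", "village": "North", "well_depth_m": 12.5},
    {"name": "John Roe", "village": "South", "well_depth_m": 30.0},
]

released = [
    {
        "respondent": pseudonymize(r["name"], SECRET_KEY),
        "village": r["village"],
        "well_depth_m": r["well_depth_m"],
    }
    for r in responses
]
```

Strictly speaking this is pseudonymization rather than full anonymization: the remaining fields could still identify someone in a small community, so they need to be reviewed as well.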

Q 20. What statistical software are you proficient in?

I am proficient in several statistical software packages, including R, Python (with libraries like SciPy and Statsmodels), and SPSS. R is my preferred choice for its flexibility and extensive statistical libraries. I can conduct a wide range of statistical analyses, from basic descriptive statistics to more complex analyses like ANOVA, regression, time series analysis, and geostatistics.

My experience with these tools extends beyond simple data analysis. I have experience in building statistical models, performing data visualization, and generating reports. I am also comfortable using programming within these packages for custom data analysis tasks.

Q 21. Explain your understanding of statistical analysis related to your field data.

My understanding of statistical analysis in the context of field data is comprehensive. It begins with selecting appropriate statistical methods based on the research question, data type (continuous, categorical, spatial), and the study design. I frequently employ techniques like:

- Descriptive statistics: Calculating measures of central tendency, variability, and distribution to summarize field data.

- Inferential statistics: Using hypothesis testing to draw inferences about populations based on sample data, including t-tests, ANOVA, and chi-square tests.

- Regression analysis: Modeling relationships between variables to understand how changes in one variable affect another.

- Spatial analysis: Employing geostatistical techniques (kriging, spatial autocorrelation) to analyze spatially correlated data.

- Time series analysis: Modeling data collected over time to identify trends and patterns.

The choice of statistical method should always be justified and appropriate for the data. I ensure the results are interpreted accurately and their limitations are acknowledged in any report or presentation. For example, in a recent project assessing the impact of a new agricultural practice on soil health, I used ANOVA to compare soil nutrient levels across different treatment groups, and then used regression analysis to model the relationship between nutrient levels and crop yield.
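
To show what the ANOVA step in that example computes, the sketch below calculates the one-way F statistic by hand: the variation between group means divided by the variation within groups. The treatment names and nutrient values are invented, and the function is illustrative. A library routine such as scipy.stats.f_oneway would normally be used, since it also returns the p-value.

```python
from statistics import mean

def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of groups of measurements."""
    all_values = [v for group in groups for v in group]
    grand_mean = mean(all_values)
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((v - mean(g)) ** 2 for g in groups for v in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

# Hypothetical soil nutrient scores under three treatments.
control = [1.0, 2.0, 3.0]
practice_a = [2.0, 3.0, 4.0]
practice_b = [5.0, 6.0, 7.0]

f_stat, df_between, df_within = one_way_anova_f([control, practice_a, practice_b])
```

A large F value means the group means differ by more than the scatter within groups would suggest. Whether it is statistically significant depends on comparing it with the F distribution for the two degrees-of-freedom values.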

Q 22. How do you ensure data integrity during transportation and storage?

Data integrity during transportation and storage is paramount. Think of your data as a precious artifact – you wouldn’t just toss it in a box and hope for the best! We need a multi-pronged approach.

- Secure Packaging: Samples are packaged using appropriate containers (e.g., sealed, tamper-evident bags, specialized vials for liquids) to prevent breakage, spillage, or contamination. For instance, soil samples might be placed in airtight, labeled bags to avoid moisture loss or cross-contamination.

- Environmental Control: Temperature and humidity are critical. Temperature-sensitive samples require cold chain logistics, using refrigerated transport and storage. Temperature is logged throughout the journey, and the readings are kept with the chain of custody documentation.

- Chain of Custody: This is a critical document that tracks every step of the sample’s journey. It records who handled the sample, when, and where, ensuring traceability and preventing unauthorized access. Any transfer or change in custody is formally documented with signatures.

- Secure Storage: Once in the lab, samples are stored in a secure, climate-controlled environment with access restrictions. Digital data is backed up regularly to multiple secure locations and encrypted for protection against loss or corruption.

By implementing these measures, we minimize the risk of data loss, degradation, or compromise, ensuring the reliability of our findings.
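
For digital data, one common way to confirm that a file survived a transfer or backup unchanged is to compare checksums. This is not something the measures above prescribe, only a sketch of how such a check could look using SHA-256 from the Python standard library. The file names and contents are made up, and the demonstration runs inside a temporary directory.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path

def sha256_of_file(path, chunk_size=65536):
    """Hash a file in chunks so large exports do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def copy_is_intact(source, copy):
    """True when the copy is byte-for-byte identical to the source."""
    return sha256_of_file(source) == sha256_of_file(copy)

# Demonstration with throwaway files.
with tempfile.TemporaryDirectory() as tmp:
    original = Path(tmp) / "site_A1.csv"
    backup = Path(tmp) / "site_A1_backup.csv"
    original.write_text("sample_id,ph\nA1-001,6.4\n")

    shutil.copy(original, backup)
    intact_after_copy = copy_is_intact(original, backup)

    backup.write_text("sample_id,ph\nA1-001,6.9\n")  # simulate a corrupted backup
    intact_after_change = copy_is_intact(original, backup)
```

Recording the checksum alongside the chain of custody entry gives a simple way to show later that the file being analyzed is the one that left the field.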

Q 23. How do you document your field data collection procedures?

Thorough documentation is the backbone of reproducible research. Our field data collection procedures are meticulously documented using a standardized format. This ensures clarity, consistency, and facilitates easy review.

- Standard Operating Procedures (SOPs): We create detailed SOPs that outline each step, from equipment preparation to data recording. This minimizes ambiguity and ensures every team member follows the same protocol. For example, an SOP for soil sampling might detail the type of auger, sampling depth, and the required field measurements.

- Data Sheets/Forms: Customized data sheets are used to record observations, measurements, and other relevant information in the field. These sheets include clear instructions and space for both quantitative and qualitative data. We use barcodes or unique identifiers to link the field data to the samples.

- Field Notebooks: Detailed field notebooks are maintained. This provides space for observations not captured on data sheets and allows for sketches, diagrams, or any other helpful notes. It serves as a backup and allows us to annotate any irregularities encountered during the sampling process.

- Digital Data Management: We use established platforms for digital data entry, ideally ones that support real-time data entry and validation. Data is regularly backed up to prevent loss.

These comprehensive records allow for traceability, quality control, and ensure the results can be independently verified.

Q 24. Describe your experience working independently and as part of a team in the field.

I thrive in both independent and team-based field settings. The ability to adapt is key.

Independent Work: I’m adept at managing solo fieldwork, requiring self-motivation, resourcefulness, and the ability to troubleshoot problems independently. For example, during a remote water quality assessment project, I was responsible for setting up and operating equipment, collecting samples, and maintaining the chain of custody – all without direct supervision.

Teamwork: Collaboration is essential for efficient and safe fieldwork. I effectively communicate with team members, contribute my expertise, and readily take on assigned roles. A recent project involving soil sampling required coordination amongst several team members, managing logistics, equipment sharing, and ensuring everyone adhered to safety protocols. My role as lead sampler ensured we completed the project on time and to a high standard.

Ultimately, my strength lies in adaptability and effective communication, allowing me to contribute significantly whether working independently or collaboratively.

Q 25. What are your strategies for managing time effectively during field work?

Effective time management in fieldwork is crucial for project success. It often involves juggling unexpected challenges with tight deadlines.

- Detailed Planning: Before fieldwork, I meticulously plan the tasks, allocating specific timeframes for each activity. This includes travel time, sampling, data recording, equipment setup, and potential setbacks.

- Prioritization: I prioritize tasks based on their importance and urgency. This prevents us from wasting time on less critical activities, ensuring we meet critical deadlines.

- Efficient Logistics: We optimize routes to minimize travel time. We also pre-stage equipment, reduce unnecessary steps, and use technology like GPS to improve location accuracy.

- Flexibility: Fieldwork is unpredictable; unexpected challenges might arise. It’s important to have contingency plans and be flexible to adapt to unexpected events without compromising project goals.

- Regular Check-ins: Regular communication with team members ensures everyone stays on schedule and any issues are addressed promptly.

By adopting these strategies, I ensure projects stay on track, delivering high-quality results within the allocated time.

Q 26. How do you adapt your data collection methods to different environmental conditions?

Adaptability is key when facing variable environmental conditions. My approach involves selecting appropriate methods and equipment for each situation.

- Equipment Selection: Different environments demand different equipment. For instance, in harsh weather conditions, rugged, waterproof equipment is essential. For remote locations, portable and lightweight equipment becomes crucial.

- Sampling Techniques: Adapting sampling techniques is vital. In dense vegetation, modified sampling procedures might be needed. For underwater sampling, specialized equipment and diving techniques are employed.

- Safety Protocols: Environmental conditions dictate the safety procedures. Extreme weather necessitates safety precautions such as protective gear and awareness of potential hazards.

- Data Validation: In challenging conditions, rigorous data validation is critical to account for potential errors or biases introduced by the environment.

For example, while collecting water samples in a fast-flowing river, I’d use a specialized sampler to obtain representative samples, accounting for the current’s strength and ensuring accurate measurements despite the difficult conditions.

Q 27. How do you handle unexpected events or problems that arise during data collection?

Unexpected events are commonplace in fieldwork. A calm, systematic approach is essential.

- Risk Assessment: Before fieldwork, potential problems are anticipated and mitigation strategies are planned. This involves assessing environmental risks (weather, terrain), equipment failures, and logistical challenges.

- Contingency Plans: Backup plans address the most likely problems, for instance carrying backup equipment or having alternative sampling methods ready if the primary equipment fails.

- Problem-Solving: When unexpected issues arise, I use a structured approach: identify the problem, assess the impact, brainstorm solutions, select the best option, and implement it. Documentation of the problem, solution, and its impact on the data is essential.

- Communication: Open communication with the team and supervisors is crucial for effective problem-solving. This prevents misunderstandings and helps in seeking support when needed.

For instance, if a critical piece of equipment malfunctions during a survey, I would immediately contact support, implement the contingency plan (using a backup instrument or adjusting the sampling strategy), document the event, and assess its potential impact on the overall data quality.

Q 28. Describe a time you encountered a challenge during field data collection and how you overcame it.

During a remote ecological survey in a mountainous region, we encountered unexpectedly heavy snowfall, delaying our access to a crucial study site. This threatened to jeopardize the project timeline and data collection goals.

To overcome this challenge, we quickly adapted our approach. First, we communicated the situation to our supervisors and requested a deadline extension. Second, we used satellite imagery and available weather forecasts to identify potential alternative access routes. Third, we contacted local guides to draw on their knowledge of the region and assess safe alternative access points. Finally, we adjusted our sampling design to collect data at the accessible sites first while access to the remaining site was being resolved.

Although the initial setback was significant, by implementing these quick and adaptable measures, we managed to minimize the overall impact on the project. We successfully completed the survey, albeit with a revised timeline, ensuring the research data remained reliable and comprehensive.

Key Topics to Learn for Sampling and Field Data Collection Interview

- Sampling Techniques: Understanding different sampling methods (random, stratified, systematic, cluster) and their appropriate applications. Knowing the strengths and weaknesses of each method is crucial.

- Data Collection Methods: Mastering various data collection techniques, including surveys (online, paper, telephone), interviews (structured, semi-structured, unstructured), observations, and the use of specialized equipment. Be prepared to discuss the pros and cons of each.

- Sample Size Determination: Understanding the factors influencing sample size calculation (e.g., desired precision, confidence level, population variability) and applying appropriate statistical methods.

- Data Quality Control: Discussing methods for ensuring data accuracy, completeness, and consistency throughout the collection process. This includes data validation, cleaning, and error handling.

- Fieldwork Management: Demonstrating knowledge of planning, executing, and managing field data collection projects, including team management, logistical considerations, and budget control.

- Data Security and Confidentiality: Understanding ethical considerations and best practices for protecting sensitive data collected in the field.

- Data Analysis Fundamentals: While detailed analysis might not be the focus, a basic understanding of descriptive statistics and data visualization techniques relevant to your collected data is beneficial.

- Problem-Solving in the Field: Prepare examples demonstrating your ability to troubleshoot unexpected challenges during data collection (e.g., equipment malfunction, respondent unavailability, unexpected weather conditions).
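For the Sample Size Determination topic above, it can help to have one worked calculation ready. The sketch below uses Cochran's formula for estimating a proportion, with an optional finite population correction. It is one common approach, not the only one, and the function name `sample_size` and its parameters are assumptions made for this example.

```python
import math
from statistics import NormalDist
from typing import Optional


def sample_size(margin_of_error: float,
                confidence: float = 0.95,
                proportion: float = 0.5,
                population: Optional[int] = None) -> int:
    """Sample size needed to estimate a proportion (Cochran's formula)."""
    # Two-sided critical value, e.g. about 1.96 for 95% confidence.
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    # p * (1 - p) peaks at p = 0.5, which makes 0.5 the conservative default.
    n0 = (z ** 2) * proportion * (1 - proportion) / margin_of_error ** 2
    if population is not None:
        # Finite population correction for a known, limited population.
        n0 = n0 / (1 + (n0 - 1) / population)
    # Round up, since a fractional respondent is not possible.
    return math.ceil(n0)


if __name__ == "__main__":
    print(sample_size(0.05))                   # 385
    print(sample_size(0.05, population=2000))  # 323
```

The example shows how the three factors interact: a tighter margin of error or a higher confidence level raises the required sample size, while a small known population lowers it.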

Next Steps

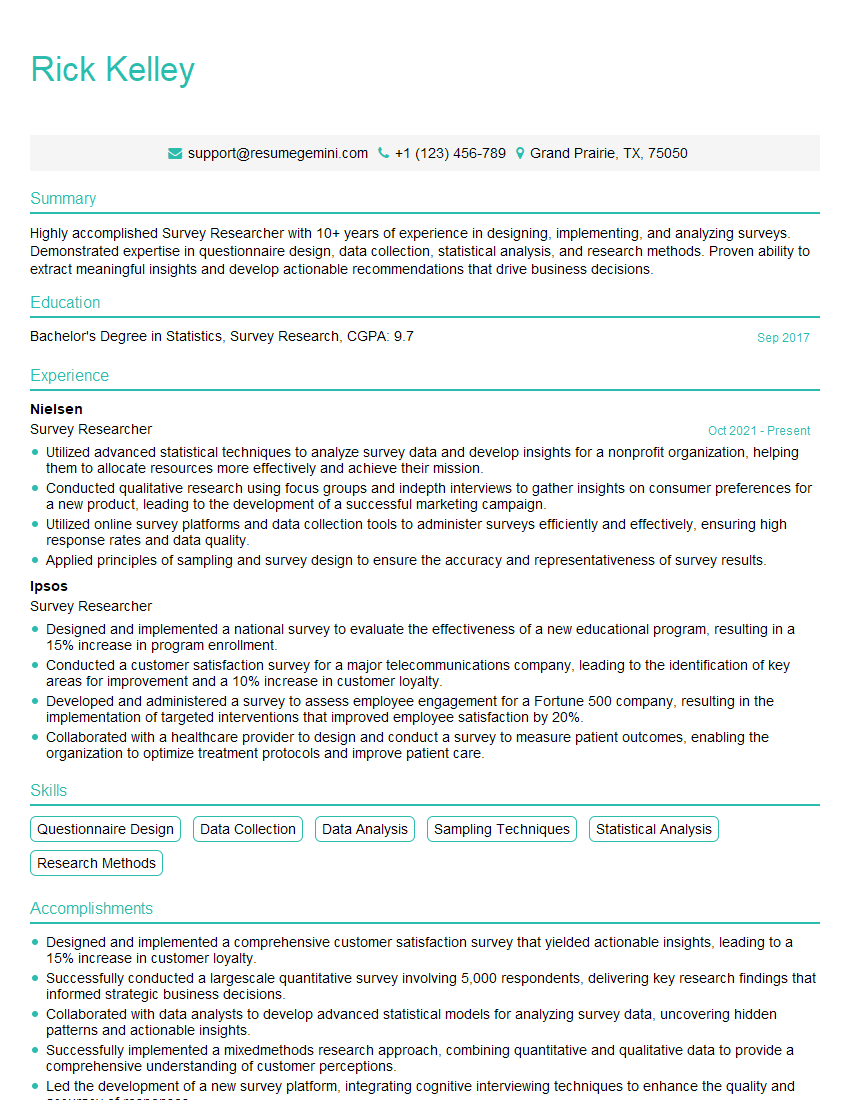

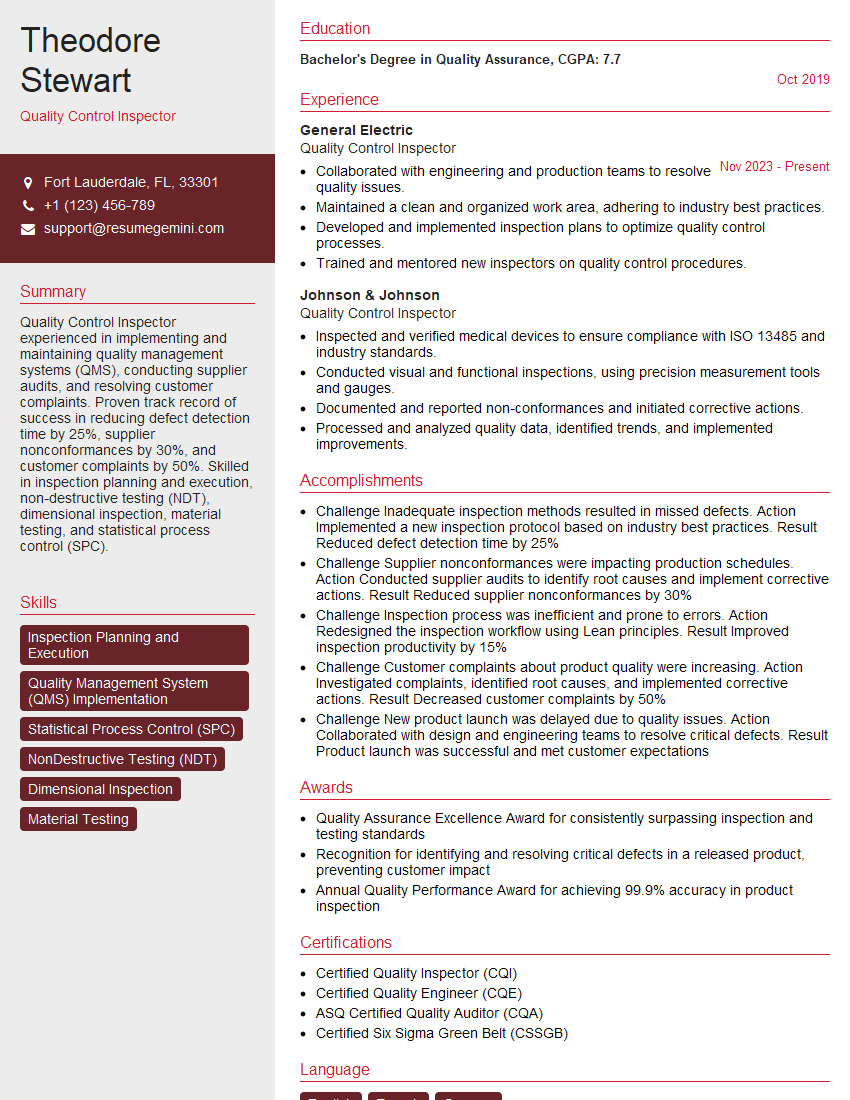

Mastering Sampling and Field Data Collection is key to unlocking exciting career opportunities in research, market analysis, environmental science, and many other fields. A strong understanding of these techniques will significantly enhance your job prospects and allow you to contribute effectively to data-driven decision-making. To maximize your chances of success, it’s vital to present your skills and experience through a well-crafted, ATS-friendly resume. ResumeGemini is a trusted resource to help you build a professional resume that highlights your key qualifications. Examples of resumes tailored to Sampling and Field Data Collection are available to guide you.
