Are you ready to stand out in your next interview? Understanding and preparing for Well Data Analysis interview questions is a game-changer. In this blog, we’ve compiled key questions and expert advice to help you showcase your skills with confidence and precision. Let’s get started on your journey to acing the interview.

Questions Asked in Well Data Analysis Interview

Q 1. Explain the difference between static and dynamic well data.

The key difference between static and dynamic well data lies in the time aspect. Static well data represents the reservoir’s properties under static conditions, essentially when the well isn’t actively producing. This data provides a snapshot of the reservoir’s inherent characteristics. Think of it like a photograph of the reservoir. Examples include well logs (from which porosity, lithology, and fluid saturation are interpreted), core analysis data (permeability, porosity, fluid saturation), and pressure-volume-temperature (PVT) data. This data helps us understand the reservoir’s potential and structure.

Dynamic well data, on the other hand, captures reservoir behavior during production. This is like a movie showing the reservoir’s behavior over time. It reflects how the reservoir responds to production, including pressure changes, fluid flow rates, and temperature variations. Examples include production logging data, pressure buildup test data, and the rate and pressure histories used in rate-transient analysis. This data provides insights into the reservoir’s productivity and helps us optimize production strategies.

Q 2. Describe various types of well logs and their applications.

Well logs are instrumental in understanding subsurface formations. Various types exist, each serving a specific purpose:

- Gamma Ray (GR): Measures natural radioactivity. High GR indicates shale, low GR indicates sandstone or carbonate. It’s a fundamental log used for lithology identification and correlation between wells.

- Neutron Porosity (NPHI): Measures the hydrogen index of the formation, indicating porosity. Higher NPHI generally indicates higher porosity, although shale raises the reading and gas lowers it.

- Density (RHOB): Measures the bulk density of the formation, from which density porosity is calculated. Used in conjunction with NPHI, it provides a more accurate porosity estimate.

- Resistivity Logs (e.g., Deep Resistivity, Shallow Resistivity): Measure the electrical resistivity of the formation (the inverse of conductivity); high resistivity suggests hydrocarbons, while low resistivity suggests conductive formation water.

- Sonic Logs: Measure the transit time of sound waves through the formation, related to porosity and lithology.

- Caliper Log: Measures the diameter of the borehole. Helps identify borehole washouts and mudcake buildup, supports borehole corrections to other logs, and is used to estimate cement volumes.
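To show how GR and density/neutron readings turn into interpreted properties, the sketch below computes a linear gamma-ray shale index and density porosity, then averages density and neutron porosity. It is a minimal sketch, assuming a quartz matrix density of 2.65 g/cc, a fluid density of 1.0 g/cc, hypothetical clean and shale GR baselines, and illustrative log values. The function names are hypothetical, and the simple average is only one of several quick-look neutron-density combinations.

```python
import numpy as np

def shale_index(gr, gr_clean, gr_shale):
    """Linear gamma-ray index: 0 in clean rock, 1 in shale (clipped to [0, 1])."""
    igr = (np.asarray(gr, dtype=float) - gr_clean) / (gr_shale - gr_clean)
    return np.clip(igr, 0.0, 1.0)

def density_porosity(rhob, rho_matrix=2.65, rho_fluid=1.0):
    """Density porosity from bulk density (g/cc); defaults assume quartz matrix and water."""
    return (rho_matrix - np.asarray(rhob, dtype=float)) / (rho_matrix - rho_fluid)

def neutron_density_porosity(nphi, dphi):
    """Arithmetic average of neutron and density porosity (a simple quick-look combination)."""
    return (np.asarray(nphi, dtype=float) + np.asarray(dphi, dtype=float)) / 2.0

# Illustrative readings at three depths: clean sand, shaly sand, shale
gr = np.array([25.0, 75.0, 115.0])     # API
rhob = np.array([2.31, 2.45, 2.55])    # g/cc
nphi = np.array([0.22, 0.27, 0.33])    # v/v

igr = shale_index(gr, gr_clean=20.0, gr_shale=120.0)
dphi = density_porosity(rhob)
phi_nd = neutron_density_porosity(nphi, dphi)
print(igr.round(3), dphi.round(3), phi_nd.round(3))
```

In the shale-like third sample, NPHI sits well above the density porosity. That separation is the usual shale signature, and in gas zones it reverses.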

Applications: These logs collectively provide a detailed picture of the reservoir. They’re crucial for:

- Reservoir Characterization: Determining lithology, porosity, permeability, and fluid saturation.

- Hydrocarbon Identification: Distinguishing between oil, gas, and water zones.

- Well Completion Design: Optimizing well placement and completion strategies.

- Reservoir Simulation: Input data for reservoir models to predict future production.

Q 3. How do you identify and handle outliers in well data?

Outliers in well data can significantly impact analysis and interpretation. Identification typically involves a combination of visual inspection and statistical methods.

Visual Inspection: Plotting the data (e.g., on a log-log plot) often reveals outliers as points significantly deviating from the overall trend.

Statistical Methods: Box plots and z-score analysis can identify outliers quantitatively, while scatter plots with trend lines show points that depart from an expected relationship. The z-score, for example, measures how many standard deviations a data point lies from the mean. Data points whose absolute z-score exceeds a chosen threshold (e.g., 3) are often flagged as outliers.
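The z-score rule and the box-plot (interquartile range) rule can be sketched as follows. This is a minimal sketch on synthetic porosity data with one injected spike. The thresholds (3 standard deviations, 1.5 × IQR) are conventional defaults, not field-specific values. Because the z-score relies on the mean and standard deviation, which outliers themselves inflate, the IQR rule is often the more robust screen.

```python
import numpy as np

def zscore_outliers(values, threshold=3.0):
    """Flag points whose absolute z-score exceeds `threshold`."""
    x = np.asarray(values, dtype=float)
    z = (x - x.mean()) / x.std()
    return np.abs(z) > threshold

def iqr_outliers(values, k=1.5):
    """Flag points outside the box-plot whiskers [Q1 - k*IQR, Q3 + k*IQR]."""
    x = np.asarray(values, dtype=float)
    q1, q3 = np.percentile(x, [25, 75])
    iqr = q3 - q1
    return (x < q1 - k * iqr) | (x > q3 + k * iqr)

# Synthetic porosity log (v/v) with one spurious spike, e.g. from a bad sensor reading
rng = np.random.default_rng(0)
porosity = rng.normal(0.20, 0.02, size=200)
porosity[120] = 0.90

z_flags = zscore_outliers(porosity)
iqr_flags = iqr_outliers(porosity)
print("z-score flags:", np.flatnonzero(z_flags))
print("IQR flags:", np.flatnonzero(iqr_flags))
```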

Handling Outliers: The approach depends on the cause. If the outlier stems from a genuine reservoir heterogeneity, it should be retained. However, if it’s due to measurement errors (e.g., bad sensor readings, equipment malfunction), it can be addressed through several approaches:

- Removal: Outliers can be removed if they are demonstrably erroneous.

- Winsorizing or Trimming: Replacing outliers with less extreme values (Winsorizing) or removing a percentage of the most extreme values (Trimming).

- Transformation: Applying a mathematical transformation (e.g., log transformation) can sometimes mitigate the effect of outliers.
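The Winsorizing, trimming, and transformation options can be sketched with NumPy. The 5th/95th percentile cut-offs in the example are illustrative assumptions. In practice, choose and document them per dataset.

```python
import numpy as np

def winsorize(values, lower_pct=1.0, upper_pct=99.0):
    """Clip values to the given percentiles instead of discarding them."""
    x = np.asarray(values, dtype=float)
    lo, hi = np.percentile(x, [lower_pct, upper_pct])
    return np.clip(x, lo, hi)

def trim(values, lower_pct=1.0, upper_pct=99.0):
    """Drop values outside the given percentiles."""
    x = np.asarray(values, dtype=float)
    lo, hi = np.percentile(x, [lower_pct, upper_pct])
    return x[(x >= lo) & (x <= hi)]

values = np.arange(101, dtype=float)
winsorized = winsorize(values, 5, 95)
trimmed = trim(values, 5, 95)
print(winsorized.min(), winsorized.max(), winsorized.size)
print(trimmed.min(), trimmed.max(), trimmed.size)

# Permeability often spans orders of magnitude; a log10 transform compresses the tail
perm_md = np.array([0.5, 3.0, 12.0, 40.0, 2500.0])
log_perm = np.log10(perm_md)
```

Winsorizing keeps the sample count, which matters when the curve must stay aligned with other depth-indexed curves. Trimming shortens the array.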

To maintain data integrity and transparency, it’s crucial to document why each outlier was handled and which method was used.

Q 4. What are the common challenges in integrating well data from different sources?

Integrating well data from diverse sources presents numerous challenges. Common issues include:

- Inconsistent Units: Data might be recorded using different units (e.g., metric vs. imperial), requiring careful conversion.

- Different Data Formats: Data may be stored in various formats (LAS, CSV, proprietary formats), necessitating data conversion and standardization.

- Missing Data: Incomplete datasets can hinder analysis. Interpolation or imputation techniques may be necessary to fill gaps, but it’s essential to document these actions.

- Data Quality Issues: Errors, noise, or inconsistencies may be present in the data from various sources, requiring thorough quality control checks.

- Depth Discrepancies: Inconsistent depth references (e.g., measured depth vs. true vertical depth, or different datums such as kelly bushing vs. ground level) can introduce errors when correlating data from different wells.
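Unit and depth-datum standardization can be sketched as follows. This minimal sketch assumes a vertical well (so measured depth equals true vertical depth), depths recorded in feet below kelly bushing, and a known kelly-bushing elevation above sea level. The function names and sample values are hypothetical. Deviated wells would also need a directional survey to convert measured depth to true vertical depth.

```python
import numpy as np

FT_TO_M = 0.3048              # international foot, exact
PSI_TO_KPA = 6.894757293168   # 1 psi expressed in kPa

def depth_ft_below_kb_to_m_subsea(depth_ft, kb_elevation_m):
    """Convert depth (ft below kelly bushing) to metres below sea level.

    Assumes a vertical well, so measured depth equals true vertical depth.
    """
    return np.asarray(depth_ft, dtype=float) * FT_TO_M - kb_elevation_m

def resample_to_grid(depth_m, values, grid_m):
    """Linearly resample a curve onto a common depth grid; NaN outside the logged interval."""
    return np.interp(grid_m, depth_m, values, left=np.nan, right=np.nan)

depth_ft = np.array([1000.0, 1010.0, 1020.0])
gr_api = np.array([10.0, 20.0, 30.0])
depth_ss = depth_ft_below_kb_to_m_subsea(depth_ft, kb_elevation_m=4.8)

common_grid = np.array([301.524, 304.572, 310.0])   # metres subsea
gr_on_grid = resample_to_grid(depth_ss, gr_api, common_grid)

pressure_kpa = 3000.0 * PSI_TO_KPA   # convert a psi reading to kPa
```

Returning NaN outside the logged interval, instead of letting `np.interp` repeat the end values, keeps gaps visible downstream.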

Effective integration requires a robust workflow that includes data cleaning, standardization, and validation. Using well-established data management practices and employing specialized software capable of handling various data formats is essential.

Q 5. Explain your experience with well test interpretation techniques.

My experience encompasses various well test interpretation techniques, including:

- Pressure Buildup Tests: Analyzing pressure data after shutting in a well to determine reservoir properties like permeability, skin factor, and reservoir pressure.

- Drawdown Tests: Analyzing pressure data while the well is producing to assess well productivity and reservoir characteristics.

- Multi-Rate Tests: Conducting tests at varying production rates to improve the accuracy of reservoir parameter estimation.

- Pressure Interference Tests: Observing pressure changes in one well due to production in another well, used to determine reservoir connectivity and properties.

I’ve used software like Kappa and Petrel to process and interpret well test data, employing both manual and automated interpretation methods. For instance, I’ve applied type-curve matching to identify reservoir flow regimes and estimate reservoir properties. I am also experienced in using numerical modeling to simulate well test responses and better understand complex reservoir behavior.

Q 6. How do you use well data to optimize production?

Well data is crucial for production optimization. I leverage it in several ways:

- Identifying Zones of High Productivity: Analyzing production logs and well tests to pinpoint the most productive zones within the reservoir. This knowledge helps optimize completion strategies and improve overall well performance.

- Predictive Modeling: Using historical production data, integrated with reservoir properties from well logs, to predict future production and optimize production schedules.

- Water Management: Monitoring water cut (the proportion of water in produced fluids) helps in identifying water breakthrough and implementing strategies to delay or mitigate its impact on production.

- Artificial Lift Optimization: Analyzing well performance data to optimize the operation of artificial lift systems (e.g., ESPs, gas lift) for increased production efficiency.

- Reservoir Management Decisions: Integrated well data analysis helps to inform decisions regarding infill drilling, waterflooding, or other enhanced oil recovery (EOR) techniques.
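Water cut monitoring can be sketched as below. The code computes water cut from oil and water rates and reports the first period in which it exceeds a threshold. The 5% breakthrough threshold and the monthly rates are hypothetical, since operators set their own criteria.

```python
import numpy as np

def water_cut(q_oil, q_water):
    """Water cut = qw / (qo + qw); returns 0 where the total liquid rate is zero."""
    q_oil = np.asarray(q_oil, dtype=float)
    q_water = np.asarray(q_water, dtype=float)
    total = q_oil + q_water
    return np.divide(q_water, total, out=np.zeros_like(total), where=total > 0)

def breakthrough_index(wc, threshold=0.05):
    """Index of the first period whose water cut exceeds `threshold`, or None."""
    above = np.flatnonzero(np.asarray(wc) > threshold)
    return int(above[0]) if above.size else None

q_oil = [1000.0, 980.0, 950.0, 900.0, 800.0, 700.0]   # STB/d, monthly averages
q_water = [0.0, 5.0, 20.0, 100.0, 250.0, 400.0]      # STB/d, monthly averages
wc = water_cut(q_oil, q_water)
print(wc.round(3), breakthrough_index(wc))
```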

A practical example: In one project, we used production data combined with reservoir simulation to predict the optimal injection rates for a waterflood project, which significantly increased the cumulative oil production.

Q 7. Describe your experience with different well data analysis software (e.g., Petrel, Kappa, etc.).

I have extensive experience with various well data analysis software packages, including:

- Petrel: Proficient in using Petrel for data management, well log interpretation, reservoir modeling, and production analysis. I have utilized Petrel’s capabilities to build geological models, integrate well data, and simulate reservoir performance to inform production optimization strategies.

- Kappa: I am experienced in using Kappa for advanced well test analysis, including pressure transient interpretation and reservoir simulation. This software has been instrumental in characterizing complex reservoir behavior and optimizing well testing strategies.

- Other Software: I also possess working knowledge of other industry-standard software packages like IHS Kingdom and Schlumberger’s Techlog, allowing me to adapt to various data formats and analysis workflows.

My proficiency in these tools enables me to perform comprehensive well data analysis, from initial data processing and interpretation to reservoir modeling and production forecasting.

Q 8. How would you assess the health and integrity of a well using available data?

Assessing well health and integrity relies on a multifaceted approach using various data streams. We look for inconsistencies or anomalies that signal potential problems. For instance, we analyze pressure data to detect leaks or pressure drops indicating damage to the casing or formation. Production data, including fluid rates and compositions, helps us identify changes in reservoir performance that might stem from wellbore issues. Furthermore, we scrutinize logging data (such as cement bond logs, caliper logs, and temperature logs) to pinpoint potential problems with the well’s physical structure. For example, a cement bond log showing poor bonding might reveal zones with potential leak paths. Finally, we consider the history of interventions (e.g., acidizing, fracturing) and any reported operational issues to get a complete picture. Combining all this information allows us to build a comprehensive assessment and make informed decisions about well maintenance and interventions.

Example: A sudden drop in production coupled with an increase in water cut, alongside a failed pressure test, would strongly suggest a wellbore integrity problem, possibly a casing leak or a change in reservoir communication.
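The first half of that pattern, a sharp rate drop coinciding with a jump in water cut, lends itself to a simple screening rule over monthly data. This is a hypothetical heuristic, not a diagnostic. The 20% drop and 10-percentage-point water-cut thresholds are assumptions, and any flagged period would still need pressure tests and cased-hole logs to confirm a casing leak.

```python
import numpy as np

def flag_integrity_candidates(q_oil, water_cut, drop_frac=0.20, wc_jump=0.10):
    """Return indices of periods where the oil rate falls by more than `drop_frac`
    and water cut rises by more than `wc_jump`, both relative to the previous period."""
    q = np.asarray(q_oil, dtype=float)
    wc = np.asarray(water_cut, dtype=float)
    rate_drop = (q[:-1] - q[1:]) / q[:-1]
    wc_rise = wc[1:] - wc[:-1]
    return np.flatnonzero((rate_drop > drop_frac) & (wc_rise > wc_jump)) + 1

q_oil = [800.0, 790.0, 780.0, 560.0, 550.0]    # STB/d, monthly averages
water_cut = [0.10, 0.11, 0.12, 0.35, 0.36]
print(flag_integrity_candidates(q_oil, water_cut))
```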

Q 9. Explain your understanding of pressure transient analysis.

Pressure transient analysis (PTA) is a powerful technique used to characterize reservoir properties and well performance by analyzing pressure changes in the wellbore following a perturbation. This perturbation can be a production rate change (e.g., a drawdown test), a shut-in period (e.g., a buildup test), or an injection of fluid (e.g., a falloff test). By analyzing the pressure response over time, we can determine reservoir parameters like permeability (strictly, the permeability-thickness product, kh), skin factor (a measure of near-wellbore damage or stimulation), and reservoir pressure; porosity enters only through the porosity-compressibility product, which interference tests can estimate. We often use analytical models or numerical simulators to interpret the data. These models predict the pressure behavior given certain reservoir properties. Matching the model to the observed pressure data allows us to estimate those properties.

Example: A buildup test, where the well is shut in after a production period, shows a characteristic pressure increase. During radial flow, shut-in pressure plotted against the logarithm of Horner time forms a straight line whose slope is inversely proportional to the permeability-thickness product. By analyzing this buildup curve using software like Saphir or similar, we can estimate these reservoir properties.
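A Horner (semilog) buildup analysis can be sketched in oilfield units: fit the radial-flow straight line, read permeability from its slope, extrapolate to p*, and compute skin. This is a minimal sketch, assuming infinite-acting radial flow in a homogeneous reservoir, a single constant-rate period before shut-in, and synthetic data generated from hypothetical properties. It uses the standard constants 162.6 and 3.23. The skin formula neglects the small log10((tp + 1)/tp) correction, which is reasonable when tp ≫ 1 hr.

```python
import numpy as np

def horner_analysis(dt_hr, pws_psi, tp_hr, q_stb_d, B_rb_stb, mu_cp, h_ft,
                    phi, ct_per_psi, rw_ft, pwf_psi, min_dt_hr=1.0):
    """Semilog (Horner) buildup analysis in oilfield units.

    Fits pws vs log10((tp + dt) / dt) over points with dt >= min_dt_hr,
    which are assumed to lie on the radial-flow straight line.
    """
    dt = np.asarray(dt_hr, dtype=float)
    pws = np.asarray(pws_psi, dtype=float)
    late = dt >= min_dt_hr
    x = np.log10((tp_hr + dt[late]) / dt[late])
    slope, p_star = np.polyfit(x, pws[late], 1)     # intercept at Horner time 1 is p*
    m = -slope                                      # psi per log cycle (positive)
    k_md = 162.6 * q_stb_d * B_rb_stb * mu_cp / (m * h_ft)
    p1hr = p_star + slope * np.log10(tp_hr + 1.0)   # straight-line pressure at dt = 1 hr
    skin = 1.151 * ((p1hr - pwf_psi) / m
                    - np.log10(k_md / (phi * mu_cp * ct_per_psi * rw_ft ** 2))
                    + 3.23)
    return {"m": m, "k_md": k_md, "p_star": p_star, "skin": skin}

# Synthetic buildup generated from known (hypothetical) properties
q, B, mu, h = 500.0, 1.2, 1.5, 50.0
k_true, s_true, p_i, tp = 25.0, 3.0, 4000.0, 1000.0
phi, ct, rw = 0.20, 1e-5, 0.35
m_true = 162.6 * q * B * mu / (k_true * h)
pwf = p_i - m_true * (np.log10(tp) + np.log10(k_true / (phi * mu * ct * rw ** 2))
                      - 3.23 + 0.869 * s_true)
dt = np.logspace(-2, 2, 40)
pws = p_i - m_true * np.log10((tp + dt) / dt)

result = horner_analysis(dt, pws, tp, q, B, mu, h, phi, ct, rw, pwf)
print({key: round(float(val), 2) for key, val in result.items()})
```

With real data, early points distorted by wellbore storage must be excluded. Here, `min_dt_hr` is an assumed stand-in for picking the start of radial flow from a derivative plot. Also, p* equals initial pressure only for an infinite-acting reservoir.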

Q 10. Describe the different types of well completions and their impact on production data.

Well completions are crucial because they determine how effectively hydrocarbons flow from the reservoir to the wellbore. Different completion types significantly impact production data.

- Openhole completion: The simplest type, where the wellbore is left open in the reservoir section, offering high productivity but potentially leading to instability and sand production. Production data from openhole completions might show high initial rates but may decline faster due to issues like sanding.

- Cased and perforated completion: The wellbore is cased (protected with steel pipe) and then perforated to allow fluid flow. This provides greater wellbore stability. Production data typically shows a more stable production profile than an openhole completion.

- Gravel-pack completion: Gravel is packed between a sand screen and the formation (or the perforated casing) to prevent sand production. Production data typically shows more sustained rates because formation sand is held back, although the pack can add some near-wellbore skin.

- Horizontal completion: A horizontally drilled wellbore is completed in the reservoir, substantially increasing the contact area with the reservoir. Production data typically shows higher production rates than comparable vertical wells because of the increased contact area.

- Multi-stage fractured completion: Fractures are created at multiple points along the wellbore, forming high-conductivity flow paths that increase the effective contact with the reservoir. Production data is typically characterized by high initial rates after fracturing, often followed by a steep early decline.

Choosing the right completion is critical for maximizing production and minimizing operational issues. The impact on production data is considerable, with different completion types resulting in distinct production profiles. We make the choice based on reservoir characteristics, such as rock strength and permeability, and expected production rates.

Q 11. How do you identify and interpret flow regimes from well test data?

Identifying flow regimes from well test data involves analyzing the pressure change and its derivative. Different flow regimes exhibit unique characteristics on these plots. We typically look at log-log plots of the pressure change and the pressure derivative (the Bourdet derivative, t·dΔp/dt) versus elapsed time.

- Radial Flow: Characterized by a flat (zero-slope) derivative, indicating infinite-acting radial flow into the well. This is a transient regime, not steady-state flow.

- Linear Flow: Indicated by a half-slope derivative line.

- Bilinear Flow: Shows a one-quarter slope derivative line.

- Transition Flow: Derivative curves display transitions between different flow regimes.

By analyzing these derivative curves and identifying these distinct slopes and their transition points, we can understand the dominant flow mechanisms influencing the well’s productivity. This understanding is crucial for reservoir management and production optimization. The presence of specific flow regimes can highlight the need for completion optimization or secondary recovery techniques. For instance, identifying linear flow could indicate a hydraulically fractured well or a channel-shaped reservoir, which would inform future completion and development decisions.
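The slope diagnostics can be sketched on synthetic, noise-free responses. The code computes the log-time derivative for idealized radial, linear, and bilinear responses, then measures its slope on a log-log plot. This is a minimal sketch. Real buildups use superposition time and a smoothed (Bourdet) derivative, and the plain finite differences here are an assumption that only works on clean data.

```python
import numpy as np

def log_derivative(t, dp):
    """Pressure derivative with respect to ln(t), i.e. t * d(dp)/dt (no smoothing)."""
    return np.gradient(dp, np.log(t))

def loglog_slope(t, y):
    """Local slope of y on a log-log plot: d ln(y) / d ln(t)."""
    return np.gradient(np.log(y), np.log(t))

t = np.logspace(-1, 2, 60)   # elapsed time, hours
synthetic = {
    "radial (flat derivative)": 50.0 * np.log(t) + 200.0,
    "linear (half slope)": 30.0 * np.sqrt(t),
    "bilinear (quarter slope)": 30.0 * t ** 0.25,
}

slopes = {}
for name, dp in synthetic.items():
    deriv = log_derivative(t, dp)
    slopes[name] = float(np.median(loglog_slope(t, deriv)))
    print(f"{name}: derivative slope ~ {slopes[name]:.2f}")
```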

Q 12. What are the key parameters you would consider when analyzing well performance?

When analyzing well performance, I consider several key parameters:

- Production Rates: Oil, gas, and water rates over time provide a fundamental understanding of well productivity and potential decline.

- Pressure Data: Shut-in bottomhole pressure (BHP) approximates reservoir pressure and tracks depletion, while flowing BHP shows the drawdown needed to sustain the rate; an unusually large drawdown can point to formation damage.

- Fluid Properties: Oil and gas gravity, viscosity, and water cut influence flow rates and production efficiency.

- Wellbore Conditions: Temperature, pressure gradients, and flow regimes in the wellbore influence production.

- Reservoir Properties: Porosity, permeability, and fluid saturation estimated through pressure transient analysis and other methods are essential.

- Completion Details: Type of completion, number of perforations, and stimulation treatments will affect production outcomes.

- Operational History: Workovers, interventions, and production downtime contribute to the well’s overall performance and must be considered.

By integrating these parameters, a comprehensive assessment of well performance can be established. This allows for effective reservoir management and enhanced oil/gas recovery.

Q 13. How do you handle missing or incomplete well data?

Handling missing or incomplete well data is a common challenge in well data analysis. My strategy involves a multi-step approach:

- Data Validation: First, I rigorously check for inconsistencies and outliers within the available data, correcting errors or inconsistencies whenever possible.

- Data Imputation: For missing values, I employ various imputation techniques. Simple methods include using the mean or median of the available data for that specific parameter. More advanced techniques, like regression imputation, can be used to predict the missing values based on the correlation with other parameters.

- Data Reconstruction: In cases with extensive missing data, it may be necessary to reconstruct the data using available similar wells, employing techniques like reservoir simulation or analogy with other wells within the same reservoir, or applying a knowledge-based approach using available information on the reservoir.

- Sensitivity Analysis: After imputation, I perform a sensitivity analysis to determine how the imputation method affects the results of our analysis. This allows me to assess the uncertainty introduced by missing data.
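The two imputation routes can be sketched as follows, assuming depth-indexed curves with NaN marking gaps. Linear interpolation in depth handles short gaps, and regression imputation fills values from a correlated curve. The sonic-porosity relationship in the example is synthetic and perfectly linear. Real relationships are noisier and should be validated before use.

```python
import numpy as np

def interpolate_gaps(depth, values):
    """Fill NaN gaps by linear interpolation in depth; gaps at either end stay NaN."""
    depth = np.asarray(depth, dtype=float)
    v = np.asarray(values, dtype=float).copy()
    known = ~np.isnan(v)
    v[~known] = np.interp(depth[~known], depth[known], v[known], left=np.nan, right=np.nan)
    return v

def regression_impute(target, predictor):
    """Fill NaNs in `target` from a least-squares line fitted against a correlated `predictor`."""
    y = np.asarray(target, dtype=float).copy()
    x = np.asarray(predictor, dtype=float)
    train = ~np.isnan(y) & ~np.isnan(x)
    slope, intercept = np.polyfit(x[train], y[train], 1)
    fill = np.isnan(y) & ~np.isnan(x)
    y[fill] = slope * x[fill] + intercept
    return y

depth_m = np.array([1000.0, 1001.0, 1002.0, 1003.0, 1004.0])
phi_gappy = np.array([0.20, np.nan, 0.24, np.nan, 0.22])
phi_interp = interpolate_gaps(depth_m, phi_gappy)

dt_us_ft = np.array([80.0, 90.0, 100.0, 110.0, 120.0])      # sonic transit time (synthetic)
phi_sparse = np.array([0.11, np.nan, 0.15, np.nan, 0.19])   # exactly linear in dt here
phi_regressed = regression_impute(phi_sparse, dt_us_ft)
```

For the sensitivity step, one option is to run the downstream analysis once with each filled curve and compare the results.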

The choice of imputation method depends on the nature of the missing data and its potential impact on the results. It is crucial to always document the methods used and the uncertainties introduced by incomplete data.

Q 14. Explain your experience with data visualization techniques for well data.

Effective data visualization is crucial for understanding complex well data. I am proficient in using various tools and techniques to create informative and insightful visualizations. This includes:

- Production profiles: Plotting production rates (oil, gas, water) over time to show production trends and decline curves.

- Pressure-time plots: Visualizing pressure changes during well tests for pressure transient analysis, using both linear and log-log scales for various flow regimes.

- Log plots: Using various logs (gamma ray, resistivity, porosity, etc.) to visualize reservoir properties and wellbore conditions.

- Cross-plots: Creating plots to show the relationships between different parameters, such as water cut vs. oil production, or permeability vs. porosity.

- 3D visualizations: Employing 3D modeling software to represent reservoir properties and flow patterns.

- Interactive dashboards: Developing dashboards that allow for easy exploration and filtering of large datasets to facilitate effective decision-making.

The selection of visualization techniques is dictated by the nature of the data and the insights sought. The goal is always clarity and readily understandable insights. A well-designed visualization can uncover patterns and trends that might be missed by simply looking at tables of numbers.
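A production profile plot can be sketched with Matplotlib. Rates go on a semi-log axis, where exponential decline appears as a straight line. This is a minimal sketch, assuming Matplotlib is available and the figure is rendered off-screen. The rates and the constant gas-oil ratio are synthetic.

```python
import io

import matplotlib
matplotlib.use("Agg")  # off-screen rendering; no display needed
import matplotlib.pyplot as plt
import numpy as np

def plot_production_profile(months, q_oil, q_gas, q_water):
    """Plot oil, gas, and water rates against time on a semi-log axis."""
    fig, ax = plt.subplots(figsize=(8, 4))
    ax.semilogy(months, q_oil, color="green", label="Oil (STB/d)")
    ax.semilogy(months, q_gas, color="red", label="Gas (Mscf/d)")
    ax.semilogy(months, q_water, color="blue", label="Water (STB/d)")
    ax.set_xlabel("Time on production (months)")
    ax.set_ylabel("Rate")
    ax.grid(True, which="both", alpha=0.3)
    ax.legend()
    fig.tight_layout()
    return fig

months = np.arange(36)
q_oil = 900.0 * np.exp(-0.04 * months)   # synthetic exponential decline
q_gas = 1.2 * q_oil                      # assumes a constant GOR of 1.2 Mscf/STB
q_water = 20.0 + 8.0 * months

fig = plot_production_profile(months, q_oil, q_gas, q_water)
buffer = io.BytesIO()
fig.savefig(buffer, format="png")
plt.close(fig)
```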

Q 15. How do you use well data to predict future well performance?

Predicting future well performance relies heavily on analyzing historical well data. We use various techniques to extrapolate past trends into the future. This involves understanding the underlying physics of reservoir depletion and fluid flow. A common approach is decline curve analysis (DCA), which we’ll discuss later. Beyond DCA, we also incorporate reservoir simulation models, which are complex numerical models that simulate fluid flow within the reservoir based on geological data and well testing information. These models can be calibrated with historical production data to predict future performance under different operating scenarios, such as changes in production rates or well interventions.

For example, if we observe a consistent exponential decline in oil production from a well, we can use DCA to project this decline into the future. However, we must account for potential changes, such as water or gas coning, which can alter the decline pattern. Reservoir simulation allows us to incorporate these complexities and create more accurate predictions.
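Projecting an exponential decline can be sketched as a straight-line fit of ln(q) against time, since q = qi·exp(−D·t) is linear in log space. This is a minimal sketch on a synthetic, noise-free history. It assumes all rates are positive, because zero or shut-in months must be removed before taking logarithms.

```python
import numpy as np

def fit_exponential_decline(t, q):
    """Fit q = qi * exp(-D * t) by least squares on ln(q); rates must be positive."""
    slope, intercept = np.polyfit(np.asarray(t, dtype=float),
                                  np.log(np.asarray(q, dtype=float)), 1)
    return float(np.exp(intercept)), float(-slope)

def forecast_exponential(qi, D, t):
    """Rate at time(s) t for an exponential decline."""
    return qi * np.exp(-D * np.asarray(t, dtype=float))

months = np.arange(25)
q_hist = 800.0 * np.exp(-0.05 * months)   # synthetic history, STB/d
qi_fit, D_fit = fit_exponential_decline(months, q_hist)
q_month_36 = forecast_exponential(qi_fit, D_fit, 36)
```

Fitting in log space weights relative rather than absolute errors, so late low-rate months count as much as early high-rate ones.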

Another approach involves using machine learning techniques, such as neural networks or support vector machines, which can identify complex patterns and relationships in the data that might be missed by traditional methods. These models are particularly useful when dealing with large, noisy datasets common in well data analysis.

Q 16. Describe your understanding of decline curve analysis.

Decline curve analysis (DCA) is a powerful technique used to forecast future well production rates based on historical production data. It’s essentially fitting a mathematical model to the historical production data to extrapolate future performance. Several decline models exist, including exponential, hyperbolic, and harmonic decline curves. The choice of model depends on the reservoir characteristics and the production history of the well.

The hyperbolic decline model is the most versatile and commonly used because a single exponent, b, controls the shape of the decline. Exponential decline (the limit as b approaches 0) and harmonic decline (b = 1) are special cases. The Arps hyperbolic model is defined by the initial production rate (q_i), the initial decline rate (D_i), and the hyperbolic exponent (b):

$$q(t) = \frac{q_i}{\left(1 + b D_i t\right)^{1/b}}$$

where q(t) is the production rate at time t, and D_i is expressed in reciprocal time units consistent with t (e.g., 1/month when t is in months).

Fitting these parameters to historical data allows us to predict future production. However, it’s crucial to remember that DCA is an empirical method; its accuracy depends on the quality of the data and the appropriateness of the chosen decline model. Significant changes in reservoir conditions or well operations can invalidate the prediction.
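Fitting the Arps hyperbolic model, q(t) = qi / (1 + b·Di·t)^(1/b), can be sketched without a nonlinear solver. For a fixed b, q^(−b) is linear in t, so each candidate b on a grid reduces to a straight-line fit, and the b with the smallest rate residual wins. This is a minimal sketch. The b grid (0.05 to 1.5) is an assumption, the history is synthetic and noise-free, and a nonlinear least-squares routine (e.g., SciPy's `curve_fit`) is a common alternative.

```python
import numpy as np

def arps_hyperbolic(t, qi, Di, b):
    """Arps hyperbolic rate: qi / (1 + b * Di * t) ** (1 / b)."""
    return qi / (1.0 + b * Di * np.asarray(t, dtype=float)) ** (1.0 / b)

def fit_arps_hyperbolic(t, q, b_grid=None):
    """Grid search over b; for each b, q**(-b) = qi**(-b) + qi**(-b) * b * Di * t is linear in t."""
    t = np.asarray(t, dtype=float)
    q = np.asarray(q, dtype=float)
    if b_grid is None:
        b_grid = np.round(np.arange(0.05, 1.51, 0.05), 2)
    best = None
    for b in b_grid:
        s, a = np.polyfit(t, q ** (-b), 1)   # slope s = a*b*Di, intercept a = qi**(-b)
        if a <= 0 or s <= 0:
            continue                          # not a physically valid decline for this b
        qi = a ** (-1.0 / b)
        Di = s / (a * b)
        sse = np.sum((q - arps_hyperbolic(t, qi, Di, b)) ** 2)
        if best is None or sse < best[0]:
            best = (sse, qi, Di, b)
    if best is None:
        raise ValueError("no valid hyperbolic fit on the b grid")
    return float(best[1]), float(best[2]), float(best[3])

months = np.arange(37, dtype=float)
q_hist = arps_hyperbolic(months, qi=1000.0, Di=0.10, b=0.5)   # synthetic, noise-free
qi_fit, Di_fit, b_fit = fit_arps_hyperbolic(months, q_hist)
q_forecast = arps_hyperbolic(np.arange(37, 61, dtype=float), qi_fit, Di_fit, b_fit)
```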

Q 17. How do you assess the impact of reservoir heterogeneity on well performance?

Reservoir heterogeneity, the variation in reservoir properties (such as porosity, permeability, and saturation) across the reservoir, significantly impacts well performance. Heterogeneity can lead to uneven fluid flow, reduced sweep efficiency, and ultimately lower oil recovery.

We assess this impact using several methods. First, we analyze geological data such as seismic surveys, well logs (e.g., porosity logs and log-derived permeability estimates), and core samples to understand the spatial distribution of reservoir properties. This allows us to build a geological model of the reservoir.
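One common way to condense core permeability variation into a single number is the Dykstra-Parsons coefficient, V = (k50 − k84.1)/k50, which is 0 for a homogeneous set and approaches 1 as heterogeneity increases. This measure is our addition, not part of the workflow described above. The sketch estimates V directly from sample percentiles instead of the traditional log-probability plot fit, and the sample data are synthetic.

```python
import numpy as np

def dykstra_parsons(perm_md):
    """Dykstra-Parsons coefficient V = (k50 - k84.1) / k50 from permeability samples.

    k84.1 is the permeability exceeded by 84.1% of samples, i.e. the 15.9th percentile.
    """
    k = np.asarray(perm_md, dtype=float)
    k50 = np.percentile(k, 50.0)
    k841 = np.percentile(k, 15.9)
    return float((k50 - k841) / k50)

rng = np.random.default_rng(1)
homogeneous = np.full(50, 100.0)
heterogeneous = rng.lognormal(mean=np.log(100.0), sigma=1.0, size=10_000)
print(dykstra_parsons(homogeneous), dykstra_parsons(heterogeneous))
```

For log-normally distributed permeability, V is close to 1 − exp(−σ), where σ is the standard deviation of ln(k).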

Secondly, we use production data from multiple wells to infer the impact of heterogeneity. For instance, if wells in the same reservoir show widely different production rates or decline curves, it suggests significant heterogeneity. Production logging tools can provide detailed information on fluid flow profiles within the wellbore, helping to pinpoint zones of higher and lower permeability.

Finally, reservoir simulation models are crucial in capturing the impact of heterogeneity. By incorporating the geological model into the simulator, we can simulate fluid flow within the heterogeneous reservoir and predict the performance of individual wells and the entire reservoir. This helps optimize well placement and completion strategies to maximize recovery.

Q 18. What are the limitations of using empirical correlations for well data analysis?

Empirical correlations, while useful for quick estimations, have limitations in well data analysis. These correlations are often derived from limited datasets and may not be applicable to all reservoirs or well types. They typically don’t account for the complexities of reservoir behavior and operational factors.

For example, a correlation developed for a specific type of reservoir rock might not accurately predict the performance of a well in a different reservoir with different rock properties. Similarly, these correlations often disregard the influence of factors such as fluid properties, wellbore geometry, and production strategies. This can lead to inaccurate predictions and potentially flawed decisions.

Methods calibrated to the well’s own history, such as decline curve analysis with appropriate model selection, or physics-based approaches such as reservoir simulation, are preferable to generic empirical correlations when accurate predictions are needed. While empirical correlations can serve as a starting point or a quick check, they should not be solely relied upon for critical decisions about well development or production management.

Q 19. Explain your experience with building and validating predictive models using well data.

I have extensive experience building and validating predictive models using well data. This involves a systematic approach starting with data preprocessing and quality control. I’ve utilized various techniques, including decline curve analysis, material balance calculations, and machine learning algorithms.

In one project, we used a neural network model to predict future oil production from a complex carbonate reservoir. We trained the model using historical production data from multiple wells, along with geological and operational data. The model significantly improved the accuracy of production forecasts compared to traditional decline curve analysis, particularly in the later stages of production. We validated the model using a hold-out dataset, ensuring its ability to generalize to unseen data.

For model validation, I typically employ techniques like cross-validation and statistical metrics such as RMSE (Root Mean Squared Error) and R-squared to quantify the model’s performance. Regular model updates are important; as more data becomes available, the models are refined and recalibrated to maintain accuracy.
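The validation metrics can be sketched with NumPy, together with a time-ordered hold-out split. This is a minimal sketch, and the split is a design choice we assume here: for production time series, training on earlier periods and testing on later ones avoids letting future data inform past predictions, which random shuffling would allow.

```python
import numpy as np

def rmse(y_true, y_pred):
    """Root mean squared error."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

def time_ordered_split(n_samples, holdout_frac=0.25):
    """Train on the earliest samples, hold out the latest ones."""
    cut = int(round(n_samples * (1.0 - holdout_frac)))
    idx = np.arange(n_samples)
    return idx[:cut], idx[cut:]

observed = [1.0, 2.0, 3.0, 4.0]
predicted = [1.0, 2.0, 3.0, 5.0]
print(rmse(observed, predicted), r_squared(observed, predicted))
train_idx, test_idx = time_ordered_split(12)
```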

Q 20. How do you ensure the quality and accuracy of well data?

Ensuring the quality and accuracy of well data is paramount. This involves a multi-faceted approach starting with data acquisition. We must ensure proper calibration and maintenance of downhole sensors and surface equipment. Regular audits of the data acquisition systems are crucial. Data validation includes checking for inconsistencies, outliers, and errors. We use automated checks and manual reviews to identify and correct these issues.
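The automated checks can start as simple per-curve counts of null and physically implausible samples. This is a minimal sketch. The plausibility limits are hypothetical and depend on the tool, units, and field. The -999.25 null marker is the value conventionally used in LAS files.

```python
import numpy as np

LAS_NULL = -999.25  # conventional LAS null value

# Hypothetical plausibility limits per curve
PLAUSIBLE_RANGES = {
    "GR": (0.0, 300.0),     # API
    "RHOB": (1.0, 3.2),     # g/cc
    "NPHI": (-0.05, 0.8),   # v/v
}

def qc_report(curves, ranges=PLAUSIBLE_RANGES, null_value=LAS_NULL):
    """Count null and out-of-range samples for each curve."""
    report = {}
    for name, values in curves.items():
        v = np.asarray(values, dtype=float)
        is_null = np.isnan(v) | (v == null_value)
        lo, hi = ranges.get(name, (-np.inf, np.inf))
        out_of_range = ~is_null & ((v < lo) | (v > hi))
        report[name] = {"null": int(is_null.sum()), "out_of_range": int(out_of_range.sum())}
    return report

curves = {
    "GR": [45.0, 60.0, LAS_NULL, 350.0],
    "RHOB": [2.30, 2.45, 0.50, 2.60],
    "NPHI": [0.20, np.nan, 0.25, 0.30],
}
report = qc_report(curves)
print(report)
```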

Data cleaning is a vital step. This involves handling missing values, smoothing noisy data, and correcting errors. Advanced techniques like outlier detection and data imputation can help in this process. Data reconciliation, where data from different sources are compared and adjusted for consistency, is essential for ensuring data reliability.

Proper data management is also critical. A well-structured database system is necessary to store and access the data efficiently. Data provenance tracking helps trace data lineage, aiding in troubleshooting data discrepancies. Implementing robust quality control measures at each stage of the workflow is essential for building trustworthy predictive models.

Q 21. Describe your experience with automated well data analysis workflows.

I have extensive experience with automated well data analysis workflows, leveraging scripting languages like Python and specialized software packages. These workflows streamline data processing, analysis, and reporting, reducing manual effort and improving efficiency.

For example, I’ve developed automated workflows for decline curve analysis, which include data import, quality control checks, model fitting, and report generation. These scripts automate repetitive tasks, ensuring consistency and reducing human error. Similar workflows have been created for other tasks, such as well test analysis and reservoir simulation data processing. I frequently utilize cloud computing platforms for handling large datasets and running computationally intensive tasks.

These automated workflows also facilitate the integration of different data sources, such as production data, geological data, and engineering data, into a unified analysis platform. This facilitates a holistic view of the reservoir and well performance, allowing for more informed decision-making.

Q 22. How do you communicate complex well data analysis results to non-technical audiences?

Communicating complex well data analysis results to a non-technical audience requires translating technical jargon into plain language and using effective visualizations. Instead of focusing on intricate details, the key is to highlight the practical implications of the findings. For example, instead of saying “The hyperbolic decline fit for the target zone indicates a 15% effective decline rate,” I would say something like “Our analysis suggests that the rate of oil production is slowing down by about 15%, indicating we might need to consider enhanced oil recovery techniques.”

I often use analogies to make complex concepts relatable. Think of the reservoir as a sponge; permeability represents how easily oil flows through the sponge. Visual aids like charts, graphs, and even simplified diagrams are crucial for conveying key findings quickly. A simple bar chart showing production rates over time is far more effective than a complex geostatistical map. Finally, keeping the communication concise and focusing on the ‘so what?’ – the impact of the analysis on business decisions – is key to ensuring the message is understood and acted upon.

In a recent project, I presented findings on well performance to executive stakeholders who had limited technical backgrounds. Instead of diving into pressure-transient analysis details, I used a simple slide showing predicted production decline alongside potential mitigation strategies, making it easy for them to understand the urgency and the proposed solutions.

Q 23. Explain your understanding of the relationship between well data and reservoir simulation.

Well data is the lifeblood of reservoir simulation. Reservoir simulation models use well data to create a digital twin of the reservoir, predicting its behavior under various operating conditions. The relationship is two-way: well data is used to calibrate and validate the simulation model, and the simulation model provides insights that help interpret and optimize the well data itself.

For example, well test data (pressure, flow rate) is used to estimate reservoir properties like permeability and skin, while logs and core provide porosity. These properties are then input into the reservoir simulator to model fluid flow and predict future production. Conversely, the simulation model can help to identify potential production bottlenecks that might not be immediately apparent from the well data alone. It allows us to test different scenarios (like changes in well placement or completion strategies) before implementing them in the field, saving time and money.

Inconsistencies between well data and simulation results often highlight errors or missing information. For example, a discrepancy in predicted versus actual production could indicate a problem with the reservoir model, the well data itself, or perhaps an unforeseen geological event. Addressing these inconsistencies involves a rigorous iterative process of refining the model, validating well data quality, and exploring alternative explanations.

Q 24. How do you use well data to optimize drilling operations?

Well data plays a critical role in optimizing drilling operations. Real-time data from surface and downhole (measurement-while-drilling) sensors (e.g., rate of penetration, torque, weight on bit) provide valuable insights into the drilling process and help anticipate and mitigate potential problems.

For instance, by analyzing rate of penetration (ROP) data in conjunction with geological formation information, we can identify zones with higher or lower drilling difficulty. This allows for adjustments in drilling parameters (e.g., weight on bit, rotary speed) to optimize drilling efficiency and reduce non-productive time. Similarly, analyzing torque and drag data helps detect potential problems such as bit balling or stuck pipe, enabling proactive intervention and preventing costly delays.
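The text above does not name a specific efficiency metric. One widely used way to combine rate of penetration, weight on bit, torque, and rotary speed into a single number is Teale's mechanical specific energy (MSE), which is the energy spent per unit volume of rock drilled. This minimal sketch assumes oilfield units, and the readings are hypothetical. A rising MSE with unchanged parameters is often read as a loss of drilling efficiency, which can be a cue to adjust weight on bit or rotary speed.

```python
import math


def mechanical_specific_energy(wob_lbf, torque_ft_lbf, rpm, rop_ft_hr, bit_diameter_in):
    """Teale's mechanical specific energy (psi) in oilfield units.

    MSE = WOB / A + 120 * pi * N * T / (A * ROP), with A the bit area in in^2,
    WOB in lbf, N in rpm, T in ft-lbf and ROP in ft/hr.
    """
    bit_area_in2 = math.pi * bit_diameter_in ** 2 / 4.0
    axial_term = wob_lbf / bit_area_in2
    rotary_term = 120.0 * math.pi * rpm * torque_ft_lbf / (bit_area_in2 * rop_ft_hr)
    return axial_term + rotary_term


# Hypothetical readings at two depths with the same bit and drilling parameters:
# the same torque delivered at a lower ROP means more energy per unit of rock removed.
fast = mechanical_specific_energy(25_000, 8_000, 120, 60.0, 8.5)
slow = mechanical_specific_energy(25_000, 8_000, 120, 30.0, 8.5)
print(f"{fast:,.0f} psi vs {slow:,.0f} psi")
```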

Advanced techniques like machine learning can be used to predict drilling problems even before they occur. By analyzing historical well data, we can train models to identify patterns and anomalies associated with drilling complications. This predictive capability allows for preventative measures, enhancing safety and efficiency. For example, a model could predict a high risk of stuck pipe based on the observed changes in torque and vibrations, prompting a proactive change in drilling plan.
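A trained model is beyond the scope of a short example. However, the underlying idea of flagging departures from recent behavior can be sketched with a rolling z-score on torque. This is a simple statistical baseline, not the machine-learning approach described above. The window length, the threshold, and the torque series are hypothetical values that would need tuning on real data.

```python
import statistics


def torque_alerts(torque, window=20, z_threshold=3.0):
    """Return sample indices whose torque departs from the trailing-window
    mean by more than `z_threshold` population standard deviations."""
    alerts = []
    for i in range(window, len(torque)):
        baseline = torque[i - window:i]
        mean = statistics.fmean(baseline)
        stdev = statistics.pstdev(baseline)
        if stdev == 0:
            # Perfectly flat baseline: any change at all is treated as anomalous.
            if torque[i] != mean:
                alerts.append(i)
            continue
        if abs(torque[i] - mean) / stdev > z_threshold:
            alerts.append(i)
    return alerts


# Hypothetical surface torque (ft-lbf): small oscillation plus one abrupt jump.
torque = [8000.0 + (i % 5) * 10.0 for i in range(40)]
torque[30] = 9500.0
print(torque_alerts(torque))  # → [30]
```

A model trained on historical wells would replace the fixed threshold with learned patterns across several channels, such as torque, drag, and vibration.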

Q 25. Describe your experience with analyzing data from different well types (e.g., vertical, horizontal, multilateral).

My experience encompasses analyzing data from various well types, each presenting unique challenges and opportunities. Vertical wells are the most straightforward to interpret, while horizontal and multilateral wells introduce significant complexity due to their extended reach and multiple branches.

Vertical wells provide data along a single path, so each measurement maps to one depth interval. In horizontal wells, we must account for the variation in reservoir properties along the lateral length. This requires pressure transient models that honor the well geometry, because a horizontal well can pass through flow regimes (for example, early radial, linear, and pseudo-radial flow) that a vertical-well model does not represent. Multilateral wells add interactions between branches, which usually calls for detailed simulation models to understand fluid flow and production behavior.

For example, interpreting pressure data from a horizontal well involves considering the skin effect – the resistance to flow near the wellbore – which can be highly variable along the lateral length. In multilateral wells, data from different branches needs to be integrated to obtain a complete picture of reservoir performance. This usually involves using advanced techniques like reservoir simulation coupled with sophisticated data analysis to understand production patterns.

Q 26. How do you identify and address inconsistencies in well data?

Identifying and addressing inconsistencies in well data is crucial for accurate analysis and reliable decision-making. This process typically involves a combination of data quality checks, validation against other datasets, and careful examination of potential sources of error.

Data quality checks can involve identifying outliers, missing values, and inconsistent data units. Validation often means comparing the data with other sources such as geological models, production logs, or data from neighboring wells. Suspected inconsistencies require a thorough investigation to understand their origin. For instance, a sudden drop in pressure might be due to genuine reservoir depletion or a faulty pressure gauge. This investigation often involves scrutinizing the data acquisition process, comparing the data with other data streams, and applying quality control steps such as moving-average smoothing or spike-removal algorithms. For example, if pressure data shows a sharp spike, it's important to cross-reference it with other sensor data to determine whether it is an actual event or a data error.
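Spike detection and cross-referencing can be sketched with a Hampel filter, which compares each point with a rolling median and flags it if it lies far outside the median absolute deviation. This filter is one common robust alternative to moving averages. Here it is applied to two gauges that should read the same pressure. This minimal sketch assumes time-aligned series from a primary gauge and a hypothetical reference gauge. The window size, threshold, and `min_scale` floor are illustrative choices. A spike seen on both gauges is more likely a real event, while a spike seen on only one gauge points toward a sensor problem.

```python
import statistics


def hampel_outliers(values, half_window=3, n_sigmas=3.0, min_scale=1.0):
    """Indices whose value departs from the local median by more than
    n_sigmas robust standard deviations (Hampel filter).

    min_scale is a floor on the robust scale (same units as the data, e.g. the
    gauge resolution) so that a locally flat signal does not flag tiny wiggles.
    """
    outliers = []
    for i in range(len(values)):
        window = values[max(0, i - half_window): i + half_window + 1]
        med = statistics.median(window)
        mad = statistics.median([abs(v - med) for v in window])
        # 1.4826 scales the MAD to a standard-deviation estimate for Gaussian noise.
        scale = max(1.4826 * mad, min_scale)
        if abs(values[i] - med) > n_sigmas * scale:
            outliers.append(i)
    return outliers


def classify_spikes(primary, reference, **kwargs):
    """Split spikes in the primary gauge into those the reference gauge also
    shows (possible real events) and those it does not (possible sensor errors)."""
    p = set(hampel_outliers(primary, **kwargs))
    r = set(hampel_outliers(reference, **kwargs))
    return sorted(p & r), sorted(p - r)


# Hypothetical pressures (psi): only the primary gauge records the drop at index 5.
primary = [3000, 3001, 2999, 3000, 3002, 2500, 3001, 3000, 2999, 3001]
reference = [3000, 3000, 3001, 2999, 3001, 3000, 3000, 3001, 2999, 3000]
print(classify_spikes(primary, reference))  # → ([], [5])
```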

Addressing inconsistencies may require data correction, imputation (filling in missing values), or even excluding problematic data points. The chosen method depends on the nature and severity of the inconsistency, always with thorough documentation to maintain data integrity and transparency.
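Imputation with an audit trail might look like the following sketch. Short interior gaps are filled by linear interpolation, while longer gaps and gaps at either end are left missing. Every decision is recorded in a log. Linear interpolation and the two-sample limit are illustrative assumptions, and the readings are hypothetical. As the paragraph above notes, the right method depends on the nature of the gap.

```python
def fill_short_gaps(values, max_gap=2):
    """Linearly interpolate interior runs of None no longer than max_gap.

    Returns (filled, log): gaps at either end or longer than max_gap are left
    as None, and every decision is logged so the correction is documented.
    """
    filled = list(values)
    log = []
    n = len(filled)
    i = 0
    while i < n:
        if filled[i] is not None:
            i += 1
            continue
        start = i
        while i < n and filled[i] is None:
            i += 1
        end = i  # index of the first valid value after the gap (or n)
        gap = end - start
        if start == 0 or end == n or gap > max_gap:
            log.append(("left_missing", start, end - 1))
            continue
        left, right = filled[start - 1], filled[end]
        for j in range(start, end):
            value = left + (j - start + 1) / (gap + 1) * (right - left)
            filled[j] = value
            log.append(("interpolated", j, value))
    return filled, log


readings = [100.0, None, 104.0, None, None, None, 120.0, None]
filled, log = fill_short_gaps(readings)
print(filled)  # → [100.0, 102.0, 104.0, None, None, None, 120.0, None]
print(log)  # → [('interpolated', 1, 102.0), ('left_missing', 3, 5), ('left_missing', 7, 7)]
```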

Q 27. What are your strategies for managing large and complex well datasets?

Managing large and complex well datasets requires a structured approach, leveraging both technical skills and efficient workflows. This involves employing databases optimized for handling large datasets, implementing robust data management systems and utilizing advanced analytical tools. A critical part is data organization, using consistent naming conventions and clear metadata to ensure data discoverability and reusability.

I’ve had success employing relational (SQL) databases to store structured well data and NoSQL databases for unstructured data, such as scanned well log images. Data visualization tools are crucial for exploring and interpreting the data, helping identify patterns and anomalies. Advanced analytics techniques, like machine learning, can help extract meaningful insights from massive datasets too complex for manual analysis. Cloud-based solutions can provide scalability for storing and processing exceptionally large datasets. Automated data quality checks are essential, allowing efficient identification and resolution of anomalies at the source, rather than downstream.
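A minimal relational layout for structured well data might look like the following. It uses Python's built-in `sqlite3` module as a stand-in for a production SQL database. The table names, column names, well-naming pattern, and sample rows are hypothetical. Storing units explicitly turns an automated check like "same parameter recorded in different units" into a single query.

```python
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS wells (
    well_id   TEXT PRIMARY KEY,      -- hypothetical naming convention, e.g. 'FIELD-A-001'
    well_type TEXT NOT NULL CHECK (well_type IN ('vertical', 'horizontal', 'multilateral'))
);
CREATE TABLE IF NOT EXISTS measurements (
    well_id     TEXT NOT NULL REFERENCES wells(well_id),
    measured_at TEXT NOT NULL,       -- ISO 8601 timestamp
    parameter   TEXT NOT NULL,       -- e.g. 'bottomhole_pressure'
    value       REAL NOT NULL,
    unit        TEXT NOT NULL,       -- stored explicitly so unit mix-ups can be queried
    PRIMARY KEY (well_id, measured_at, parameter)
);
"""


def mixed_unit_parameters(conn):
    """Return parameters recorded with more than one unit (a simple automated QC check)."""
    rows = conn.execute(
        "SELECT parameter, COUNT(DISTINCT unit) FROM measurements "
        "GROUP BY parameter HAVING COUNT(DISTINCT unit) > 1 ORDER BY parameter"
    ).fetchall()
    return [parameter for parameter, _ in rows]


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
conn.execute("INSERT INTO wells VALUES (?, ?)", ("FIELD-A-001", "horizontal"))
conn.executemany("INSERT INTO measurements VALUES (?, ?, ?, ?, ?)", [
    ("FIELD-A-001", "2024-01-01T00:00:00", "bottomhole_pressure", 3000.0, "psi"),
    ("FIELD-A-001", "2024-01-02T00:00:00", "bottomhole_pressure", 20.5, "MPa"),
    ("FIELD-A-001", "2024-01-01T00:00:00", "oil_rate", 850.0, "STB/d"),
])
print(mixed_unit_parameters(conn))  # → ['bottomhole_pressure']
```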

For example, in a recent project involving hundreds of wells, we used a cloud-based data lake to store all the well data. We then implemented automated data quality checks and used machine learning algorithms to predict future production based on historical trends. This not only provided better insights into reservoir performance but also significantly reduced the time spent on data processing and analysis.
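A machine-learning forecast is usually judged against a classical baseline, such as Arps decline-curve analysis. The sketch below fits the simplest (exponential) Arps case, q(t) = qi * exp(-D * t), by linear least squares on ln(q). It is not the method used in the project described above, and the monthly rates are synthetic.

```python
import math


def fit_exponential_decline(t, q):
    """Fit q(t) = qi * exp(-D * t) by least squares on ln(q); return (qi, D)."""
    n = len(t)
    y = [math.log(rate) for rate in q]
    mean_t = sum(t) / n
    mean_y = sum(y) / n
    slope = (sum((ti - mean_t) * (yi - mean_y) for ti, yi in zip(t, y))
             / sum((ti - mean_t) ** 2 for ti in t))
    intercept = mean_y - slope * mean_t
    return math.exp(intercept), -slope


def forecast_rate(qi, d, t):
    """Exponential-decline rate at time t (same time unit as the fit)."""
    return qi * math.exp(-d * t)


# Synthetic history: 12 months at a nominal decline of 0.05 per month from 1,000 STB/d.
months = list(range(12))
rates = [1000.0 * math.exp(-0.05 * m) for m in months]
qi, d = fit_exponential_decline(months, rates)
print(round(qi, 3), round(d, 4))  # → 1000.0 0.05
print(round(forecast_rate(qi, d, 24), 1))  # → 301.2
```

Real production histories are noisy and often decline hyperbolically. If a complex model cannot beat this baseline, that result is worth investigating.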

Key Topics to Learn for Well Data Analysis Interview

- Formation Evaluation: Understanding porosity, permeability, and saturation, and how they control reservoir quality and hydrocarbon volumes. Practical application: Interpreting log data to estimate reservoir potential.

- Pressure Transient Analysis: Analyzing pressure buildup and drawdown tests to determine reservoir characteristics. Practical application: Estimating reservoir permeability and skin factor.

- Production Logging: Interpreting production logs to understand fluid flow dynamics in the wellbore. Practical application: Identifying flow restrictions and optimizing production strategies.

- Well Testing Interpretation: Analyzing various well test data (e.g., pressure buildup, drawdown, interference) to characterize reservoir properties. Practical application: Determining reservoir boundaries and connectivity.

- Data Visualization and Interpretation: Effectively presenting and interpreting well data using various software and techniques. Practical application: Creating insightful visualizations to communicate findings to stakeholders.

- Reservoir Simulation Fundamentals: Understanding the basic principles of reservoir simulation and its role in well data analysis. Practical application: Evaluating the impact of different production scenarios on reservoir performance.

- Petrophysics: Understanding the relationship between rock properties and fluid properties. Practical application: Integrating geological and engineering data for comprehensive reservoir characterization.
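For the formation evaluation and petrophysics topics, a common first exercise is to turn log readings into porosity and water saturation. The sketch below uses the density-porosity relation and Archie's equation. It assumes a clean (shale-free) formation, a quartz matrix density of 2.65 g/cc, a fresh-water fluid density of 1.0 g/cc, and a = 1, m = n = 2. These defaults and the sample log values are illustrative assumptions that would be replaced with calibrated values for a real reservoir.

```python
def density_porosity(rho_bulk, rho_matrix=2.65, rho_fluid=1.0):
    """Porosity from bulk density (g/cc): (rho_ma - rho_b) / (rho_ma - rho_f)."""
    return (rho_matrix - rho_bulk) / (rho_matrix - rho_fluid)


def archie_sw(rt, rw, phi, a=1.0, m=2.0, n=2.0):
    """Archie water saturation: Sw = (a * Rw / (phi**m * Rt)) ** (1 / n)."""
    return (a * rw / (phi ** m * rt)) ** (1.0 / n)


# Hypothetical log readings: bulk density 2.32 g/cc, deep resistivity 20 ohm-m,
# formation-water resistivity 0.05 ohm-m.
phi = density_porosity(2.32)
sw = archie_sw(rt=20.0, rw=0.05, phi=phi)
print(round(phi, 3), round(sw, 3))  # → 0.2 0.25
print(f"hydrocarbon saturation: {1 - sw:.2f}")  # → hydrocarbon saturation: 0.75
```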

Next Steps

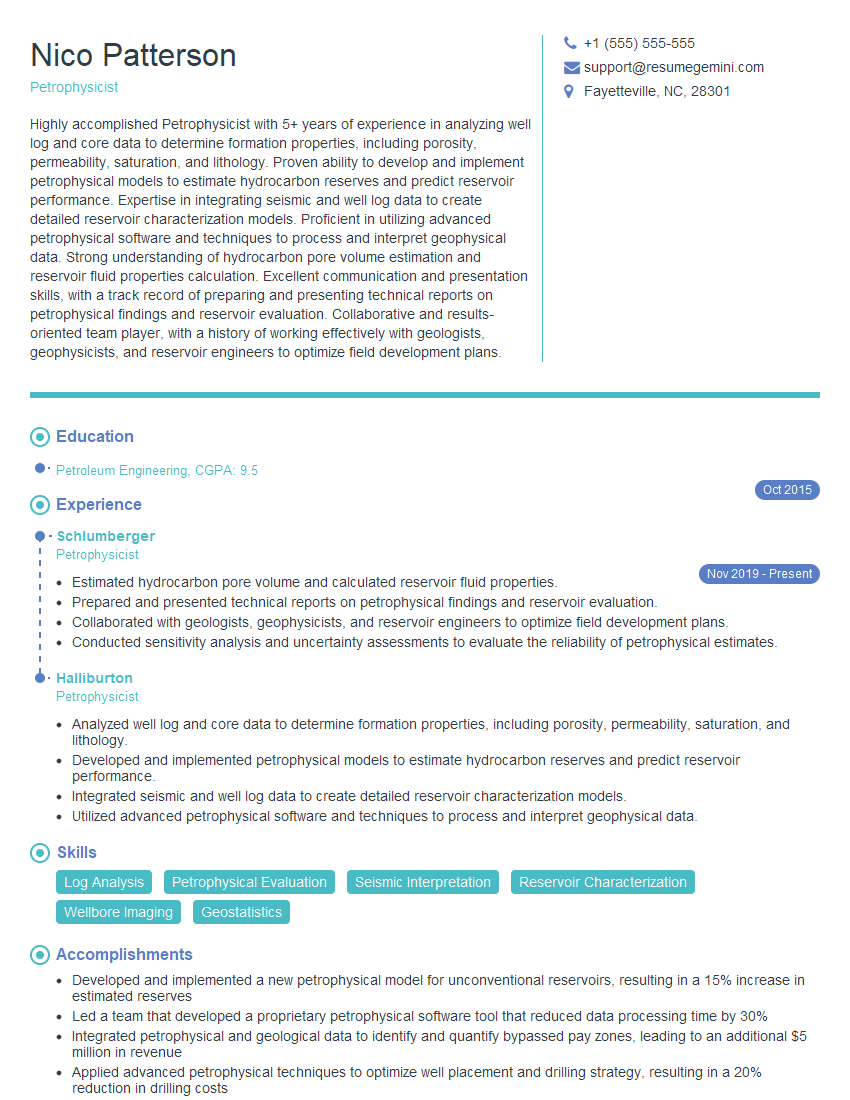

Mastering Well Data Analysis is crucial for advancing your career in the energy industry, opening doors to exciting opportunities and higher earning potential. A strong understanding of these concepts will significantly improve your interview performance and showcase your expertise. To maximize your job prospects, invest time in crafting a professional, ATS-friendly resume that highlights your skills and experience effectively. ResumeGemini is a trusted resource that can help you build a compelling resume tailored to the energy sector. We provide examples of resumes specifically designed for Well Data Analysis professionals to give you a head start. Take the next step towards your dream career today!
