Interviews are more than just a Q&A session—they’re a chance to prove your worth. This blog dives into essential Multi-Sensor Target Identification interview questions and expert tips to help you align your answers with what hiring managers are looking for. Start preparing to shine!

Questions Asked in Multi-Sensor Target Identification Interview

Q 1. Explain the concept of sensor fusion and its importance in target identification.

Sensor fusion is the process of integrating data from multiple sensors to achieve a more accurate, robust, and comprehensive understanding of a scene or target than could be achieved using any single sensor alone. Imagine trying to describe a person – you’d get a much better picture combining visual observation (shape, color of clothing), audio information (voice), and maybe even olfactory data (if they’re nearby!). Similarly, in target identification, fusing data from different sensors significantly improves our ability to classify and track targets.

Its importance stems from the complementary nature of different sensors. One sensor might be excellent at detecting a target but less precise in determining its type, while another sensor might be great at identifying the target type but struggle with detection in certain conditions. By combining their strengths, we minimize individual sensor limitations and gain a more reliable overall picture.

Q 2. Describe different sensor fusion architectures (e.g., centralized, decentralized).

Sensor fusion architectures can be broadly classified into centralized, decentralized, and hybrid approaches.

Centralized Architecture: In this architecture, all sensor data is transmitted to a central fusion node, where it’s processed and integrated. This approach provides a global view but can be susceptible to single points of failure and communication bottlenecks. Think of a military command center receiving information from various surveillance systems—radar, sonar, satellite imagery—all converging to a single analysis point.

Decentralized Architecture: Here, each sensor performs local processing and fusion before exchanging information with other nodes. This approach is more robust to failures, as individual node failures don’t necessarily compromise the entire system. For example, a network of autonomous vehicles each with multiple sensors (cameras, lidar, radar) could perform local fusion to make individual driving decisions while sharing some data for improved situational awareness.

Hybrid Architecture: This approach combines elements of both centralized and decentralized architectures, balancing robustness and global awareness. Certain critical aspects might be centrally fused, while others are fused locally, providing a flexible and scalable solution.

Q 3. What are some common sensor modalities used in target identification?

Common sensor modalities used in target identification include:

Radar: Provides information about range, velocity, and angle, often used to detect and track moving targets.

Infrared (IR): Detects heat signatures, useful for identifying targets based on their thermal properties, particularly useful at night or in obscured conditions.

Electro-Optical (EO): Uses visible and near-infrared light, enabling visual identification of targets. This includes cameras and imaging systems.

Acoustic: Utilizes sound waves, valuable for underwater target identification (sonar) or detecting specific sounds associated with specific targets.

LiDAR: Uses pulsed laser light to measure distances, creating 3D point clouds that can help identify targets based on their shape and geometry. It’s particularly useful for autonomous driving and robotics.

GPS: While not directly identifying targets, GPS provides critical location information, crucial for tracking target movement and establishing contextual information.

Q 4. Compare and contrast different data association techniques.

Data association techniques link measurements from different sensors to the same target over time. Several methods exist, each with strengths and weaknesses:

Nearest Neighbor: This simple method assigns each measurement to the closest predicted target state. It’s computationally efficient but vulnerable to noise and misassociations.

Probabilistic Data Association (PDA): This approach considers all possible associations, weighting them according to their probabilities. It is more robust to noise than nearest neighbor but computationally more intensive.

Joint Probabilistic Data Association (JPDA): Extends PDA to handle multiple targets and their associated measurements simultaneously, accounting for potential cross-correlations.

Multiple Hypothesis Tracking (MHT): Considers all possible association hypotheses, maintaining multiple tracks concurrently until the most likely hypothesis can be determined. This method is very robust but computationally expensive.

The choice of technique depends on the specific application, the number of targets, the noise level, and the available computational resources. For example, nearest neighbor might suffice for a simple tracking scenario with few targets and low noise, while MHT would be preferred for complex scenarios with multiple targets and significant measurement uncertainty.
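To make the simplest of these methods concrete, here is a minimal sketch of nearest-neighbor association with a distance gate. The function name, the 2D positions, and the gate value are illustrative assumptions rather than part of any particular tracking library, and a production tracker would typically gate on a statistical distance instead of plain Euclidean distance.

```python
import math

def nearest_neighbor_associate(predicted, measurements, gate):
    """Greedy nearest-neighbor association in 2D (illustrative sketch).

    predicted:    dict track_id -> (x, y) predicted position
    measurements: list of (x, y) measurements
    gate:         maximum distance for a valid pairing (assumed value)
    Returns dict track_id -> measurement index, or None if nothing is in the gate.
    """
    # Collect every track/measurement pair that falls inside the gate.
    pairs = []
    for tid, (px, py) in predicted.items():
        for j, (mx, my) in enumerate(measurements):
            d = math.hypot(mx - px, my - py)
            if d <= gate:
                pairs.append((d, tid, j))
    pairs.sort()  # closest pairs are committed first

    assignment = {tid: None for tid in predicted}
    used = set()
    for d, tid, j in pairs:
        # Each track gets at most one measurement and vice versa.
        if assignment[tid] is None and j not in used:
            assignment[tid] = j
            used.add(j)
    return assignment

tracks = {"T1": (0.0, 0.0), "T2": (10.0, 0.0)}
meas = [(9.5, 0.2), (0.3, -0.1), (50.0, 50.0)]  # last one is clutter
result = nearest_neighbor_associate(tracks, meas, gate=2.0)
print(result)  # {'T1': 1, 'T2': 0}
```

The clutter measurement far from both tracks is simply left unassigned. PDA, JPDA, and MHT replace this hard, greedy decision with weighted or deferred decisions, which is where their extra robustness and cost come from.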

Q 5. Explain Kalman filtering and its application in sensor fusion.

The Kalman filter is a powerful recursive algorithm used to estimate the state of a dynamic system from a series of noisy measurements. In sensor fusion, it helps to combine measurements from different sensors, taking into account the noise characteristics of each sensor and the dynamics of the target being tracked.

It works by predicting the target’s state at the next time step based on a motion model and then updating this prediction using the incoming sensor measurements. This process is iteratively repeated, refining the state estimate over time. The Kalman filter uses a covariance matrix to represent the uncertainty in the state estimate, which is updated with each measurement. This allows for incorporating the reliability (or unreliability) of each sensor.

For example, if a radar provides a noisy velocity estimate and a camera provides a less noisy position estimate, the Kalman filter weights each input according to its noise covariance, trusting the less noisy measurement more, to produce a more accurate and consistent combined estimate of target position and velocity.
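The predict-and-update cycle can be sketched in a few lines. This is a minimal illustration, assuming a constant-velocity target in one dimension, a camera that measures position directly, and a radar that measures velocity directly. The noise values, the simplified diagonal process noise `q`, and the function names are all assumptions chosen for the example.

```python
import random

def predict(x, P, dt, q):
    """Propagate state [position, velocity] and 2x2 covariance P by dt."""
    p, v = x
    (a, b), (c, d) = P
    x_new = [p + dt * v, v]
    # F P F^T for F = [[1, dt], [0, 1]], plus q on the diagonal (simplified Q).
    P_new = [
        [a + dt * (b + c) + dt * dt * d + q, b + dt * d],
        [c + dt * d, d + q],
    ]
    return x_new, P_new

def update(x, P, z, idx, r):
    """Scalar update for a sensor that observes state component idx directly."""
    s = P[idx][idx] + r                    # innovation variance
    k = [P[0][idx] / s, P[1][idx] / s]     # Kalman gain
    y = z - x[idx]                         # innovation
    x_new = [x[0] + k[0] * y, x[1] + k[1] * y]
    row = P[idx]
    P_new = [[P[i][j] - k[i] * row[j] for j in range(2)] for i in range(2)]
    return x_new, P_new

rng = random.Random(1)                     # fixed seed for a repeatable run
x, P = [0.0, 0.0], [[100.0, 0.0], [0.0, 100.0]]   # vague initial guess
true_p, true_v, dt = 0.0, 2.0, 1.0
for _ in range(20):
    true_p += true_v * dt
    x, P = predict(x, P, dt, q=0.01)
    # Camera: position with std 0.5 (variance 0.25).
    x, P = update(x, P, true_p + rng.gauss(0.0, 0.5), idx=0, r=0.25)
    # Radar: velocity with std 1.0 (variance 1.0).
    x, P = update(x, P, true_v + rng.gauss(0.0, 1.0), idx=1, r=1.0)
print([round(val, 2) for val in x], round(P[0][0], 3), round(P[1][1], 3))
```

The measurement variance `r` is what tells the filter how much to trust each sensor: a smaller `r` produces a larger gain, so that sensor pulls the estimate harder.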

Q 6. How do you handle noisy sensor data in target identification?

Handling noisy sensor data is critical in target identification. Strategies include:

Filtering Techniques: Kalman filtering (as discussed above), median filtering, and other smoothing techniques can reduce the impact of random noise.

Outlier Rejection: Identifying and removing or down-weighting measurements that deviate significantly from expected values. Statistical methods such as the Z-score can be used to identify outliers.

Sensor Data Preprocessing: Applying techniques to clean up the data before fusion, such as noise reduction algorithms or calibration steps.

Robust Estimation Methods: Employing algorithms less sensitive to outliers, like robust versions of the Kalman filter.

Redundancy: Using multiple sensors of the same type to provide redundant measurements, and then averaging or using voting strategies to get a more robust measurement.

The specific approach depends on the nature of the noise (e.g., Gaussian, impulsive) and the computational constraints. It’s often an iterative process of experimenting with different approaches to achieve optimal results.
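As a small illustration of the filtering and outlier-rejection ideas above, the sketch below applies a sliding median filter and a median-based outlier test to a short series of range readings. Note that it uses the modified Z-score (based on the median and the median absolute deviation) rather than the plain Z-score, because in short windows a single large outlier inflates the standard deviation and can hide itself. The 3.5 threshold is a commonly quoted rule of thumb, and the data values are made up.

```python
import statistics

def median_filter(values, window=3):
    """Sliding median; the window shrinks at the edges."""
    half = window // 2
    out = []
    for i in range(len(values)):
        lo = max(0, i - half)
        hi = min(len(values), i + half + 1)
        out.append(statistics.median(values[lo:hi]))
    return out

def modified_z_outliers(values, threshold=3.5):
    """Indices whose modified Z-score exceeds the threshold."""
    med = statistics.median(values)
    mad = statistics.median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread to measure against; flag nothing
    return [i for i, v in enumerate(values)
            if 0.6745 * abs(v - med) / mad > threshold]

ranges_m = [10.1, 9.9, 10.0, 10.2, 9.8, 25.0, 10.1, 10.0]  # one impulsive spike
outliers = modified_z_outliers(ranges_m)
smoothed = median_filter(ranges_m)
print(outliers)      # [5]
print(smoothed[5])   # 10.1
```

The median filter suits impulsive noise like this spike, while Gaussian noise is usually better served by averaging or a Kalman filter.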

Q 7. Describe your experience with different sensor calibration techniques.

Sensor calibration is crucial for accurate target identification, as uncalibrated sensors can introduce significant errors. My experience encompasses various calibration techniques, including:

Internal Calibration: This involves using internal sensor parameters and self-diagnostic tools to correct for known systematic errors within the sensor itself. This is often done during sensor manufacturing or initial setup.

External Calibration: This involves using external reference points or targets to determine the sensor’s pose (position and orientation) and other parameters. Techniques such as camera calibration using a checkerboard pattern, or radar calibration using known targets are typical examples.

Multi-Sensor Calibration: This entails calibrating the relationship between multiple sensors, accounting for time delays, positional offsets, and other inter-sensor discrepancies. This could involve simultaneous measurements of a common target by multiple sensors to determine alignment and timing parameters.

In practice, we often use a combination of these techniques. For example, we might start with internal calibration, then refine the calibration using external calibration methods, and finally perform multi-sensor calibration to ensure all the sensors work in concert accurately. Calibration procedures are often iterative, requiring multiple calibration runs and error analysis to achieve the desired accuracy.

Q 8. What are some common challenges in multi-sensor data registration?

Multi-sensor data registration, the process of aligning data from different sensors with varying coordinate systems and timestamps, presents several significant challenges. These challenges stem from the inherent differences in sensor characteristics and the environment.

- Geometric Misalignment: Each sensor has its unique position and orientation, leading to discrepancies in spatial coordinates. Imagine trying to overlay a photograph taken from a drone with a radar scan – the perspectives are inherently different.

- Temporal Misalignment: Sensors might not record data simultaneously. A slight delay between a camera capturing an image and a lidar system scanning the same scene can cause registration errors. This is particularly crucial in dynamic environments where targets are moving.

- Sensor Noise and Uncertainty: Inherent noise in sensor data adds uncertainty to the registration process. This is amplified when dealing with low-quality sensors or challenging environments with obstructions.

- Scale Differences: The scale of measurements might vary across sensors. For example, a high-resolution camera provides detailed imagery, while a low-resolution thermal camera might show a more coarse representation of the same target.

- Data Format Incompatibilities: Different sensors often produce data in different formats, requiring complex transformations and pre-processing steps before registration can occur. This adds to the complexity and potential for errors.

Addressing these challenges typically involves using sophisticated algorithms like Iterative Closest Point (ICP) or techniques based on feature matching and transformation matrices. Robust solutions often rely on a combination of techniques, careful sensor calibration, and appropriate data pre-processing.
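The geometric part of registration can be illustrated with a least-squares rigid alignment in 2D. This sketch assumes the point correspondences between the two sensors are already known. ICP does not have that luxury and alternates a step like this one with a nearest-neighbor search for correspondences. The function names and the sample points are illustrative assumptions.

```python
import math

def fit_rigid_2d(source, target):
    """Least-squares rotation angle and translation mapping source onto target.

    Assumes source[i] and target[i] are the same physical point seen by two sensors.
    """
    n = len(source)
    sx = sum(p[0] for p in source) / n
    sy = sum(p[1] for p in source) / n
    tx = sum(p[0] for p in target) / n
    ty = sum(p[1] for p in target) / n

    dot = cross = 0.0
    for (ax, ay), (bx, by) in zip(source, target):
        # Work with centroid-relative coordinates so translation drops out.
        ax, ay, bx, by = ax - sx, ay - sy, bx - tx, by - ty
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)

    c, s = math.cos(theta), math.sin(theta)
    # Translation that maps the rotated source centroid onto the target centroid.
    offset = (tx - (c * sx - s * sy), ty - (s * sx + c * sy))
    return theta, offset

def apply_rigid_2d(points, theta, offset):
    """Rotate each point by theta, then translate by offset."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + offset[0], s * x + c * y + offset[1]) for x, y in points]

lidar_pts = [(0.0, 0.0), (4.0, 0.0), (4.0, 3.0), (1.0, 5.0)]
# Pretend the radar frame is rotated 30 degrees and shifted by (2, -1).
radar_pts = apply_rigid_2d(lidar_pts, math.radians(30.0), (2.0, -1.0))
theta, offset = fit_rigid_2d(lidar_pts, radar_pts)
print(round(math.degrees(theta), 6), [round(v, 6) for v in offset])
```

With noisy points the recovered transform is the best fit in the least-squares sense, not an exact match, which is why calibration quality and outlier handling matter so much in practice.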

Q 9. How do you evaluate the performance of a multi-sensor target identification system?

Evaluating a multi-sensor target identification system requires a comprehensive approach encompassing various aspects of performance. We typically assess the system using a combination of metrics and real-world tests.

- Accuracy: How often does the system correctly identify the target? This is the fraction of all identification decisions that are correct, and it is usually reported alongside precision and recall.

- Precision: What proportion of the system’s positive identifications are actually correct?

- Recall: What proportion of the actual targets did the system successfully identify?

- Robustness: How well does the system perform under varying conditions (e.g., different weather, target orientations, levels of clutter)? This often involves testing with a wide variety of data and scenarios.

- Computational Efficiency: How quickly can the system process data and deliver results? This is crucial for real-time applications.

- False Alarm Rate: How often does the system incorrectly identify a non-target as a target?

- Real-world testing: The ultimate test is deploying the system in a controlled real-world scenario to observe its performance in realistic conditions.

A good evaluation plan incorporates controlled experiments, simulated environments, and real-world testing. The specific metrics and evaluation strategies depend on the application and the requirements of the system.

Q 10. What metrics do you use to assess the accuracy and reliability of target identification?

Assessing the accuracy and reliability of target identification involves a set of key metrics, often presented visually as confusion matrices or ROC curves. These metrics provide a comprehensive picture of the system’s performance.

- Accuracy: The overall correctness of the identification (number of correct identifications / total number of identifications).

- Precision: Out of all the instances identified as a specific target, what proportion were actually that target?

- Recall (Sensitivity): Out of all the actual instances of a specific target, what proportion did the system correctly identify?

- F1-Score: The harmonic mean of precision and recall, providing a balanced measure of performance.

- False Positive Rate (FPR): The rate at which the system incorrectly identifies a non-target as a target (Type I error).

- False Negative Rate (FNR): The rate at which the system fails to identify an actual target (Type II error).

- AUC (Area Under the ROC Curve): A measure of the system’s ability to distinguish between targets and non-targets across different thresholds. A higher AUC indicates better performance.

For instance, in a scenario identifying vehicles in satellite imagery, a high precision would ensure fewer false positives (incorrectly labeling non-vehicles as vehicles). A high recall would ensure that the system identifies most of the actual vehicles present in the imagery.
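These metrics all follow from the four counts in a binary confusion matrix. The sketch below computes them for a hypothetical vehicle detector; the counts and the function name are made up for illustration.

```python
def identification_metrics(tp, fp, fn, tn):
    """Common metrics from binary confusion-matrix counts."""
    def ratio(num, den):
        return num / den if den else 0.0  # avoid division by zero

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)
    return {
        "accuracy": ratio(tp + tn, tp + fp + fn + tn),
        "precision": precision,
        "recall": recall,
        "f1": ratio(2 * precision * recall, precision + recall),
        "fpr": ratio(fp, fp + tn),   # false positive rate
        "fnr": ratio(fn, fn + tp),   # false negative rate
    }

# Hypothetical result: 100 real vehicles, 900 non-vehicle regions.
m = identification_metrics(tp=80, fp=10, fn=20, tn=890)
for name, value in m.items():
    print(f"{name}: {value:.3f}")
```

With these counts, accuracy is 0.97 even though one in five vehicles is missed, which is a good reminder of why accuracy alone can mislead when targets are rare.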

Q 11. Explain your understanding of Bayesian inference and its use in sensor fusion.

Bayesian inference provides a powerful framework for sensor fusion, allowing us to combine information from multiple sensors to arrive at a more accurate and robust estimate of the target’s characteristics. It’s based on Bayes’ theorem, which updates our belief about a hypothesis (e.g., target location or identity) given new evidence (sensor data).

Bayes’ Theorem is expressed as:

P(A|B) = [P(B|A) * P(A)] / P(B)

Where:

- P(A|B) is the posterior probability of hypothesis A given evidence B.

- P(B|A) is the likelihood of observing evidence B given hypothesis A.

- P(A) is the prior probability of hypothesis A.

- P(B) is the marginal probability of evidence B, which acts as a normalization factor.

In sensor fusion, we might have prior knowledge about the target’s likely location or type. As we receive data from different sensors, we update our belief using Bayesian inference, incorporating the likelihood of the data given different hypotheses. This allows for a more nuanced and informed estimate of the target’s characteristics, combining the strengths of individual sensors and mitigating the weaknesses.

For example, a radar might provide an estimate of a vehicle’s speed, while a camera could provide an estimate of its color and type. Bayesian inference can combine this information to generate a more accurate and reliable identification.
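A worked example makes the update concrete. The sketch below fuses a radar speed reading and a camera classification into a posterior over three target classes. It assumes the two sensors are conditionally independent given the class, and every probability in it is invented for illustration.

```python
def bayes_update(prior, *likelihoods):
    """Posterior over classes, assuming conditionally independent sensors.

    prior:       dict class -> P(class)
    likelihoods: one dict per sensor, class -> P(observation | class)
    """
    unnormalized = {}
    for cls, p in prior.items():
        for lk in likelihoods:
            p *= lk[cls]
        unnormalized[cls] = p
    evidence = sum(unnormalized.values())  # P(B), the normalization factor
    return {cls: p / evidence for cls, p in unnormalized.items()}

prior = {"car": 0.5, "truck": 0.3, "bike": 0.2}
radar_speed = {"car": 0.6, "truck": 0.3, "bike": 0.1}    # P(observed speed | class)
camera_shape = {"car": 0.2, "truck": 0.7, "bike": 0.1}   # P(observed shape | class)

posterior = bayes_update(prior, radar_speed, camera_shape)
print({cls: round(p, 3) for cls, p in posterior.items()})
# {'car': 0.48, 'truck': 0.504, 'bike': 0.016}
```

Neither sensor alone settles the question here: the prior and the radar favor "car", the camera favors "truck", and the posterior reflects how close the call actually is.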

Q 12. Describe different target tracking algorithms and their strengths/weaknesses.

Many target tracking algorithms are used in multi-sensor systems, each with its strengths and weaknesses. The choice often depends on the specific application and the characteristics of the sensors and environment.

- Kalman Filter: An estimator that is optimal (in the minimum mean-squared-error sense) for linear systems with Gaussian noise. It predicts the target’s state (position, velocity, etc.) from a linear dynamic model and corrects it with noisy measurements. It’s efficient but assumes linearity, which may not always hold.

- Extended Kalman Filter (EKF): An extension of the Kalman filter that handles non-linearity using linearization techniques. It’s more versatile than the Kalman filter but can struggle with highly non-linear systems.

- Unscented Kalman Filter (UKF): Another approach for non-linear systems that uses a deterministic sampling technique to approximate the probability distribution. Often more accurate than the EKF, but can be more computationally expensive.

- Particle Filter: A non-parametric filter that represents the probability distribution with a set of particles. It’s highly flexible and can handle complex non-linear and non-Gaussian systems, but it is computationally demanding.

- Nearest Neighbor Algorithm: This is a simpler method that assigns a new measurement to the closest existing track. It’s easy to implement but can be sensitive to noise and clutter.

Choosing the right algorithm involves considering the computational constraints, the non-linearity of the target dynamics, and the presence of noise and clutter. For example, in a high-speed application where real-time processing is critical, a Kalman filter might be preferred for its efficiency, while in applications with high uncertainty and non-linear dynamics, a particle filter might be more suitable.

Q 13. How do you handle occlusion and clutter in target identification?

Occlusion and clutter are significant challenges in target identification. Occlusion refers to the partial or complete blocking of a target from a sensor’s view, while clutter refers to unwanted objects or signals that interfere with target detection. Effective strategies for handling these challenges are essential for robust system performance.

- Data Fusion: Combining data from multiple sensors can help overcome occlusion. If one sensor’s view is blocked, another sensor might still have a clear view of the target. This is a primary advantage of multi-sensor systems.

- Trajectory Prediction: If a target is temporarily occluded, predicting its trajectory based on past observations can help maintain track continuity.

- Clutter Rejection Techniques: Using filters and algorithms that suppress noise and irrelevant information can improve the reliability of target identification. These techniques can include statistical filters (e.g., Kalman filter) and morphological image processing techniques.

- Robust Feature Extraction: Choosing features that are less susceptible to occlusion and clutter, such as robust shape descriptors or deep learning features, can improve overall performance.

- Probabilistic Models: Incorporating probabilistic models (e.g., Bayesian networks) to represent uncertainty in the presence of occlusion and clutter can provide a more robust estimation of the target’s state.

For instance, in an autonomous driving system, if a pedestrian is partially occluded by a car, radar data might still detect the pedestrian’s presence, allowing the system to maintain awareness and take appropriate action.

Q 14. Discuss your experience with different feature extraction techniques for sensor data.

Feature extraction is crucial for effective multi-sensor target identification, transforming raw sensor data into informative features suitable for classification and tracking. Different sensors require different feature extraction techniques.

- Image-based sensors (Cameras): Features like SIFT (Scale-Invariant Feature Transform), SURF (Speeded-Up Robust Features), HOG (Histogram of Oriented Gradients), and deep learning-based features (CNNs) are commonly used to extract shape, texture, and color information.

- Radar: Features based on range, Doppler velocity, and signal strength are commonly used. Techniques like micro-Doppler analysis can extract fine-grained details about the target’s motion and structure.

- Lidar: Features are often derived from the point cloud data, including range, intensity, and geometric features like surface normals and curvature. Techniques like point cloud registration and segmentation are crucial preprocessing steps.

- Infrared (IR): Features based on thermal signatures, temperature gradients, and intensity patterns are employed. These features are valuable in low-light or adverse weather conditions.

The choice of feature extraction technique depends on the specific sensor, the target characteristics, and the application’s requirements. For instance, in identifying aircraft, radar Doppler features would be useful for detecting their velocity, while visual features from cameras could help identify their type.

In recent years, deep learning techniques have revolutionized feature extraction, offering automated feature learning capabilities. However, careful consideration of training data quality and model generalization remains important.

Q 15. Explain your familiarity with various machine learning algorithms used in target identification.

My experience encompasses a wide range of machine learning algorithms crucial for target identification. These algorithms are selected based on the nature of the data and the specific identification task. For instance, Support Vector Machines (SVMs) are excellent for high-dimensional data and are effective in classifying targets based on features extracted from sensor data. I’ve successfully used SVMs in differentiating between different types of aircraft based on radar cross-section signatures. Similarly, Deep Learning, particularly Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs), are powerful tools for analyzing complex sensor data streams. CNNs are particularly useful for image processing from cameras or SAR imagery, allowing for precise object detection and classification. I have leveraged CNNs to identify ground vehicles from aerial imagery with very high accuracy. For time-series data from sensors like acoustic sensors or lidar, RNNs are very effective at capturing temporal dependencies to identify targets based on their movement patterns. Finally, Random Forests provide a robust and interpretable approach, helpful when understanding the feature importance in target identification is critical. I’ve used this method in the past for analyzing sensor data from multiple heterogeneous sources.

Q 16. How do you handle uncertainty and ambiguity in sensor data?

Uncertainty and ambiguity are inherent in multi-sensor data. To address this, I employ a multi-pronged approach.

First, I use robust statistical methods. For example, I incorporate Bayesian inference techniques to model the uncertainty associated with sensor measurements. This lets us express our belief about the target identity as a probability distribution, rather than a single, potentially inaccurate, classification.

Second, data fusion plays a crucial role. Combining data from different sensors helps to compensate for the limitations of individual sensors and resolve ambiguities. If one sensor is unreliable in a specific condition, another might provide complementary information. For example, combining infrared and radar data helps overcome challenges posed by weather conditions.

Third, ensemble methods, such as combining multiple classifiers, are very effective. Different classifiers might specialize in different aspects of the data or handle uncertainty in different ways. Combining their predictions through techniques like weighted averaging or voting improves overall robustness and accuracy.

Finally, I’ve found that incorporating domain knowledge into the model through expert rules or constraints is crucial for resolving ambiguity when dealing with uncertain data.

Q 17. Describe your experience with real-time sensor data processing.

Real-time sensor data processing demands efficient algorithms and optimized system architectures. My experience includes developing and deploying systems capable of processing high-volume data streams from multiple sensors with minimal latency. This involves employing techniques such as parallelization and distributed computing to handle the computational burden. I have worked extensively with streaming data platforms like Apache Kafka and Spark Streaming to manage large volumes of sensor data effectively. Furthermore, we prioritize the use of lightweight and computationally efficient algorithms, such as optimized versions of machine learning models or custom-designed algorithms for specific tasks. We employ techniques like data reduction and feature selection to minimize processing requirements without sacrificing accuracy. For example, in a system I developed for real-time threat detection, we used a pipeline that pre-processed the raw sensor data, performed feature extraction, and then fed the features into a lightweight classifier. The entire pipeline was optimized to achieve a processing time well under the real-time constraint.

Q 18. How do you optimize the performance of a multi-sensor system?

Optimizing a multi-sensor system involves a holistic approach. It begins with careful sensor selection; choosing sensors with complementary capabilities and appropriate coverage. Next, data fusion algorithms need to be carefully chosen and tuned based on the sensor characteristics and the specific target identification problem. Then, feature engineering plays a critical role. The effectiveness of any machine learning algorithm depends heavily on the features used. Careful selection and extraction of relevant features from the sensor data is essential for optimal performance. Furthermore, we use model selection and hyperparameter tuning techniques, often involving cross-validation, to find the best model and parameters for the specific data and application. Finally, continuous monitoring and evaluation of the system’s performance is essential, allowing us to identify areas for improvement and adapt to changing conditions. For example, I might evaluate various feature extraction methods to determine which leads to the highest accuracy in target classification.

Q 19. Explain your understanding of different sensor error models.

Understanding sensor error models is critical for accurate target identification. Different sensors exhibit different types of errors. Systematic errors are consistent biases, such as calibration errors in a radar system. These errors are often addressed through calibration procedures or modeling. Random errors are unpredictable fluctuations due to noise. These can be mitigated through signal processing techniques like filtering and averaging. Specific sensor error models include Gaussian noise for many sensors, but also more complex models for specific situations, such as multiplicative noise in imaging sensors. For example, we might model the error in an infrared sensor as a combination of Gaussian noise and a systematic offset due to temperature variations. Understanding these error models allows us to incorporate them into our data fusion algorithms or to develop more robust classifiers capable of handling noisy and unreliable data. We also need to consider the impact of environmental factors on sensor accuracy, such as temperature, humidity, or atmospheric conditions. We then apply appropriate corrections or compensate for these factors during data processing.
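The combined model mentioned above, a systematic offset plus Gaussian noise, is easy to simulate. The sketch below generates readings from such a model and then recovers the offset by averaging against a known reference value. The bias, noise level, reference temperature, and function name are assumptions made for the example.

```python
import random
import statistics

def simulate_sensor(true_value, bias, sigma, n, rng):
    """Readings = truth + constant bias (systematic) + Gaussian noise (random)."""
    return [true_value + bias + rng.gauss(0.0, sigma) for _ in range(n)]

rng = random.Random(42)      # fixed seed so the run is repeatable
true_temp = 300.0            # known reference value, e.g. a calibration source
readings = simulate_sensor(true_temp, bias=1.5, sigma=0.5, n=2000, rng=rng)

# Averaging suppresses the random part; what remains estimates the bias.
est_bias = statistics.mean(readings) - true_temp
# The spread of the readings estimates the random-noise standard deviation.
est_sigma = statistics.stdev(readings)
# Subtracting the estimated bias is the calibration correction.
corrected = [r - est_bias for r in readings]
print(round(est_bias, 2), round(est_sigma, 2))
```

Averaging more samples shrinks the random error but does nothing to the bias, which is why systematic errors need calibration rather than filtering.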

Q 20. Discuss your experience with different data pre-processing techniques.

Data pre-processing is a crucial step in improving the accuracy and efficiency of target identification. Common techniques include noise reduction (using filters like Kalman filters or median filters), data normalization (scaling data to a common range), feature scaling (standardization or min-max scaling), and dimensionality reduction (using Principal Component Analysis, or t-SNE when the goal is visualization). I have also extensively used outlier detection techniques to remove erroneous or corrupted data points, which can significantly affect the performance of algorithms. For example, in one project involving sonar data, we used a combination of wavelet denoising and median filtering to effectively reduce noise while preserving important features. Another important aspect is data cleaning, which involves handling missing values or inconsistent data entries. Techniques like imputation (filling in missing values based on statistical models) or removal of inconsistent entries are used depending on the data characteristics. The choice of pre-processing methods depends heavily on the characteristics of the data and the algorithms used for target identification.
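The two feature-scaling options named above differ only in what they anchor to. Here is a minimal sketch of both, with hypothetical function names and made-up values.

```python
import statistics

def min_max_scale(values):
    """Rescale to [0, 1] using the observed minimum and maximum."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # constant feature: nothing to scale
    return [(v - lo) / (hi - lo) for v in values]

def standardize(values):
    """Rescale to zero mean and unit (population) standard deviation."""
    mean = statistics.mean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return [0.0 for _ in values]
    return [(v - mean) / std for v in values]

ranges_m = [2.0, 4.0, 6.0, 8.0]
scaled = min_max_scale(ranges_m)
standardized = standardize(ranges_m)
print([round(v, 3) for v in scaled])
print([round(v, 3) for v in standardized])
```

Min-max scaling is sensitive to a single extreme value because it defines the range, so it is usually applied after outlier handling. In a real pipeline the scaling parameters would be computed on training data and reused unchanged on new data.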

Q 21. How do you ensure the integrity and security of sensor data?

Ensuring the integrity and security of sensor data is paramount. This starts with implementing robust data validation procedures at the sensor level to detect anomalies and errors. We also use cryptographic techniques to protect data during transmission and storage. This might involve using encryption algorithms to safeguard sensitive data from unauthorized access. Additionally, access control mechanisms ensure that only authorized personnel can access the sensor data. Data integrity is further ensured through techniques like hashing and digital signatures, which provide a way to detect any tampering or unauthorized modifications to the data. Regular auditing and logging of data access and modifications maintain a trail of data usage, facilitating security and compliance checks. Finally, implementing strong intrusion detection systems can help detect and respond to potential security breaches. In practical scenarios, I utilize a layered security approach, combining multiple security measures to protect the data throughout its lifecycle.
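As one concrete example of tamper detection, the sketch below attaches a keyed hash (HMAC-SHA256) to each sensor reading using Python's standard library. An HMAC is a shared-key message authentication code, not a digital signature, so it fits the case where sender and receiver can share a secret. The key, field names, and helper functions are illustrative assumptions, and real key management is out of scope here.

```python
import hashlib
import hmac
import json

def sign_reading(key: bytes, reading: dict) -> str:
    """Return a hex HMAC-SHA256 tag over a canonical JSON form of the reading."""
    payload = json.dumps(reading, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()

def verify_reading(key: bytes, reading: dict, tag: str) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = sign_reading(key, reading)
    return hmac.compare_digest(expected, tag)

key = b"demo-key-not-for-production"   # placeholder; never hard-code real keys
reading = {"sensor_id": "radar-01", "t": 1700000000.0, "range_m": 1523.4}
tag = sign_reading(key, reading)

tampered = dict(reading, range_m=15.0)  # an attacker alters the range
print(verify_reading(key, reading, tag), verify_reading(key, tampered, tag))
# prints: True False
```

Sorting the JSON keys gives both sides the same byte sequence to hash, which is the detail that most often breaks verification in practice.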

Q 22. Explain your experience with software tools and programming languages used in sensor fusion.

My experience with sensor fusion software tools and programming languages is extensive. I’m proficient in several languages crucial for this field, including Python, MATLAB, and C++. Python’s versatility shines in data preprocessing and machine learning tasks, while MATLAB offers excellent tools for signal processing and visualization. C++ is vital for developing real-time, high-performance applications necessary for many sensor fusion systems.

For example, in a recent project involving fusing data from lidar and radar sensors for autonomous driving, I used Python with libraries like NumPy and SciPy for data cleaning and feature extraction. Then, I leveraged MATLAB’s Sensor Fusion and Tracking Toolbox to perform Kalman filtering and data fusion, visualizing results in real-time. Finally, critical components requiring extremely low latency were implemented in C++ for optimal performance.

Beyond these core languages, I also have experience with ROS (Robot Operating System), a robust framework for robotic applications that facilitates communication and data exchange between various sensor nodes and processing units. I’ve used it to build distributed sensor fusion architectures where different sensors and algorithms operate concurrently.

Q 23. Describe your experience with developing and testing multi-sensor systems.

Developing and testing multi-sensor systems is a multifaceted process that I’ve honed over years of experience. It begins with a thorough system design, considering sensor selection, data communication protocols, and fusion algorithms. This is followed by rigorous testing, which includes both simulations and real-world deployments.

For instance, I was involved in developing a system for tracking wildlife using a combination of acoustic sensors, thermal cameras, and GPS trackers. The development involved designing the hardware interface, writing the data acquisition and processing software, and implementing robust error-handling mechanisms. Testing included simulations using synthetic data to validate the algorithms and field testing in various environmental conditions to assess real-world performance.

A critical part of testing is evaluating the system’s accuracy, precision, and robustness under different scenarios, including noisy data, sensor failures, and varying environmental conditions. We often use metrics such as the root mean squared error (RMSE) between estimated and ground-truth target states to quantify performance and identify areas for improvement.
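As a minimal sketch of the RMSE metric mentioned above, the snippet below compares a list of fused position estimates against reference values. The function name `rmse` and the sample numbers are illustrative assumptions, not data from a real system.

```python
import math


def rmse(estimates, ground_truth):
    """Root mean squared error between paired estimates and reference values."""
    if len(estimates) != len(ground_truth) or not estimates:
        raise ValueError("inputs must be non-empty and the same length")
    squared_errors = [(e - t) ** 2 for e, t in zip(estimates, ground_truth)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Hypothetical fused position estimates vs. surveyed reference positions (metres).
fused = [10.0, 12.0, 14.0, 16.0]
truth = [10.0, 11.0, 15.0, 18.0]
print(rmse(fused, truth))
```

Because errors are squared before averaging, a few large misses raise the RMSE more than many small ones, which is usually the behavior you want when judging a tracker.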

Q 24. How do you handle conflicting information from multiple sensors?

Conflicting information from multiple sensors is a common challenge in multi-sensor target identification. This can stem from sensor noise, biases, or limitations in sensor capabilities. Addressing this requires a robust data fusion strategy that accounts for the uncertainties and inconsistencies in the sensor data.

One effective approach is Bayesian inference, where we model the uncertainty associated with each sensor measurement using probability distributions. The algorithm then combines these distributions to produce a more accurate and reliable estimate of the target’s characteristics. For instance, a Kalman filter is a powerful Bayesian technique used to estimate the state of a dynamic system, like the position and velocity of a target, by fusing noisy sensor measurements over time.
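To illustrate the Kalman filter idea, here is a minimal sketch of a one-dimensional constant-velocity filter that fuses position measurements from two sensors at each time step. The sensor names, measurement values, variances, and the process noise `q` are all assumptions chosen for illustration; a production filter would normally use a matrix library and a properly derived process noise model.

```python
def predict(x, P, dt, q):
    """Propagate state [position, velocity] and its 2x2 covariance P by dt seconds."""
    pos, vel = x
    (p00, p01), (p10, p11) = P
    x_pred = [pos + vel * dt, vel]
    # P_pred = F P F^T + Q, with F = [[1, dt], [0, 1]] and Q = diag(q, q).
    P_pred = [
        [p00 + dt * (p01 + p10) + dt * dt * p11 + q, p01 + dt * p11],
        [p10 + dt * p11, p11 + q],
    ]
    return x_pred, P_pred


def update(x, P, z, r):
    """Fuse one position measurement z with variance r (measurement matrix H = [1, 0])."""
    (p00, p01), (p10, p11) = P
    innovation = z - x[0]
    s = p00 + r                    # innovation variance
    k0, k1 = p00 / s, p10 / s      # Kalman gain
    x_new = [x[0] + k0 * innovation, x[1] + k1 * innovation]
    # P_new = (I - K H) P
    P_new = [
        [(1 - k0) * p00, (1 - k0) * p01],
        [p10 - k1 * p00, p11 - k1 * p01],
    ]
    return x_new, P_new


# Hypothetical position measurements (metres) of a target moving at about 1 m/s,
# sampled once per second by two sensors with different assumed noise levels.
radar = [1.3, 1.8, 3.4, 3.9, 5.2]
lidar = [1.0, 2.1, 3.0, 4.0, 5.1]
RADAR_VAR, LIDAR_VAR = 0.25, 0.01

x, P = [0.0, 0.0], [[100.0, 0.0], [0.0, 100.0]]  # vague initial guess
for z_radar, z_lidar in zip(radar, lidar):
    x, P = predict(x, P, dt=1.0, q=0.01)
    x, P = update(x, P, z_radar, RADAR_VAR)  # noisier sensor, smaller gain
    x, P = update(x, P, z_lidar, LIDAR_VAR)  # more precise sensor, larger gain
print(x)
```

The gain computed in `update` is what resolves disagreements: a measurement with a small variance pulls the estimate strongly, while a noisy one only nudges it.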

Another approach involves employing a weighted average, where each sensor’s contribution is weighted based on its estimated reliability. This requires a mechanism for assessing the reliability of each sensor, which can be based on factors like past performance, sensor characteristics, and environmental conditions. If conflicts are severe, outlier detection techniques can be employed to identify and potentially discard unreliable measurements.
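The weighted-average idea can be sketched as follows, assuming each sensor's reliability is expressed as a measurement variance (so the weight is the inverse variance) and that outliers are rejected with a simple gate around the median. The function name `fuse_weighted`, the gate of three standard deviations, and the sample readings are illustrative assumptions.

```python
import statistics


def fuse_weighted(readings, gate=3.0):
    """Fuse (value, variance) readings with inverse-variance weights.

    Readings farther than `gate` standard deviations (their own) from the
    median are treated as outliers and discarded before averaging.
    Returns (fused_value, fused_variance, kept_readings).
    """
    median = statistics.median(value for value, _ in readings)
    kept = [(v, var) for v, var in readings if abs(v - median) <= gate * var ** 0.5]
    if not kept:
        raise ValueError("all readings were rejected as outliers")
    weights = [1.0 / var for _, var in kept]
    fused = sum(w * v for w, (v, _) in zip(weights, kept)) / sum(weights)
    return fused, 1.0 / sum(weights), kept


# Hypothetical range readings in metres: (value, variance) per sensor.
readings = [(100.0, 4.0), (102.0, 1.0), (130.0, 4.0)]
fused, fused_var, kept = fuse_weighted(readings)
print(fused, fused_var, len(kept))
```

Here the third reading is far from the other two and gets discarded, and the remaining estimate sits closer to the more reliable sensor.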

Q 25. Explain your approach to designing a multi-sensor system for a specific application.

Designing a multi-sensor system for a specific application is a systematic process that starts with clearly defining the application requirements and identifying the objectives. This involves understanding the target characteristics, the environmental conditions, and the desired level of accuracy and precision.

Next, we select appropriate sensors based on their capabilities and limitations. The choice of sensors depends on factors such as the target’s properties, the environment, cost constraints, and power requirements. For example, a system for detecting airborne objects might use radar for long-range detection and infrared cameras for detailed imagery.

Once the sensors are selected, we design the data acquisition and fusion architecture. This involves selecting appropriate communication protocols and defining the data processing algorithms. The design should also account for real-time constraints and the need for low latency processing. Finally, we develop a comprehensive testing and validation plan to evaluate the system’s performance against the predefined requirements.

Q 26. How do you address the challenges of data latency in real-time systems?

Data latency in real-time systems is a critical concern, especially in applications requiring immediate response, such as autonomous driving or robotics. Addressing this involves a multi-pronged approach focusing on minimizing latency at each stage of the system.

Firstly, we optimize sensor data acquisition to reduce the time it takes to capture and transmit sensor data. This involves selecting high-speed sensors and communication protocols. Secondly, we employ efficient data processing algorithms that minimize computational complexity. For instance, using optimized libraries and parallel processing techniques can drastically reduce computation time.

Thirdly, we carefully design the system architecture to minimize data transfer delays. This can involve using techniques like distributed processing, where data is processed closer to the source, or using specialized hardware like FPGAs (Field-Programmable Gate Arrays) for real-time signal processing. Finally, using predictive algorithms that anticipate future states based on past trends can help mitigate the effects of latency on system performance.
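The last point, using prediction to offset latency, can be sketched as below: a stale measurement is extrapolated to the current time under a constant-velocity assumption. The function name `compensate_latency` and the sample values are hypothetical, and a real system would also need synchronized clocks between sensors and the fusion node.

```python
def compensate_latency(position, velocity, measured_at, now):
    """Extrapolate a stale 2D position to time `now` with a constant-velocity model.

    position and velocity are (x, y) tuples; times are in seconds.
    """
    latency = now - measured_at
    if latency < 0:
        raise ValueError("measurement timestamp is in the future")
    return tuple(p + v * latency for p, v in zip(position, velocity))


# Hypothetical example: a measurement taken 0.25 s ago of a target
# moving at 12 m/s along x and -4 m/s along y.
current = compensate_latency((50.0, 20.0), (12.0, -4.0), measured_at=10.0, now=10.25)
print(current)
```

The longer the latency, the more this extrapolation depends on the motion model being right, so it complements rather than replaces the work of reducing latency at the source.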

Q 27. Discuss your experience with different types of target models.

My experience encompasses a variety of target models, from simple point-mass models to nonlinear motion models for maneuvering targets (typically tracked with an extended Kalman filter) and sophisticated probabilistic models incorporating shape, texture, and other features.

Simple point-mass models suit targets with minimal dynamics, where we only need to estimate position and velocity. These models are computationally efficient, but their accuracy degrades when the target maneuvers. For maneuvering targets, a nonlinear motion model captures the dynamics, and an extended Kalman filter handles it by linearizing the model around the current state estimate, which allows more accurate tracking.
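To make the contrast concrete, here is a sketch of two motion models for a 2D target with state (x, y, vx, vy): constant velocity, and a coordinated turn. The coordinated-turn model is an assumed example of a maneuvering-target model and is not named in the answer above. With the turn rate fixed, as here, the model is still linear in the state; it becomes nonlinear, and calls for the linearization an extended Kalman filter performs, once the turn rate is estimated as part of the state.

```python
import math


def constant_velocity(state, dt):
    """Point-mass model: the target keeps its current velocity."""
    x, y, vx, vy = state
    return (x + vx * dt, y + vy * dt, vx, vy)


def coordinated_turn(state, dt, omega):
    """Target turns at a constant rate omega (rad/s) while keeping its speed."""
    if abs(omega) < 1e-9:
        return constant_velocity(state, dt)  # straight-line limit
    x, y, vx, vy = state
    s, c = math.sin(omega * dt), math.cos(omega * dt)
    return (
        x + (s / omega) * vx - ((1 - c) / omega) * vy,
        y + ((1 - c) / omega) * vx + (s / omega) * vy,
        c * vx - s * vy,
        s * vx + c * vy,
    )


# Hypothetical target heading east at 1 m/s, predicted 1 s ahead.
start = (0.0, 0.0, 1.0, 0.0)
straight = constant_velocity(start, dt=1.0)
turning = coordinated_turn(start, dt=1.0, omega=math.pi / 2)  # quarter turn per second
print(straight)
print(turning)
```

The two predictions diverge quickly during a turn, which is why a tracker tuned only for constant velocity tends to lag a maneuvering target.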

For more sophisticated target identification, we use more elaborate models that incorporate visual, spectral, or other sensor information. These models might employ machine learning techniques, such as Support Vector Machines (SVMs) or Convolutional Neural Networks (CNNs), to classify targets based on their features. The choice of target model depends on the specific application and the desired level of detail in the target representation.

Q 28. Describe your experience with the ethical considerations of using sensor data for target identification.

Ethical considerations are paramount in the development and deployment of sensor systems for target identification. Bias in algorithms, data privacy concerns, and the potential for misuse are critical issues that require careful consideration.

Bias in algorithms can lead to unfair or discriminatory outcomes. For example, if the training data used for a target recognition system is not representative of the population, the system may perform poorly on certain demographics. Addressing this requires careful data curation and algorithm design to minimize bias and ensure fairness.

Data privacy is another crucial concern. Sensor data often contains sensitive information about individuals or locations, and it’s essential to protect this data from unauthorized access or use. Implementing strong data security measures, anonymization techniques, and adhering to relevant privacy regulations are crucial. The potential for misuse, such as using the system for surveillance or tracking without proper authorization, should also be carefully considered and mitigated through appropriate safeguards and oversight.

Key Topics to Learn for Multi-Sensor Target Identification Interview

- Data Fusion Techniques: Understand various data fusion methods (e.g., Kalman filtering, Bayesian inference, Bayesian networks) and their applications in target identification.

- Sensor Modeling and Calibration: Explore the characteristics of different sensors (radar, lidar, infrared, etc.), their limitations, and how to calibrate them for accurate data integration.

- Feature Extraction and Selection: Learn about techniques for extracting relevant features from sensor data and selecting the most discriminative features for target classification.

- Target Classification and Recognition: Master different classification algorithms (e.g., support vector machines, neural networks) and their application in identifying targets based on multi-sensor data.

- Uncertainty Management and Handling: Understand how to model and manage uncertainty in sensor data and its impact on target identification accuracy.

- Performance Evaluation Metrics: Familiarize yourself with metrics used to evaluate the performance of multi-sensor target identification systems (e.g., precision, recall, F1-score).

- Practical Applications: Consider real-world applications like autonomous driving, air traffic control, surveillance systems, and robotics, and how multi-sensor fusion plays a crucial role.

- Problem-Solving Approaches: Practice approaching problems systematically, considering data limitations, potential errors, and the trade-offs between different algorithms and techniques.

- Algorithm Optimization and Computational Efficiency: Understand the computational aspects of multi-sensor data processing and strategies for optimizing algorithms for real-time applications.
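For the performance evaluation metrics in the list above, a short sketch of how precision, recall, and F1-score are computed for one target class may help with revision. The function name, class labels, and sample predictions are illustrative assumptions.

```python
def precision_recall_f1(predicted, actual, positive):
    """Compute precision, recall, and F1-score for the class `positive`."""
    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p == positive and a == positive)
    fp = sum(1 for p, a in pairs if p == positive and a != positive)
    fn = sum(1 for p, a in pairs if p != positive and a == positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical classifier output for six detections.
actual = ["drone", "bird", "drone", "drone", "bird", "bird"]
predicted = ["drone", "drone", "drone", "bird", "bird", "bird"]
precision, recall, f1 = precision_recall_f1(predicted, actual, positive="drone")
print(precision, recall, f1)
```

Precision answers "of the targets we flagged as drones, how many really were?", while recall answers "of the real drones, how many did we flag?". F1 is their harmonic mean.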

Next Steps

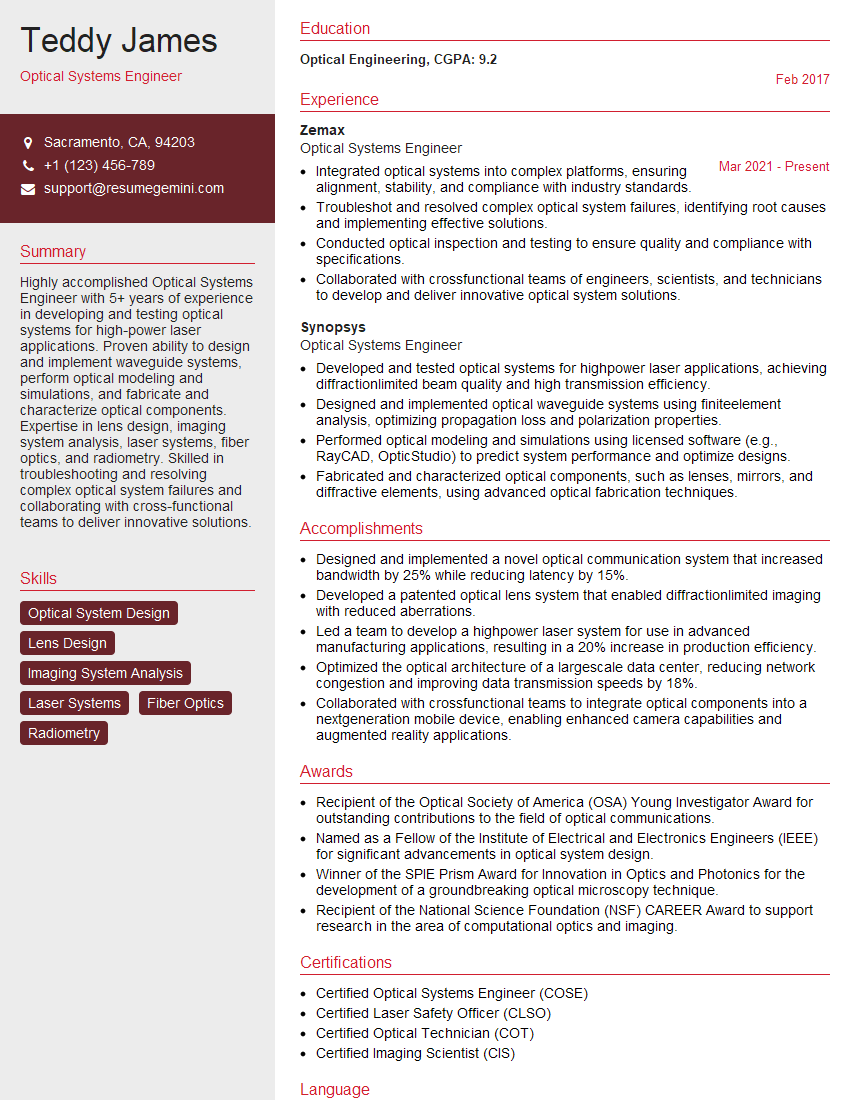

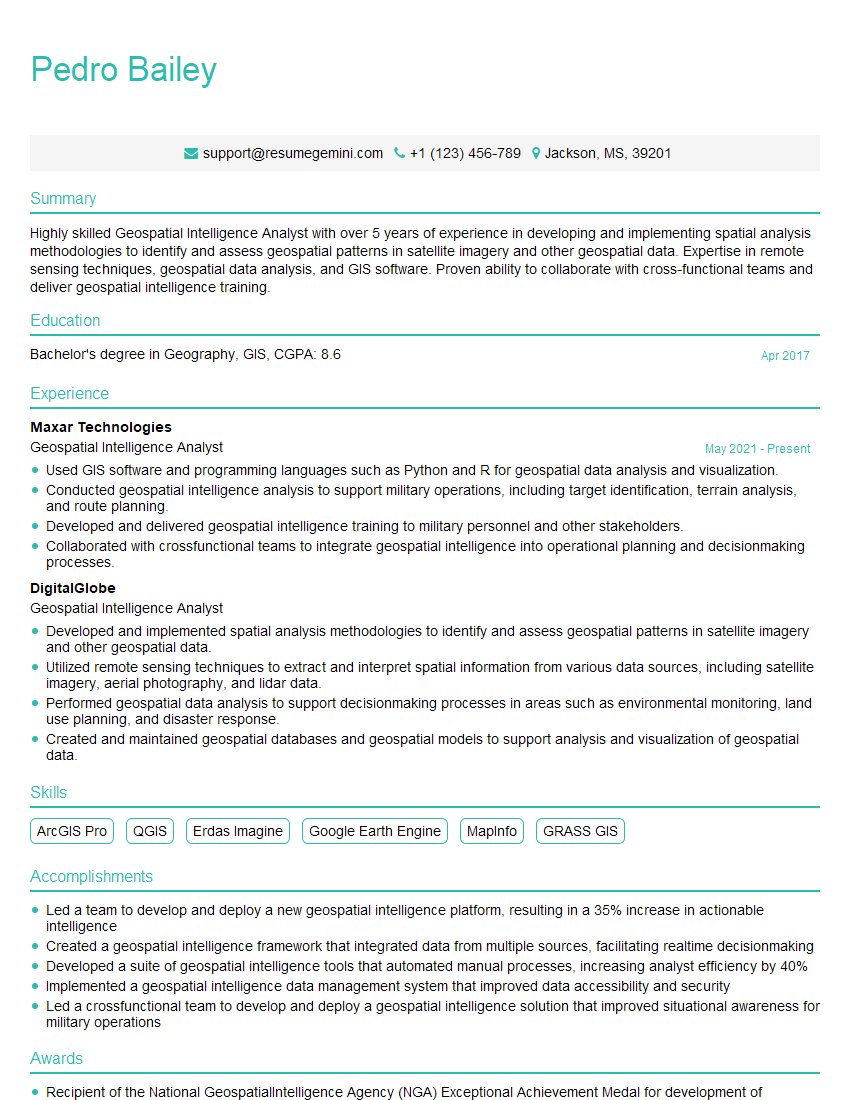

Mastering Multi-Sensor Target Identification opens doors to exciting and impactful careers in cutting-edge technologies. To maximize your job prospects, a well-crafted, ATS-friendly resume is crucial. This is where ResumeGemini can help. ResumeGemini provides a powerful tool to create a professional resume that highlights your skills and experience effectively. We offer examples of resumes tailored specifically to Multi-Sensor Target Identification to guide you in crafting the perfect application. Invest time in building a strong resume—it’s your first impression and a key factor in landing your dream job.
